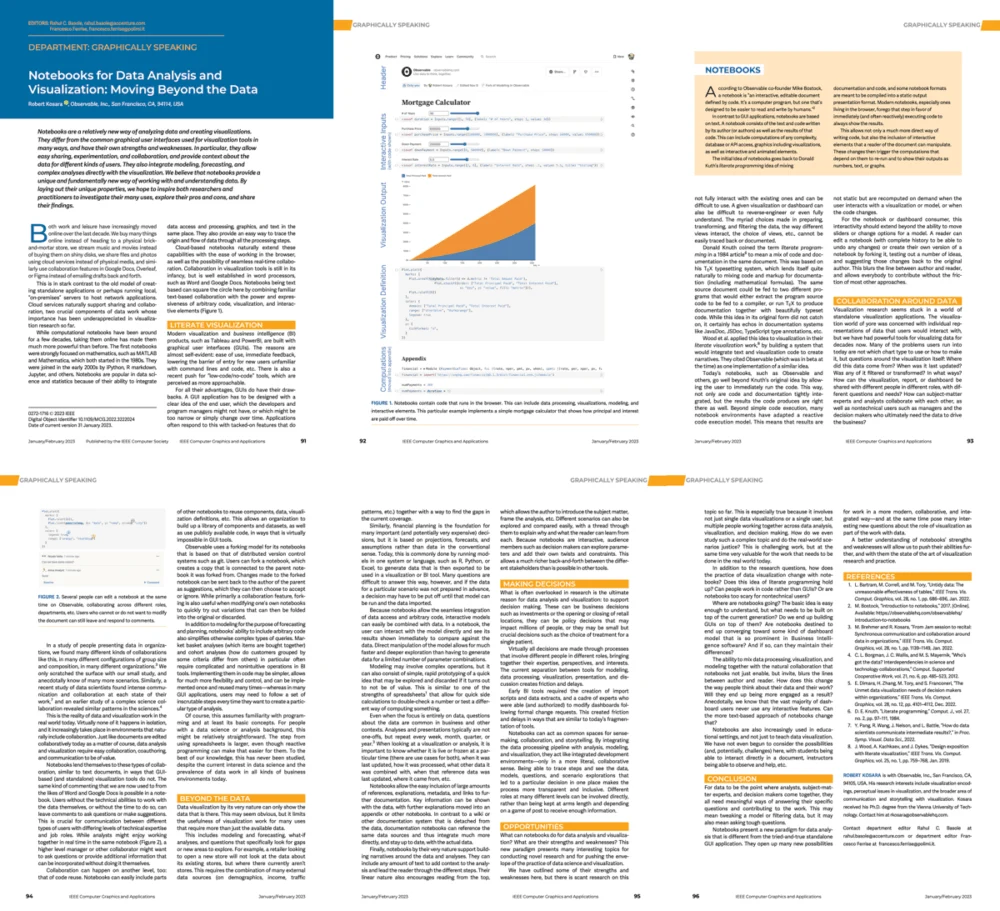

The landscape of data science and business intelligence (BI) is shifting as computational notebooks emerge as a robust alternative to traditional graphical user interface (GUI) tools. In a recent publication for the "Graphically Speaking" column in the journal IEEE Computer Graphics and Applications (CG&A), Robert Kosara, a prominent researcher in the field of data visualization and currently a developer advocate at Observable, explores the expanding role of these notebooks. His paper, titled "Notebooks for Data Analysis and Visualization: Moving Beyond the Data," argues that while the research community has historically prioritized GUI-based visualization tools, the practical advantages of notebooks, ranging from reusability to integrated modeling, demand a more rigorous academic focus.

The Evolution of Data Interfaces: From GUIs to Literate Programming

For the past two decades, the data visualization industry has been dominated by GUI-centric software such as Tableau, Microsoft Power BI, and Qlik. These tools democratized data analysis by allowing non-technical users to create complex charts through drag-and-drop interactions. However, as data complexity has increased, the limitations of "black box" GUI tools have become more apparent.

Computational notebooks represent a return to, and an evolution of, the concept of "literate programming" first introduced by Donald Knuth in the 1980s. In this paradigm, code, explanatory text, and visual outputs are interspersed within a single document. This format allows for a narrative flow that explains the "why" behind the data, rather than just presenting the "what."

Kosara’s research highlights that while GUIs are excellent for rapid exploration, they often lack the transparency required for deep collaboration and reproducibility. Notebooks, by contrast, serve as a living record of the analytical process. They allow researchers and practitioners to integrate data cleaning, statistical modeling, and final visualization within a single, shareable environment.

A Chronology of Computational Notebook Development

The rise of the notebook as a primary tool for data visualization did not happen overnight. Its trajectory can be traced through several key milestones in the history of computing:

- 1988: Wolfram Mathematica: The introduction of the notebook interface in Mathematica set the standard for combining symbolic mathematics, text, and graphics.

- 2001: IPython: Fernando Pérez released IPython, providing an interactive shell for Python that would eventually lay the groundwork for the modern web-based notebook.

- 2011: R Markdown: The launch of R Markdown allowed R users to create dynamic documents that combined code and output in various formats, including PDFs and HTML.

- 2014: Project Jupyter: The spin-off of IPython's browser-based notebook into Project Jupyter marked a turning point, making notebooks language-agnostic rather than tied to Python.

- 2016: Observable: Founded by Mike Bostock (the creator of D3.js), Observable introduced a reactive notebook environment specifically optimized for data visualization and collaborative web development.

- 2023: The AI Integration Era: This year marked the integration of Large Language Models (LLMs) like ChatGPT into notebook environments, enabling automated code generation and natural language data querying.

Supporting Data: The Growth of Notebook Environments

The shift toward notebook-based visualization is supported by significant industry data. According to the 2022 Kaggle State of Data Science and Machine Learning survey, over 80% of data scientists reported using Jupyter notebooks as their primary development environment. Furthermore, GitHub’s "State of the Octoverse" reports have consistently shown a year-over-year increase in the number of Jupyter Notebook files (.ipynb) hosted on the platform, reaching millions of repositories.

In the corporate sector, the adoption of "Notebook-as-a-Service" platforms has accelerated. Companies like Databricks, Google (via Colab), and Amazon (via SageMaker) have built multibillion-dollar ecosystems around the notebook interface. This commercial success underscores a critical point made in Kosara’s paper: industry has recognized the value of the notebook format for data communication faster than academic research has.

Key Advantages Over Traditional GUI Tools

Kosara identifies several core strengths that position notebooks as superior for specific high-stakes data tasks:

1. Integration of Analysis and Modeling

In a GUI tool, the data is often prepared in one environment (like SQL or Excel) and visualized in another. Notebooks eliminate this friction by allowing the user to perform complex data transformations and machine learning modeling in the same space where the visualization is generated.
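To make the point concrete, the sketch below keeps cleaning, modeling, and charting in one place, the way consecutive notebook cells would. It is an illustration only: the data is invented, and the standard-library helpers `fit_line` and `text_chart` are hypothetical stand-ins for the data-frame, modeling, and plotting libraries a real notebook would more likely use.

```python
# Illustrative data (made up): one record has a missing revenue value.
raw_rows = [
    {"month": "1", "revenue": "100"},
    {"month": "2", "revenue": "120"},
    {"month": "3", "revenue": ""},
    {"month": "4", "revenue": "160"},
]

# Step 1, cleaning: drop incomplete records and convert strings to numbers.
clean = [
    (int(row["month"]), float(row["revenue"]))
    for row in raw_rows
    if row["revenue"].strip()
]


# Step 2, modeling: ordinary least-squares fit of a straight line.
def fit_line(points):
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


slope, intercept = fit_line(clean)


# Step 3, visualization: a text bar chart with the fitted value alongside.
def text_chart(points, slope, intercept, scale=10):
    lines = []
    for x, y in points:
        fitted = slope * x + intercept
        bar = "#" * round(y / scale)
        lines.append(f"month {x}: {bar} {y:.0f} (fit {fitted:.1f})")
    return "\n".join(lines)


print(text_chart(clean, slope, intercept))
```

Because every step lives in the same document, changing the cleaning rule and re-running immediately updates both the fitted line and the chart, with no export or import between tools.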

2. Reusability and Version Control

Because notebooks are essentially code-based text files, they can be tracked using version control systems like Git. This allows teams to see exactly how a visualization changed over time and who made those changes, a task that is notoriously difficult with proprietary GUI file formats.
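As one example of what this looks like in practice: Jupyter's .ipynb files are JSON documents, so they can be processed with ordinary tools before being committed. The sketch below is a minimal illustration, not a recommended workflow. It clears stored outputs and execution counters so that diffs show only changes to code and text. The helper name `strip_outputs` and the tiny hand-written notebook are assumptions made for this example.

```python
import copy
import json


def strip_outputs(notebook: dict) -> dict:
    """Return a copy of an nbformat-4-style notebook with outputs cleared."""
    cleaned = copy.deepcopy(notebook)
    for cell in cleaned.get("cells", []):
        if cell.get("cell_type") == "code":
            cell["outputs"] = []
            cell["execution_count"] = None
    return cleaned


# A tiny hand-written notebook (illustrative, not a real analysis).
notebook = {
    "nbformat": 4,
    "nbformat_minor": 5,
    "metadata": {},
    "cells": [
        {"cell_type": "markdown", "metadata": {}, "source": ["# Sales overview"]},
        {
            "cell_type": "code",
            "metadata": {},
            "execution_count": 7,
            "source": ["total = sum([3, 4, 5])\n", "total"],
            "outputs": [
                {
                    "output_type": "execute_result",
                    "execution_count": 7,
                    "data": {"text/plain": ["12"]},
                    "metadata": {},
                }
            ],
        },
    ],
}

cleaned = strip_outputs(notebook)

# Sorted keys and fixed indentation keep the serialized form stable across saves.
print(json.dumps(cleaned, indent=1, sort_keys=True))
```

The original notebook is left untouched, so the stripped copy can be written to the tracked file while the working copy keeps its rendered results.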

3. Facilitation of Collaboration

Modern notebook platforms like Observable and Google Colab allow for real-time collaborative editing, similar to Google Docs. This enables a data scientist, a designer, and a stakeholder to work on the same visualization simultaneously, bridging the gap between technical execution and business requirements.

Identifying the Research Gap

Despite their widespread use, Kosara notes that visualization research has largely ignored notebooks. Most academic papers still focus on novel interaction techniques for graphical interfaces or automated chart recommendations.

The "untapped research space" identified in the paper includes several critical areas:

- State Management: How do users keep track of variables and data states in a non-linear notebook environment?

- The "Hidden State" Problem: Notebooks can often produce different results if cells are run out of order. Research into making notebooks more robust and less prone to user error is vital.

- Hybrid Interfaces: Exploring "low-code" or "no-code" bridges that allow GUI-like interactions within a code-based notebook.

- Perception and Communication: How do stakeholders perceive data differently when presented in a narrative notebook versus a static dashboard?
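The hidden state problem from the list above can be reproduced in a few lines. The sketch below is a simplified simulation, not how any notebook kernel is actually implemented: each "cell" is a string of Python source, and the hypothetical helper `run_cells` executes cells against one shared namespace in whatever order the user happens to choose.

```python
def run_cells(cells, order):
    """Execute the named cells, in the given order, in one shared namespace."""
    namespace = {}
    for name in order:
        exec(cells[name], namespace)
    return namespace


# Three cells as they might appear, top to bottom, in a notebook.
cells = {
    "load": "prices = [10, 20, 30]",
    "discount": "prices = [p * 0.5 for p in prices]",
    "summary": "total = sum(prices)",
}

# Intended order: each cell runs once, top to bottom.
top_to_bottom = run_cells(cells, ["load", "discount", "summary"])

# The discount cell is accidentally run twice before the summary.
rerun_discount = run_cells(cells, ["load", "discount", "discount", "summary"])

# The discount cell is skipped entirely.
skipped = run_cells(cells, ["load", "summary"])

print(top_to_bottom["total"])   # 30.0
print(rerun_discount["total"])  # 15.0
print(skipped["total"])         # 60
```

The same three cells yield three different totals, and nothing in the visible notebook text records which execution order produced the number on screen.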

The Impact of Artificial Intelligence on Notebook Workflows

A significant update to the discourse surrounding notebooks is the rapid integration of Generative AI. While Kosara’s paper was written just as the current AI wave was beginning, he acknowledges that notebooks are the "ideal" environment for exploring AI tools.

LLMs such as ChatGPT and GitHub Copilot thrive in notebook environments because they can generate code snippets that are immediately executable and visualizable. This creates a feedback loop where the human acts as an editor and orchestrator, using the notebook as a canvas to refine AI-generated analysis. This trend is expected to lower the barrier to entry for notebook-based visualization, potentially drawing in users who previously relied on GUIs due to a lack of coding expertise.

Official Responses and Industry Reactions

The publication of Kosara’s paper has sparked discussion among visualization experts and software engineers. While many in the academic community have welcomed the call for more diverse research, some proponents of GUI tools argue that notebooks still have a steep learning curve that prevents widespread adoption among non-technical executives.

Reactions from the BI community, though inferred rather than stated outright, suggest that a "best of both worlds" approach is emerging. For instance, Tableau has begun introducing "Tableau Pulse" and other features that mimic the narrative and automated insights found in notebooks, while notebook platforms are adding more GUI-like components to appeal to a broader audience.

Broader Implications for Business and Science

The implications of "Moving Beyond the Data" are profound for both the corporate and scientific worlds. In science, the "reproducibility crisis" has led to a demand for more transparent methods. Notebooks provide a solution by allowing peer reviewers to see the exact code used to generate figures in a paper, ensuring that the results are not just visual artifacts but are backed by sound data processing.

In the business world, the shift toward notebooks signifies a move away from static dashboards toward "data storytelling." As organizations seek to make more data-driven decisions, the ability to explain the context, assumptions, and limitations of an analysis becomes just as important as the final chart.

Robert Kosara’s contribution to Computer Graphics and Applications serves as a call to action for the visualization community. By acknowledging the power of computational notebooks, researchers can begin to address the practical challenges of modern data work, ultimately leading to more reliable, collaborative, and insightful data analysis. As the boundaries between coding, AI, and design continue to blur, the notebook stands as the likely centerpiece of the next generation of data tools.