The digital landscape is undergoing a profound transformation with the ascent of conversational AI search engines, a development poised to redefine how users interact with information and reshape the competitive terrain of the internet. As Fast Company reported in February 2024, these tools, powered by large language models (LLMs), move beyond traditional keyword-based search: they retrieve information from across the web and synthesize it into direct, summarized answers to user queries [1]. This evolution represents a significant departure from the search paradigm dominated by Google for decades, ushering in a new era of information retrieval characterized by immediacy and a conversational interface.

The Genesis of a New Search Paradigm

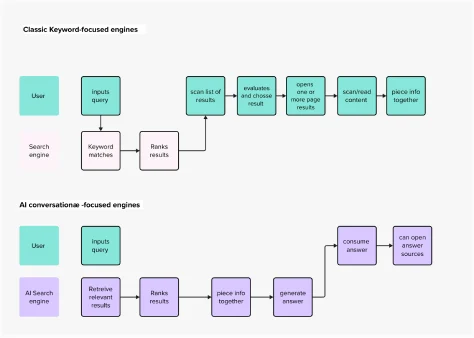

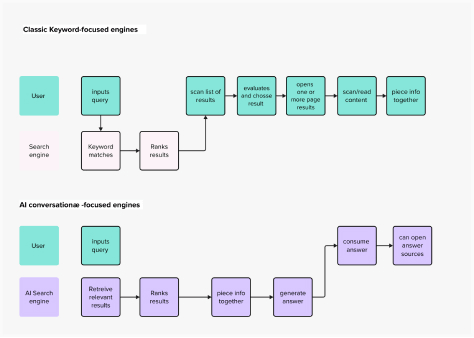

The current surge in conversational AI search engines is a direct consequence of the generative AI boom, particularly ignited by the public launch of ChatGPT in late 2022. This event catalyzed a wave of experimentation across the tech industry, with academic and scientific research institutions actively spearheading advancements in the field [2]. The enthusiasm surrounding these new applications stems from their potential to offer a more intuitive and efficient method of accessing information, fundamentally altering user expectations for digital interactions. Historically, search engines functioned as sophisticated indexing systems, presenting users with a list of links to potentially relevant web pages. The onus was then on the user to navigate these links, evaluate sources, and synthesize information to find their desired answers. This model, while effective, often involved significant cognitive load and time investment.

The shift towards conversational AI search engines is an instance of technology push, in which the power and capability of generative AI, rather than expressed user demand, drive market innovation [3]. This mirrors previous technological revolutions where breakthroughs in core technologies, such as the internet itself or mobile computing, spurred entirely new product categories and user experiences. However, the true measure of this evolution lies in its benefit to users. The history of technology is replete with examples of powerful innovations that failed to gain widespread adoption due to a disconnect from genuine user needs or an oversight of human-centered design principles.

Redefining the User Experience: A New Mental Model

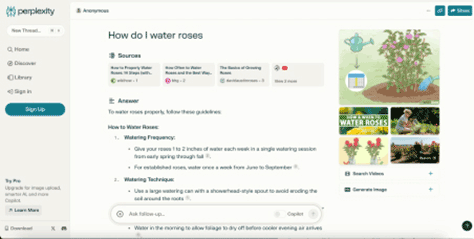

At the heart of conversational AI search engines lies a fundamentally different mental model for interaction. Platforms like Perplexity AI exemplify this shift. Upon visiting Perplexity’s homepage, users encounter familiar elements: an input field for queries and a central display area for results. However, the resemblance to classic search engines ends there. Unlike a traditional search engine results page (SERP) that provides a list of links, Perplexity directly serves a collated answer, synthesizing snippets of information from multiple sources. This design mirrors the conversational chatbot interface popularized by ChatGPT, making the interaction feel more like a dialogue than a query-and-response system.

This direct-answer approach significantly streamlines the user journey. In classic search, users frequently engage in a multi-step process: entering a query, scanning a SERP for promising links, clicking through to individual websites, and then sifting through content to extract the relevant information. Conversational AI search engines condense this into a single, direct interaction. The user’s goal remains constant – finding an answer within a text – but the cognitive effort required is dramatically reduced. This aligns closely with fundamental usability heuristics, particularly those emphasizing efficiency and error prevention. Immediate, summarized answers also satisfy another heuristic, the match between the system and the real world, because human conversations naturally follow a Q&A pattern.

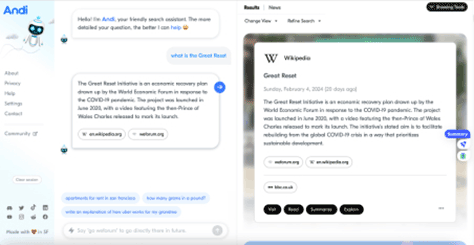

Another notable example, Andi, pushes this conversational mental model further in its layout and interactions. While its information architecture retains elements akin to classic search, its interface design emphasizes a chatbot-like experience. This varied approach among early entrants highlights the ongoing experimentation in defining the optimal user interface for this new generation of search.

Enhancing Usability and Interaction

The immediate and tangible improvements in usability offered by conversational AI search engines are compelling. They directly address several pain points inherent in traditional search:

- Direct Answers: Instead of a list of potential sources, users receive a synthesized answer, eliminating the need to evaluate multiple links or navigate away from the search interface. This significantly reduces the time and effort required to obtain information.

- Reduced Cognitive Load: The AI performs the heavy lifting of information collation and summarization, freeing users from the task of sifting through disparate texts. This allows users to focus on understanding the answer rather than the process of finding it.

- Conversational Interface: The Q&A format feels natural and intuitive, leveraging users’ familiarity with conversational patterns. This lowers the barrier to entry and makes complex information retrieval more accessible.

- Contextual Understanding: LLMs are designed to interpret the nuance and context of natural language queries, which can lead to more relevant and accurate responses than keyword matching alone.

- Iterative Refinement: Many conversational AI search engines allow users to ask follow-up questions, refining their search without starting anew, much like a human conversation. This enhances the fluidity of the information-seeking process.

These enhancements align with several of Jakob Nielsen’s usability heuristics for user interface design [4]. "Visibility of system status" is improved by providing a clear, direct answer. "Match between system and the real world" is achieved through the conversational paradigm. "User control and freedom" is maintained through iterative questioning. "Flexibility and efficiency of use" is inherent in the streamlined process. While these tools tick many boxes for improving the user experience, their long-term implications extend beyond mere convenience.

Broader Implications: Trust, Explainability, and Human Agency

The shift towards AI-powered search, while offering undeniable usability benefits, introduces a complex array of challenges, particularly concerning trust, explainability, and the potential impact on human cognitive processes. When a tool like Perplexity AI autonomously selects, extracts, and summarizes information to craft an answer, users delegate a significant portion of their decision-making to the AI. A critical shortfall here is the lack of explicit communication regarding why certain sources were prioritized over others, neglecting a crucial aspect of AI explainability [5].

IBM’s AI Ethics Global Leader, Francesca Rossi, underscores this concern, stating that AI "raises some concerns, such as its ability to make important decisions in a way that humans would perceive as fair, to be aware and aligned to human values that are relevant to the problems being tackled, and the capability to explain its reasoning and decision-making" [6]. The absence of transparent reasoning undermines the trustworthiness of the system. While enterprises are increasingly aware of the risks associated with untrustworthy AI, ranging from regulatory fines to reputational damage, individual internet users, or "Internauts," may be less attuned to these dangers.

The profound influence of digital architectures on human behavior is a concept articulated by Gen Z expert Roberta Katz: "first you make the building and then the building makes you" [7]. Just as physical environments shape our emotional and behavioral responses, the design of our digital tools profoundly impacts how we think and interact with information. This analogy suggests a significant danger in the uncritical adoption of highly convenient AI search engines: users may become accustomed to accepting ready-made, authoritative-sounding answers without pausing to question their accuracy, biases, or outright veracity.

While these tools often provide links to source documents, several critical questions remain unanswered for the average user:

- How were the sources selected?

- What criteria were used to summarize and synthesize the information?

- Are there inherent biases in the underlying LLM or the data it was trained on that influence the answer?

- What is the recency and authority of the selected sources?

The first question, how sources are selected, applies to both traditional and AI search, but the deeper delegation of decision-making inherent in generative AI exacerbates it. Even with full explainability, the ease of access to pre-digested answers could, over time, diminish users’ critical thinking skills. The laborious process of sifting through Google’s SERP, comparing information from various articles, and synthesizing one’s own answers, while tedious, serves as a valuable exercise for analytical and creative faculties. This friction, paradoxically, encourages a more discerning approach to information. If the mental model of search shifts irrevocably to one where the AI always knows the right answer, the societal implications for critical discernment, particularly in sensitive fields like policymaking, science, or civic discourse, could be enormous.

Impact on Content Creators and the Digital Economy

Beyond individual cognitive impact, the rise of conversational AI search engines poses a significant economic threat to content creators and traditional publishers. Kevin Roose of The New York Times succinctly framed this concern: "If AI search engines can reliably summarize what’s happening in Gaza or tell users which toaster to buy, why would anyone visit a publisher’s Web site ever again?" [8]. If users receive complete answers directly within the search interface, the incentive to click through to source websites—which rely on traffic for advertising revenue and subscriptions—diminishes drastically. This could destabilize the existing digital economy that underpins quality journalism and content creation.

Some AI search models, like Andi, attempt to mitigate this by prominently displaying source links, encouraging users to click through. Andi’s architecture, while employing a chatbot-like interface, maintains an information structure more akin to a traditional SERP, where a brief answer is often a snippet from a primary source (like Wikipedia), followed by a list of all relevant sources. This design choice highlights the power of UX and UI in influencing user behavior, potentially directing traffic back to content creators. However, the fundamental tension between providing direct answers and sustaining the ecosystem of content creation remains a formidable challenge.

The Competitive Landscape and Regulatory Responses

The emergence of these new AI-powered search engines has prompted significant reactions from established players. Google, long the undisputed king of search, has responded with its Search Generative Experience (SGE), which integrates AI-powered summaries directly into its traditional SERP. Microsoft has also heavily invested in integrating LLMs into its Bing search engine and Edge browser through Copilot, aiming to capture market share by offering a more conversational and generative experience. These moves underscore the industry-wide recognition that AI is not merely an enhancement but a fundamental shift in the future of search.

The rapid advancement and widespread adoption of generative AI have also spurred an urgent global dialogue around regulation. Governments and international bodies are grappling with how to ensure the ethical, safe, and transparent development and deployment of AI. The European Union’s AI Act, a landmark piece of legislation, aims to establish a comprehensive regulatory framework for AI, categorizing systems by risk level and imposing stringent requirements on high-risk applications. Similar efforts are underway in the United States and other regions, focusing on transparency, accountability, and the prevention of harm. These impending regulations will undoubtedly influence the design and operation of conversational AI search engines, particularly concerning explainability, data governance, and the mitigation of biases and "hallucinations" – instances where AI generates plausible but factually incorrect information [11].

The Future of AI Search: Trust as the Cornerstone

The question is not if AI will be the future of search, but how it will be shaped. Generative capabilities offer unparalleled opportunities to enhance the user experience and accelerate knowledge acquisition. However, the critical path forward depends on addressing fundamental concerns about trust, accuracy, and ethical deployment.

A study published in Nature highlighted the conflicting views among users of AI science search engines, with some researchers praising their utility and accuracy, while others expressed deep-seated concerns about trustworthiness and inconsistent retrieval performance [9]. This divergence underscores that trust is the central issue potentially impeding broader AI adoption.

To foster trustworthy AI search engines, two key areas of focus are paramount:

Technological Advancements in Trustworthiness:

- Improved Accuracy and Factuality: Ongoing research into Retrieval-Augmented Generation (RAG) [10] and other techniques aims to ground LLMs in authoritative, up-to-date information, reducing the propensity for hallucinations.

- Enhanced Explainability: Developing methods for AI systems to articulate their reasoning, source selection, and summarization processes in an understandable way to users. This includes transparency about model limitations and potential biases.

- Robustness against Manipulation: Building systems that are resilient to adversarial attacks and the propagation of misinformation.

- Continual Learning and Feedback Loops: Implementing mechanisms for user feedback and continuous model improvement to correct errors and adapt to evolving information landscapes.

User Education and Design for Critical Engagement:

- Prominent Source Attribution: Clearly linking to all original sources used in generating an answer, making it easy for users to verify information.

- Contextual Cues: Providing information about the recency, authority, and potential biases of sources.

- Encouraging Verification: Designing interfaces that subtly encourage users to explore sources and engage in critical thinking, rather than passively accepting answers.

- Transparency in AI’s Role: Clearly communicating when an answer is AI-generated and the methodologies employed.

- Digital Literacy Initiatives: Broader societal efforts to educate users on the capabilities and limitations of AI, fostering a more discerning approach to digital information.
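
To make the ideas of grounding, source attribution, and contextual cues more concrete, here is a minimal Python sketch of the retrieve-then-cite pattern behind Retrieval-Augmented Generation. It is a toy illustration under stated assumptions, not a description of how Perplexity, Andi, or any production system works: the `Source` type, the `example.org` URLs, and the sample texts are hypothetical, retrieval is plain word overlap rather than a real ranking or embedding model, and the "generation" step simply stitches retrieved snippets together instead of calling an LLM.

```python
from dataclasses import dataclass
from datetime import date
import re


@dataclass(frozen=True)
class Source:
    url: str          # hypothetical URL, for illustration only
    published: date   # recency cue that can be shown to the user
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for a real retrieval model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, corpus: list[Source], k: int = 2) -> list[Source]:
    """Rank sources by word overlap with the query; keep the top k matches."""
    q = tokenize(query)
    scored = [(len(q & tokenize(s.text)), s) for s in corpus]
    scored = [(score, s) for score, s in scored if score > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)  # stable sort keeps ties in corpus order
    return [s for _, s in scored[:k]]


def answer_with_citations(query: str, corpus: list[Source]) -> str:
    """Build a reply from retrieved text only, with numbered citations."""
    hits = retrieve(query, corpus)
    if not hits:
        # Declining to answer is preferable to inventing an unsupported one.
        return "No supporting sources found."
    body = " ".join(f"{s.text} [{i}]" for i, s in enumerate(hits, start=1))
    refs = "\n".join(
        f"[{i}] {s.url} (published {s.published.isoformat()})"
        for i, s in enumerate(hits, start=1)
    )
    return f"{body}\n\nSources:\n{refs}"


# Hypothetical corpus; a real system would query a web-scale index.
CORPUS = [
    Source("https://example.org/rag", date(2024, 1, 10),
           "Retrieval-augmented generation grounds model answers in retrieved documents."),
    Source("https://example.org/serp", date(2019, 5, 2),
           "A classic results page lists links for the user to evaluate."),
    Source("https://example.org/toast", date(2023, 3, 3),
           "Toasters brown bread with radiant heat."),
]

if __name__ == "__main__":
    print(answer_with_citations("How does retrieval-augmented generation work?", CORPUS))
```

Even in this simplified form, the design choice is visible: every sentence in the reply is traceable to a numbered source with its publication date, and a query with no matching source yields an explicit "no sources" message rather than a confident guess.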

As new regulations emerge to ensure safer and more ethical uses of AI, the question of whether a "good" search experience—defined purely by convenience—is also the "right" experience becomes increasingly relevant. In a context where a significant portion of our decision-making is delegated to LLMs, design efforts must prioritize accuracy, trustworthiness, and the comprehensiveness of search outputs [12]. This necessitates a holistic approach that extends far beyond merely building a user-friendly conversational interface; it requires a commitment to responsible AI development that safeguards human agency and critical thinking in the digital age.