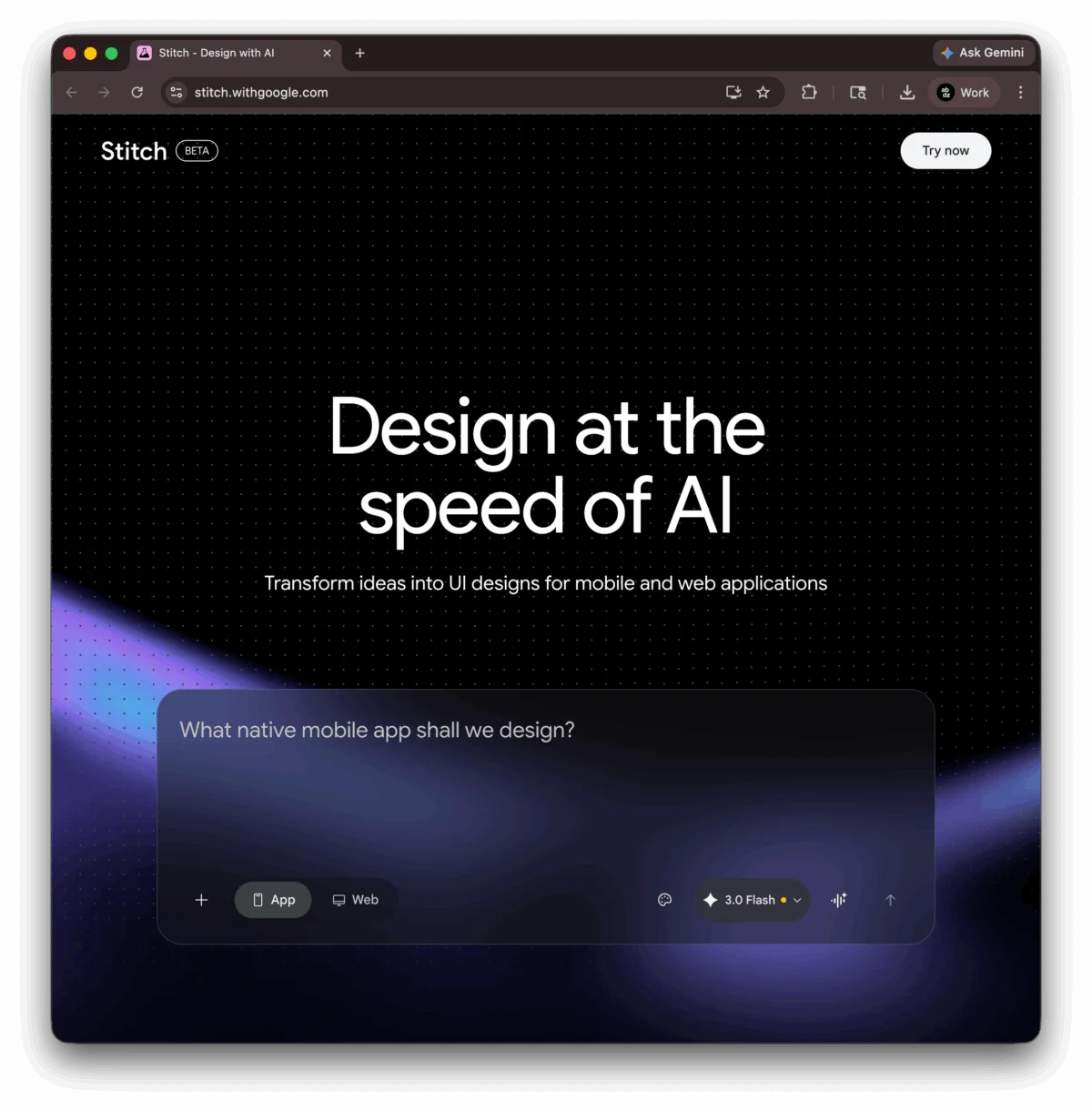

Google Stitch, an advanced AI design tool from Google Labs, has fundamentally repositioned the initial stages of user interface (UI) creation with its March 2026 update, introducing an AI-native infinite canvas, sophisticated voice input capabilities, and instant high-fidelity prototypes. Available for free during its beta phase at stitch.withgoogle.com, this platform formalizes "vibe design," a concept that prioritizes a desired aesthetic or emotional goal over traditional, component-by-component construction, mirroring the earlier emergence of "vibe coding" in developer circles.

The Paradigm Shift: From Wireframes to "Vibe Design"

The core innovation of Google Stitch lies in its departure from the conventional wireframe-first ritual that has long dominated UI/UX design. Historically, designers would begin a project by meticulously specifying components, defining grid columns, and detailing spacing, often in low fidelity, before anything resembling a finished product materialized. Stitch inverts this methodology. Instead of placing a rectangle in a tool like Figma, a designer describes an overarching goal or an emotional feeling, or provides a visual reference. The AI-native canvas then interprets this intent and generates multiple high-fidelity design directions at once, bypassing the often time-consuming low-fidelity exploration phase.

This represents a significant leap from mere prompt-to-mockup generators. Industry reports indicate that designers spend an estimated 20-30% of their initial project time on low-fidelity wireframing and basic layout, a phase that Stitch aims to compress or eliminate. Given a prompt such as "premium and minimalist, like Stripe," the AI infers complex design attributes (layout hierarchy, color temperature, typographic weight, and whitespace) from the semantic intent, dramatically accelerating initial conceptualization. This shift lets designers focus immediately on the overall user experience and brand ethos rather than getting bogged down in minute structural details at the outset.

An AI-Native Canvas: The Core Innovation

At the heart of Stitch’s transformation is its redesigned AI-native infinite canvas. The March 2026 update rebuilt the entire product around this environment, allowing a project to evolve from a rough conceptual sketch to a fully clickable prototype without switching between disparate tools. The canvas accepts a wide array of inputs: not only text descriptions but also screenshots, competitor URLs, and even raw code snippets. It also integrates voice input, making the design process more intuitive and conversational. All of these inputs become context that the AI reasons against when generating and refining screens, which keeps the output coherent and contextually relevant.

Google Labs, known for its pioneering work in experimental AI, has leveraged its deep research capabilities to create a system that doesn’t just apply pre-set templates but actively learns and adapts. This positions Stitch as a key component of Google’s broader strategy to integrate generative AI across its product ecosystem, enhancing productivity and fostering innovation in creative industries.

Voice Canvas and Conversational Design

Further enhancing the intuitive nature of Stitch is the new Voice Canvas. This feature lets designers interact directly with the canvas through natural language commands, blurring the lines between human intent and machine execution. A designer can simply speak commands like "darker palette," "add a hero section," or "show me three menu options." The underlying design agent listens, processes the instruction, and updates the UI in real time. Where clarification is needed, the agent asks follow-up questions, fostering a conversational design flow that feels more like collaborating with a human partner than operating a software tool.

This conversational approach marks a significant evolution in human-computer interaction within design. It moves beyond the paradigm of adjusting sliders and inputting specific values, offering a more fluid and less prescriptive method of interaction. A Google spokesperson, speaking on background, emphasized that the Voice Canvas aims to "democratize advanced design capabilities, making sophisticated UI adjustments accessible through the most natural interface: human speech." This could significantly reduce the learning curve for new users and accelerate iteration cycles for experienced professionals.

Streamlining the Design-to-Development Handoff

One of the most persistent challenges in the product development lifecycle is the handoff between design and engineering teams. This phase is often plagued by misinterpretations, leading to costly rework and delays. Google Stitch addresses this pain point with the introduction of a DESIGN.md file. This agent-friendly markdown document is designed to capture all essential design tokens, spacing rules, and component patterns, drawing them not just from the Stitch project itself but also from any existing website or brand asset the user provides.

The DESIGN.md file acts as a universal source of truth, facilitating interoperability across the development stack. It can be exported from Stitch and dropped into coding agents and Integrated Development Environments (IDEs) such as Claude Code, Cursor, or Gemini CLI via the MCP (Model Context Protocol) server. This allows the coding agent to match the visual system automatically, adhering to brand guidelines and component specifications without explicit, manual instruction. Industry data suggests that inefficiencies in design-to-development handoffs add an average project delay of 15-20% and significantly increase development costs. By establishing a shared, machine-readable design specification, Stitch aims to transform what was often described as a "game of telephone" into a synchronized, coherent workflow, reducing friction and accelerating time-to-market.
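The article does not describe the actual layout of a DESIGN.md file, so the following is only a minimal sketch of what "machine-readable" could mean in practice. It assumes a hypothetical format in which tokens appear as `- name: value` bullets under `## ` section headings; the sample content, the token names, and the `parse_design_tokens` helper are all illustrative and not taken from Stitch.

```python
import re

# Hypothetical DESIGN.md content; the real file's layout is not documented here.
SAMPLE_DESIGN_MD = """\
# DESIGN.md

## Colors
- primary: #635BFF
- surface: #FFFFFF

## Spacing
- unit: 8px
- section-gap: 64px
"""

# Matches bullets of the assumed form "- token-name: value".
TOKEN_LINE = re.compile(r"^-\s+([\w-]+):\s+(.+)$")


def parse_design_tokens(markdown_text):
    """Group `- name: value` bullets under their nearest `## ` heading."""
    tokens = {}
    section = None
    for line in markdown_text.splitlines():
        if line.startswith("## "):
            section = line[3:].strip().lower()
            tokens[section] = {}
            continue
        match = TOKEN_LINE.match(line.strip())
        if match and section is not None:
            name, value = match.groups()
            tokens[section][name] = value.strip()
    return tokens


tokens = parse_design_tokens(SAMPLE_DESIGN_MD)
print(tokens["colors"]["primary"])  # #635BFF
```

A coding agent given a structure like this could look up `tokens["spacing"]["unit"]` instead of guessing spacing from a screenshot, which is the kind of shared specification the handoff relies on.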

The Canvas as a Dynamic Design Thinking Environment

Beyond its input capabilities, Stitch’s infinite canvas functions as a sophisticated design thinking space. Earlier iterations of Stitch operated primarily as a glorified chat interface, where prompts generated designs that then required manual iteration. The March update has transformed this into a robust design environment. Designers can now generate multiple design variations and place them side by side for direct comparison and evaluation. The canvas supports annotation and allows branching into parallel explorations without the risk of losing original concepts.

A notable feature is the Agent Manager, which enables multiple specialized AI agents to run concurrently. For example, one agent can focus on refining typography, another on adjusting color palettes, while a third generates placeholder images. This last capability is powered by Nano Banana 2, Stitch’s context-aware image generator. Unlike generic stock image services, Nano Banana 2 produces visuals specifically tailored to the project’s context and aesthetic, ensuring consistency and relevance without additional manual curation. This multi-agent approach enhances efficiency and allows for a more holistic and iterative design process within a single environment.
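How the Agent Manager schedules its agents is not described, but the general idea of several specialized workers running at the same time can be sketched with Python's `asyncio`. Everything here is an assumption for illustration: the agent names, the tasks, and the `run_agent` stand-in, which only sleeps to simulate model latency.

```python
import asyncio


async def run_agent(name, task, delay_seconds):
    """Stand-in for one specialized agent; the sleep simulates model latency."""
    await asyncio.sleep(delay_seconds)
    return name, f"{task}: done"


async def run_agents_concurrently():
    # Agent names and tasks are illustrative, not Stitch's actual agent list.
    jobs = [
        run_agent("typography", "refine type scale", 0.03),
        run_agent("color", "adjust palette", 0.02),
        run_agent("imagery", "generate placeholders", 0.01),
    ]
    # gather() runs the coroutines concurrently and returns results in input order.
    results = await asyncio.gather(*jobs)
    return dict(results)


results = asyncio.run(run_agents_concurrently())
print(results)
```

Because the three coroutines overlap, the total wait is roughly that of the slowest agent rather than the sum of all three.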

Accelerated Prototyping and User Journey Mapping

Interactive prototyping, a feature first introduced in December 2025 and retained in the March update, further cements Stitch’s utility as an end-to-end design solution. Designers can connect individual screens into comprehensive user flows, complete with transitions, and preview entire user journeys with a simple "Play" button. A particularly powerful aspect is the AI’s ability to predict and generate logical next screens based on a current selection. This means a designer can define a three-screen flow, and the AI can intelligently expand it to eight screens or more without requiring manual prompts for each additional step.
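A connected flow of this kind can be thought of as a small graph of screens and transitions. The sketch below is a simplified, linear illustration of that idea, not Stitch's internal model; the screen names, transition labels, and the `play` function are hypothetical.

```python
# Hypothetical flow: each screen maps to (next_screen, transition), or None at the end.
flow = {
    "welcome": ("sign_up", "slide-left"),
    "sign_up": ("dashboard", "fade"),
    "dashboard": None,
}


def play(flow, start):
    """Walk the flow from `start`, returning the screens visited in order."""
    visited = []
    current = start
    # Stop at the end of the flow, or if a screen repeats (guards against loops).
    while current is not None and current not in visited:
        visited.append(current)
        step = flow.get(current)
        current = step[0] if step else None
    return visited


print(play(flow, "welcome"))  # ['welcome', 'sign_up', 'dashboard']
```

Extending a three-screen flow then amounts to adding entries to the mapping, which is the step the article says the AI can propose on its own.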

This capability significantly closes the gap between initial design exploration and stakeholder presentations. By rapidly generating interactive prototypes that simulate real user experiences, design teams can gather feedback earlier, validate concepts faster, and iterate with greater agility. This reduces the time and resources traditionally required to translate static mockups into presentable, testable experiences.

Seamless Export and Integration into Development Workflows

A key advantage of Google Stitch for product teams is its commitment to producing clean, functional code. For every design it generates, Stitch also outputs production-ready HTML/CSS. This output is not merely a static representation; it can be exported to popular design and development tools. Exports to Figma keep named layers and Auto Layout properties, details often lost in less sophisticated AI-to-design exports. The code can also be sent to Google AI Studio for backend integration or directly to Antigravity IDE for comprehensive application development.

For solo entrepreneurs, startups, and early-stage teams, this streamlined path from a conceptual "vibe design" to a working, interactive interface is revolutionary. What once took days, or even weeks, involving multiple tools and handoffs, can now be accomplished in a matter of hours. This capability democratizes access to high-quality UI development, empowering innovators to bring their ideas to market with unprecedented speed.

Industry Implications: The Evolving Role of the Designer

The advent of tools like Google Stitch and the concept of "vibe design" inevitably sparks discussions about the future role of the human designer. If AI systems can absorb the tedious, early-stage wireframing and even generate high-fidelity prototypes, where does the competitive edge for human designers lie? The consensus among industry analysts is that the skill premium will shift decisively towards judgment, strategic thinking, and aesthetic discernment.

Designers will become less like artisans painstakingly crafting every pixel and more like conductors or curators, guiding powerful AI orchestras. The value will reside in the ability to articulate a precise "vibe," to immediately identify which AI-generated direction is superior, to detect what "feels off" or misaligned with brand values, and to understand how a design might perform or break at scale. A designer who can feed Stitch an insightful prompt and quickly discern the strongest output will be far faster than one who builds every frame manually. In other words, the tool is not replacing taste or strategic insight; rather, it is making these uniquely human attributes the primary and most valuable inputs. Design education and professional development will need to adapt, focusing less on manual tool proficiency and more on critical thinking, ethical AI use, and the art of effective prompting.

Current Availability and Future Outlook

Google Stitch is currently free during its beta phase and requires only a Google account. It runs entirely within a web browser, and users are allocated 350 standard generations and 50 experimental-mode generations per month. While powerful, Stitch in its current 2026 beta iteration has certain limitations: it does not yet support shared component libraries, real-time multiplayer editing, or comprehensive version history, features commonly found in established design platforms like Figma. Consequently, the recommended workflow for professional teams in 2026 is to use Stitch for rapid exploration and initial concept generation, refine the designs in a collaborative tool like Figma, and then build the application in an IDE such as Antigravity.
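To put the monthly allowance in practical terms, the short calculation below spreads it across a working month. The 350 and 50 figures come from the beta allowance described above; the 22 working days and the `daily_budget` helper are assumptions made purely for illustration.

```python
STANDARD_PER_MONTH = 350      # from the beta allowance described above
EXPERIMENTAL_PER_MONTH = 50   # from the beta allowance described above
WORKING_DAYS = 22             # assumption: a typical month of working days


def daily_budget(monthly_allowance, working_days=WORKING_DAYS):
    """Whole generations available per working day without exceeding the month."""
    return monthly_allowance // working_days


print(daily_budget(STANDARD_PER_MONTH))      # 15
print(daily_budget(EXPERIMENTAL_PER_MONTH))  # 2
```

Under that assumption, a single designer has about 15 standard generations a day, so heavy side-by-side exploration could use up the month's quota well before it ends.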

However, the rapid pace of development in AI tools suggests that these collaborative and versioning features are likely on the roadmap. The integration with Google’s broader AI ecosystem and its potential for enterprise adoption are significant. As Stitch evolves, it could become a central hub for end-to-end product design, further blurring the lines between concept, design, and development.

Google Stitch, through its pioneering "vibe design" canvas, represents a pivotal moment in the evolution of UI/UX design tools. By harnessing the power of generative AI, voice interaction, and seamless integration, it promises to accelerate creativity, streamline workflows, and redefine what it means to design in the digital age. The canvas is open at stitch.withgoogle.com, inviting designers worldwide to experience this new frontier.