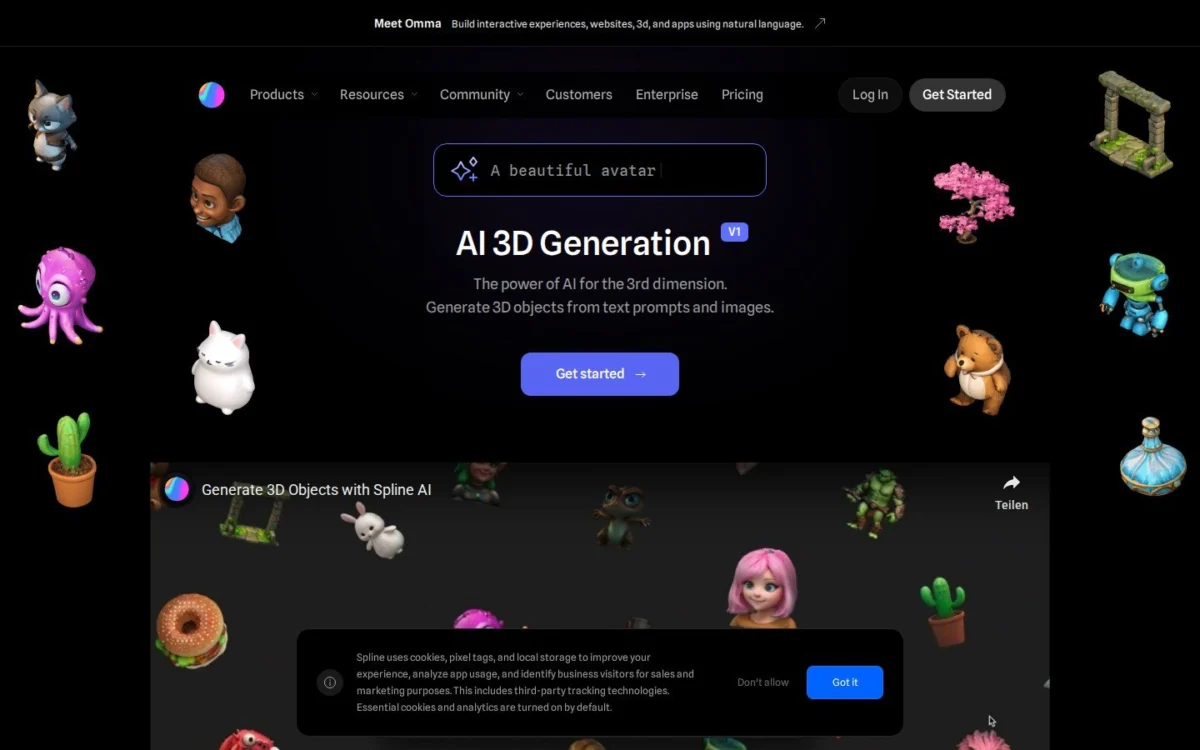

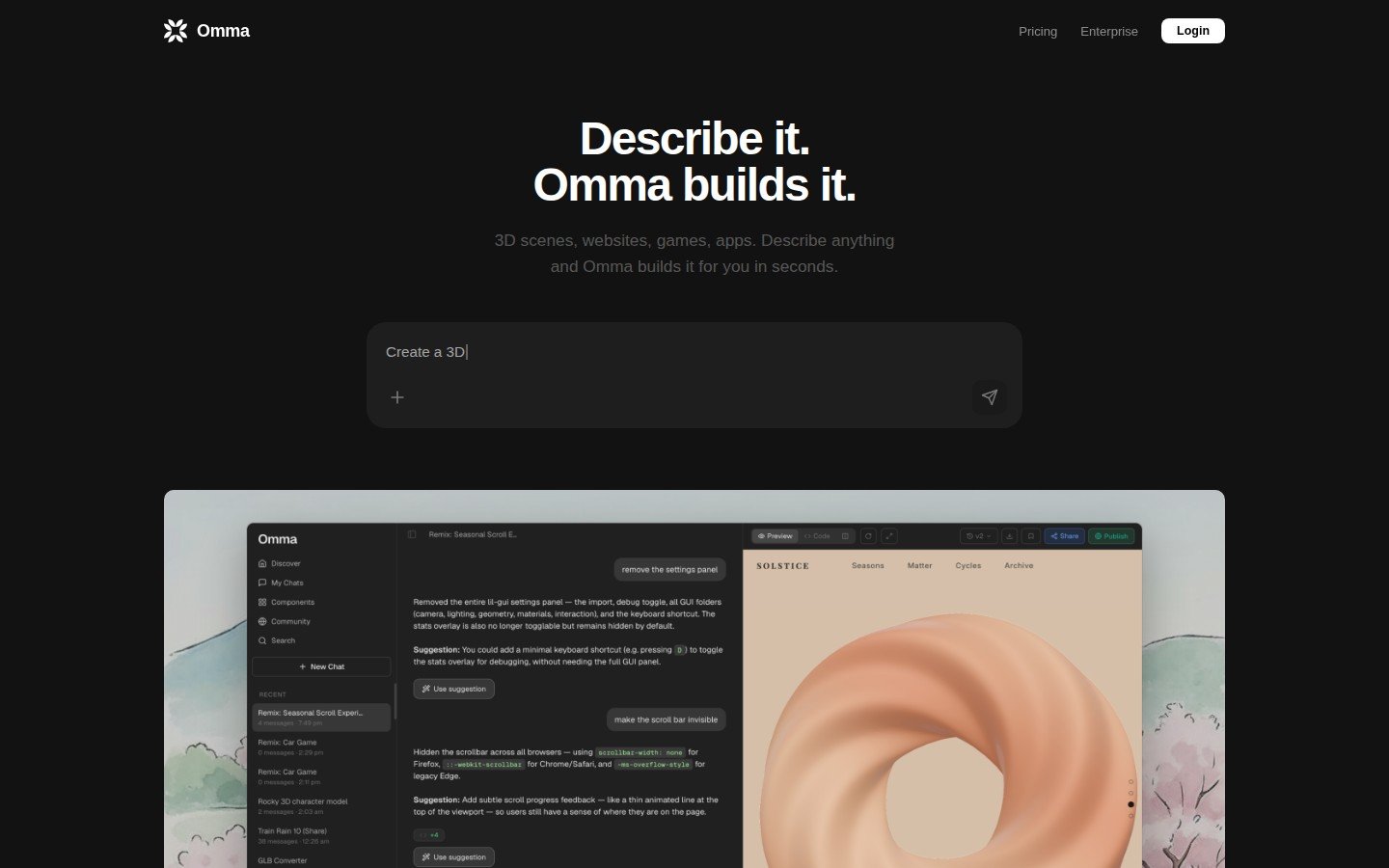

The launch of Omma on March 24, 2026, by Spline marks a significant inflection point in the landscape of digital design and web development. Positioned as a revolutionary AI canvas, Omma is engineered to transform natural language input into fully interactive web experiences, encompassing complex 3D scenes, sophisticated motion design, intricate animations, and functional user interfaces, all within a single, unified environment. Unlike many existing AI-powered design tools that often yield prototypes or static components, Omma is designed to produce production-ready, deployable assets, effectively bridging the gap between conceptualization and live deployment.

Spline, a company founded in 2020, has rapidly grown to serve over three million designers globally, with its tools embraced by prominent organizations such as Google, Datadog, Robinhood, and UPS. The introduction of Omma leverages Spline’s established expertise in real-time 3D design, extending its capabilities through advanced artificial intelligence to an even broader audience. This new platform is not merely an incremental update but a strategic expansion aimed at redefining the creative workflow for interactive digital content.

The Multi-Agent AI Architecture Behind Omma

At the core of Omma’s groundbreaking functionality is a sophisticated multi-agent AI system that operates in parallel to fulfill user requests. This innovative architecture orchestrates several specialized AI agents, each tasked with a distinct aspect of the creative process. One agent, powered by large language models (LLMs), is responsible for code generation, translating natural language commands into functional web code. Concurrently, a second agent focuses on 3D mesh creation, intelligently constructing three-dimensional models based on the prompt. A third agent specializes in image generation, producing textures, backgrounds, or other visual assets required for the scene.

This parallel operation allows users to initiate complex creative tasks with remarkable simplicity. For instance, a user might type a command such as "/3d create a futuristic cityscape with flying cars and neon lights". The multi-agent system then springs into action, simultaneously generating the underlying code for interactivity, sculpting the 3D models of buildings and vehicles, and rendering appropriate visual elements. Crucially, the generated 3D models are automatically placed into the scene, and the resulting GLB files are compressed and optimized for web deployment to keep load times low. A defining feature of Omma is that every element generated by the AI remains fully editable through Spline’s visual tools, so designers can refine and customize the AI’s output. This balance of automated generation and manual control addresses a key concern creative professionals have about AI’s role in design: maintaining artistic agency.
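The fan-out described above can be illustrated with a minimal sketch. It assumes three hypothetical agent functions (`code_agent`, `mesh_agent`, `image_agent`) that return placeholder strings after a short simulated delay; none of these names or return values come from Spline, and this is not a description of how Omma is actually implemented. It only shows the general pattern of dispatching one prompt to several specialized workers concurrently and collecting their results into a single bundle.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class SceneBundle:
    """Combined output of the three (hypothetical) agents."""
    code: str
    mesh: str
    image: str


# Hypothetical stand-ins: a real agent would call a model instead of sleeping.
async def code_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)  # simulate model latency
    return f"// interaction code for: {prompt}"


async def mesh_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)
    return f"mesh.glb <- {prompt}"


async def image_agent(prompt: str) -> str:
    await asyncio.sleep(0.01)
    return f"texture.png <- {prompt}"


async def generate_scene(prompt: str) -> SceneBundle:
    # gather() runs the three coroutines concurrently and returns
    # their results in the same order as the arguments.
    code, mesh, image = await asyncio.gather(
        code_agent(prompt), mesh_agent(prompt), image_agent(prompt)
    )
    return SceneBundle(code=code, mesh=mesh, image=image)


bundle = asyncio.run(
    generate_scene("a futuristic cityscape with flying cars and neon lights")
)
print(bundle.mesh)
```

Because the three calls overlap, total latency in this pattern is bounded by the slowest agent rather than the sum of all three, which is the usual motivation for running specialized generators side by side.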

From Prompt to Production: Eliminating Workflow Bottlenecks

A central objective of the Omma AI canvas is to streamline the digital content creation pipeline by eliminating traditional workflow bottlenecks, particularly the notorious prototype-to-developer handoff. This phase, often fraught with communication challenges, reinterpretations, and iterative adjustments, can significantly prolong project timelines and escalate costs. Alejandro Leon, CEO of Spline, articulated this vision, emphasizing the platform’s ability to offer "flexibility for teams that need speed without sacrificing control." This statement underscores Omma’s promise to empower individuals and small teams to achieve what previously required a multi-disciplinary team comprising 3D artists, motion designers, front-end developers, and UI/UX specialists.

The platform’s ability to export to web, mobile, and extended reality (XR) devices further enhances its versatility. Moreover, Omma simplifies the deployment process by handling custom domain assignment and facilitating direct deployment to production environments. This end-to-end solution means that even individuals without prior design or coding experience can conceive, create, and ship a finished, interactive digital experience. This democratization of high-fidelity interactive design has profound implications for various sectors, from marketing agencies looking to quickly launch engaging campaigns to small businesses seeking to establish a dynamic online presence without extensive technical investment.

Spline’s Growth, Funding, and Product Ecosystem

Spline’s journey to the launch of Omma has been marked by consistent innovation and strategic growth. Founded in 2020, the company quickly established itself as a leader in real-time 3D design, providing an accessible editor that made 3D creation more approachable. To date, Spline has successfully raised $32 million in funding from a consortium of prominent investors, including Third Point Ventures, Gradient Ventures, and Y Combinator. This substantial financial backing has fueled its research and development efforts, culminating in the sophisticated AI capabilities seen in Omma.

The company’s product portfolio now comprises three distinct yet synergistic offerings:

- The core Spline editor: This remains the foundation for real-time 3D design, offering robust tools for creating and animating 3D objects and scenes.

- Hana: A specialized tool dedicated to 2D motion design, catering to animators and graphic designers seeking dynamic visual effects.

- Omma AI canvas: The latest addition, integrating AI-powered generation for interactive 3D web experiences, represents a significant leap forward in automating complex design tasks.

This integrated ecosystem positions Spline as a comprehensive solution for designers working across various dimensions and levels of interactivity, reinforcing its commitment to empowering creative professionals with cutting-edge technology. Omma’s pricing is tiered: the Professional plan starts at $29 per month, with individual credit purchases available, and an Enterprise tier serves larger organizations. This structure is intended to cater to a diverse user base, from freelancers to large corporations.

Omma AI Canvas in the Evolving Competitive Landscape

The market for AI-powered web design tools has seen rapid expansion, with several players emerging to address different facets of UI generation. Tools like Vercel’s v0, Bolt, and Lovable have gained traction by enabling users to generate web user interfaces from text prompts. However, a critical distinction for most of these existing solutions is their focus on producing flat, component-based interfaces. While highly efficient for standard web layouts and forms, they generally lack the capacity for integrated 3D elements, complex motion graphics, or deep interactivity without significant manual intervention or external tooling.

The Omma AI canvas differentiates itself by uniquely combining 3D generation, image generation, and coded interactivity within a single, cohesive canvas. This integration positions Omma in a distinctive niche, bridging the gap between traditional design-heavy 3D tools and code-forward web generators. For motion designers, in particular, Omma presents a paradigm shift, offering a significantly faster path to shipping interactive work by drastically reducing pipeline complexity. Instead of designing 3D assets in one program, animating them in another, and then relying on developers to integrate them into a functional web environment, Omma promises an all-in-one solution.

This integrated approach addresses a long-standing challenge in digital production: the fragmentation of tools and expertise. By consolidating these capabilities, Omma aims to unlock new creative possibilities, allowing designers to experiment with highly immersive and dynamic web experiences that were previously too time-consuming or technically demanding to produce. The implication is a potential realignment of roles within creative teams, with a greater emphasis on conceptualization and prompt engineering, and less on the laborious execution of individual components.

Technical Deep Dive: Optimization and Editability

The promise of "production-ready" output from Omma is underpinned by several crucial technical considerations. The automatic compression and optimization of GLB files for the web are vital. GLB is the binary container format of glTF (GL Transmission Format), a standard for 3D scenes and models designed for efficient transmission and loading of 3D content. By ensuring these files are optimized, Omma addresses one of the primary hurdles of incorporating 3D into web experiences: performance. Unoptimized 3D assets can lead to slow loading times and poor user experiences, negating the benefits of immersive content. Omma’s built-in optimization is meant to keep the AI-generated 3D content lightweight and fast, making it suitable for a broad range of devices and network conditions.
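To make the GLB format a little more concrete, here is a small sketch of the container layout defined by the glTF 2.0 specification: a 12-byte header (magic, version, total length) followed by length-prefixed chunks, the first of which holds the scene description as JSON. The helper names `build_minimal_glb` and `read_glb_header` are illustrative only, and the example says nothing about how Omma compresses or optimizes its files, which is not detailed here.

```python
import json
import struct

GLB_MAGIC = 0x46546C67   # ASCII "glTF" read as a little-endian uint32
CHUNK_JSON = 0x4E4F534A  # ASCII "JSON" read as a little-endian uint32


def build_minimal_glb(gltf: dict) -> bytes:
    """Pack a glTF JSON document into a GLB container with one JSON chunk."""
    payload = json.dumps(gltf, separators=(",", ":")).encode("utf-8")
    # The JSON chunk is padded with spaces to a 4-byte boundary.
    payload += b" " * (-len(payload) % 4)
    chunk = struct.pack("<II", len(payload), CHUNK_JSON) + payload
    # Header: magic, container version 2, total file length in bytes.
    header = struct.pack("<III", GLB_MAGIC, 2, 12 + len(chunk))
    return header + chunk


def read_glb_header(data: bytes) -> dict:
    """Return the version, declared length, and parsed JSON chunk."""
    magic, version, length = struct.unpack_from("<III", data, 0)
    if magic != GLB_MAGIC:
        raise ValueError("not a GLB file")
    chunk_len, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != CHUNK_JSON:
        raise ValueError("first chunk must be JSON")
    doc = json.loads(data[20:20 + chunk_len].decode("utf-8"))
    return {"version": version, "length": length, "json": doc}


glb = build_minimal_glb({"asset": {"version": "2.0"}})
info = read_glb_header(glb)
print(info["version"], info["length"] == len(glb), info["json"]["asset"]["version"])
```

A real model would add a second, binary chunk carrying vertex and texture data; that chunk is typically where optimization passes such as mesh or texture compression reduce file size.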

Furthermore, the commitment to keeping every element editable through Spline’s visual tools post-generation is a cornerstone of Omma’s design philosophy. This feature is crucial for professional designers who require granular control over their creations. While AI can accelerate the initial generation phase, the ability to tweak, refine, and iterate on specific aspects – from material properties and lighting to animation curves and UI layouts – ensures that the final output aligns precisely with the designer’s vision and brand guidelines. This hybrid workflow, combining AI-powered speed with human-centric control, is likely to be a key factor in Omma’s adoption among professional creative teams.

The multi-agent system itself represents a significant advancement in AI for creative applications. The coordinated effort of an LLM for code, a generative AI for 3D meshes, and another for images showcases a sophisticated understanding of how to deconstruct and reconstruct complex digital experiences from simple text prompts. The challenge lies not just in generating these individual components but in seamlessly integrating them into a coherent, functional, and aesthetically pleasing whole, which Omma purports to achieve.

Future Outlook and Implications for the Creative Industry

While the 24-second launch demo of the Omma AI canvas shows immediate, clean results and offers a compelling glimpse of its potential, its performance at scale remains an open question. The true test will come as users attempt to generate increasingly complex 3D scenes, intricate motion designs, and highly interactive applications under production-level demands. Factors such as the fidelity of generated models, the robustness of the code, the consistency of design elements across multiple generations, and the learning curve for prompt engineering will all contribute to its long-term success and adoption.

Nevertheless, the ambition behind Omma is clear and profound: to empower a single designer, equipped with nothing more than a text prompt, to deliver a complete, interactive digital experience that once necessitated the collaborative efforts of an entire team. This vision has several potential implications for the creative industry:

- Democratization of 3D and Interactive Design: Omma could significantly lower the barrier to entry for creating sophisticated 3D web content, making it accessible to individuals and businesses without specialized skills or large budgets.

- Shift in Skillsets: Creative professionals may see a shift from manual execution towards prompt engineering, AI supervision, and high-level conceptual design. The ability to articulate complex ideas effectively to an AI will become a valuable skill.

- Accelerated Development Cycles: Projects that traditionally took weeks or months to develop could potentially be brought to fruition in days or hours, significantly increasing productivity and responsiveness to market trends.

- New Business Models: The ease of creating interactive experiences could foster new types of digital products and services, particularly in areas like personalized web experiences, interactive e-commerce, and immersive storytelling.

- Competitive Pressure: Established design software companies and web development platforms will face renewed pressure to integrate similar AI capabilities or risk being outmaneuvered by more agile, AI-driven solutions.

- Ethical Considerations: As AI takes on more creative tasks, discussions around authorship, intellectual property, and the potential for AI-generated content to dilute human creativity will undoubtedly intensify.

The Omma AI canvas represents a bold step towards an AI-augmented future for digital creation. Its promise to deliver production-ready 3D websites, motion design, and interactive apps from simple text prompts could reshape how digital content is conceived, designed, and deployed. As the platform goes live at omma.build, the industry watches closely to see how this ambitious vision unfolds and transforms the creative landscape.