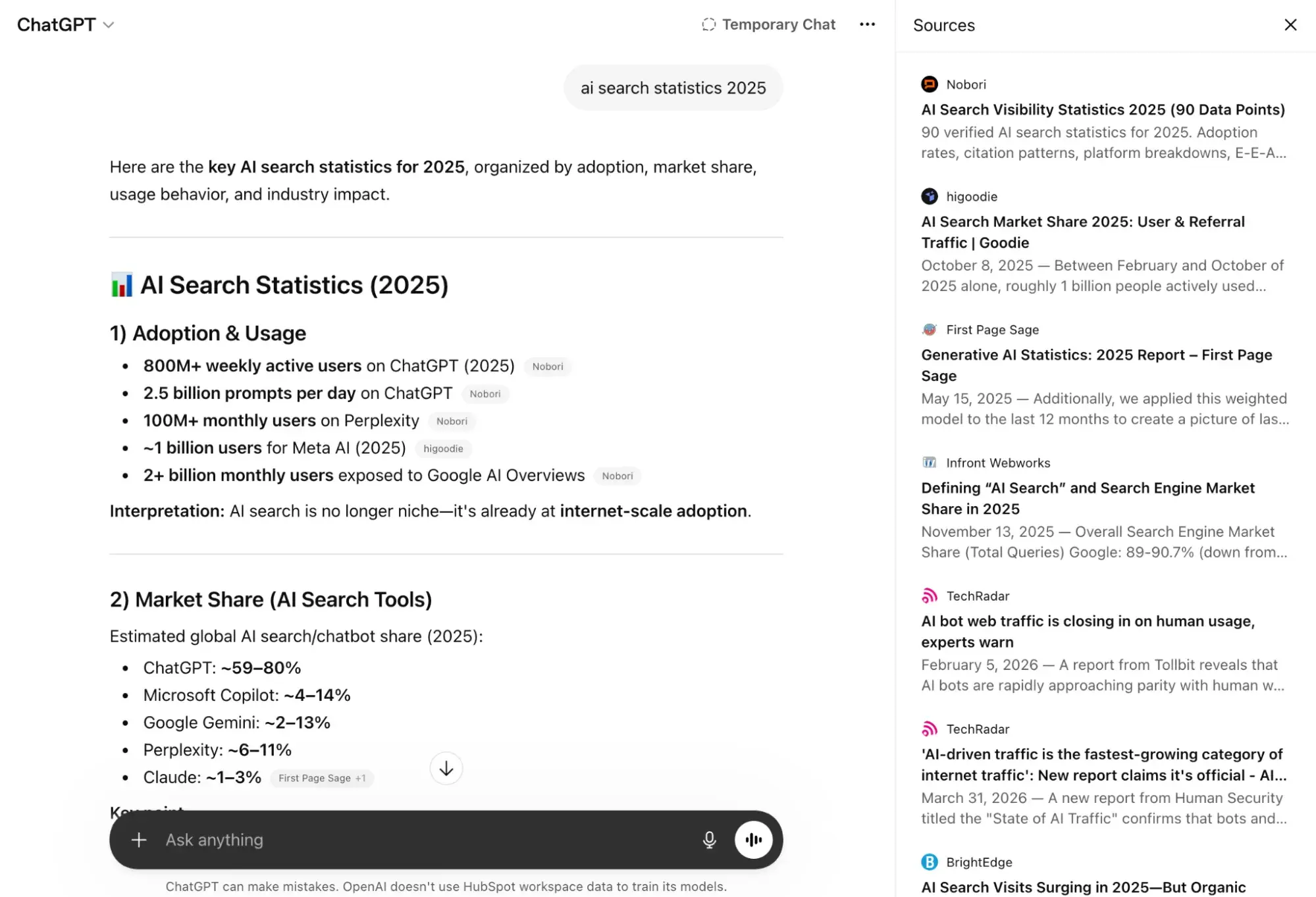

Digital discovery is shifting as traditional search engine optimization (SEO) evolves into answer engine optimization (AEO). As of early 2026, industry data indicates that a growing majority of internet users rely on generative AI platforms like ChatGPT for complex queries that previously required multiple manual searches. For businesses and digital publishers, appearing in these AI-generated responses is now a critical component of lead generation and brand authority. Case studies from major marketing firms, including HubSpot, illustrate the potential of this shift, with some reporting a 1,850% increase in qualified leads over the past year driven specifically by refined AEO strategies.

The Evolution of Discovery: From Keywords to Conversational Answers

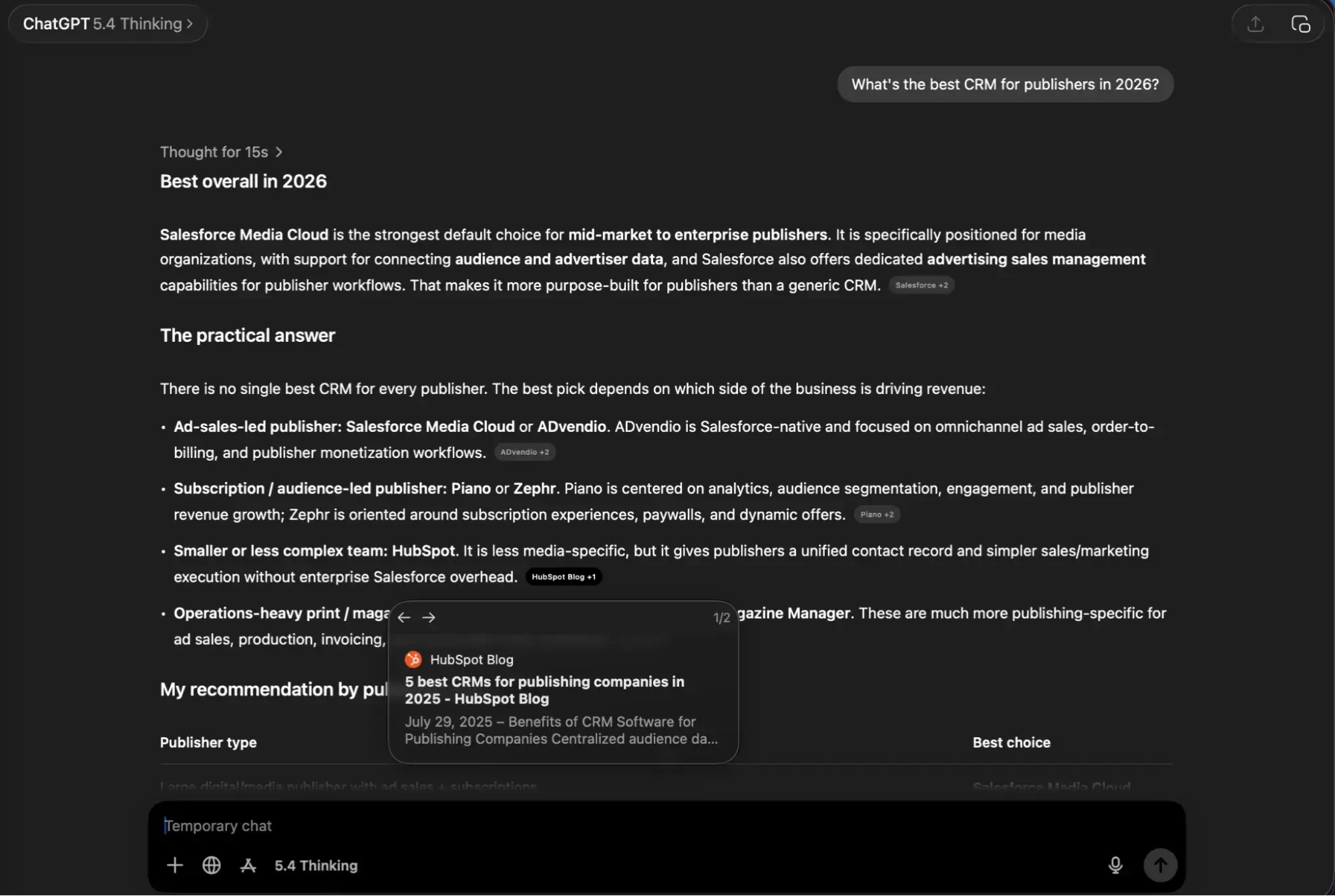

The transition from search engines to answer engines began in earnest in late 2022 with the public launch of ChatGPT. The maturation of the technology in late 2025 and early 2026, particularly with the release of the GPT-5.4 model, has since solidified AEO as a distinct discipline. Unlike traditional search, which returns a list of blue links, ChatGPT synthesizes information from multiple sources into a single, cohesive response.

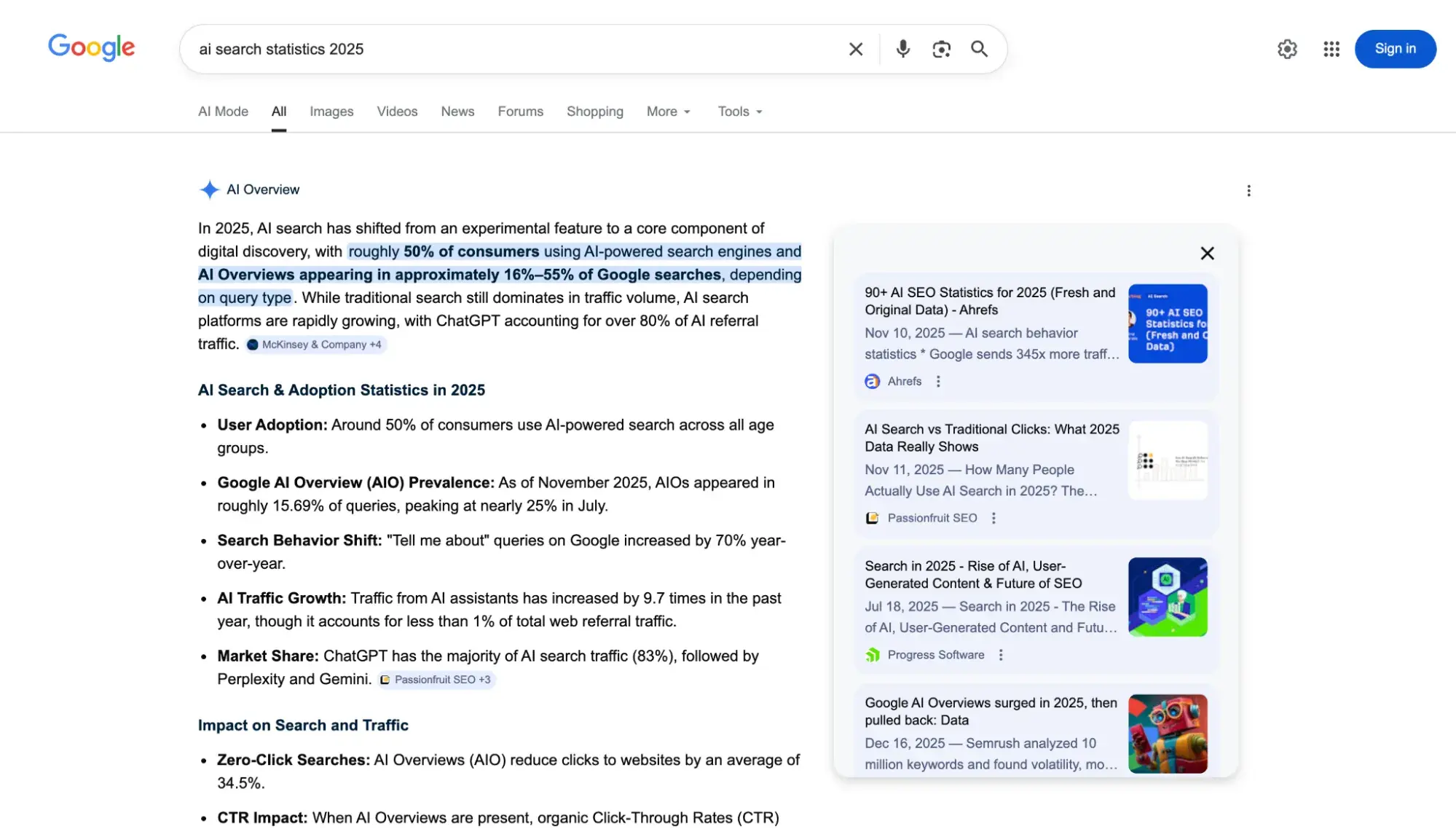

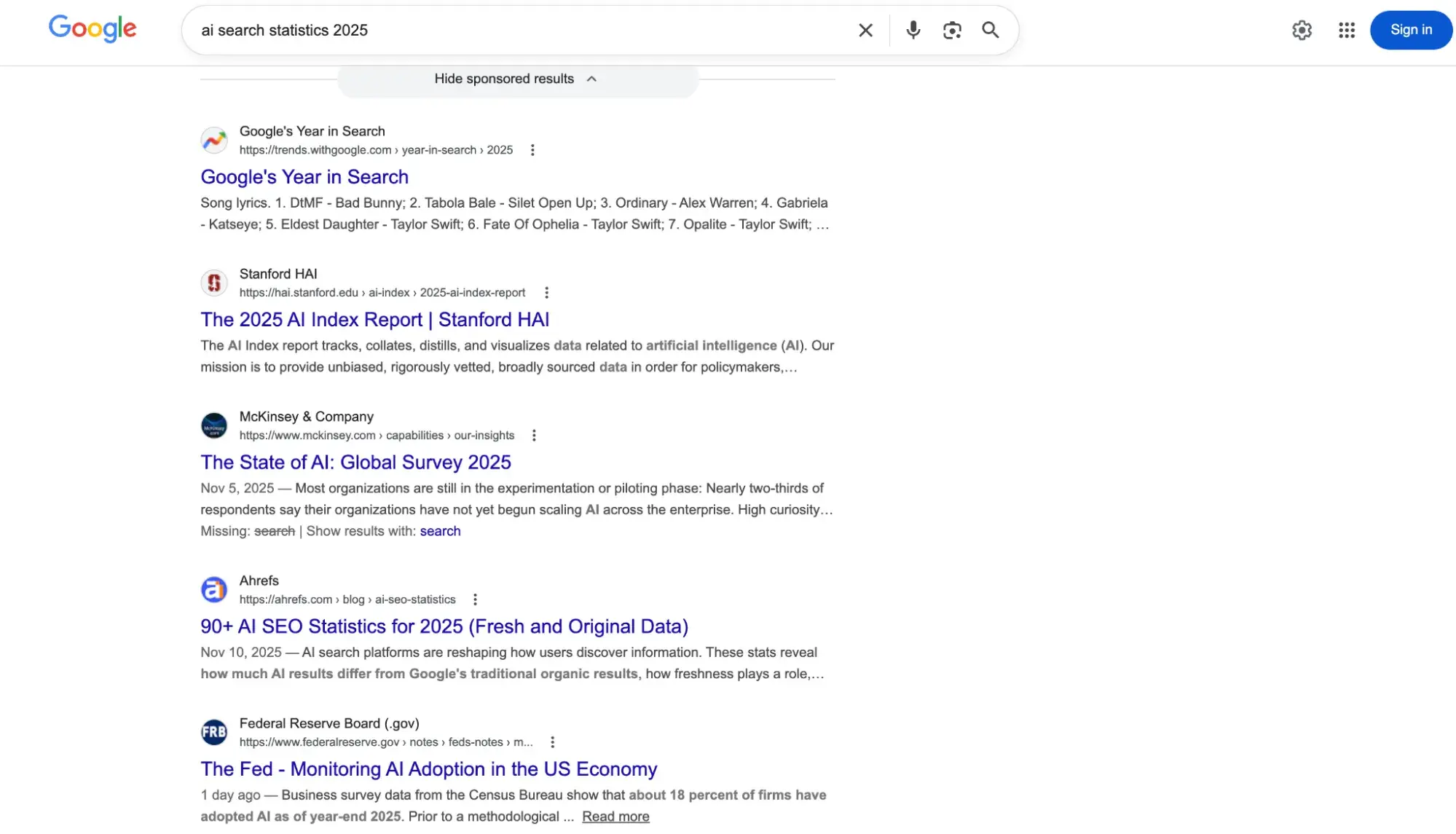

This evolution has forced a reevaluation of how content is indexed and valued. While Google remains a dominant force, its "AI Overviews" now compete directly with ChatGPT’s "Search" functionality. Market analysts note that the criteria for ranking in a standard search engine results page (SERP) do not always align with the criteria for being cited by an artificial intelligence model. Consequently, practitioners are moving away from simple keyword density toward "entity-based" authority and "answer-first" content structures.

Understanding the Dual Sourcing Mechanism of ChatGPT

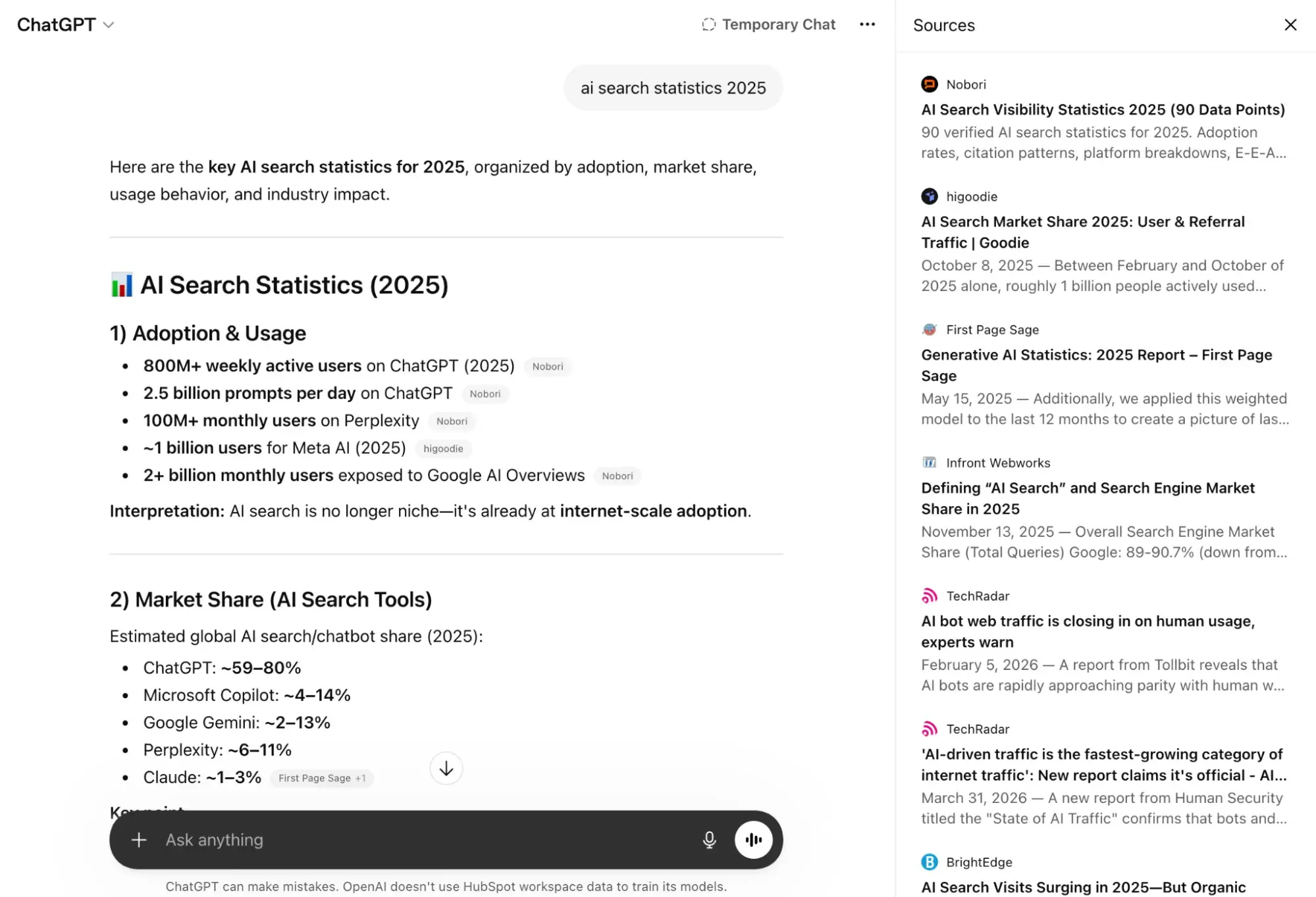

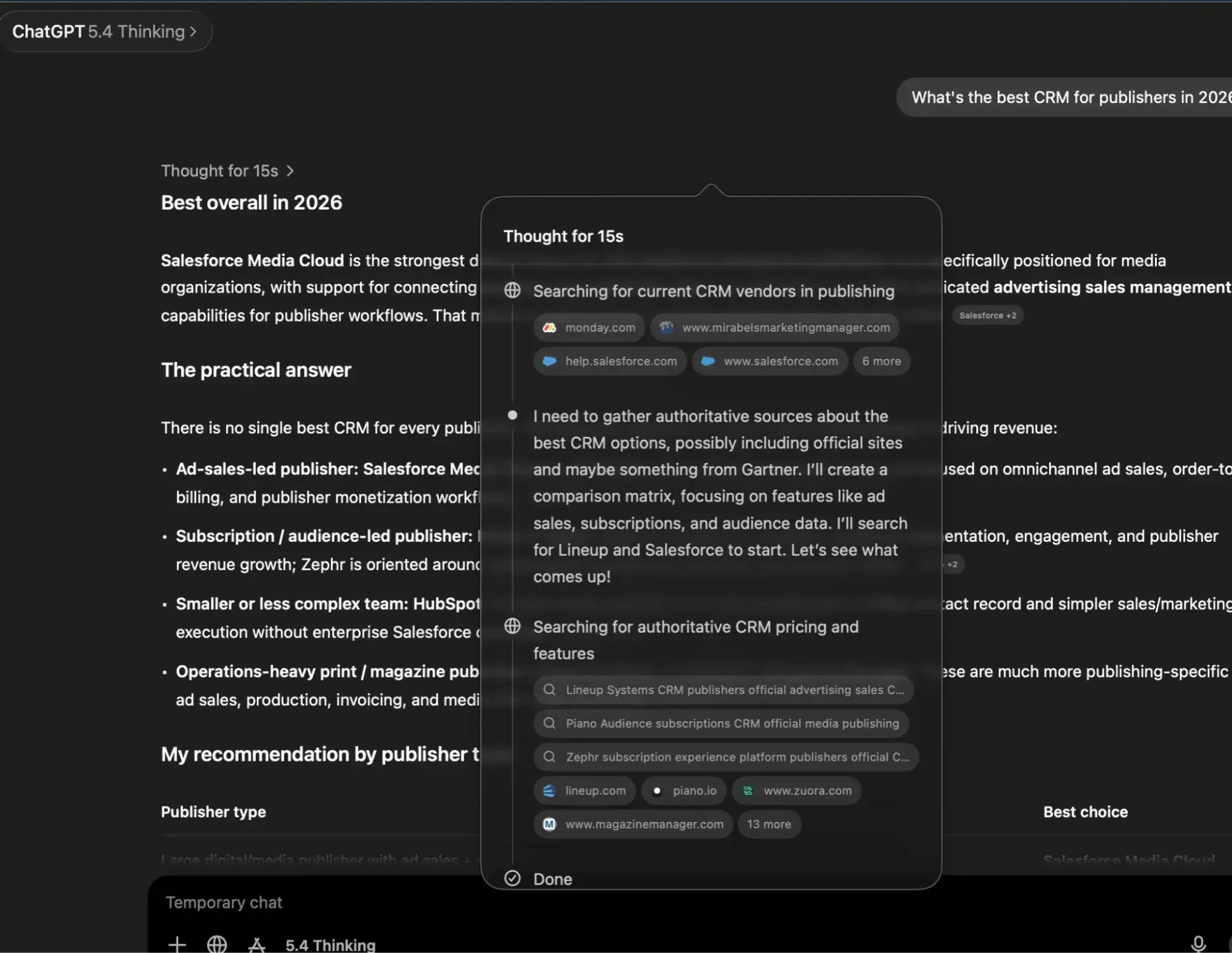

To effectively optimize for ChatGPT, one must understand the two primary ways the model retrieves information: training data and live web search.

Training Data and Knowledge Cut-offs

ChatGPT models are trained on massive datasets comprising public internet archives, third-party partnerships, and user-provided data. As of the current GPT-5.4 iteration, the knowledge cut-off date is August 2025. For a brand or entity to appear in answers that rely solely on the model’s internal weights, information about it must have been established and widely recognized before this date. The model works somewhat like a person who has read an entire library: it does not "look up" facts in its training data but predicts the most probable response based on learned patterns and relationships between concepts.

Live Web Search and "Query Fan-out"

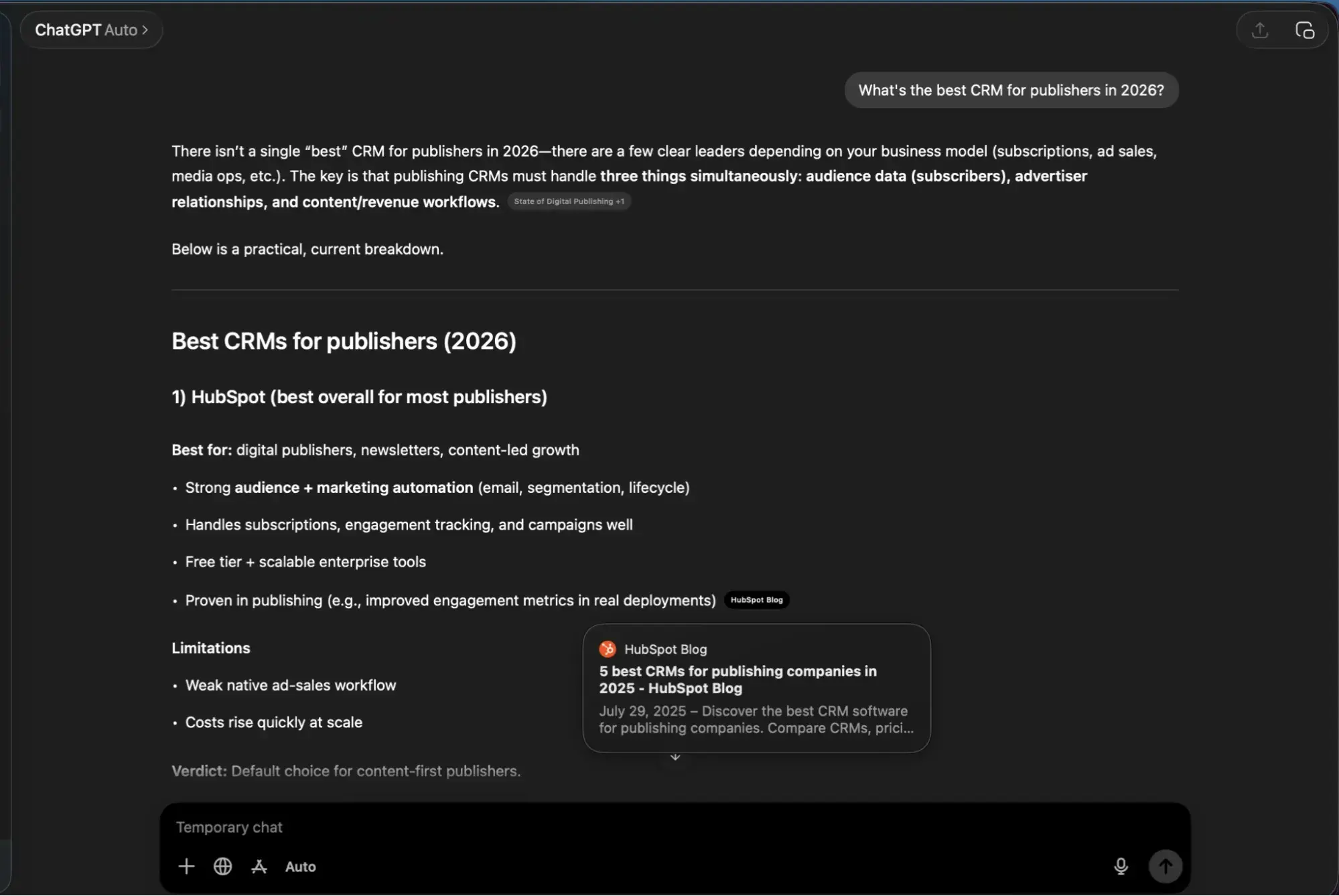

For time-sensitive information—such as 2026 pricing, current news, or recent product launches—ChatGPT utilizes live web search. OpenAI primarily leverages Bing as its search provider, though internal experiments and third-party tracking have confirmed that Google Search is also utilized in specific contexts.

A critical phenomenon in this process is "query fan-out." When a user enters a complex prompt, ChatGPT often breaks it down into multiple sub-queries to ensure a comprehensive answer. For example, a prompt regarding the "best CRM for publishers in 2026" may trigger sub-searches for "CRM features for media companies," "HubSpot vs. Salesforce for publishing," and "2026 CRM reviews." This means that visibility depends not just on the primary keyword, but on being the authoritative answer for the various sub-components of a user’s intent.

Technical Prerequisites for AI Visibility

Before content can be cited, it must be accessible to the specialized crawlers used by OpenAI. This requires a precise configuration of a website’s technical infrastructure.

Crawler Management and Robots.txt

OpenAI utilizes two distinct crawlers: GPTBot and OAI-SearchBot. The former is used to collect data for future model training, while the latter is specifically designed to find results for the live search feature. Webmasters must ensure their robots.txt file allows access to these bots. Blocking GPTBot may protect intellectual property from being used to train future models, but blocking OAI-SearchBot will effectively render a website invisible to ChatGPT’s real-time search results.
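As a minimal sketch of the configuration described above, the following Python snippet parses a hypothetical robots.txt that opts out of training (GPTBot) while staying visible to live search (OAI-SearchBot), then checks what each crawler may fetch. The file contents, the `example.com` URL, and the `crawler_access` helper are illustrative assumptions. Python's standard-library parser is used only as a sanity check, and real crawlers may interpret edge cases differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the training crawler, allow the search crawler.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /

User-agent: *
Allow: /
"""


def crawler_access(robots_txt: str, url: str, agents: list[str]) -> dict[str, bool]:
    """Return, for each user agent, whether robots_txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# example.com/pricing is a placeholder URL used only for illustration.
access = crawler_access(
    ROBOTS_TXT, "https://example.com/pricing", ["GPTBot", "OAI-SearchBot"]
)
print(access)  # {'GPTBot': False, 'OAI-SearchBot': True}
```

Running the same check against a site's real robots.txt text is a quick way to catch a blanket `Disallow` rule that unintentionally covers OAI-SearchBot.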

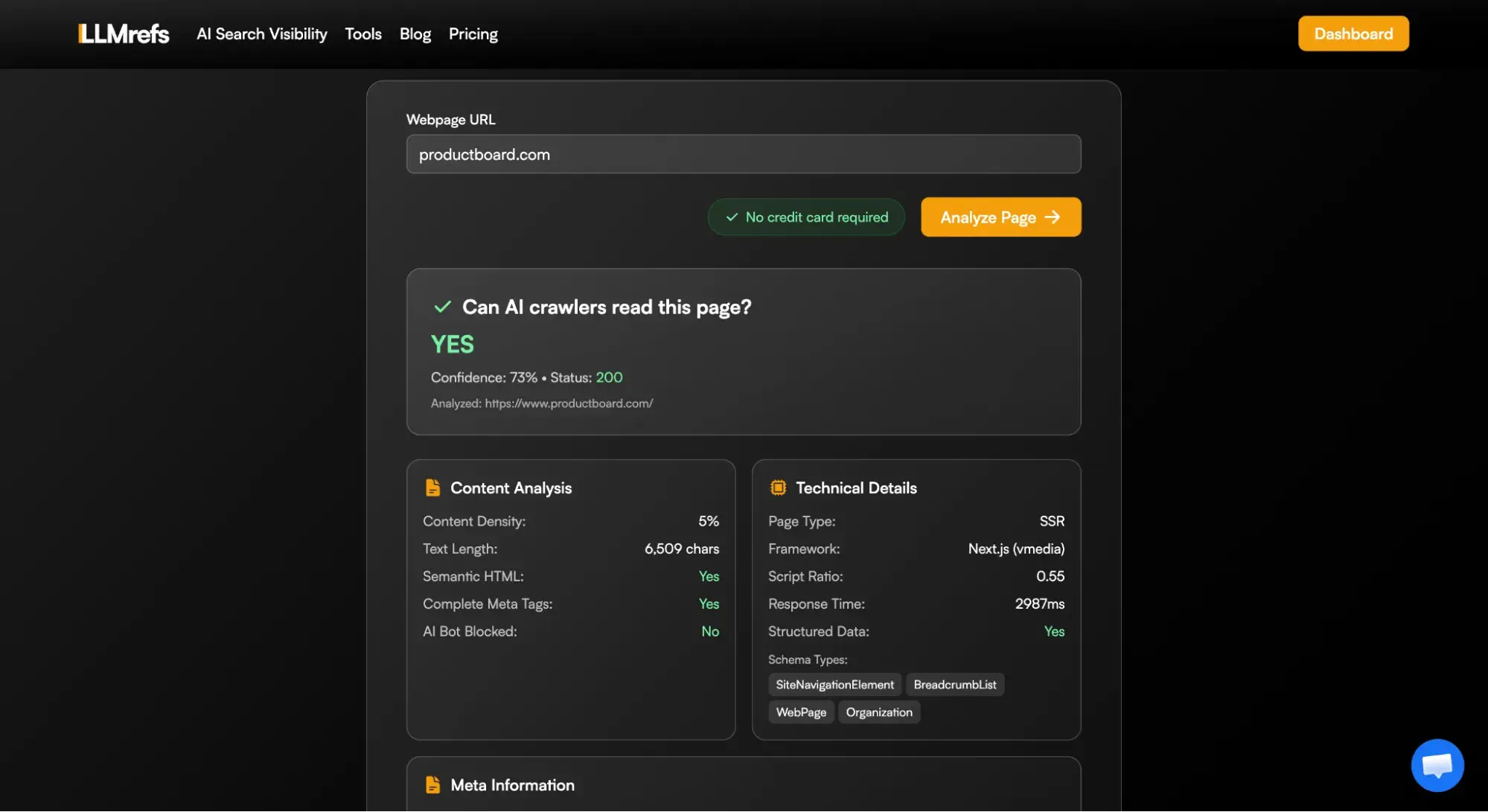

The JavaScript Barrier

A common pitfall for modern websites is a heavy reliance on client-side JavaScript. While modern browsers render these sites easily, AI crawlers often cannot "see" content that is absent from the initial HTML response. Industry experts recommend server-side rendering (SSR) or pre-rendering so that the most vital information is available immediately. Testing tools, such as AI crawlability checkers, have become essential for identifying these structural failures.
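One rough way to test for this problem is to look only at the text present in the raw HTML, without executing any JavaScript. The sketch below does that with Python's standard `html.parser`. The two sample pages, the `key_fact` string, and the helper names are hypothetical, and the approach is a simplified stand-in for how a non-rendering crawler might read a page, not a description of OpenAI's actual pipeline.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text from raw HTML, ignoring the contents of script and style tags."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is outside script/style blocks.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def text_in_initial_html(html: str) -> str:
    """Return the text a crawler could read without running JavaScript."""
    extractor = VisibleTextExtractor()
    extractor.feed(html)
    extractor.close()
    return " ".join(extractor.chunks)


# Hypothetical pages: one fills an empty div via JavaScript, one ships the text.
CLIENT_RENDERED = (
    '<html><body><div id="root"></div>'
    '<script>render("Plans start at $29/month")</script></body></html>'
)
SERVER_RENDERED = (
    "<html><body><main><h1>Pricing</h1>"
    "<p>Plans start at $29/month</p></main></body></html>"
)

key_fact = "Plans start at $29/month"
print(key_fact in text_in_initial_html(CLIENT_RENDERED))  # False
print(key_fact in text_in_initial_html(SERVER_RENDERED))  # True
```

If a page's key facts only show up after rendering in a browser, that is a signal to consider SSR or pre-rendering for that page.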

The "Answer-First" Content Strategy

Content should follow the "inverted pyramid" structure, which puts the most important information first and suits how LLMs extract answers. Analyses from February 2026 indicate that about 44.2% of ChatGPT citations come from the top 30% of a page’s content.

Leading with the Conclusion

To maximize the chance of being cited, writers should provide a direct, concise answer to the primary question in the opening paragraph. This "answer-first" phrasing provides the crawler with a clear snippet to extract. Following the direct answer, the article can then expand into nuance, supporting evidence, and data. This structure serves both the AI and the human reader, who often skims for immediate value.

Avoiding "Information in Images"

A significant mistake in 2026 digital publishing is placing critical data, such as pricing tables or specifications, solely within images or infographics. While human readers appreciate the visual, ChatGPT’s current crawler architecture fetches raw HTML and extracts text. It cannot reliably interpret graphics, and it does not read alt text as effectively as on-page text. Consequently, any data intended for AI citation must be presented in a parsable format, such as bulleted lists or standard HTML tables.
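To illustrate the difference, the sketch below extracts rows from the same pricing data published two ways: as an image and as a standard HTML table. The markup, plan names, prices, and the `extract_rows` helper are invented for illustration, and the parser is a simple text-only extractor rather than a model of any specific crawler.

```python
from html.parser import HTMLParser


class TableRows(HTMLParser):
    """Collects the text of each table row as a list of cell strings."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None   # cells of the row currently being read
        self._cell = None  # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


def extract_rows(html: str) -> list[list[str]]:
    parser = TableRows()
    parser.feed(html)
    parser.close()
    return parser.rows


# Hypothetical markup: identical pricing data as an image and as a table.
AS_IMAGE = '<img src="pricing.png" alt="Pricing table">'
AS_TABLE = """
<table>
  <tr><th>Plan</th><th>Monthly price</th></tr>
  <tr><td>Starter</td><td>$29</td></tr>
  <tr><td>Team</td><td>$99</td></tr>
</table>
"""

print(extract_rows(AS_IMAGE))  # []
print(extract_rows(AS_TABLE))
# [['Plan', 'Monthly price'], ['Starter', '$29'], ['Team', '$99']]
```

The image version yields nothing a text extractor can quote, while the table version exposes every plan and price as plain text.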

Building Off-Site Authority and Brand Consensus

ChatGPT does not view a website in isolation; it looks for consensus across the web to verify the trustworthiness of a source. This extends the "E-E-A-T" (Experience, Expertise, Authoritativeness, and Trustworthiness) principles familiar from traditional SEO.

The Role of Third-Party Mentions

McKinsey’s recent analysis suggests that only 5% to 10% of citations in AI overviews come from a brand’s own website. The remainder is drawn from third-party platforms including:

- Professional Networks: High-authority posts on LinkedIn.

- Community Forums: Reddit threads and Quora answers where real users discuss products.

- Industry Directories: Specialized listings such as G2, Capterra, or Yelp.

- Media Coverage: Mentions in reputable news outlets and trade publications.

If ChatGPT finds a brand mentioned across multiple independent sources, its "confidence score" in that entity increases, making it more likely to recommend that brand as a definitive solution.

Structured Data and Schema Markup

Implementing schema markup—specifically Organization, Article, and FAQPage schemas—acts as a translator for AI models. It reduces ambiguity by explicitly telling the engine what the content represents. While schema does not guarantee a top position, it removes friction, allowing the AI to categorize the content with higher precision.
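As one possible illustration, the Python sketch below builds FAQPage markup in JSON-LD using the schema.org vocabulary (`FAQPage`, `Question`, `Answer`, `mainEntity`, `acceptedAnswer`) and wraps it in a script tag for embedding in a page. The `faq_page_jsonld` helper and the sample question and answer are assumptions made for the example. Organization and Article markup follow the same general pattern with different properties.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a JSON-LD script tag for a FAQPage from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text cannot close the script tag early;
    # "<\/" is still valid JSON and decodes back to "</".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical FAQ entry used only for illustration.
snippet = faq_page_jsonld(
    [("What is AEO?", "Answer engine optimization (AEO) is the practice of "
      "structuring content so that AI assistants can cite it.")]
)
print(snippet)
```

The visible page should contain the same questions and answers as the markup, so that the structured data describes content a reader can actually see.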

Measuring Success in the AEO Era

The metrics for success in ChatGPT results differ significantly from traditional SEO. Marketers are now prioritizing "Zero-Click" metrics and "Share of Voice."

- Brand Visibility Score: This represents the percentage of time a brand is mentioned or cited when relevant prompts are entered into an LLM.

- Citation Analysis: By studying which competitors are being cited for specific prompts, companies can identify content gaps. If an AI consistently cites a competitor’s comparison page, it indicates a need for the brand to create its own authoritative comparison content.

- Sentiment and Recommendation Rate: Tools now track whether the AI recommends a product or merely mentions it. A "recommendation" carries significantly more weight in the buyer’s journey.
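The Brand Visibility Score described above can be approximated with a few lines of Python. This is a rough sketch under stated assumptions: the responses are a hand-collected sample of LLM answers to relevant prompts, "Acme CRM" is a made-up brand, and a mention is detected by a simple case-insensitive whole-phrase match. Commercial tracking tools likely use more sophisticated matching and much larger prompt sets.

```python
import re


def mentions(brand: str, response: str) -> bool:
    """True if the brand name appears as a whole phrase, ignoring case."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None


def brand_visibility_score(brand: str, responses: list[str]) -> float:
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    hits = sum(mentions(brand, response) for response in responses)
    return 100.0 * hits / len(responses)


# Hypothetical sample of answers collected for prompts about publisher CRMs.
sample_responses = [
    "For publishers, Acme CRM and HubSpot are strong options.",
    "Salesforce is the most common enterprise choice.",
    "We recommend Acme CRM for small editorial teams.",
    "Popular tools include HubSpot, Salesforce, and acme crm.",
]

print(brand_visibility_score("Acme CRM", sample_responses))    # 75.0
print(brand_visibility_score("Salesforce", sample_responses))  # 50.0
```

Computing the same score for each competitor over an identical prompt set gives a simple basis for the share-of-voice comparison and citation gap analysis mentioned in the list.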

Broader Implications for the Future of Search

The rise of AEO suggests a future where the "click-through rate" to websites may decline, while the "influence rate" within AI responses becomes the primary driver of brand awareness. For small teams, the focus must remain on high-impact tasks: maintaining a clean technical crawl, using schema markup, and ensuring that brand mentions are present on high-authority third-party sites.

Official responses from search providers like Bing and Google emphasize that they do not recommend creating separate "AI-only" versions of websites. Instead, the goal is a single, high-quality version of a page that serves both human intent and machine extraction. As AI continues to integrate into the daily workflow of the global workforce, the ability to show up in ChatGPT results will likely define the market leaders of the late 2020s. The strategy is no longer about gaming an algorithm, but about becoming the most credible, accessible, and cited answer in a brand’s specific field of expertise.