The digital landscape is undergoing a profound transformation with the rapid ascent of conversational AI search engines, tools poised to redefine how users interact with information online. In February 2024, Fast Company [1] highlighted this burgeoning trend, noting how these engines, powered by sophisticated large language models (LLMs), are capable of directly answering user queries by synthesizing and summarizing information drawn from across the Internet. This shift marks a significant departure from the established keyword-based search paradigm championed by Google for decades, igniting a wave of experimentation and fervent enthusiasm within academic and scientific research communities [2].

The Genesis of a New Search Paradigm

The current surge in conversational AI search capabilities is intrinsically linked to the broader rise of generative AI. Natural language processing has been applied to search for many years, but the public introduction of tools like ChatGPT in late 2022 was a pivotal moment: it democratized access to highly capable LLMs and showed their potential beyond simple chat. This "technology push" [3], driven by powerful new AI capabilities, has spurred an explosion of innovative applications, with search a primary frontier. Established companies and startups alike, recognizing the transformative potential, have rapidly developed and deployed their own conversational AI search platforms, aiming to capture a share of the vast global search market.

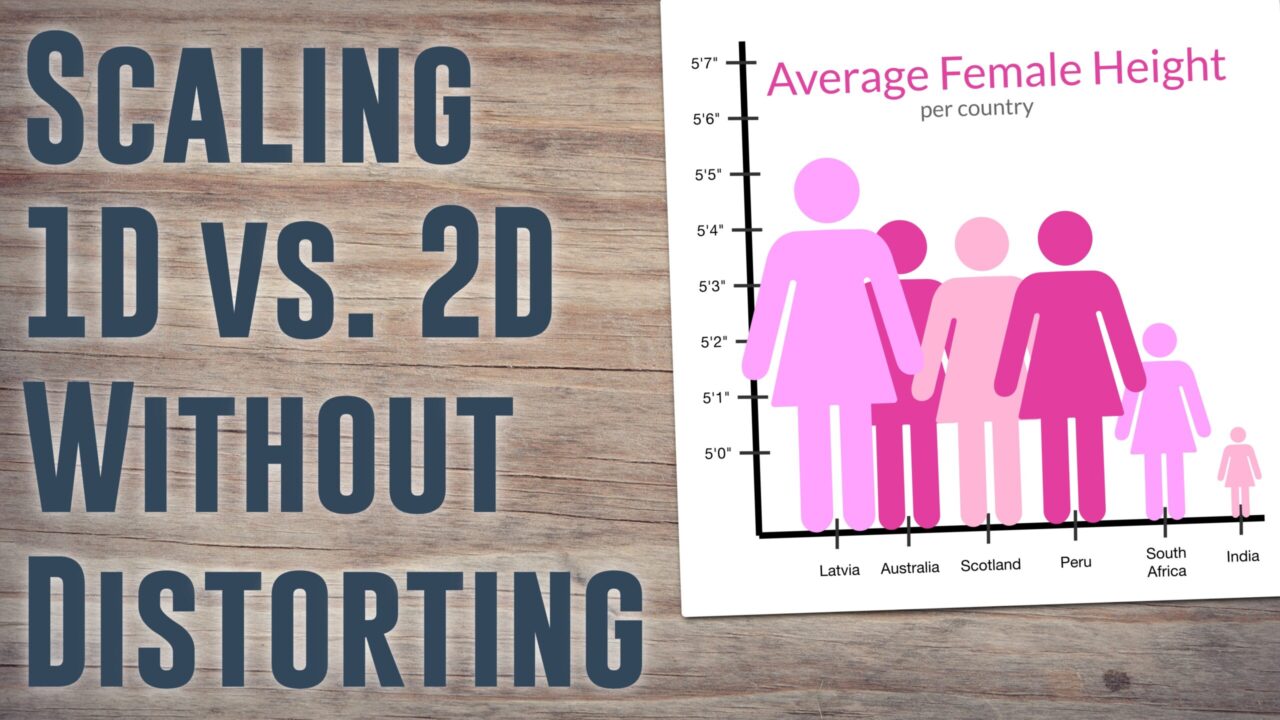

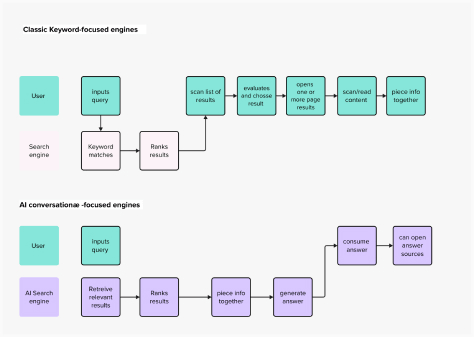

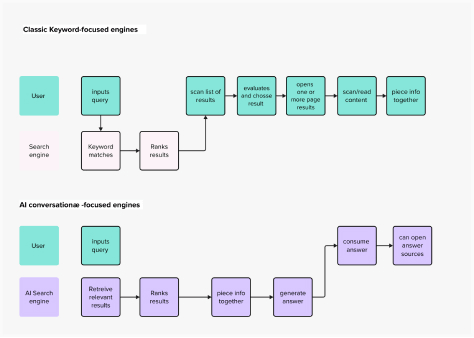

Traditional search engines have long relied on users formulating precise keyword queries, which return a list of links to relevant web pages. The user must then sift through these results, evaluate their relevance, and synthesize the desired information themselves. This model, while effective, often demands significant cognitive effort and time. Conversational AI search engines, however, promise a more direct route to answers, mimicking human dialogue and presenting collated, summarized information. This is not just an incremental improvement but a fundamental re-imagining of the search interaction, serving a need far older than the Web itself: the efficient acquisition of knowledge.

Understanding the Evolving Mental Model of Search

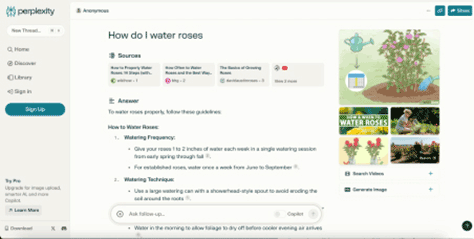

At first glance, platforms like Perplexity AI, a notable player in this space, retain familiar elements of classic search engines: a prominent input field for queries, a central display area for results, and supplementary information widgets. However, the underlying mental model for interaction is profoundly different. Instead of a query-and-link paradigm, users engage with these systems as they would a conversational chatbot. This mental model, solidified by the widespread adoption of generative AI applications, positions the AI as an intelligent interlocutor capable of understanding complex questions and providing comprehensive responses.

For instance, Perplexity AI’s homepage, as illustrated in Figure 1 of the original analysis, visually resembles a traditional search interface, but its output fundamentally alters the user’s journey. Rather than a list of blue links, it delivers a direct, synthesized answer compiled from multiple sources and often formatted like a web page or article excerpt. This design choice aims to drastically simplify information retrieval, eliminating the need for users to manually evaluate, click through, and scan numerous individual search results.

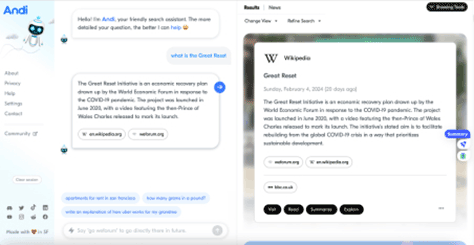

Another example, Andi, shown in Figure 3 of the original article, pushes this conversational interface further in its layout and interactions. While its visual presentation leans heavily into the chatbot aesthetic, its information architecture, as discussed later, maintains a closer resemblance to classic search in how it presents sources. This highlights a critical design tension within the new paradigm: how much to abstract the underlying information sources versus how much to empower users to engage with them directly.

This evolution is a classic example of a "technology push" innovation [3], where the availability of a powerful new technology—generative AI—drives the development of new digital experiences. However, the true measure of this evolution lies in its benefit to users. The mere availability of advanced technology does not automatically equate to superior user outcomes; a human-centered approach remains paramount to avoid strategic pitfalls.

Enhancing the User Experience: Speed and Simplification

Conversational AI search engines offer clear improvements in usability and interaction design. The primary enhancement lies in their ability to directly serve answers by collating and summarizing information from multiple sources. This directness bypasses the traditional multi-step process of evaluating search results, making educated guesses about content, and navigating to external pages to locate answers.

The user’s ultimate goal in both classic and AI-powered search remains identical: finding an answer within a text. The key differentiator is the AI’s capacity to perform the initial aggregation and synthesis, thereby reducing the user’s cognitive load. This approach aligns closely with the intuitive Q&A pattern inherent in human conversation, fulfilling a crucial usability heuristic: "Match between system and the real world" [4]. By mirroring natural dialogue, these tools feel more intuitive and accessible.

Beyond this fundamental Q&A alignment, AI-powered search engines can further enhance the user experience by adhering to other key usability heuristics outlined by Jakob Nielsen [4]:

- Visibility of System Status: While the original article doesn’t explicitly detail this, a well-designed AI search engine would provide clear indications of its processing, source retrieval, and summarization efforts.

- User Control and Freedom: Although the AI takes a proactive role, effective design must still allow users to refine queries, explore sources, and even "undo" certain AI-driven summarizations if they wish to delve deeper.

- Consistency and Standards: Maintaining consistent language, design patterns, and interaction models within the AI search interface helps users build a reliable mental model.

- Error Prevention: By anticipating user needs and directly providing answers, these systems can potentially prevent common search frustrations like dead links or irrelevant results.

- Recognition Rather Than Recall: Presenting synthesized answers directly reduces the burden on users to recall previous search strategies or specific keywords.

- Flexibility and Efficiency of Use: For experienced users, the ability to quickly obtain concise answers offers significant efficiency gains.

- Aesthetic and Minimalist Design: Many conversational AI interfaces prioritize clean, uncluttered designs, focusing on the dialogue interaction, which can contribute to a pleasant user experience.

- Help and Documentation: Clear explanations of how the AI functions and how to best formulate queries are essential for user adoption and trust.

These improvements suggest that AI-powered search engines are indeed "ticking the right boxes" for enhancing the typical search journey. However, this raises a more profound question: does improved usability automatically equate to a desirable future standard for information access?

Broader Implications: Trust, Explainability, and Human Agency

While the convenience of conversational AI search is undeniable, its widespread adoption carries significant implications for user trust, AI explainability, and potentially, human cognitive processes. When using platforms like Perplexity AI, users are delegating a substantial amount of decision-making to the AI. The system independently selects, extracts, and summarizes information from disparate sources it deems most relevant. A critical gap in this process is the lack of transparency regarding why certain sources were chosen over others, or how specific excerpts were weighted in the summarization. This neglect of "AI explainability" [5] directly impacts the trustworthiness of the system.

Francesca Rossi, IBM’s Global Ethics Leader, underscores this point in her article "Building Trust in Artificial Intelligence" [6], noting that AI raises concerns about its ability to make fair decisions, align with human values, and, crucially, "explain its reasoning and decision-making." In the enterprise world, these concerns are becoming pressing because of impending regulations and the prospect of substantial fines, reputational damage, and legal challenges. The everyday "Internaut", the habitual and often highly skilled Internet user, may not perceive these risks with the same gravity.

Sociologist Roberta Katz’s observation, "first you make the building and then the building makes you" [7], offers a compelling analogy. Just as physical architectures influence human behavior and emotion, the architectures of our digital tools profoundly shape their users. It is a reasonable hypothesis that the constant exposure to ready-made, authoritative-sounding answers from tools like Perplexity AI could habituate users to uncritically accept information, potentially diminishing their inclination to question accuracy or veracity.

While these tools often provide links to source documents, several critical questions remain unanswered:

- How were the sources selected, and what criteria were applied?

- How has the AI summarized and synthesized information, and what potential biases might be embedded in this process?

The first issue, concerning source selection, also exists in traditional search, where algorithms determine link rankings. However, the second issue—the opaque summarization process—is a unique challenge of generative AI, representing a deeper delegation of cognitive tasks. Even if these tools were to achieve full explainability, the sheer ease of the user experience could, in the long term, impact users’ critical thinking skills. This concern echoes previous debates, such as the alleged contribution of text messaging to a decline in formal writing proficiency.

From a usability perspective, the traditional act of sifting through Google’s search engine results page (SERP), comparing multiple articles, and piecing together information to form an answer is undoubtedly more laborious. Yet, these tasks serve as valuable exercises for analytical and critical faculties, fostering a degree of skepticism and independence from single authoritative sources. If the prevailing mental model of a search engine becomes one of an infallible oracle that always provides the "right" answer, the consequences for our collective ability to discern truth from falsehood could be immense, particularly in critical domains like policymaking, scientific research, and civil discourse. While searching for mundane items like trousers or stain removal tips might not demand high criticality, the implications for complex, high-stakes information retrieval are profound.

Furthermore, the rise of AI search poses an existential threat to the existing information ecosystem. Kevin Roose of The New York Times succinctly framed this challenge: "If AI search engines can reliably summarize what’s happening in Gaza or tell users which toaster to buy, why would anyone visit a publisher’s Web site ever again?" [8] This question highlights the potential for AI search to disintermediate content creators and publishers, drastically reducing their web traffic and, consequently, their revenue streams from advertising and subscriptions. This economic implication could fundamentally alter the landscape of online content creation, potentially leading to a decline in high-quality investigative journalism and specialized content if creators cannot monetize their work.

However, design choices can mitigate some of these concerns. Andi’s architecture, for instance, offers an alternative model. Despite its chatbot-like interface, its brief answer is often a direct snippet from a single source (e.g., Wikipedia), and, crucially, a prominent list of all source links appears on the left side of the page. This design retains more of the traditional SERP’s emphasis on direct source access, encouraging users to click through and engage with the original content. It demonstrates how user experience (UX) and user interface (UI) design can elicit more desirable user behaviors, fostering a healthier information ecosystem by promoting source engagement.

The Imperative of Trustworthy AI in Search’s Future

The central question is not whether AI will be the future of search—it almost certainly will be, given its capabilities to enhance user experience and accelerate knowledge acquisition. The more critical questions are:

- What kind of AI will shape the future of search?

- How can we design AI search engines that are both useful and responsible?

A report published in Nature [9] described conflicting views among researchers about AI science search engines: some found them extremely useful and accurate, while others distrusted them because of inconsistent retrieval performance. This underscores that trust is the central barrier to widespread adoption.

To foster trustworthy AI search, two key areas of focus are paramount:

- Technical and Algorithmic Solutions:

  - Retrieval-Augmented Generation (RAG): This technique [10] grounds LLM output in external documents retrieved at query time, which can substantially reduce, though not eliminate, "AI hallucinations" [11], in which a model generates plausible but false information. Because the response is generated from the retrieved documents, RAG improves accuracy and makes source attribution possible.

  - Improved Source Attribution and Transparency: Beyond simply listing links, AI search engines need to provide clearer mechanisms for users to understand how information was extracted and summarized from specific sources. This could involve highlighting specific sentences used from a source or providing confidence scores for generated facts.

  - Bias Detection and Mitigation: Algorithms must be developed to identify and counteract biases in both the training data and the summarization process, ensuring a more balanced and fair presentation of information.

  - Dynamic Fact-Checking and Verification: Integrating real-time fact-checking mechanisms and cross-referencing information against authoritative databases can further bolster accuracy.

  - Graph-based RAG: Advanced techniques like GraphRAG [12] can unlock deeper discovery on complex, narrative data, improving the comprehensiveness and accuracy of LLM outputs.

- User Experience and Ethical Design Principles:

  - Prioritizing Explainability in UI: Designers must innovate ways to visually and interactively convey the AI’s reasoning, source selection, and summarization logic to users. This could involve interactive elements that allow users to drill down into source material or understand the confidence level of a generated answer.

  - Fostering Critical Engagement: Design should not merely serve answers but also encourage critical thinking. This might involve prompting users to consider alternative viewpoints, providing tools for comparing information from different sources, or even explicitly flagging potentially controversial or rapidly evolving topics.

  - Empowering User Control: Users should have agency over the AI’s behavior, with options to customize summarization depth, prioritize certain types of sources (e.g., academic vs. journalistic), or easily switch between direct answers and traditional link lists.

  - Clear Disclosure of AI Involvement: Users should always be aware that they are interacting with an AI-generated summary, not a human-curated article.

  - Feedback Mechanisms: Allowing users to provide feedback on the accuracy, helpfulness, or perceived bias of AI-generated answers is vital for continuous improvement and building trust.
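The retrieve-then-generate loop behind RAG, together with the sentence-level attribution suggested under source transparency, can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not how Perplexity AI, Andi, or any particular engine works: retrieval here is naive word overlap (real systems typically use BM25 or dense embeddings), the LLM call is omitted and its answer is hard-coded, and the example.org URLs and the `Source`, `retrieve`, `build_prompt`, and `attribute` names are hypothetical.

```python
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Source:
    url: str  # hypothetical example URLs, for illustration only
    text: str


def tokens(text: str) -> Counter:
    """Bag of lowercase words; a deliberately naive stand-in for a real tokenizer."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def overlap(a: Counter, b: Counter) -> int:
    """Count of shared words (with multiplicity) between two bags of words."""
    return sum((a & b).values())


def split_sentences(text: str) -> list[str]:
    """Split on whitespace that follows '.', '!' or '?'."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def retrieve(query: str, corpus: list[Source], k: int = 2) -> list[Source]:
    """Retrieval step: keep the k sources sharing the most words with the query."""
    q = tokens(query)
    ranked = sorted(corpus, key=lambda s: overlap(q, tokens(s.text)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, sources: list[Source]) -> str:
    """Augmentation step: number the retrieved sources so the model can cite them as [n]."""
    lines = ["Answer using only the numbered sources below, citing them as [n].", ""]
    for i, src in enumerate(sources, start=1):
        lines.append(f"[{i}] {src.url}: {src.text}")
    lines += ["", f"Question: {query}"]
    return "\n".join(lines)


def attribute(answer: str, sources: list[Source]) -> list[tuple[str, str, str]]:
    """Attribution step: pair each answer sentence with its most-overlapping source sentence.

    Returns (answer sentence, source URL, supporting sentence) triples, i.e. the
    sentence an interface could highlight. Assumes `sources` is non-empty.
    """
    candidates = [(src.url, sent) for src in sources for sent in split_sentences(src.text)]
    pairs = []
    for sent in split_sentences(answer):
        words = tokens(sent)
        url, support = max(candidates, key=lambda c: overlap(words, tokens(c[1])))
        pairs.append((sent, url, support))
    return pairs


# Usage with a toy corpus (all URLs are hypothetical).
corpus = [
    Source("https://example.org/rag",
           "Retrieval-augmented generation grounds a language model in retrieved "
           "documents. It lets answers cite their sources."),
    Source("https://example.org/toasters", "Toasters brown bread using heating elements."),
    Source("https://example.org/hallucination",
           "A hallucination is plausible but false output from a language model."),
]
query = "How does retrieval-augmented generation reduce hallucination in a language model?"
top = retrieve(query, corpus)
prompt = build_prompt(query, top)  # would be sent to an LLM; that call is omitted here
answer = "Retrieval-augmented generation grounds the model in retrieved documents."  # stand-in LLM output
for sent, url, support in attribute(answer, top):
    print(f"{sent}\n  supported by {url}: {support}")
```

Swapping the overlap scorer for a proper retriever, or the hard-coded answer for a real model call, leaves the shape of the pipeline unchanged. The attribution step is the part that would let an interface highlight the exact source sentence behind each claim, rather than only listing links.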

As new regulations emerge globally to ensure safer and more ethical uses of AI, the question of whether a good search experience is also the right experience becomes increasingly pertinent. Within a context where users are delegating significant portions of their decision-making and information synthesis to LLMs, design efforts must extend beyond mere user-friendliness. The focus must broaden to encompass accuracy, trustworthiness, comprehensiveness, and the preservation of human critical thinking. This demands a nuanced approach that leverages the power of conversational AI without inadvertently undermining the foundational principles of informed inquiry and media literacy. The future of AI search engines hinges not just on technological prowess, but on a commitment to responsible and human-centric design.