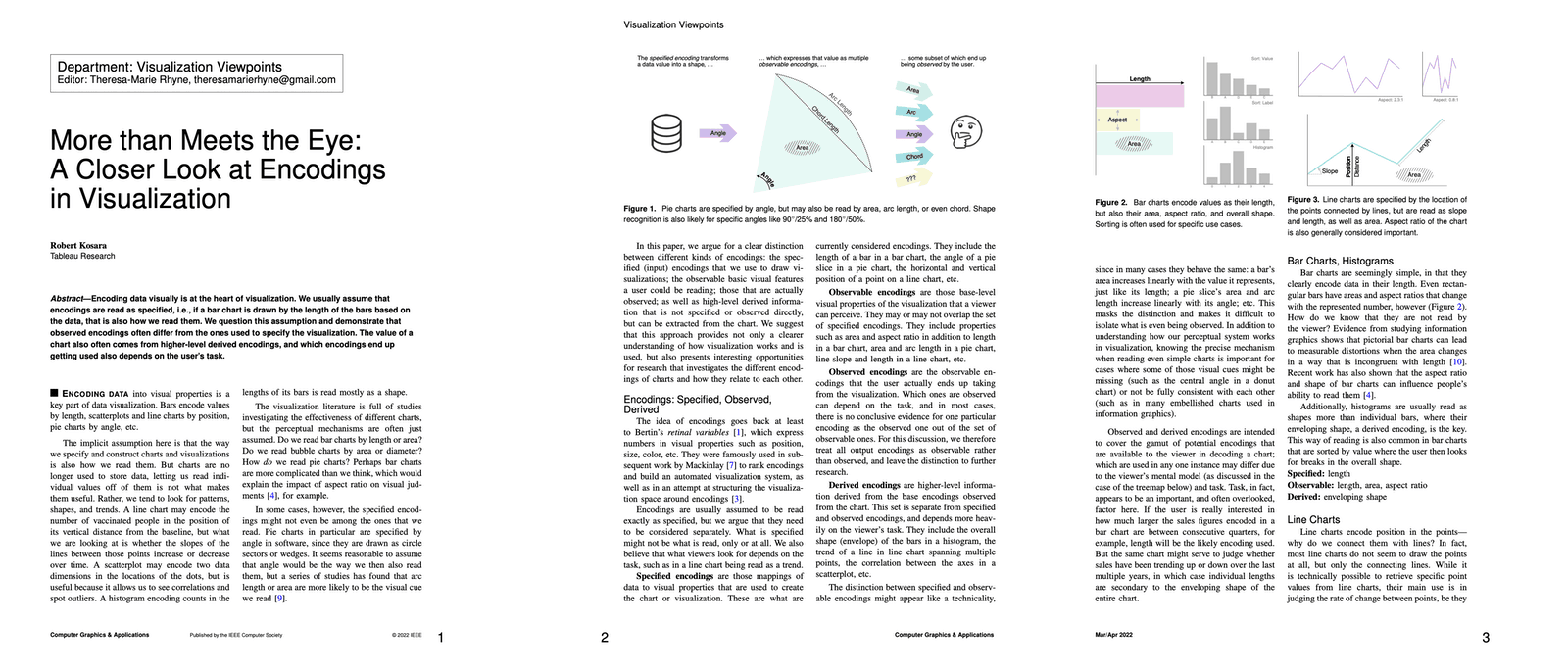

The fundamental principles of data visualization are undergoing a significant theoretical reassessment as researchers identify a critical gap between how charts are constructed and how they are perceived by the human eye. In a seminal paper published in the journal IEEE Computer Graphics and Applications (CG&A), Robert Kosara, a prominent figure in the field of data visualization research, argues that the current understanding of "encodings"—the mapping of data values to visual properties—is overly simplistic and fails to account for the complex cognitive processes involved in visual analysis. The research suggests that the traditional model, which assumes a one-to-one relationship between the parameters specified by a designer and the information extracted by a viewer, is insufficient for modern data science.

The Disconnect Between Specification and Perception

At the heart of Kosara’s research is the distinction between what he terms "specified encodings" and "observable encodings." For decades, the field has operated under the assumption that if a software program specifies a bar’s height to represent a value, the viewer simply reads that height. However, the paper, titled "More Than Meets the Eye: A Closer Look at Encodings in Visualization," posits that the visual system does not always prioritize the primary mathematical property used to generate the graphic.

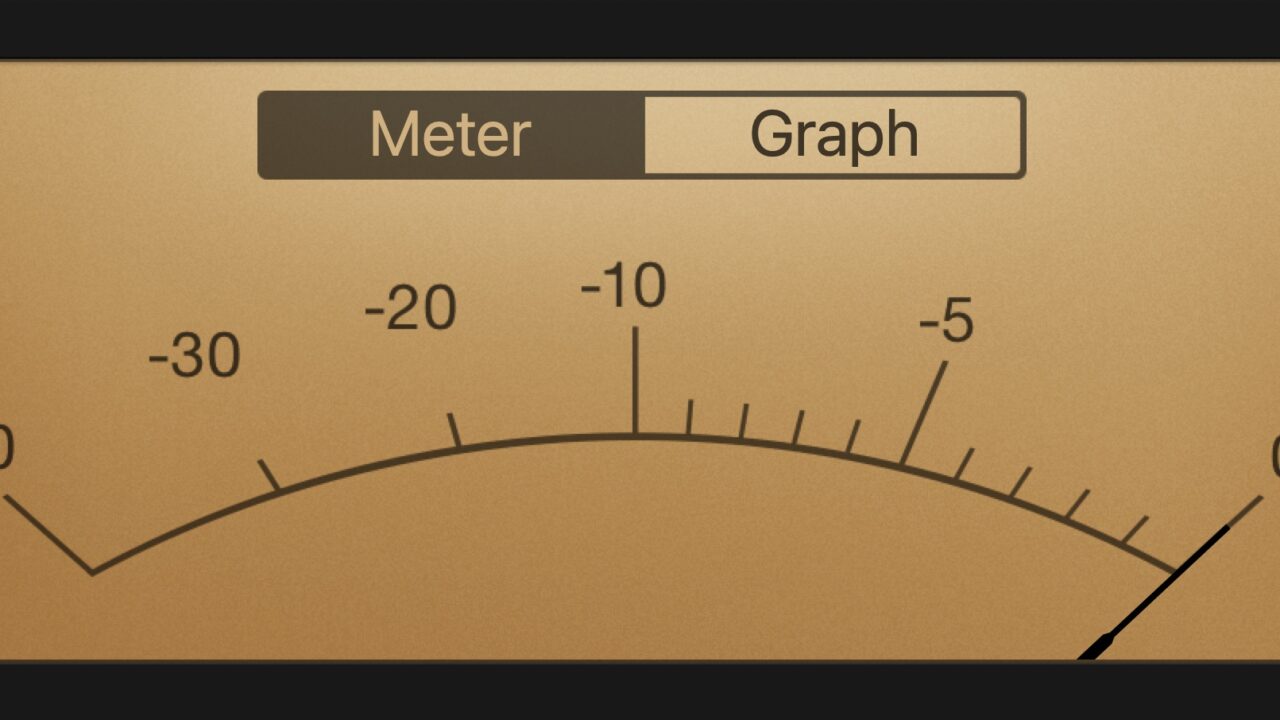

The pie chart serves as the primary case study for this disconnect. In most visualization libraries and software packages, a pie chart is mathematically specified by the angle of its wedges. However, Kosara’s extensive previous studies, including work from 2016 and 2019, indicate that human observers do not actually use angle as their primary cue for interpreting data. Instead, viewers may be responding to arc length, the area of the wedge, or even the chord length (the straight-line distance between the two points where the wedge meets the circle’s edge).

This distinction is not merely academic; it has profound implications for the accuracy of data communication. If a designer specifies a chart using one variable (angle) but the viewer reads another (area), any distortion in that secondary variable can lead to a fundamental misunderstanding of the data. This "observed encoding" represents the actual visual cue that the brain processes to reach a conclusion about the underlying numbers.

A Taxonomy of Visual Encodings

To address these complexities, the research introduces a more nuanced framework for categorizing how information is transmitted through graphics. This framework divides encodings into four distinct categories:

- Specified Encodings: These are the parameters defined by the software or the designer. For a bar chart, the specified encoding is typically the length or height of the bar. For a scatterplot, it is the x and y coordinates of the points.

- Observable Encodings: These are all the visual properties that change as a result of the specified encoding. In a bar chart, when the length changes, the area of the bar and the aspect ratio also change. These are all "observable" to the viewer, even if they were not the intended primary channel.

- Observed Encodings: This refers to the specific visual cue that a human observer actually utilizes to perform a task. Research suggests that for different individuals or different tasks, the observed encoding may vary even when looking at the same chart.

- Derived Encodings: These are higher-level interpretations that go beyond simple geometric properties. In a line chart, while the specified encodings are individual data points connected by segments, the viewer often interprets "trends," "slopes," or "volatility." These are derived from the aggregate shape of the data rather than the individual points.

Historical Context and the Evolution of Visualization Theory

The shift toward this multi-layered understanding of encodings marks a significant evolution from the foundational theories of the 20th century. To understand the weight of Kosara’s 2022 paper, one must look at the chronology of visualization science:

- 1967: Jacques Bertin publishes Sémiologie Graphique (Semiology of Graphics), providing the first systematic framework for visual variables like position, size, shape, and color. Bertin’s work laid the groundwork for the "specified" side of visualization.

- 1984: William Cleveland and Robert McGill conduct pioneering experiments on graphical perception. They established a hierarchy of tasks, noting that humans are most accurate at judging position on a common scale and least accurate at judging volume and color saturation.

- 2010s: The rise of automated visualization tools like Tableau and ggplot2 popularized the "Grammar of Graphics" (proposed by Leland Wilkinson). This approach focused heavily on the mapping of data to aesthetic attributes, largely assuming that the mapping was the end of the process.

- 2022: Kosara’s paper challenges the "Grammar of Graphics" era by arguing that the "grammar" only describes the "writing" of the chart, not the "reading" of it.

Analysis of Complex Visualizations: Treemaps and Line Charts

The limitations of current encoding models become even more apparent when examining complex, algorithmically driven visualizations. The treemap, a method for displaying hierarchical data using nested rectangles, is a primary example. When a "squarified" treemap algorithm is used, the software specifies the area of each rectangle to correspond with a data value. However, because the aspect ratios of these rectangles vary wildly to fit the container, the viewer’s ability to compare those areas is severely compromised.

In this scenario, the "specified" encoding is area, but the "observable" encodings include width, height, and aspect ratio. Because humans are notoriously poor at comparing the areas of rectangles with different aspect ratios, the "observed" encoding becomes a muddled combination of dimensions, often leading to incorrect data comparisons.

Line charts present a different challenge involving "derived encodings." While a line chart is mathematically a series of x-y coordinates, the human brain perceives it as a continuous shape. The "slope" of a line segment is a derived property—it is not explicitly in the data table but is interpreted by the viewer to understand the rate of change. Kosara argues that if we do not account for these derived encodings, we cannot truly understand how line charts function as a communication tool.

Industry Implications and Future Research

The implications of this research extend into the fields of user interface (UI) design, data journalism, and artificial intelligence. As AI systems are increasingly tasked with generating automated reports and dashboards, they rely on the simplistic "specified encoding" model. If these systems are not programmed to understand the "observed" and "derived" encodings, they may inadvertently produce technically accurate but perceptually misleading visualizations.

Furthermore, this research has significant consequences for accessibility in data science. For individuals with visual impairments or cognitive differences, the "observed encoding" may differ significantly from the norm. By identifying the multiple ways a single chart can be read, designers can create more redundant and robust visualizations that communicate effectively across diverse audiences.

While the academic community has generally received Kosara’s framework as a necessary "reality check" for the field, some practitioners note the difficulty of implementation. "The challenge," notes one industry analyst, "is that specified encodings are easy to code. Observed encodings are messy and depend on human psychology, which is much harder to quantify in a software library."

Conclusion: A New Direction for Data Literacy

Robert Kosara’s work suggests that the field of data visualization is only "scratching the surface" of understanding the relationship between data, light, and the human mind. The paper calls for a shift in research focus from the creation of new chart types to a deeper investigation into the mechanics of visual perception.

As data becomes the primary currency of the modern information economy, the ability to read and write visual data accurately—data literacy—becomes a vital skill. By distinguishing between what is drawn and what is seen, this research provides a roadmap for more honest and effective data communication. The visualization community now faces the task of integrating these psychological insights into the tools and best practices that define how the world views its data.

The paper, "More Than Meets the Eye: A Closer Look at Encodings in Visualization," serves as a definitive argument that the future of the field lies not in more complex algorithms, but in a more sophisticated understanding of the human observer. As the transition from "drawing" to "understanding" continues, the distinction between specified and observed encodings will likely become a cornerstone of future visualization pedagogy.