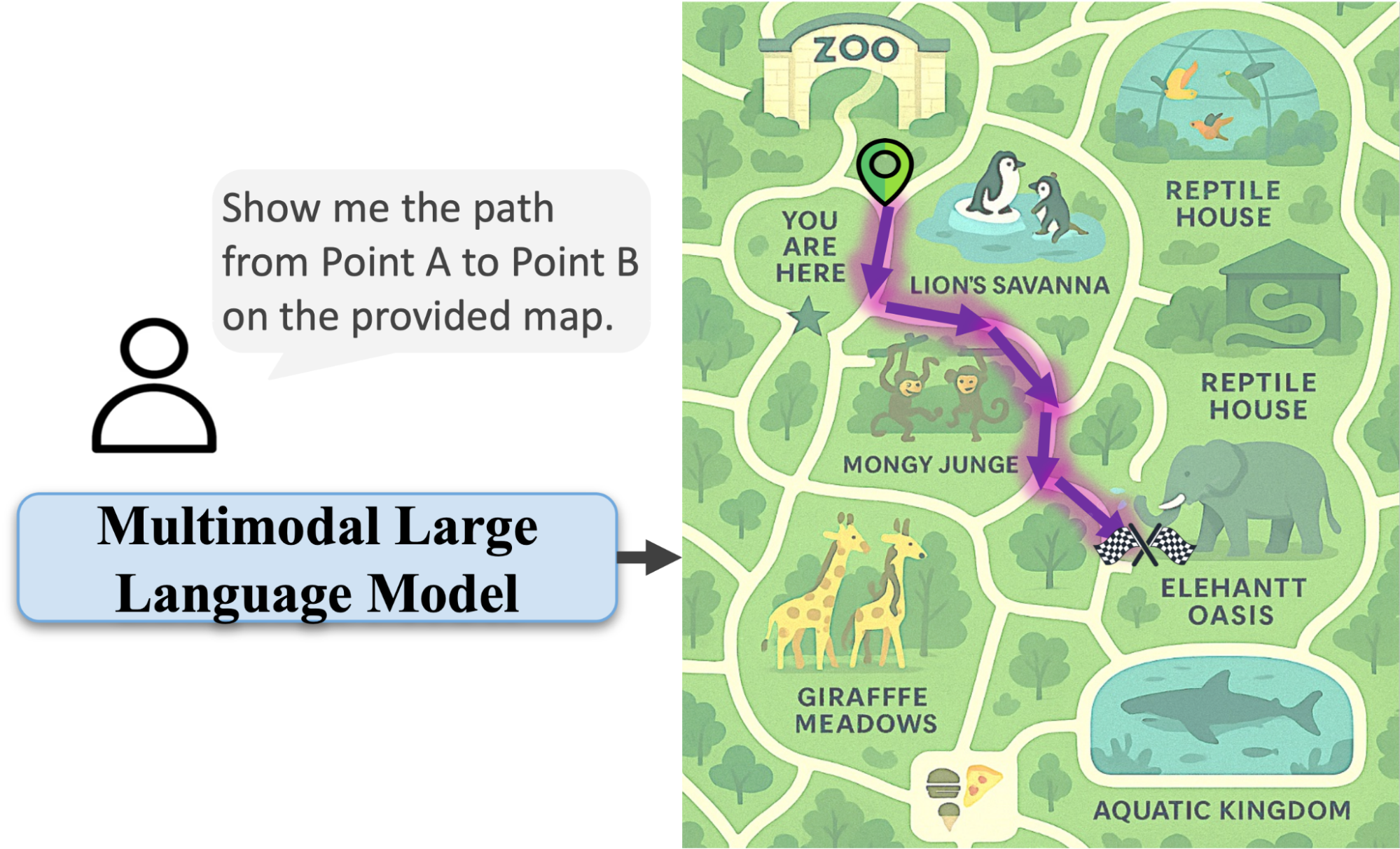

Google researchers have unveiled a groundbreaking method to imbue multimodal large language models (MLLMs) with a crucial yet often lacking capability: sophisticated spatial reasoning for navigating and interpreting maps. The new initiative, detailed in the research paper "MapTrace: Scalable Data Generation for Route Tracing on Maps," introduces a novel task, an extensive dataset, and an automated synthetic data generation pipeline designed to teach MLLMs the fundamental skill of tracing valid paths. This development addresses a significant gap in current AI, where models excel at object recognition but falter when understanding the geometric and topological relationships essential for real-world navigation.

The impetus for this research stems from a fundamental observation: while advanced AI models can readily identify objects and scenes within images, their ability to understand spatial constraints and connectivity remains rudimentary. For instance, an MLLM might correctly identify a zoo and list its inhabitants but would struggle to draw a coherent path from the entrance to the reptile house on a zoo map, potentially tracing lines through enclosures or gift shops. This disconnect highlights that current MLLMs are adept at visual recognition but lack the ingrained understanding of physical environments that humans possess intuitively.

This deficiency can be likened to a painter who can meticulously replicate individual brushstrokes but lacks the conceptual understanding of composition and perspective to create a cohesive landscape. The human brain effortlessly processes visual information on a map, instantly identifying locations, discerning traversable pathways from solid barriers, and charting optimal routes. This inherent fine-grained spatial reasoning is a cornerstone of human cognition, enabling us to navigate complex environments from bustling shopping malls to intricate theme park layouts.

The researchers pinpoint the root cause of this AI limitation: a deficit in training data that explicitly teaches navigational rules. MLLMs learn from vast quantities of images and text, forging associations between words like "path" and visual representations of sidewalks or trails. However, they are rarely exposed to data that systematically illustrates concepts like path connectivity, the inviolability of walls, or the sequential nature of route planning.

The most intuitive solution—collecting a massive, meticulously annotated dataset of maps with millions of hand-drawn paths—is, as the researchers acknowledge, "practically impossible" due to the sheer labor involved in pixel-level annotation and the prohibitive cost of scaling such an effort. Furthermore, many of the most complex and illustrative maps, such as those found in proprietary commercial establishments like shopping malls, museums, and theme parks, are not readily accessible for academic research. This "data bottleneck" has historically constrained the development of AI systems capable of robust spatial comprehension.

Pioneering a Scalable Solution: The MapTrace Pipeline

To surmount these obstacles, the Google team engineered a sophisticated, fully automated, and scalable pipeline. This system leverages the advanced generative capabilities of models like Gemini and Imagen to produce diverse, high-quality maps complete with accurately traced paths. The pipeline offers granular control over data complexity and diversity, ensuring that generated paths adhere to intended routes and strictly avoid non-traversable areas, all without the need for real-world map collection.

The MapTrace pipeline operates through four distinct, automated stages, employing AI models not only as creators but also as quality assurance mechanisms:

Stage 1: Generative Map Creation

The process begins with a large language model (LLM) that crafts rich, descriptive text prompts. These prompts can range from "a map of a bustling zoo with interconnected animal habitats" to "a sprawling shopping mall featuring a central food court and distinct retail zones" or "an imaginative theme park with winding paths through varied thematic lands." These detailed textual descriptions are then fed into a text-to-image generation model, such as Imagen, which renders them into intricate and visually diverse map images. This initial stage is crucial for establishing the varied environments and structural complexities that MLLMs will later learn to navigate.

Stage 2: Identifying Traversable Areas with an AI "Mask Critic"

Once a map image is generated, the next critical step is to delineate all "walkable" areas. The pipeline achieves this by employing a pixel-clustering technique based on color, which generates candidate path masks—essentially, binary representations highlighting potential pathways. However, not all identified shaded regions represent valid traversable routes. To ensure accuracy, a multimodal large language model (MLLM) acts as a "Mask Critic." This AI evaluator scrutinizes each candidate mask in conjunction with the original map image. It assesses whether the mask represents a realistic, interconnected network of paths, looking for features consistent with walkways, sidewalks, or designated pedestrian routes. Masks deemed to contain predominantly valid traversable regions are then classified as high-quality and passed to the subsequent stage.

Stage 3: Constructing a Navigable Graph

With a refined mask of traversable areas, the system transforms the 2D image into a structured graph representation. This process is analogous to creating a digital blueprint of a transportation network, where intersections are designated as nodes and the pathways connecting them as edges. This "pixel-graph" digitally encodes the map’s connectivity, facilitating computational route calculation and providing a framework for pathfinding algorithms.

Stage 4: Generating Optimal Paths with an AI "Path Critic"

The final stage involves generating and validating the actual paths. For each map, thousands of random start and end points are sampled from the navigable graph. A well-established algorithm, Dijkstra’s algorithm, is then employed to compute the shortest possible path between each pair of points. To ensure the generated paths are not only mathematically optimal but also intuitively logical and visually plausible, another MLLM serves as a "Path Critic." This critic evaluates the generated path overlaid on the map image, providing a final quality assessment. This rigorous review ensures that routes are sensible, remain within designated pathways, and resemble paths a human would realistically choose.

This comprehensive pipeline has enabled the creation of a substantial dataset, "MapTrace," comprising 2 million annotated map images with valid, verified paths. While acknowledging that occasional typographic errors might appear in the generated images, the research team emphasizes that the primary focus has been on path fidelity. They express confidence that ongoing advancements in generative modeling will naturally address and mitigate such artifacts in future iterations.

Demonstrating Efficacy: Empirical Results and Performance Gains

The critical question remained: does training MLLMs on this synthetically generated data translate into tangible improvements in spatial reasoning? To answer this, the researchers fine-tuned several MLLMs, including the open-source Gemma 3 27B and Gemini 2.5 Flash, on a subset of 23,000 generated paths. These fine-tuned models were then evaluated on MapBench, a widely recognized benchmark comprising real-world maps not encountered during the training phase.

Performance was measured using the normalized dynamic time warping (NDTW) metric, an extension of dynamic time warping designed to compare sequences of coordinates, accounting for variations in speed or sampling rate. NDTW quantifies the error between a reference path and a model-generated path, with lower values indicating superior performance. The researchers provided a detailed explanation of the NDTW computation, illustrating how it aligns coordinate sequences to minimize discrepancies, even when sampling rates or temporal offsets differ.

The results of the fine-tuning were significant and far-reaching. The Gemini 2.5 Flash model, after fine-tuning, exhibited a substantial reduction in NDTW error, decreasing from 1.29 to 0.87, and achieved the highest overall performance on the benchmark. This improvement signifies a marked increase in the accuracy of path tracing.

Beyond mere accuracy, the models demonstrated a marked increase in reliability. The success rate—the percentage of times a model generated a valid, parsable path—saw a notable increase across all tested models. The Gemma model, for instance, experienced a 6.4 percentage point rise in its success rate, alongside an improved NDTW score (from 1.29 to 1.13). This dramatic improvement underscores a newfound robustness, indicating that the models were not only more precise when they succeeded but also significantly less prone to outright failure after training on the MapTrace dataset.

These findings strongly validate the researchers’ central hypothesis: fine-grained spatial reasoning is not an inherent characteristic of MLLMs but rather a skill that can be acquired through targeted training. The success of the MapTrace pipeline demonstrates that even synthetically generated data, when designed with explicit supervision of spatial relationships, can effectively teach AI models to understand and navigate complex spatial layouts.

Evaluating the AI Critics: Accuracy and Limitations

A key component of the MapTrace pipeline is the reliance on AI models as critics to ensure data quality. The researchers conducted an evaluation of these critics’ performance. For the Path Critic, a manual review of 120 decisions across 56 randomly sampled maps revealed an accuracy of 76% with an 8% false-positive rate (where invalid paths were incorrectly labeled as high-quality). The primary sources of error were identified as misclassifying background regions with similar colors to paths and failing to detect thin but valid paths within broader open areas.

The Mask Critic was assessed by inspecting 200 judgments over 20 maps, achieving 83% accuracy and a 9% false-positive rate. Common errors for the Mask Critic included the inclusion of background pixels due to color similarity, the absorption of small non-path elements like text into otherwise correct masks, and the misclassification of thin but valid paths as invalid. While these error rates indicate room for further refinement, the overall accuracy of the AI critics is considered high enough to generate a dataset of sufficient quality for training MLLMs.

Broader Implications and Future Directions

The ability to imbue AI models with robust spatial reasoning for map interpretation and navigation opens a vast array of potential applications. These include, but are not limited to:

- Enhanced Navigation Systems: Future GPS and navigation apps could offer more intuitive and context-aware routing, accounting for nuanced environmental details and user preferences beyond simple shortest-path calculations.

- Robotics and Autonomous Systems: Robots and autonomous vehicles could navigate complex, indoor environments like warehouses, factories, or hospitals with greater precision and adaptability, understanding the spatial constraints of their surroundings.

- Virtual and Augmented Reality: Immersive virtual and augmented reality experiences could become more realistic and interactive, with AI agents capable of understanding and responding to the spatial dynamics of virtual environments.

- Urban Planning and Design: AI tools could assist in analyzing urban layouts, identifying optimal pedestrian flow, and simulating the impact of design changes on navigability.

- Accessibility Tools: More sophisticated accessibility tools for individuals with visual impairments could be developed, providing richer, more understandable spatial information about their surroundings.

- Logistics and Supply Chain Optimization: AI could optimize delivery routes and warehouse management by understanding the intricate spatial relationships within complex logistical networks.

The researchers acknowledge that the "MapTrace" dataset, while extensive, may still exhibit occasional typographic errors in generated images. However, they are optimistic that ongoing advancements in generative modeling will address these artifacts in future iterations. The open-sourcing of the 2 million question-answer pairs generated by this pipeline, utilizing Gemini 2.5 Pro and Imagen models, is a significant contribution to the research community. This initiative aims to foster further exploration and innovation in the critical domain of AI spatial reasoning.

The work by Panagopoulou, Goyal, Yazdani, Dubost, Chai, Kulshrestha, and Purohit marks a significant step forward in bridging the gap between AI’s perceptual capabilities and its understanding of the physical world. By developing a scalable method for generating targeted training data, they have demonstrated that even complex cognitive skills like fine-grained spatial reasoning can be explicitly taught, paving the way for more intelligent and capable AI systems.

Acknowledgments: This research was conducted by Artemis Panagopoulou (while working as a Student Researcher at Google), Mohit Goyal, Soroosh Yazdani, Florian Dubost, Chen Chai, Achin Kulshrestha, and Aveek Purohit.