Data visualization principles have long held that charts must follow strict geometric and mathematical rules to ensure clarity and prevent distortion. For decades, the consensus among cartographers, statisticians, and designers has been that the height of a bar should grow with the underlying value. However, a series of high-profile debates within the data science community, sparked by unconventional charts in fitness and audio engineering software, suggests that "semantic meaningfulness" may matter more than rigid adherence to traditional visualization dogma. This shift reflects a growing recognition that user expertise and the specific context in which data is used can justify, and even necessitate, breaking standard visualization rules.

The Catalyst: The Under Armour Running Pace Controversy

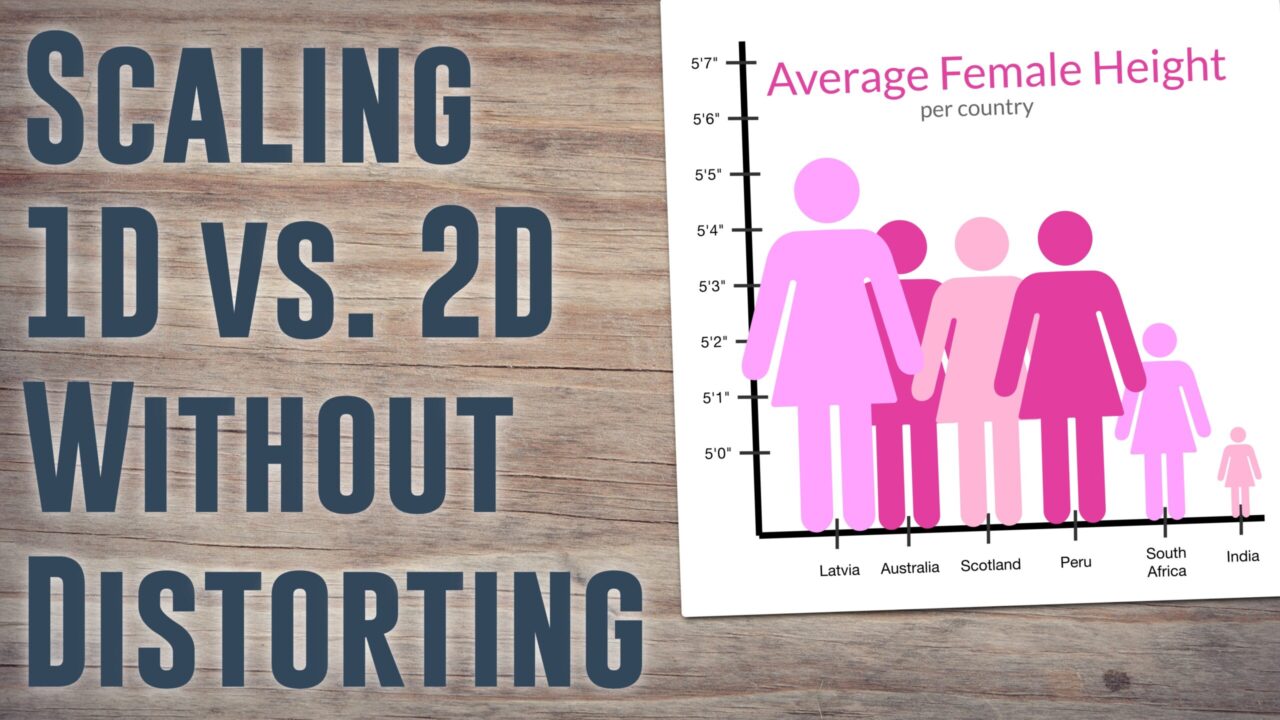

The contemporary discussion of inverted data axes gained significant momentum in June 2021. The debate was ignited by a social media post from Andy Kirk, a prominent data visualization consultant and editor of Visualising Data. Kirk highlighted a chart from the Under Armour fitness ecosystem that displayed a runner’s kilometer splits. At first glance, the chart appeared to violate two core rules of graphical integrity: the vertical axis was inverted, with values decreasing as the bars ascended, and the baseline did not start at zero.

The visualization displayed pace, a metric ubiquitous in the running community but often misunderstood by the general public. Unlike speed, which is distance over time (such as kilometers per hour), pace is time over distance (minutes per kilometer or mile). This distinction is vital for human-scale performance tracking. For a marathoner, a difference of ten seconds per kilometer is a significant shift in effort, whereas the corresponding change in kilometers per hour is a fraction of a unit and difficult to track mentally during physical exertion.
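The arithmetic behind that comparison can be checked with a short sketch. The function name `pace_to_speed_kmh` and the 5:00 and 4:50 example paces are illustrative assumptions, not values taken from the Under Armour chart.

```python
def pace_to_speed_kmh(minutes: int, seconds: int) -> float:
    """Convert a pace in min:sec per kilometer to a speed in km/h."""
    total_seconds = minutes * 60 + seconds
    if total_seconds <= 0:
        raise ValueError("pace must be positive")
    # One kilometer takes total_seconds, so an hour (3600 s) covers this many km.
    return 3600 / total_seconds


# Illustrative paces (assumed, not from the article's chart).
base = pace_to_speed_kmh(5, 0)     # 5:00 per km
faster = pace_to_speed_kmh(4, 50)  # 4:50 per km, ten seconds quicker
difference = faster - base         # well under 1 km/h
```

Under these assumptions, a ten-second improvement at 5:00 per kilometer changes the speed by less than half a kilometer per hour, which is why runners tend to reason in pace.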

The mathematical irony of pace is that as a runner becomes faster, the numerical value of their pace decreases. A 4:00 minute-per-kilometer pace is significantly faster than a 5:00 minute-per-kilometer pace. In a standard bar chart, the 5:00 pace would produce a taller bar, visually suggesting "more" or "better" performance. However, for an athlete, "more" performance is represented by a "lower" number. To align the visual stimulus with the psychological experience of running, developers at Under Armour and other fitness giants like Strava and Garmin have opted to invert the Y-axis. In these applications, the fastest splits result in the tallest bars, creating a visual "peak" for the runner’s highest effort.
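A minimal sketch of that inversion, assuming paces are stored as seconds per kilometer, might look like the following. The function `bar_heights`, its parameters, and the sample splits are hypothetical; none of the apps mentioned publish their charting code.

```python
def bar_heights(paces_s, slowest_s, fastest_s, max_height=100.0):
    """Map paces (seconds per km) to bar heights on an inverted axis.

    The axis does not start at zero: `slowest_s` sits on the baseline
    (height 0) and `fastest_s` reaches `max_height`.
    """
    if slowest_s <= fastest_s:
        raise ValueError("slowest_s must be a larger number than fastest_s")
    span = slowest_s - fastest_s
    # A smaller pace value (a faster split) yields a taller bar.
    return [max_height * (slowest_s - p) / span for p in paces_s]


# Hypothetical splits: 5:00, 4:45, 4:30 and 4:00 per kilometer.
splits = [300, 285, 270, 240]
heights = bar_heights(splits, slowest_s=330, fastest_s=240)
```

With these made-up values the 4:00 split produces the tallest bar, so the visual peak coincides with the fastest kilometer.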

Chronology of the Visualization Debate

The timeline of this industry-wide reflection reveals a slow-building tension between academic visualization standards and practical user interface (UI) design:

- Early 2010s: The rise of GPS-enabled wearable technology (Garmin, Suunto) leads to the standardization of "pace" over "speed" in consumer fitness interfaces.

- 2015–2018: Strava and MapMyRun (owned by Under Armour) solidify the use of inverted line and bar charts. While purists critiqued the lack of zero-baselines, user retention data suggested that athletes found these "distorted" charts more intuitive.

- June 4, 2021: Andy Kirk’s tweet regarding the Under Armour split chart goes viral, prompting a divide between data purists and domain experts.

- Late June 2021: Data visualization researchers, including Robert Kosara, publish analyses defending the use of "semantically meaningful" distortions, arguing that the user’s mental model should dictate the chart’s form.

- 2022–Present: Major analytics platforms begin introducing "context-aware" charting options, allowing for non-linear and inverted scales in specialized professional dashboards.

Technical Analysis: Why "Higher" Does Not Always Mean "More"

To understand why professional applications break visualization rules, one must analyze the cognitive load placed on the user. In the case of running data, if an application used a "correct" bar chart where shorter bars represented faster splits, the runner would have to perform a two-step mental translation: first, identifying the numerical value, and second, inverting that value to understand the quality of the performance. By inverting the axis, the designer removes this cognitive hurdle.

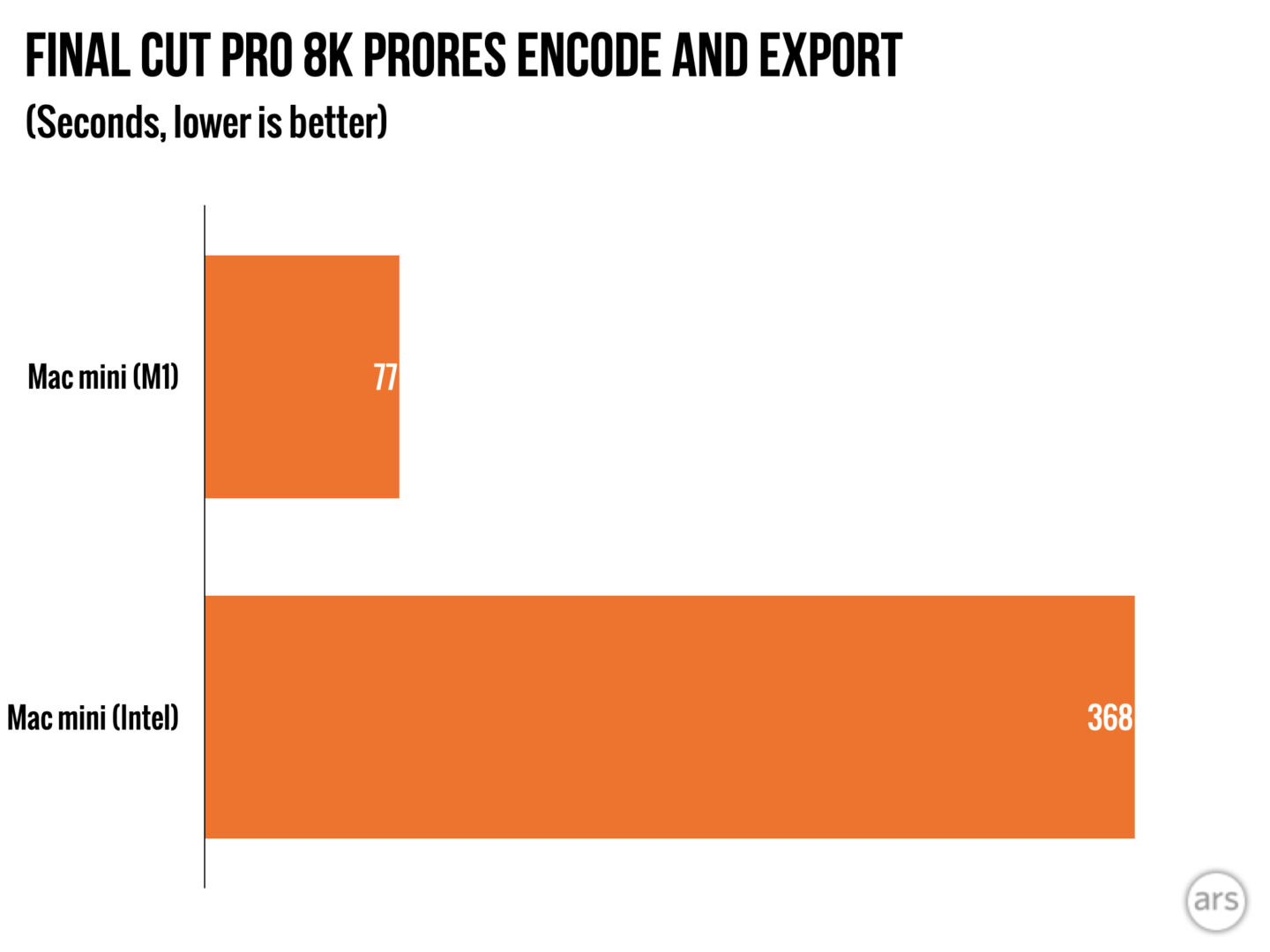

This phenomenon is also prevalent in performance benchmarking for hardware. In reviews of central processing units (CPUs) or graphics cards, benchmarks often measure the time taken to complete a specific task, such as rendering a 3D image. A lower time indicates a superior processor. Publications like Ars Technica frequently include annotations stating "Lower is Better" or "Higher is Better" because a standard bar chart cannot inherently convey whether the growth of a bar represents an improvement or a regression without the context of the metric.
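One way to keep that context attached to the data is to store the direction of improvement alongside the metric. The sketch below is an assumption about how this could be modeled; the `Metric` class, the `rank` helper, and the benchmark numbers are all invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    unit: str
    lower_is_better: bool

    def axis_label(self) -> str:
        """Build an axis label that states which direction is an improvement."""
        direction = "Lower is Better" if self.lower_is_better else "Higher is Better"
        return f"{self.name} ({self.unit}), {direction}"


def rank(results: dict[str, float], metric: Metric) -> list[str]:
    """Return entry names ordered from best to worst for the given metric."""
    return sorted(results, key=results.get, reverse=not metric.lower_is_better)


# Made-up render times in seconds; not real benchmark results.
render_time = Metric("Render time", "s", lower_is_better=True)
results = {"CPU A": 41.2, "CPU B": 36.8, "CPU C": 52.5}
label = render_time.axis_label()
best_first = rank(results, render_time)
```

The chart itself stays a plain bar chart. The label and the ordering carry the semantic information that the bar length alone cannot.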

Beyond Fitness: Non-Linear Scaling in Audio Engineering

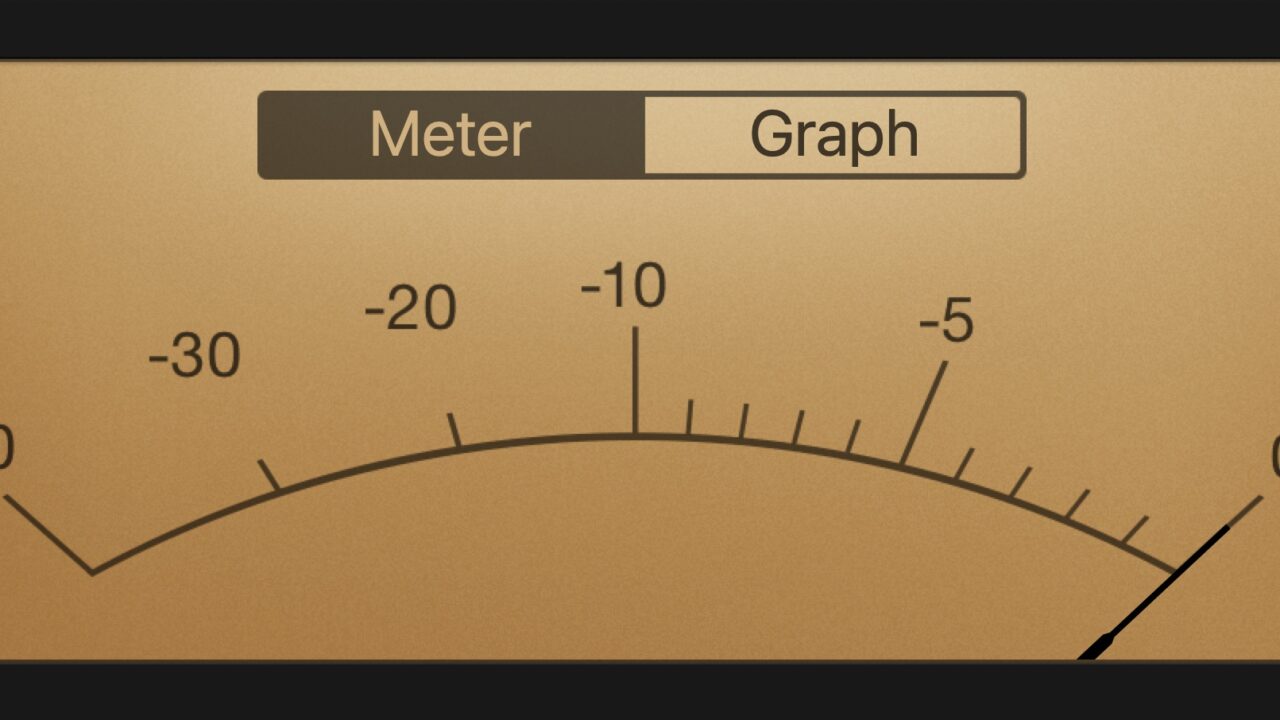

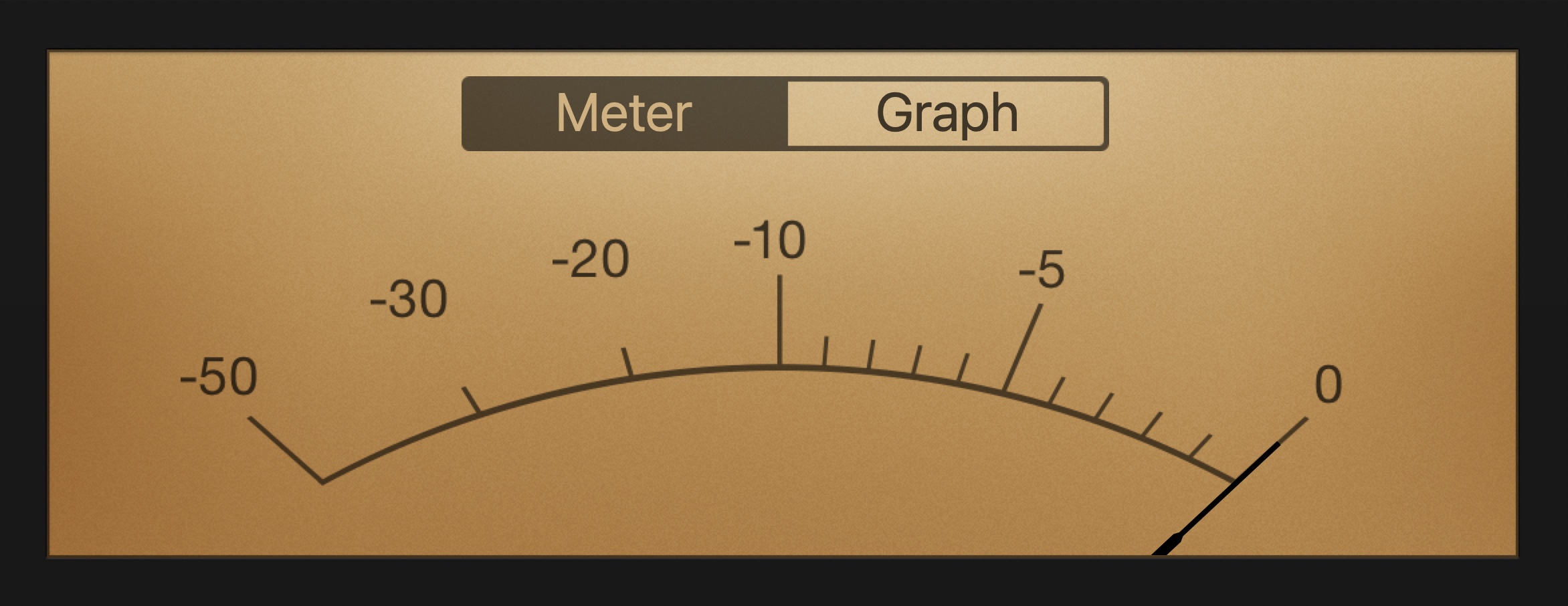

The defiance of traditional visualization rules is perhaps most sophisticated in the realm of audio recording and processing. Professional audio software, such as iZotope RX and Logic Pro, and hardware interfaces like those produced by MOTU (Mark of the Unicorn) use axes that would be considered "broken" by standard charting conventions.

In audio engineering, signal level is measured in decibels (dB), a logarithmic unit. However, a meter rarely spaces dB values evenly along its length. In many digital audio workstations (DAWs), the scale ranges from negative infinity (-∞), which denotes silence, up to zero. The "zero" point represents the digital ceiling; exceeding this point results in "clipping," a harsh distortion that ruins audio quality.
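The reason the bottom of the scale is -∞ follows from the logarithm. As a minimal sketch, assuming sample amplitudes normalized so that full scale is 1.0 and levels expressed relative to that ceiling (the dBFS convention), the conversion could be written as follows. The function name `amplitude_to_dbfs` is an illustrative choice.

```python
import math


def amplitude_to_dbfs(amplitude: float, full_scale: float = 1.0) -> float:
    """Level of a linear amplitude relative to full scale, in dB."""
    ratio = abs(amplitude) / full_scale
    if ratio == 0:
        # log10(0) is undefined; silence is conventionally shown as -infinity.
        return -math.inf
    # 20 * log10 is the usual factor for amplitude (not power) ratios.
    return 20 * math.log10(ratio)


ceiling = amplitude_to_dbfs(1.0)  # the digital ceiling
half = amplitude_to_dbfs(0.5)     # roughly 6 dB below the ceiling
silence = amplitude_to_dbfs(0.0)  # negative infinity
```

Any amplitude above full scale would produce a positive value here, which corresponds to the clipping region the meter is designed to warn about.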

Technical analysis of these interfaces reveals two primary "distortions":

Segmented Linear Scaling

On many professional meters, the space between -12 dB and 0 dB is visually much larger than the space between -60 dB and -48 dB, even though the numerical difference is the same. This is because the "critical range" for an engineer is the headroom near the 0 dB limit. Providing more visual real estate to this narrow range allows for precise adjustments where they matter most.
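A piecewise-linear mapping is one plausible way to build such a meter. The breakpoints below are hypothetical and chosen only to illustrate the idea; real products use their own tables, which are not documented here.

```python
from bisect import bisect_right

# Hypothetical breakpoints: (level in dB, fraction of meter height).
BREAKPOINTS = [(-60.0, 0.0), (-48.0, 0.05), (-24.0, 0.25), (-12.0, 0.5), (0.0, 1.0)]


def meter_position(level_db: float) -> float:
    """Map a level in dB to a 0..1 meter position, linear within each segment."""
    if level_db <= BREAKPOINTS[0][0]:
        return 0.0  # at or below the floor, including -infinity
    if level_db >= BREAKPOINTS[-1][0]:
        return 1.0  # at or above the ceiling
    levels = [db for db, _ in BREAKPOINTS]
    i = bisect_right(levels, level_db)  # index of the segment's upper breakpoint
    (db0, pos0), (db1, pos1) = BREAKPOINTS[i - 1], BREAKPOINTS[i]
    return pos0 + (pos1 - pos0) * (level_db - db0) / (db1 - db0)


# Two ranges with the same 12 dB width occupy very different shares of the meter.
top_span = meter_position(0.0) - meter_position(-12.0)
bottom_span = meter_position(-48.0) - meter_position(-60.0)
```

With this assumed table, the top 12 dB take up half of the meter while the bottom 12 dB take up one twentieth of it, which mirrors the emphasis on headroom described above.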

The Double Infinity Scale

In spectral analysis software, researchers often encounter scales that read -∞ at a center line and rise to 0 toward both the top and the bottom of the display. This represents the signal’s excursion above and below the zero-crossing of an electrical waveform. Infinity cannot be drawn to scale, so these interfaces use -∞ as a symbolic baseline that indicates the absence of signal, prioritizing the engineer’s need to see the "shape" of the silence versus the "shape" of the sound.

Industry Reactions and Expert Perspectives

The debate has forced a reconciliation between two schools of thought: the "Tuftean" purists and the "Functionalist" practitioners. Edward Tufte, a pioneer in the field of data visualization, famously advocated maximizing the "data-ink ratio" and avoiding "chartjunk" and misleading scales. From a strict Tuftean perspective, an inverted Y-axis that does not start at zero is a "lie."

However, practitioners argue that the "lie" serves a higher truth. In a thread responding to the Under Armour controversy, designers suggested that the "correct" way to display the data might be to anchor bars on an average pace rather than a zero baseline. This would show "positive" or "negative" splits relative to a runner’s mean performance. While this solves the baseline issue, it still requires the inversion of the scale to ensure that a "positive" split (running faster) points upward.
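The average-anchored alternative can be sketched in a few lines, assuming paces in seconds per kilometer. The function `split_offsets` and the sample splits are hypothetical and only illustrate the sign convention described in the thread.

```python
def split_offsets(paces_s):
    """Return each split's offset from the mean pace, in seconds per km.

    The sign is inverted on purpose: a split faster than average
    (a smaller pace value) gets a positive offset and points upward.
    """
    mean = sum(paces_s) / len(paces_s)
    return [mean - p for p in paces_s]


# Hypothetical splits: 5:00, 4:50, 5:10 and 4:40 per kilometer.
splits = [300, 290, 310, 280]
offsets = split_offsets(splits)
```

Here the baseline is the runner’s mean pace instead of zero, so the bars diverge above and below it, but the subtraction order still encodes the inversion that the original chart was criticized for.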

Software engineers from the audio industry have similarly defended their "distorted" meters. A common sentiment among UI designers for companies like Ableton or Waves is that a meter is a tool for real-time decision-making, not a static record for historical analysis. If a "distorted" scale prevents a recording engineer from ruining a take, the visualization has succeeded in its primary objective.

Broader Implications for Data Science and UI/UX

The implications of this shift extend far beyond running apps and music software. As data becomes more integrated into specialized professional fields—from medical telemetry to cockpit instrumentation—the "one-size-fits-all" approach to data visualization is being challenged.

The Medical Context

In a clinical setting, a patient’s heart rate and blood pressure are often monitored on screens where the "normal" range is highlighted. If a vital sign drops, the visual urgency is often communicated through color and position rather than a strict linear growth of a bar. If a lower number is more dangerous, the UI might use a downward-pointing visual cue that feels "heavier" or "more significant" than a high number.

Aviation and Aerospace

Pilots rely on "tapes" for altitude and airspeed. These vertical scales often move in ways that seem counter-intuitive to a ground-based observer but are perfectly aligned with the pilot’s physical sensation of climbing or descending. The "semantic" alignment of the instrument with the physical reality of the flight is a matter of safety.

Conclusion: The Primacy of the User

The conclusion drawn by the modern data community is that we are not chart-reading machines. Human beings bring context, expertise, and ingrained cognitive biases to every piece of information they consume. The "rules" of data visualization serve as an excellent baseline for general-purpose communication, but they are not absolute laws of nature.

As Robert Kosara noted in his analysis of the controversy, expertise trumps visualization rules. When a runner looks at a pace chart, they are not looking for a lesson in geometry; they are looking for a reflection of their effort. When an engineer looks at a compressor meter, they are looking for the "bite" of the processor on the sound. In these specialized contexts, a chart that follows all the rules but fails to communicate the "feeling" of the data is, in its own way, a failure of design. The future of data visualization lies in this delicate balance: maintaining enough integrity to be accurate, while possessing enough flexibility to be meaningful.