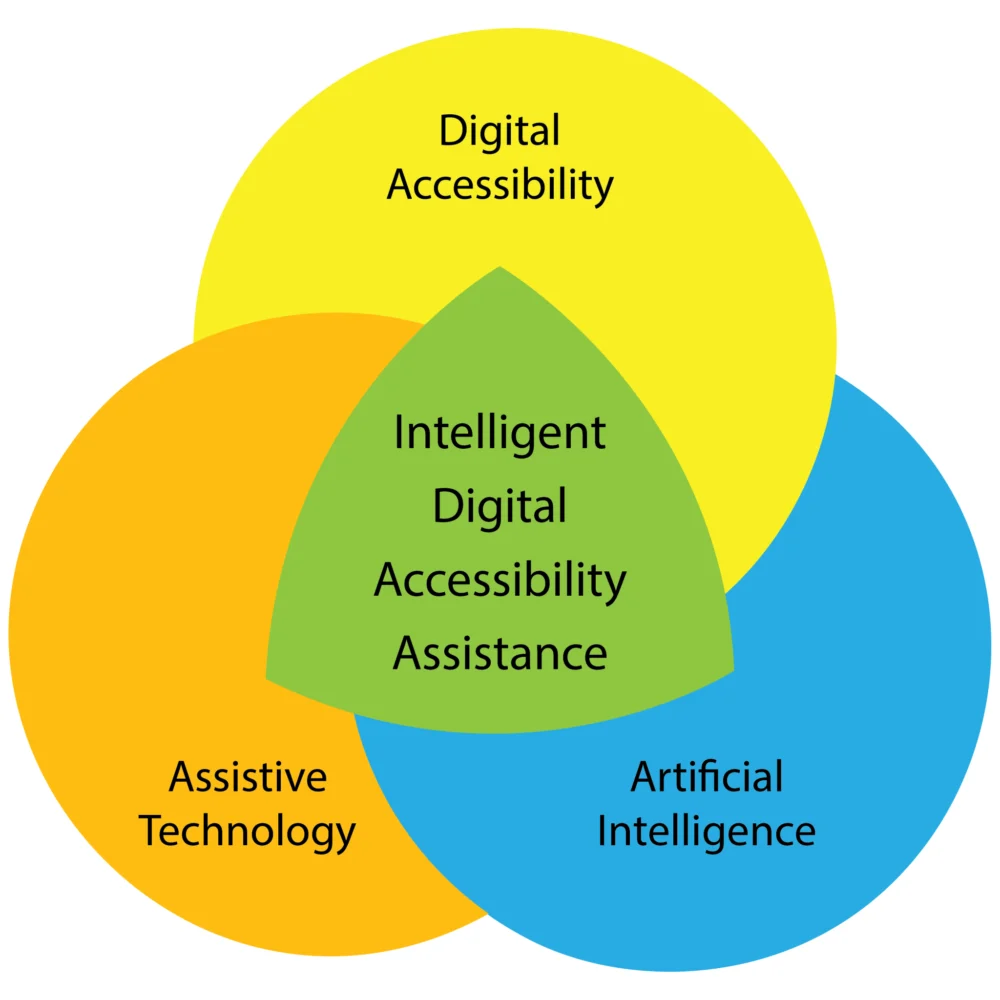

The digital landscape is changing as advances in assistive technology, established digital accessibility principles, and the rapidly expanding capabilities of artificial intelligence (AI) converge. This intersection could redefine how people with disabilities interact with and navigate the online world, giving them greater autonomy and more personalized experiences. While these systems are still being developed, developers and content creators remain responsible for ensuring equitable access to digital content, services, and products for all users.

Recent years have seen a surge in assistive technology innovation. From more intuitive screen readers and advanced speech recognition software to eye-tracking devices and adaptive input methods, the tools available to users with disabilities have become more powerful and easier to use. At the same time, the field of digital accessibility has matured, with a growing understanding of universal design principles and of the legal and ethical imperatives for inclusive digital experiences. Standards such as the Web Content Accessibility Guidelines (WCAG) have become cornerstones of this effort, guiding developers to build websites and applications that are perceivable, operable, understandable, and robust for all users, including people with sensory, cognitive, or motor impairments.

In parallel, artificial intelligence has grown rapidly in availability, capability, and adoption across many sectors. Techniques such as natural language processing (NLP), computer vision, and machine learning are no longer confined to academic research; they are actively reshaping industries, including assistive technology and digital accessibility. As researchers Giansanti and Pirrera note in a projected 2025 publication, "AI itself is expanding the concept of assistive technology, shifting from traditional tools to intelligent systems capable of learning and adapting to individual needs. This evolution represents a fundamental change in assistive technology, emphasizing dynamic, adaptive systems over static solutions." This perspective highlights a pivotal shift from one-size-fits-all solutions toward personalized, responsive technological support.

The convergence of AI, assistive technology, and digital accessibility has given rise to the concept of a new domain: Intelligent Digital Accessibility Assistance (IDAA). This emerging field envisions a proactive, personalized mediator that lets users adapt, translate, and restructure digital content and environments to match their unique needs and preferences.

The Dawn of the Intelligent Digital Accessibility Assistant (IDAA)

At the center of the field is the Intelligent Digital Accessibility Assistant (also abbreviated IDAA), a system that acts as a personalized digital concierge for users with disabilities. Its primary function would be to provide a seamless, optimized digital experience by adapting content and interfaces in real time, based on the individual user’s profile and evolving requirements. This adaptive capability moves beyond static assistive tools to dynamic systems that learn and respond.

User Configuration and Intelligent Training

The development and efficacy of an IDAA would hinge on a comprehensive understanding of the user. In its initial stages, setting up an IDAA might involve a detailed manual configuration process. This would entail providing information about the user’s existing assistive technology, their preferred methods of interacting with digital content, and their common digital activities. For instance, a user who is blind might specify the exact software (e.g., specific screen reader version) and hardware (e.g., braille display model) they utilize, including any custom configurations. The IDAA would then be tasked with monitoring developments related to this assistive technology, such as user interface changes, new features, or software updates, and proactively informing the user. Furthermore, the assistant could identify and disseminate emerging best practices relevant to the user’s specific tools.
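To make the manual setup step more concrete, the sketch below models a user profile as plain data and filters a feed of product updates down to the tools the user declared. Everything here is hypothetical: `AssistiveTool`, `UserProfile`, `UpdateNotice`, and `relevant_notices` are illustrative names, and the update feed is assumed to exist. A real IDAA would need actual sources of release notes and more robust product matching than a case-insensitive name comparison.

```python
from dataclasses import dataclass, field


@dataclass
class AssistiveTool:
    """One piece of assistive technology declared during manual setup (hypothetical)."""
    kind: str          # e.g. "screen reader" or "braille display"
    product: str       # product name as the user enters it
    version: str = ""  # exact version, if the user knows it
    custom_settings: dict[str, str] = field(default_factory=dict)


@dataclass
class UserProfile:
    """Hypothetical IDAA profile built from the user's setup answers."""
    tools: list[AssistiveTool]
    preferred_interactions: list[str]  # e.g. ["keyboard", "speech input"]
    common_activities: list[str]       # e.g. ["email", "online banking"]


@dataclass
class UpdateNotice:
    """A hypothetical item from some feed of product changes."""
    product: str
    summary: str


def relevant_notices(profile: UserProfile, feed: list[UpdateNotice]) -> list[UpdateNotice]:
    """Keep only notices about products the user declared (case-insensitive name match)."""
    declared = {tool.product.casefold() for tool in profile.tools}
    return [notice for notice in feed if notice.product.casefold() in declared]
```

For instance, a user who declared only a screen reader would see notices about that screen reader and none about an unrelated magnifier. The same profile object could later be extended by automated inference rather than replaced by it.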

As these assistants mature, setup is expected to become increasingly automated. By observing a user’s interaction patterns, the IDAA could infer their needs and preferences. Users could then choose whether the assistant adapts its behavior autonomously based on this continuous analysis or instead offers recommendations that the user approves or rejects. This learning loop is crucial if the IDAA is to evolve alongside the user.
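The choice between autonomous adaptation and recommend-then-approve can be sketched as a small loop. In this hypothetical `AdaptationLoop`, a manual adjustment that the user repeats `threshold` times in the same context becomes a `Suggestion`. The default threshold of 3 and the simple counting heuristic are assumptions. They stand in for whatever learning method a real assistant would use.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Suggestion:
    context: str  # e.g. "email"
    setting: str  # e.g. "speech_rate"
    value: str    # e.g. "fast"


@dataclass
class AdaptationLoop:
    """Hypothetical sketch: turn repeated manual adjustments into adaptations."""
    autonomous: bool = False  # user's choice: apply automatically, or ask first
    threshold: int = 3        # assumed number of repeats before suggesting
    observed: Counter = field(default_factory=Counter)
    applied: dict[tuple[str, str], str] = field(default_factory=dict)
    pending: list[Suggestion] = field(default_factory=list)

    def observe(self, context: str, setting: str, value: str) -> None:
        """Record one manual adjustment made by the user."""
        key = (context, setting, value)
        self.observed[key] += 1
        # Suggest exactly once, when the count first reaches the threshold,
        # so a rejected suggestion is not raised again.
        if self.observed[key] == self.threshold:
            suggestion = Suggestion(context, setting, value)
            if self.autonomous:
                self._apply(suggestion)
            else:
                self.pending.append(suggestion)

    def review(self, suggestion: Suggestion, accept: bool) -> None:
        """The user authorizes or rejects a pending suggestion."""
        self.pending.remove(suggestion)
        if accept:
            self._apply(suggestion)

    def _apply(self, s: Suggestion) -> None:
        self.applied[(s.context, s.setting)] = s.value
```

Keeping the `autonomous` flag on the loop, rather than hard-coding one behavior, mirrors the idea that the degree of automation is the user’s decision.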

Personalizing Content Interaction

Beyond assistive technology, an IDAA would be instrumental in tailoring content consumption. By granting specific permissions, users could allow their IDAA to monitor and analyze their interactions with digital content. Consider a scenario where a screen reader user encounters a legacy website with inadequate semantic markup, hindering navigation and comprehension. The IDAA could be instructed to analyze the visual layout and text hierarchy of the webpage to infer the missing structural information required by the user’s assistive technology, effectively reconstructing the content’s logical flow.
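One rough way to approximate this structural inference is a font-size heuristic. It treats the most common size as body text and ranks larger sizes as heading levels. This is a simplified sketch, not a known IDAA algorithm. `VisualBlock` and `infer_structure` are hypothetical, and a practical system would also weigh font weight, spacing, position, and reading order.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class VisualBlock:
    """A run of text as rendered on screen (hypothetical layout-analysis output)."""
    text: str
    font_size: float  # in CSS pixels


def infer_structure(blocks: list[VisualBlock]) -> list[tuple[str, str]]:
    """Heuristic: the most common font size is body text; larger sizes become
    headings, largest first (h1, h2, ... capped at h6). Returns (role, text) pairs."""
    if not blocks:
        return []
    body_size = Counter(b.font_size for b in blocks).most_common(1)[0][0]
    heading_sizes = sorted({b.font_size for b in blocks if b.font_size > body_size}, reverse=True)
    level_of = {size: min(rank + 1, 6) for rank, size in enumerate(heading_sizes)}
    structure = []
    for b in blocks:
        if b.font_size in level_of:
            structure.append((f"h{level_of[b.font_size]}", b.text))
        else:
            structure.append(("p", b.text))  # body text or anything smaller
    return structure
```

In a browser, inferred headings could be exposed to a screen reader through the ARIA `role="heading"` and `aria-level` attributes. This would let the user jump between sections with the screen reader’s heading navigation.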

Another example involves users who rely on auditory cues for information. When reading an email that employs extensive visual formatting styles (e.g., italics, bold text, strikethrough), a user could instruct their IDAA to dynamically adjust their screen reader’s settings. This adjustment could involve presenting formatted text with distinct speech patterns or auditory markers, allowing for a richer and more nuanced understanding of the message’s emphasis and intent.
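The idea of giving each visual style its own speech pattern can be illustrated by emitting Speech Synthesis Markup Language (SSML). SSML is used here only as a neutral, standard way to describe changes in emphasis, pitch, and rate. Actual screen readers configure formatting announcements through their own settings, which vary by product. The `CUES` mapping and the `to_ssml` function are hypothetical user preferences, not any product’s defaults.

```python
from xml.sax.saxutils import escape

# Hypothetical user preferences: which speech change signals which visual style.
CUES = {
    "bold": ('<emphasis level="strong">', "</emphasis>"),
    "italic": ('<prosody pitch="+15%">', "</prosody>"),
    "strikethrough": ('<prosody rate="slow">deleted: ', "</prosody>"),
}


def to_ssml(runs: list[tuple[str, set[str]]]) -> str:
    """Render (text, styles) runs as one SSML document, wrapping each styled
    run in the speech cue the user chose for that style."""
    parts = []
    for text, styles in runs:
        fragment = escape(text)  # escape &, <, > so the markup stays well-formed
        for style in sorted(styles):  # sorted for deterministic nesting
            if style in CUES:
                open_tag, close_tag = CUES[style]
                fragment = f"{open_tag}{fragment}{close_tag}"
        parts.append(fragment)
    return "<speak>" + " ".join(parts) + "</speak>"
```

Unknown styles are simply spoken without a cue, so an email using formatting the user has not mapped still reads normally.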

Adaptive Activity Modes

A significant feature of an IDAA would be the ability to configure distinct "session modes" tailored to specific activities. For example, in a "research" mode, a user could direct the IDAA to scan an academic paper quickly, generate a concise, jargon-free summary, and extract the data from any charts or graphs into a table. This could speed up research and aid comprehension.

Conversely, in an "entertainment" mode, designed for activities such as watching a movie, the IDAA could silence non-critical audio notifications to minimize distractions. It could log the silenced messages for later review, so that important information is not lost and the user’s leisure activity is not interrupted. While an IDAA would likely ship with default modes, it could also help users create custom modes for particular types of digital content and specialized virtual environments, reflecting the wide range of ways people engage with them.
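Session modes can be thought of as named bundles of rules. The sketch below uses hypothetical `SessionMode` and `NotificationGate` types to show the entertainment-mode behavior, where notifications below the mode’s priority threshold are logged instead of announced. The three priority levels and the task names listed for research mode (`summarize_plain_language`, `charts_to_tables`) are placeholders. The summarization and chart-extraction work itself is not implemented.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Priority(IntEnum):
    LOW = 1
    NORMAL = 2
    CRITICAL = 3


@dataclass
class Notification:
    source: str
    message: str
    priority: Priority


@dataclass
class SessionMode:
    """Hypothetical mode: the lowest priority allowed to interrupt, plus content tasks."""
    name: str
    min_priority_to_announce: Priority
    content_tasks: list[str] = field(default_factory=list)  # placeholder task names


RESEARCH = SessionMode("research", Priority.NORMAL, ["summarize_plain_language", "charts_to_tables"])
ENTERTAINMENT = SessionMode("entertainment", Priority.CRITICAL)


@dataclass
class NotificationGate:
    mode: SessionMode
    silenced_log: list[Notification] = field(default_factory=list)

    def handle(self, n: Notification) -> bool:
        """Return True if the notification should be announced now; otherwise log it."""
        if n.priority >= self.mode.min_priority_to_announce:
            return True
        self.silenced_log.append(n)
        return False
```

A custom mode would then be just another `SessionMode` value, which keeps user-created modes on equal footing with the defaults.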

User-Driven Accessibility: A Collaborative Partnership

The intelligence of an IDAA is not static; it continues to evolve. After the IDAA establishes an initial picture of how a user currently engages with digital content, its ongoing learning would refine how well it aligns with the user’s needs. Users could, for example, instruct the assistant to:

- Identify and flag inaccessible content: The IDAA could proactively scan web pages or documents for accessibility barriers and provide the user with actionable insights or automatically implement workarounds.

- Translate complex language: For users with cognitive disabilities or those encountering technical jargon, the IDAA could offer simplified explanations or summaries of complex text.

- Reformat content for clarity: The assistant could dynamically reformat text, adjust font sizes, or alter color contrasts to improve readability based on individual visual preferences or needs.

- Automate repetitive tasks: For users who frequently perform similar digital actions, the IDAA could learn and automate these tasks, reducing cognitive load and increasing efficiency.
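For the color-contrast adjustment mentioned in the list, WCAG 2.x already defines a precise measure: the contrast ratio between the relative luminances of two colors. Success Criterion 1.4.3 requires at least 4.5:1 for normal-size text. The sketch below implements that formula. The fallback strategy in `readable_text_color` (switching to black or white, whichever contrasts more with the background) is an assumption chosen for simplicity. A real assistant might instead search for the nearest color that meets the threshold.

```python
RGB = tuple[int, int, int]


def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    r, g, b = (_linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(a: RGB, b: RGB) -> float:
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color."""
    la, lb = relative_luminance(a), relative_luminance(b)
    return (max(la, lb) + 0.05) / (min(la, lb) + 0.05)


def readable_text_color(fg: RGB, bg: RGB, minimum: float = 4.5) -> RGB:
    """Keep fg if it meets the minimum ratio against bg; otherwise fall back
    (an assumed strategy) to black or white, whichever contrasts more with bg."""
    if contrast_ratio(fg, bg) >= minimum:
        return fg
    return max(((0, 0, 0), (255, 255, 255)), key=lambda c: contrast_ratio(c, bg))
```

For example, mid-gray `#777777` on white falls just short of 4.5:1, so this function would replace it with black, while `#767676` passes and is kept.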

In such an environment, the degree of collaboration between the user and the IDAA is entirely user-defined, offering an unprecedented level of control and personalization in digital accessibility. This user-driven approach ensures that the technology serves the individual, rather than dictating their digital experience.

Implications and Future Outlook

The advent of Intelligent Digital Accessibility Assistance holds profound implications for digital inclusion. By providing personalized adaptive support, IDAA systems have the potential to significantly reduce the digital divide for individuals with disabilities. This could lead to enhanced educational opportunities, expanded employment prospects, and greater social participation. The shift towards user-driven accessibility also empowers individuals, granting them agency over their digital interactions.

However, the development and deployment of such advanced AI systems necessitate careful consideration of several critical concerns. Equity of access to these technologies, the potential for bias embedded within AI training data, the environmental impact of AI computation, and the overall reliability and security of these systems are paramount issues that must be addressed proactively. Ensuring that IDAA benefits are accessible to all, regardless of socioeconomic status or geographical location, will be a significant challenge. Furthermore, the ethical implications of AI learning from user data, including privacy and data security, require robust frameworks and transparent policies.

As AI continues to advance rapidly, systems like the Intelligent Digital Accessibility Assistant look increasingly achievable rather than a distant dream. Researchers and developers are working to overcome the technical and ethical hurdles. Fully realized IDAA systems would be a significant step toward a truly inclusive digital world, where technology empowers every individual to participate fully and meaningfully. The conversation around these advancements is ongoing, and engagement from users, developers, and policymakers will be crucial in shaping a future where digital accessibility is intelligent, adaptive, and universally available.