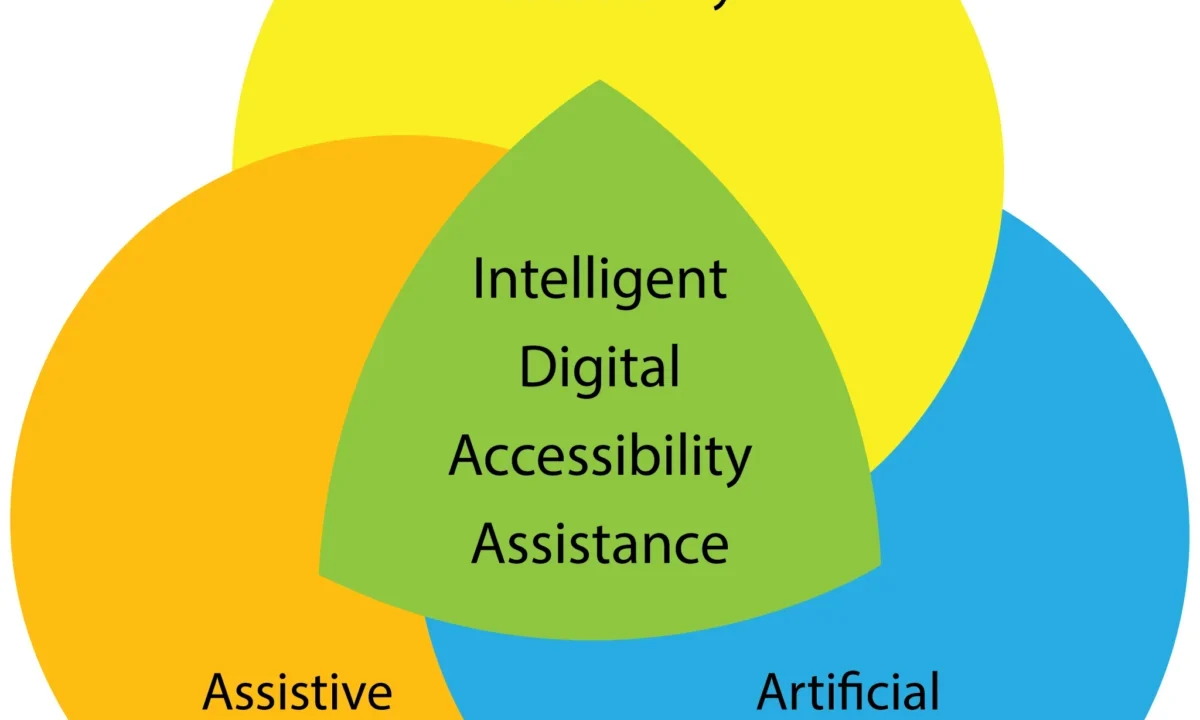

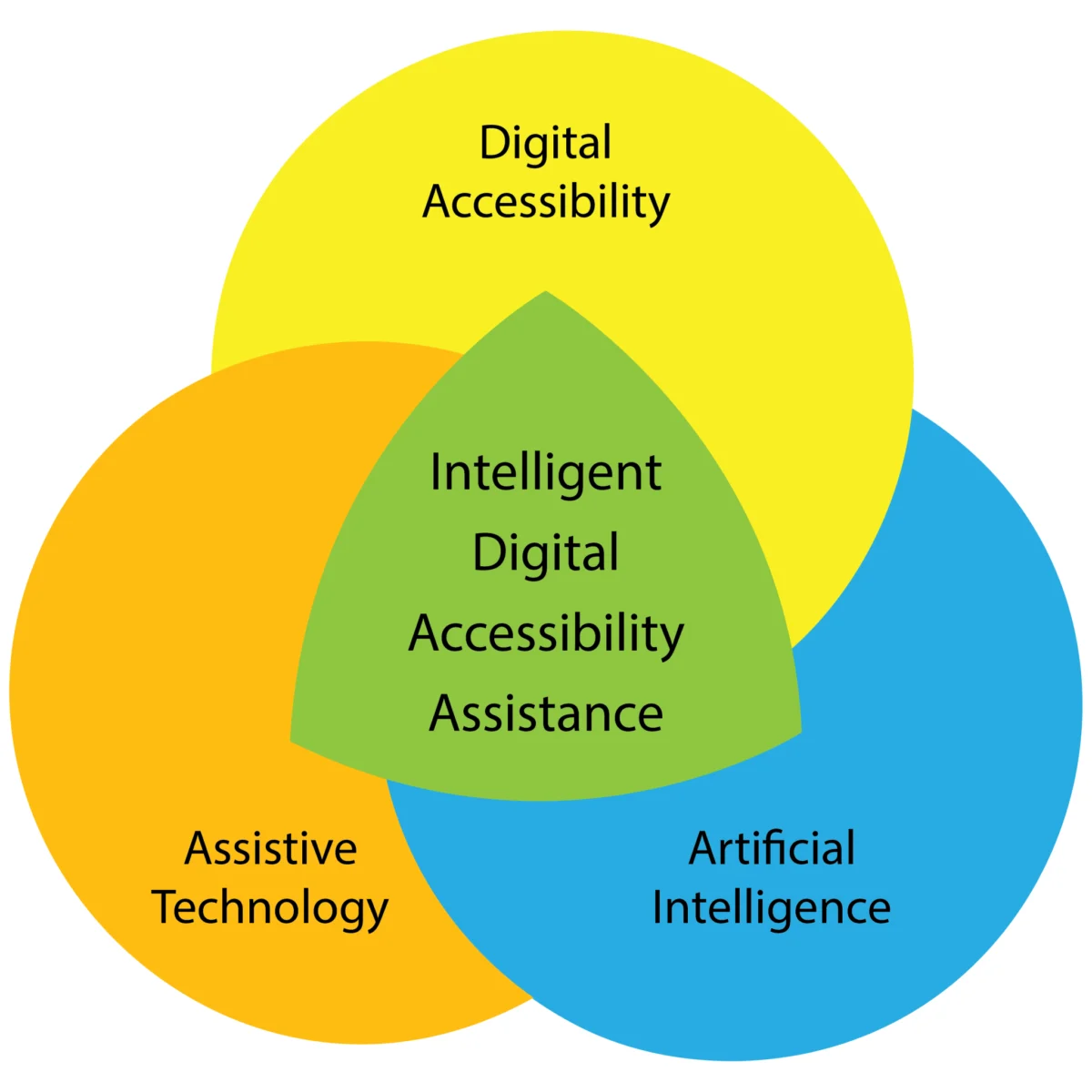

The digital landscape is on the cusp of a profound transformation, driven by the convergence of artificial intelligence (AI), assistive technologies, and digital accessibility practices. Experts predict that a new era of personalized digital empowerment for individuals with disabilities is a question of when, not if, and that it will arrive through "Intelligent Digital Accessibility Assistance" (IDAA). The concept envisions adaptive AI systems that learn each user's needs and preferences and proactively adjust digital experiences to match them.

While the development and deployment of such advanced systems represent a significant leap forward, a crucial caveat remains: the fundamental responsibility for ensuring equal digital access for all users rests squarely with the developers of digital content, services, and products. The potential of IDAA is not a substitute for foundational accessibility principles but rather a powerful augmentation that can bridge existing gaps and create more inclusive digital environments.

A Landscape of Rapid Advancement

The past few years have witnessed an explosion of progress in assistive technology and digital accessibility. Innovations ranging from enhanced screen readers and dynamic braille displays to advanced speech recognition software and sophisticated eye-tracking systems have significantly expanded the capabilities available to individuals with diverse needs. Concurrently, the field of artificial intelligence has experienced unprecedented growth, with methodologies like natural language processing (NLP), computer vision, and machine learning permeating various sectors.

This AI revolution is directly impacting assistive technologies and digital accessibility. As noted by Giansanti and Pirrera in their 2025 publication, "AI itself is expanding the concept of assistive technology, shifting from traditional tools to intelligent systems capable of learning and adapting to individual needs. This evolution represents a fundamental change in assistive technology, emphasizing dynamic, adaptive systems over static solutions." This sentiment underscores the paradigm shift from static, one-size-fits-all solutions to dynamic, user-centric systems that can learn and evolve.

Introducing Intelligent Digital Accessibility Assistance (IDAA)

The convergence of these powerful forces has led to the conceptualization of Intelligent Digital Accessibility Assistance (IDAA). At its core, IDAA represents a proactive, personalized digital mediator. This AI-powered assistant would empower users to adapt, translate, and restructure digital content and environments to align perfectly with their individual preferences, abilities, and disabilities.

The Architecture of Personalization: User Configuration and Training

The efficacy of an IDAA hinges on its ability to develop a comprehensive understanding of the user. This process would begin with a robust configuration and training phase. In initial iterations, this setup might involve a manual input process where users detail their existing assistive technologies, their preferred methods of interacting with digital content, and their specific digital activities. This would include granular details about software versions, hardware models, and any customized settings.

As these intelligent assistants mature, the setup process is expected to become increasingly automated. By observing and learning a user’s requirements and preferences through their digital interactions, the IDAA could autonomously build a detailed user profile. Users would then have the option to allow the assistant to automatically adapt its behavior based on ongoing analysis, or to receive recommendations for adaptive changes that they can authorize or reject.
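To make the configuration idea concrete, here is a minimal sketch of how a user profile and the authorize-or-reject flow described above might be modeled. Every name in it (`AssistiveTool`, `UserProfile`, `auto_adapt`, `propose`, `review`, the example tool) is a hypothetical choice for illustration and not part of any existing IDAA system.

```python
from dataclasses import dataclass, field


@dataclass
class AssistiveTool:
    # Hypothetical fields: what a user might record during manual setup.
    name: str
    kind: str      # e.g. "screen_reader" or "braille_display"
    version: str


@dataclass
class UserProfile:
    tools: list[AssistiveTool] = field(default_factory=list)
    settings: dict[str, str] = field(default_factory=dict)
    auto_adapt: bool = False  # True only if the user opts in to unattended changes
    pending: list[tuple[str, str]] = field(default_factory=list)

    def propose(self, key: str, value: str) -> str:
        """Apply a change directly if the user opted in, otherwise queue it."""
        if self.auto_adapt:
            self.settings[key] = value
            return "applied"
        self.pending.append((key, value))
        return "pending"

    def review(self, key: str, approve: bool) -> None:
        """Resolve queued recommendations for `key`; apply only approved ones."""
        remaining = []
        for k, v in self.pending:
            if k != key:
                remaining.append((k, v))
            elif approve:
                self.settings[k] = v
        self.pending = remaining


profile = UserProfile(tools=[AssistiveTool("ExampleReader", "screen_reader", "1.0")])
status = profile.propose("speech_rate", "fast")   # queued, since auto_adapt is False
profile.review("speech_rate", approve=True)       # the user authorizes the change
```

The design choice worth noting is that nothing reaches `settings` without either a standing opt-in or an explicit approval, which mirrors the user-controlled behavior the article describes.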

Empowering Tools and Content Adaptation

The IDAA’s capabilities extend to optimizing the user’s interaction with both their assistive tools and the digital content itself. For a visually impaired user, for example, the IDAA would need to comprehend the intricacies of their assistive technology setup, including specific screen reader software and braille display hardware. The assistant could then proactively inform the user about real-time developments pertinent to their tools, such as changes in user interfaces, the release of new features, or critical software and firmware updates. Furthermore, an IDAA could be tasked with identifying and sharing emerging best practices tailored to the user’s specific assistive technology stack.

Beyond tool adaptation, an IDAA could also improve how users consume digital content. By granting specific permissions, users could allow their IDAA to monitor and analyze their interactions with that content. For instance, when a screen reader encounters a legacy website with poor semantic markup, the user could instruct the IDAA to analyze the visual layout and text hierarchy and infer the missing structural information needed for effective navigation. Similarly, when reading an email with extensive visual formatting (italics, bold, strikethrough), a user could ask the IDAA to adjust their screen reader's settings so that formatted text is presented with distinct auditory cues, improving comprehension and reducing cognitive load.
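One simple way to picture the legacy-website example is a heuristic that guesses heading levels from rendered font sizes. The sketch below assumes the assistant can already obtain each text block's font size; `TextBlock`, `infer_headings`, and the 16-pixel body size are illustrative assumptions, and a real system would likely combine many more visual signals.

```python
from dataclasses import dataclass


@dataclass
class TextBlock:
    text: str
    font_size: float  # rendered size in pixels (assumed to be available)


def infer_headings(blocks: list[TextBlock], body_size: float = 16.0) -> list[tuple[int, str]]:
    """Return (level, text) pairs, where level 0 means body text.

    Heuristic: distinct font sizes larger than body_size are ranked
    largest-first and mapped to heading levels 1, 2, 3, and so on.
    """
    heading_sizes = sorted(
        {b.font_size for b in blocks if b.font_size > body_size}, reverse=True
    )
    level_for = {size: i + 1 for i, size in enumerate(heading_sizes)}
    return [(level_for.get(b.font_size, 0), b.text) for b in blocks]


page = [
    TextBlock("Annual Report", 32.0),
    TextBlock("Summary", 24.0),
    TextBlock("Revenue grew this year.", 16.0),
    TextBlock("Outlook", 24.0),
]
outline = infer_headings(page)
```

Here `outline` marks "Annual Report" as level 1 and both "Summary" and "Outlook" as level 2, giving a screen reader a navigable structure that the page's markup failed to supply.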

Contextualizing Digital Activities: Session Modes

A key feature of IDAA would be its ability to support different "session modes" tailored to specific user activities. Imagine a user engaged in academic research. In "research" mode, the IDAA could be instructed to rapidly scan an academic paper, generate a jargon-free summary, and even transform visual charts and graphs into accessible tabular formats. Conversely, for entertainment, a user might switch to an "entertainment" mode. In this scenario, the IDAA could intelligently silence non-critical audio notifications, creating a log of messages for later review, thereby ensuring an uninterrupted viewing or listening experience. While default modes are likely to be included, the IDAA’s true power lies in its ability to assist users in building custom modes for various digital content types and specialized virtual environments, reflecting their unique engagement preferences.
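The "entertainment" mode behavior can be sketched as a small rule that defers non-critical notifications into a log. The class and field names below (`Notification`, `SessionMode`, `silence_non_critical`, `deferred`) are hypothetical, and how a notification would be classified as critical is left as an assumption.

```python
from dataclasses import dataclass, field


@dataclass
class Notification:
    source: str
    message: str
    critical: bool = False  # assumed to be set by the user's own rules


@dataclass
class SessionMode:
    name: str
    silence_non_critical: bool = False
    deferred: list[Notification] = field(default_factory=list)

    def handle(self, note: Notification) -> bool:
        """Return True if the notification should be announced now."""
        if self.silence_non_critical and not note.critical:
            self.deferred.append(note)  # logged for later review
            return False
        return True


entertainment = SessionMode("entertainment", silence_non_critical=True)
announced = [
    entertainment.handle(Notification("chat", "Lunch tomorrow?")),
    entertainment.handle(Notification("system", "Battery at 2%", critical=True)),
]
```

A custom mode would then be just another `SessionMode` instance with different rules, which is one plausible reading of how users might build their own modes.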

User-Driven Accessibility: An Open-Ended Collaboration

The core philosophy behind IDAA is user-driven accessibility. After establishing an initial understanding of a user's current digital engagement practices, the IDAA would keep learning from the user's activity and continuously refine its alignment with their evolving needs and preferences. This collaborative relationship is designed to be entirely user-controlled, with the assistant's degree of involvement determined by the user's explicit instructions. Users could direct their IDAA to perform a wide range of adaptive actions, such as:

- Content Transformation: Automatically converting complex visual content into more accessible formats, such as descriptive text for images, simplified language for dense text, or structured data for charts.

- Interface Customization: Dynamically adjusting the layout, font sizes, color contrasts, and interactive elements of websites and applications to match user-defined accessibility settings.

- Navigation Assistance: Providing intelligent shortcuts, predicting user intent, and offering alternative navigation pathways based on user history and current context.

- Information Filtering: Prioritizing or filtering notifications, messages, and content based on user-defined importance and relevance.

- Language and Format Translation: Seamlessly translating content into preferred languages or reformatting it into more easily digestible structures.

In this envisioned environment, the collaboration between the user and their IDAA is open-ended, with the user holding final authority over the scope and nature of the assistance provided.
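The principle that the user decides which adaptive actions the assistant may perform could be enforced with a simple permission check before any action runs. This is only a sketch: the action name, the `ACTIONS` registry, and `run_action` are hypothetical, and `normalize_whitespace` is a trivial stand-in for a real content transformation.

```python
from typing import Callable


def normalize_whitespace(text: str) -> str:
    # Stand-in for a real transformation such as language simplification.
    return " ".join(text.split())


# Hypothetical registry keyed by the action categories listed above.
ACTIONS: dict[str, Callable[[str], str]] = {
    "content_transformation": normalize_whitespace,
}


def run_action(name: str, payload: str, granted: set[str]) -> str:
    """Run an adaptive action only if the user has explicitly granted it."""
    if name not in granted:
        raise PermissionError(f"user has not authorized '{name}'")
    return ACTIONS[name](payload)


granted_by_user = {"content_transformation"}
result = run_action("content_transformation", "Dense   text\nhere", granted_by_user)
```

Calling `run_action` with an empty `granted` set raises `PermissionError`, so the assistant cannot act outside the scope the user has set.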

Implications and Future Outlook

The advent of Intelligent Digital Accessibility Assistance holds profound implications for digital inclusion. By providing a layer of personalized adaptation, IDAA has the potential to significantly reduce the barriers that individuals with disabilities face in accessing and interacting with the digital world. This could lead to increased participation in education, employment, social engagement, and civic life.

However, the development and deployment of such powerful AI systems also necessitate careful consideration of critical ethical and practical concerns. These include:

- Equity of Access: Ensuring that IDAA technologies are accessible and affordable to all individuals who could benefit from them, regardless of socioeconomic status or geographical location.

- Bias in Training Data: Addressing potential biases in the AI’s training data, which could inadvertently lead to discriminatory outcomes or perpetuate existing inequalities. Rigorous testing and diverse data sets are crucial.

- Environmental Impacts: Assessing and mitigating the environmental footprint associated with the development and operation of large-scale AI systems.

- Reliability and Security: Guaranteeing the robustness, accuracy, and security of IDAA systems to protect user privacy and prevent misuse.

- Developer Responsibility: Reiterating that while IDAA can augment accessibility, it does not absolve content creators and service providers of their fundamental obligation to build accessible digital products and services from the ground up. Industry standards and regulatory frameworks will need to adapt to this evolving landscape.

The author, a daily user of AI and a researcher in its capabilities, is confident that systems like Intelligent Digital Accessibility Assistants will emerge. The potential for individuals with disabilities to partner with AI to expand their access to the digital world is immense. This technological evolution promises a future where digital environments are not just navigable but personalized and empowering for everyone. As the field matures, ongoing dialogue, research, and collaboration will be essential to realize that potential responsibly and equitably.