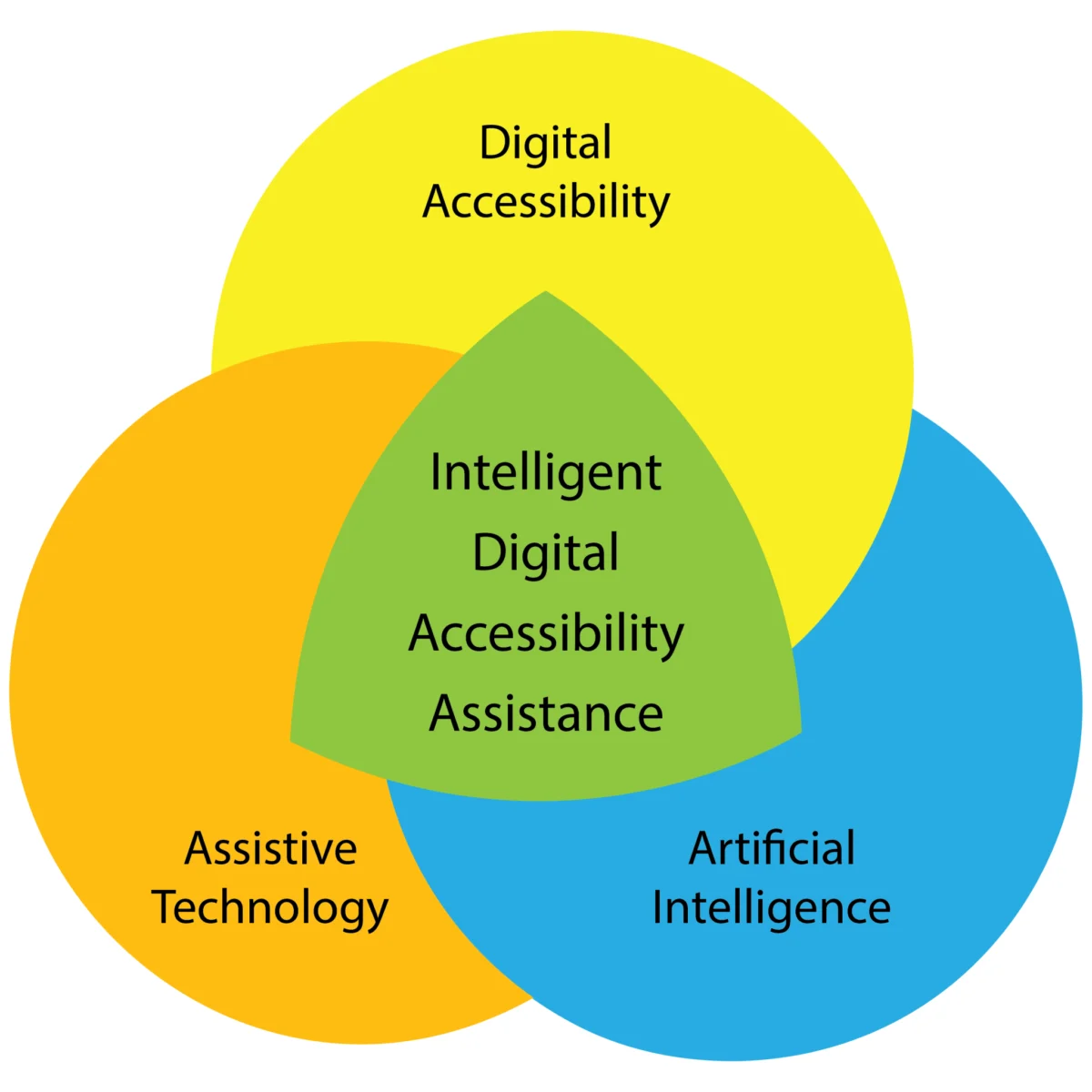

The digital landscape is approaching a significant shift, driven by the convergence of artificial intelligence (AI), advanced assistive technologies, and a growing imperative for digital inclusivity. As AI capabilities expand rapidly, so does the potential for creating a more equitable and personalized digital experience for individuals with disabilities. This emerging field, which we can term Intelligent Digital Accessibility Assistance (IDAA), promises to move beyond static solutions to dynamic, adaptive systems that empower users to navigate and interact with digital environments on their own terms. While the ultimate responsibility for accessible design remains with content creators, IDAA is a powerful complement to that work, offering a glimpse of a future in which digital access is not just a matter of compliance but a personalized and intelligent experience.

Recent years have witnessed remarkable strides in assistive technology, from sophisticated screen readers and adaptive input devices to advanced speech recognition and predictive text capabilities. Simultaneously, increasingly capable and widely available AI methods, including natural language processing (NLP), computer vision, and machine learning (ML), are reshaping both assistive technologies and broader digital accessibility practices. This synergy is prompting a fundamental rethinking of what assistive technology can achieve. As researchers Giansanti and Pirrera (2025) observe, "AI itself is expanding the concept of assistive technology, shifting from traditional tools to intelligent systems capable of learning and adapting to individual needs. This evolution represents a fundamental change in assistive technology, emphasizing dynamic, adaptive systems over static solutions." This shift sets the stage for IDAA, a conceptual framework for systems that proactively mediate and optimize the digital experiences of users with disabilities.

The Concept of the Intelligent Digital Accessibility Assistant (IDAA)

At its core, an Intelligent Digital Accessibility Assistant (also abbreviated IDAA here, since it is the system that would deliver Intelligent Digital Accessibility Assistance) is envisioned as a proactive, personalized mediator. This AI-powered system would empower users to adapt, translate, and restructure digital content and environments to align with their unique preferences, needs, and abilities. It would not replace the foundational responsibility of developers to create accessible content from the outset. Instead, an IDAA would provide an intelligent layer of support that bridges existing gaps and enhances user agency in diverse digital contexts.

The development of an IDAA would necessitate a sophisticated process of user configuration and ongoing training. In its nascent stages, setting up such an assistant might involve a manual input process, where users detail their existing assistive technologies, preferred interaction methods with digital content, and specific digital activities they engage in. This initial phase would require users to provide granular information, such as the specific software versions of their screen readers or the model numbers of their braille displays, along with any customized settings.

However, as these intelligent assistants mature, the setup process is expected to become increasingly automated. The IDAA would learn and adapt by observing user behavior, identifying patterns, and inferring needs and preferences. Users could then choose whether the assistant implements adaptations autonomously or presents each one as a recommendation to approve or reject, thereby maintaining a high degree of user control.

Tools and Configuration: A Deeper Dive

The "Tools" configuration within an IDAA would be crucial for ensuring compatibility with a user’s existing assistive technology ecosystem. For a visually impaired user, for instance, specifying their use of both software (e.g., JAWS, NVDA) and hardware (e.g., BrailleNote Touch, HIMS BrailleSense) would be paramount. The IDAA would need to store and reference detailed information about these tools, including version numbers and any non-default configurations. This would enable the assistant to proactively inform the user about real-time developments pertinent to their tools, such as changes in user interfaces, the introduction of new features, or critical software/firmware updates. Furthermore, an IDAA could be tasked with identifying and disseminating emerging best practices for specific assistive technologies, thereby enhancing user proficiency and efficiency.
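One way to picture the stored tool information is as a small record per tool plus a check that compares installed versions against the newest known ones. The sketch below is only illustrative: `ToolProfile`, its fields, `pending_updates`, and the version numbers are all hypothetical, and it assumes versions are purely numeric dotted strings. Where the "latest version" data would come from is left open.

```python
from dataclasses import dataclass, field


@dataclass
class ToolProfile:
    """One assistive tool the user relies on (all field names are hypothetical)."""
    name: str                 # e.g. "NVDA" or "BrailleNote Touch"
    kind: str                 # "software" or "hardware"
    version: str              # installed software or firmware version
    custom_settings: dict = field(default_factory=dict)  # non-default options only


def parse_version(version: str) -> tuple:
    """Turn a numeric dotted version such as '2.10' into (2, 10) for comparison."""
    return tuple(int(part) for part in version.split("."))


def pending_updates(profiles, latest_versions):
    """Return (tool name, installed, latest) for every tool that is behind.

    `latest_versions` maps tool names to the newest known version string.
    Tools missing from that mapping are skipped rather than guessed at.
    """
    updates = []
    for profile in profiles:
        latest = latest_versions.get(profile.name)
        if latest and parse_version(latest) > parse_version(profile.version):
            updates.append((profile.name, profile.version, latest))
    return updates


# Illustrative data only; these version numbers are made up.
profiles = [
    ToolProfile("NVDA", "software", "1.9", {"speech_rate": 70}),
    ToolProfile("BrailleNote Touch", "hardware", "5.2"),
]
print(pending_updates(profiles, {"NVDA": "1.10", "BrailleNote Touch": "5.2"}))
```

Comparing versions as integer tuples rather than strings matters here: as plain text, "1.10" would sort before "1.9" and the update would be missed.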

Content Adaptation: Bridging Semantic Gaps

The "Content" configuration within an IDAA would focus on tailoring the interpretation and presentation of digital information. Users could grant permissions for the assistant to monitor and analyze their interactions with various forms of digital content. For example, when a screen reader encounters a legacy website with poor semantic markup, a user might instruct the IDAA to analyze the visual layout and text hierarchy to infer the missing structural information that their assistive technology requires. This could involve intelligently identifying headings, lists, and other semantic elements that are visually present but programmatically absent.
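As a rough illustration of inferring structure from visual layout, the sketch below guesses heading levels from font size alone. It is a deliberately simple heuristic, not a description of how a real IDAA would work: the `TextBlock` structure and `infer_heading_levels` function are hypothetical, and a practical system would likely weigh many more signals (weight, spacing, position, or a trained model).

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class TextBlock:
    """A rendered run of text with its visual size (hypothetical structure)."""
    text: str
    font_size: float  # rendered size in CSS pixels


def infer_heading_levels(blocks):
    """Guess a heading level for each block; None means ordinary body text.

    Heuristic: the font size that covers the most characters is treated as
    body text. Each distinct larger size is ranked, largest first, and mapped
    to heading levels 1, 2, 3, and so on (capped at 6).
    """
    if not blocks:
        return []

    chars_per_size = Counter()
    for block in blocks:
        chars_per_size[block.font_size] += len(block.text)
    body_size = chars_per_size.most_common(1)[0][0]

    larger_sizes = sorted(
        {b.font_size for b in blocks if b.font_size > body_size}, reverse=True
    )
    level_for_size = {
        size: min(rank, 6) for rank, size in enumerate(larger_sizes, start=1)
    }
    return [(b.text, level_for_size.get(b.font_size)) for b in blocks]


page = [
    TextBlock("Annual Report", 32),
    TextBlock("Revenue", 24),
    TextBlock("Revenue grew modestly over the previous year.", 16),
    TextBlock("Costs", 24),
    TextBlock("Operating costs stayed roughly flat.", 16),
]
for text, level in infer_heading_levels(page):
    print(level, text)
```

On this sample, the 32px line is treated as a level 1 heading, the two 24px lines as level 2, and the 16px lines as body text. The inferred levels could then be handed to the screen reader in place of the missing markup.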

Similarly, when reading an email laden with extensive visual formatting (italics, bold text, strikethrough), a user might request the IDAA to dynamically adjust their screen reader’s settings to present this formatted text in a distinctive and easily distinguishable speech style. This level of content adaptation moves beyond simple text-to-speech to a more nuanced interpretation and presentation of information, significantly enhancing comprehension and reducing cognitive load for users.

Activity-Based Modes: Optimizing Digital Engagement

The concept of "Activities" within an IDAA introduces the notion of user-defined "session modes," allowing for context-specific adjustments to the digital environment. For instance, a user could configure a "research" mode in which the IDAA rapidly scans an academic paper, generates a jargon-free summary, and extracts data from visual charts into tabular formats. This would dramatically streamline the research process for individuals who might otherwise struggle with dense academic prose or complex visual data representations.

Alternatively, switching to an "entertainment" mode for watching a movie could prompt the IDAA to automatically silence non-critical audio notifications, ensuring an uninterrupted viewing experience. The assistant could then generate a log of these silenced messages for later review. While an IDAA might come equipped with default modes, its true power would lie in its ability to assist users in building custom modes tailored to their specific engagement preferences for different types of digital content or specialized virtual environments. This could include modes for gaming, social media interaction, or even professional collaboration tools.
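The "entertainment" behavior described above, silencing non-critical notifications while keeping a log for later review, can be sketched in a few lines. This is a minimal illustration under stated assumptions: `SessionMode`, `Notification`, and the `critical` flag are hypothetical names, and how a real assistant would decide what counts as critical is not addressed.

```python
from dataclasses import dataclass, field


@dataclass
class Notification:
    source: str
    message: str
    critical: bool = False  # assumed to be set by the sending app or the user


@dataclass
class SessionMode:
    """A user-defined mode (name and fields are hypothetical)."""
    name: str
    silence_non_critical: bool = False
    silenced_log: list = field(default_factory=list)

    def handle(self, notification: Notification) -> bool:
        """Return True if the notification should be delivered right now."""
        if self.silence_non_critical and not notification.critical:
            self.silenced_log.append(notification)  # kept for later review
            return False
        return True


entertainment = SessionMode("entertainment", silence_non_critical=True)
entertainment.handle(Notification("email", "Newsletter arrived"))
entertainment.handle(Notification("system", "Battery critically low", critical=True))

# After the movie, the user can review what was held back.
print([n.message for n in entertainment.silenced_log])
```

A custom mode for gaming or professional collaboration would then be another `SessionMode` instance with different settings, which is what makes user-built modes cheap to add.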

User-Driven Accessibility: A Collaborative Partnership

Once it has established a baseline understanding of a user’s current digital engagement practices, the IDAA would keep learning, continuously refining its alignment with the user’s evolving needs and preferences. This adaptive learning would be guided by user-driven instructions, enabling the IDAA to:

- Proactively identify and flag accessibility barriers: The assistant could scan web pages or documents for common accessibility issues, such as missing alt text for images, insufficient color contrast, or non-keyboard-navigable elements, and offer immediate remediation suggestions or automated fixes.

- Translate complex information into simpler formats: Beyond summarizing academic papers, the IDAA could be instructed to rephrase technical jargon in user-friendly language, simplify complex sentence structures, or convert intricate visual diagrams into more accessible formats.

- Automate repetitive tasks: For users who frequently perform similar digital actions, the IDAA could learn and automate these sequences, reducing the manual effort required and minimizing the potential for errors.

- Provide real-time feedback on accessibility compliance: As users interact with digital content, the IDAA could offer immediate feedback on the accessibility of their own contributions, such as ensuring that images they upload have descriptive alt text or that their written communications adhere to accessibility best practices.

In such an environment, the degree of collaboration between the user and the IDAA is truly open-ended, with the user retaining complete control over the extent and nature of their engagement with the assistant.
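Two of the barrier checks named in the list above, missing alt text and insufficient color contrast, are simple enough to sketch with the Python standard library. The example below is a minimal illustration rather than a full auditing tool: the class and function names are hypothetical, the HTML is parsed with `html.parser`, and the contrast calculation follows the relative luminance and contrast ratio definitions in WCAG 2.x, under which normal-size text needs at least 4.5:1 at level AA.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> that has no alt attribute at all."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr_map = dict(attrs)
            # alt="" is valid for decorative images, so only a missing
            # attribute is flagged here.
            if "alt" not in attr_map:
                self.missing.append(attr_map.get("src", "(no src)"))


def relative_luminance(rgb):
    """WCAG 2.x relative luminance of an sRGB color given as 0-255 integers."""
    def linearize(channel):
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


finder = MissingAltFinder()
finder.feed('<p><img src="chart.png"><img src="logo.png" alt="Company logo"></p>')
print(finder.missing)  # ['chart.png']

# Mid-gray text on white falls just short of the 4.5:1 AA threshold.
print(contrast_ratio((119, 119, 119), (255, 255, 255)) >= 4.5)  # False
```

Checks like these only detect a barrier. Remediation, such as generating a useful image description, is the harder step where the AI capabilities discussed in this article would come in.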

Broader Implications and the Future of Digital Inclusion

The advent of Intelligent Digital Accessibility Assistance represents a significant potential leap forward in achieving true digital inclusion. As a daily user of artificial intelligence and a researcher focused on its evolving capabilities, I see the availability of systems like IDAA as a matter of "when," not "if." The current trajectory of AI development strongly suggests that such sophisticated assistants will become a reality in the coming years.

However, the integration of AI into accessibility solutions is not without its challenges. Significant concerns surrounding equity of access to these advanced AI systems, potential biases embedded within training data, the environmental impact of AI infrastructure, and the reliability of AI-driven assistance must be rigorously addressed. Ensuring that IDAA technologies are developed and deployed in a manner that benefits all individuals with disabilities, regardless of socioeconomic status or geographic location, will be paramount.

Despite these challenges, the potential for individuals with disabilities to partner with AI to expand their access to the digital world is immense. IDAA technologies could democratize access to information and services, foster greater independence, and enable fuller participation in an increasingly digital society. The development of IDAA systems necessitates a collaborative effort involving AI researchers, accessibility experts, developers, policymakers, and, most importantly, individuals with disabilities themselves. Their lived experiences and insights will be indispensable in shaping these technologies to be truly effective and empowering.

The implications extend beyond individual empowerment. Widespread adoption of IDAA could also serve as a powerful catalyst for improving baseline digital accessibility standards. As users increasingly rely on intelligent assistants to bridge accessibility gaps, the demand for inherently accessible digital content and platforms will likely grow, incentivizing developers to prioritize inclusive design principles from the outset. This feedback loop, driven by user demand and AI-powered adaptation, could accelerate the transition to a more universally accessible digital ecosystem.

The journey towards Intelligent Digital Accessibility Assistance is an exciting and complex one, promising to redefine the boundaries of what is possible for digital inclusion. As this field continues to mature, ongoing dialogue, rigorous research, and a steadfast commitment to ethical development will be crucial in harnessing the full potential of AI to create a digital world that is truly accessible to everyone. This exploration serves as a call to action, inviting stakeholders to engage in shaping this transformative future and to share their insights, concerns, and questions as we navigate this new frontier together.