The Open-Source Revolution: Google Sans Flex Unveiled

One of the week’s most impactful announcements came from Google, which released its formerly proprietary brand typeface, Google Sans Flex, under the SIL Open Font License (OFL). The move represents a strategic shift for the tech giant, extending its commitment to open-source contributions beyond software and into core brand assets. Google Sans Flex, a variable font, has been an integral part of Google’s product ecosystem, appearing across its Android interface, Pixel devices, and marketing materials, where it defines a consistent and recognizable visual identity.

The decision to open-source Google Sans Flex is not merely a gesture but a calculated step reflecting broader industry trends towards collaborative development and accessibility. Historically, Google has contributed significantly to the open-source font landscape with projects like Roboto, which became the default font for Android, and Noto, designed to support all languages and scripts. Google Sans Flex builds on this legacy by giving designers and developers fine-grained control over the typeface. As a variable font, it lets a single font file cover a continuous range of weights, widths, and other styles, each adjustable along its own design axis. This technology can streamline design workflows, reduce total file size when several styles are needed, and improve responsiveness across diverse digital platforms, from smartwatches to large-format displays.
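To make the idea of design axes concrete, here is a minimal sketch of how a requested style could be clamped to a font's supported range and turned into a CSS `font-variation-settings` declaration. The axis tags `wght` and `wdth` are registered OpenType axes, but the numeric ranges below are hypothetical placeholders, not the published ranges of Google Sans Flex, and the helper names are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Axis:
    """One design axis of a variable font."""
    tag: str        # four-character OpenType axis tag, e.g. "wght"
    minimum: float  # lowest value the font supports on this axis
    maximum: float  # highest value the font supports on this axis

    def clamp(self, value: float) -> float:
        """Pull a requested value back into the supported range."""
        return max(self.minimum, min(self.maximum, value))


# Hypothetical axis ranges, for illustration only.
AXES = {
    "wght": Axis("wght", 100, 900),
    "wdth": Axis("wdth", 75, 125),
}


def variation_settings(requested: dict[str, float]) -> str:
    """Build a CSS font-variation-settings declaration from axis values."""
    parts = []
    for tag, value in requested.items():
        axis = AXES[tag]
        parts.append(f'"{tag}" {axis.clamp(value):g}')
    return "font-variation-settings: " + ", ".join(parts) + ";"


# A width of 140 is outside the assumed range, so it is clamped to 125.
print(variation_settings({"wght": 650, "wdth": 140}))
# font-variation-settings: "wght" 650, "wdth" 125;
```

Any value between an axis's minimum and maximum is valid, which is why one file can stand in for what would otherwise be a family of separate static fonts.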

Industry analysts widely praised Google’s initiative. Dr. Eleanor Vance, a prominent typography expert and Professor of Digital Design at the London School of Design, commented, "Google’s release of Sans Flex under OFL is a watershed moment. It democratizes access to a high-quality, professional-grade typeface that has defined one of the world’s most recognizable brands. For independent designers and smaller studios, this isn’t just a free font; it’s a powerful tool that levels the playing field, fostering innovation and consistency across the web." The move is expected to accelerate the adoption of variable font technology, which, despite its advantages, has seen slower uptake compared to traditional static fonts. Projections from the Global Font Market Report 2025 indicated that variable font usage, while growing, still constituted less than 15% of all web font deployments, a figure Google’s action is anticipated to significantly boost.

The implications extend beyond mere aesthetics. For developers, integrating Google Sans Flex means consistent branding with minimal effort, reducing the overhead of managing multiple font files. For users, it promises a more cohesive and visually pleasing experience across a multitude of applications and devices. This release reinforces Google’s position not only as a technological innovator but also as a significant contributor to the global design commons.

AI’s Leap Forward: Midjourney V8 Redefines Visual Creation

Simultaneously, the generative AI landscape experienced a notable advancement with the release of Midjourney V8. This iteration of the popular image generation tool pushed the boundaries of AI-driven visual production, particularly through its introduction of native 2K resolution output. Since its initial public beta release, Midjourney has rapidly evolved, transitioning from producing dreamlike, often abstract imagery to generating highly detailed and photorealistic visuals. Each version, from V1 to V7, progressively enhanced image coherence, aesthetic quality, and prompt adherence.

Midjourney V8 represents a significant technical leap. The ability to generate images at native 2K resolution (2048 × 2048 pixels, or comparable dimensions at other aspect ratios) directly from a prompt substantially reduces the need for external upscaling tools, streamlining the workflow for professional artists and designers. Prior versions often required additional processing to achieve print-ready or high-fidelity digital assets, which could introduce artifacts or cost detail. V8’s enhanced resolution is complemented by improvements in its underlying neural networks, leading to more nuanced lighting, realistic textures, and a better grasp of complex compositional requests. Early reviews from the beta testing phase highlighted V8’s superior ability to render intricate details, such as individual strands of hair, delicate fabric textures, and subtle facial expressions, with unprecedented accuracy for an AI generator.
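One way to read "2K at other aspect ratios" is as a fixed pixel budget, the same total as a 2048 × 2048 square, redistributed to fit the requested shape. The sketch below works through that arithmetic. It is an assumption made for illustration, not a description of how Midjourney actually sizes its output, and the function name is hypothetical.

```python
import math


def dims_for_aspect(aspect_w: int, aspect_h: int,
                    total_pixels: int = 2048 * 2048) -> tuple[int, int]:
    """Return (width, height) with roughly `total_pixels` pixels
    at the aspect ratio aspect_w:aspect_h.

    Assumes a constant pixel budget across aspect ratios, which is
    an illustrative guess rather than documented behavior.
    """
    # width * height = total_pixels and width / height = aspect_w / aspect_h
    height = math.sqrt(total_pixels * aspect_h / aspect_w)
    width = height * aspect_w / aspect_h
    return round(width), round(height)


print(dims_for_aspect(1, 1))   # (2048, 2048)
print(dims_for_aspect(16, 9))  # (2731, 1536)
```

Under this reading, a 16:9 image is wider than 2048 pixels but shorter, so that the overall amount of detail stays about the same as in the square case.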

The implications for creative industries are profound. Concept artists, illustrators, advertisers, and game developers can leverage V8 for rapid prototyping, mood board generation, and even final asset creation. The speed at which high-quality visuals can be produced is poised to disrupt traditional production pipelines, offering cost efficiencies and accelerating creative cycles. Dr. Kenji Tanaka, a leading researcher in AI aesthetics at the Institute for Advanced Computing, noted, "Midjourney V8’s native 2K output is more than just a resolution bump; it signifies a maturation of generative AI to a point where it can reliably produce production-ready assets. This places immense pressure on traditional visual production methods, but also opens up entirely new avenues for creativity and efficiency."

However, the rapid advancement of tools like Midjourney V8 also reignites discussions around authorship, copyright, and the ethical implications of AI-generated content. Concerns regarding the potential for deepfakes, the displacement of human artists, and the provenance of training data continue to be pressing issues. The ongoing "professional-grade AI tool race," as described by industry observers, sees Midjourney in direct competition with other powerhouses like Adobe Firefly and Stable Diffusion. While Firefly emphasizes seamless integration within Adobe’s creative suite and ethical training data sourcing, Midjourney continues to push raw image quality and artistic versatility. The competition is driving innovation at an unprecedented pace, with the next frontier widely acknowledged as "workflow coherence"—the ability of these tools to understand and integrate into the full design process, from initial concept to final deployment.

Craft Triumphs: The Artistry Behind KATSEYE’s Brand Identity

Amidst the technological advancements, the week also celebrated the enduring power of human creativity and meticulous craft through compelling brand identity work. One standout example was the branding for KATSEYE, a nascent music group, designed by the Seoul-based agency HuskyFox. This project served as a potent reminder that, even in an era dominated by AI-generated visuals and open-source assets, editorial taste, strategic thinking, and bespoke design remain irreplaceable.

KATSEYE’s brand identity, while not described in detail here, is indicative of a broader trend in the entertainment industry, particularly K-pop and global music markets, where visual identity is paramount to an artist’s success. HuskyFox, known for its sophisticated and culturally resonant branding solutions, likely developed a comprehensive visual system encompassing logo design, typography, color palettes, motion graphics, and photographic styles that encapsulate KATSEYE’s unique artistic persona and target demographic. The emphasis on "craft still matters" suggests a design that goes beyond generic aesthetics, featuring thoughtful details, innovative layouts, and a cohesive narrative that resonates deeply with audiences.

The success of such branding projects in a saturated market highlights the critical role of human designers. While AI can generate countless visual permutations, it lacks the nuanced understanding of cultural context, emotional resonance, and strategic foresight that a human creative director brings. "AI can create beautiful images, but it cannot conceptualize a brand’s soul or translate an artist’s vision into a cohesive, impactful identity," stated Ms. Anya Sharma, Creative Director at BrandWorks Global. "The KATSEYE branding by HuskyFox exemplifies how human ingenuity in design creates authentic connections, building loyalty and differentiation in a crowded marketplace."

This week’s recognition of KATSEYE’s branding by HuskyFox serves as a vital counterpoint to the AI narrative. It underscores that while AI tools enhance efficiency and expand creative possibilities, they are fundamentally tools. The ultimate arbiter of aesthetic value, strategic effectiveness, and emotional impact remains the human designer. The intricate balance between leveraging AI for speed and precision, and relying on human intuition for taste and meaning, is becoming the hallmark of leading design practices.

The Under-the-Radar Game Changer: CSS Studio’s Workflow Transformation

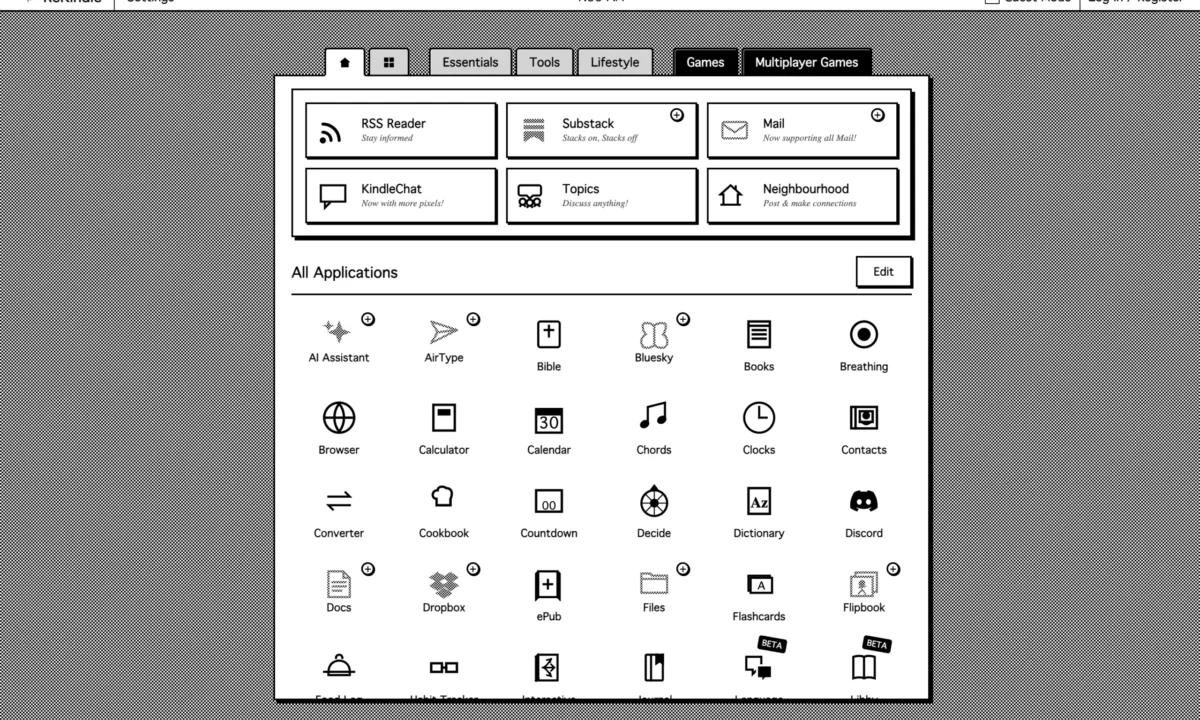

Beyond the high-profile releases, one tool quietly garnered significant attention as a "sleeper pick" of the week: CSS Studio. This platform, described as an "AI-native tool letting designers work visually while generating production-ready CSS," signals a pivotal shift in the design-to-development workflow. It sits within the rapidly expanding category of tools that leverage artificial intelligence to bridge the historical gap between design mockups and functional code.

The core premise of CSS Studio is to empower designers, particularly those who "ship their own work," to translate their visual concepts directly into clean, efficient, and production-ready Cascading Style Sheets (CSS). For years, designers have grappled with the challenge of hand-off—ensuring that their meticulously crafted designs are accurately translated by developers into code, often leading to discrepancies and iterative feedback loops. Tools like Figma, Sketch, and Adobe XD have streamlined the design phase, but the transition to code has remained a bottleneck.

CSS Studio addresses this by using AI to interpret visual design elements—such as spacing, typography, colors, and component layouts—and automatically generate the corresponding CSS code. This isn’t merely a template generator; the "AI-native" aspect implies a sophisticated understanding of design principles and best practices, aiming to produce semantic and optimized code rather than convoluted or inefficient outputs. The workflow implications are significant: designers can iterate visually, experiment with layouts and styles in real-time, and instantly see the corresponding code being generated. This reduces reliance on developers for minor styling changes, accelerates prototyping, and ensures pixel-perfect implementation of design intent.
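To illustrate the kind of output such a tool aims for, the sketch below maps a small set of visual properties to a CSS rule. It is a plain, deterministic stand-in and not CSS Studio's method: the function name, the property dictionary, and the `.card` selector are all hypothetical, and a real AI-assisted tool would infer these values from the design rather than receive them directly.

```python
def to_css_rule(selector: str, style: dict[str, str]) -> str:
    """Render a selector and a mapping of properties as one CSS rule.

    Property names use underscores (Python-friendly) and are
    converted to the hyphenated form CSS expects.
    """
    lines = [f"{selector} {{"]
    for prop, value in style.items():
        css_prop = prop.replace("_", "-")
        lines.append(f"  {css_prop}: {value};")
    lines.append("}")
    return "\n".join(lines)


# Hypothetical values a designer might set visually for a card component.
card = {
    "padding": "16px",
    "font_size": "1rem",
    "color": "#1a1a1a",
}

print(to_css_rule(".card", card))
```

The hard part that the article attributes to the "AI-native" approach is everything before this step: deciding which selectors, units, and groupings produce maintainable code from a free-form visual layout.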

"The potential of tools like CSS Studio is immense for individual designers and small teams," explained Mr. David Chen, a veteran full-stack developer and advocate for design-development synergy. "It democratizes web development, allowing designers with limited coding experience to bring their visions to life directly. While it won’t replace seasoned front-end developers for complex interactive systems, it drastically improves efficiency for static and component-based web interfaces." Data from a 2025 survey by WebDev Insights showed that over 40% of small to medium-sized businesses cited "design-to-code inefficiency" as a major project bottleneck, a statistic that tools like CSS Studio aim to mitigate.

This development highlights a broader industry movement towards low-code/no-code platforms infused with AI, enabling non-developers to create sophisticated digital products. CSS Studio, with its focus on generating high-quality CSS, positions itself as a critical component in this ecosystem, giving designers greater autonomy and fostering a more integrated creative process.

The Broader Landscape: Convergence and Coherence

The week of April 6-11, 2026, painted a vivid picture of a design industry in rapid evolution. The releases of Google Sans Flex and Midjourney V8, the recognition of KATSEYE’s branding, and the emergence of CSS Studio are not isolated events but interconnected threads in a larger narrative: the convergence of AI and design, the ongoing debate between open-source and proprietary ecosystems, and the redefinition of human creativity’s role.

The "professional-grade AI tool race" is accelerating, with fierce competition driving continuous innovation. While Midjourney V8 focused on resolution and quality, and Adobe Firefly emphasized integration and ethical data, the overarching goal for all players is "workflow coherence." This signifies a future where AI tools don’t just perform isolated tasks but seamlessly understand and assist through the entire design process—from ideation and conceptualization to prototyping, development, and deployment. This includes AI capable of generating entire design systems, anticipating user needs, and even adapting designs based on real-time performance data.

The ethical considerations surrounding AI remain central to this progression. As AI becomes more sophisticated, questions about intellectual property, the environmental impact of large language and image models, and the potential for job displacement will intensify. The industry is actively seeking frameworks and regulations to navigate these complex challenges, ensuring that technological advancement aligns with societal well-being and responsible innovation.

Ultimately, this standout week in design inspiration underscored a fundamental truth: technology is transforming the how, but human creativity still dictates the why. AI tools are becoming indispensable partners, automating mundane tasks and expanding the scope of what’s possible. However, the unique ability of human designers to imbue projects with cultural relevance, emotional depth, and strategic intent ensures that "editorial taste" and craftsmanship will continue to be invaluable differentiators. The synergy between advanced AI and human ingenuity is not just the present, but the undeniable future of design.

Looking Ahead: The Design-AI Symbiosis Continues

As the industry moves beyond this pivotal week, the trajectory is clear: the symbiosis between design and AI will only deepen. The next few months are expected to bring further advancements in AI’s ability to understand and generate multimodal content, including 3D models, video, and interactive experiences. The focus on workflow coherence will lead to more intelligent design assistants capable of learning individual designer preferences and predicting project needs. Google’s move may also inspire other companies to release proprietary assets under open licenses, further democratizing access to high-quality tools and resources. The ongoing dialogue between human craft and technological prowess will continue to shape the landscape, ensuring that design remains a vibrant, innovative, and deeply human endeavor, even as its tools become increasingly intelligent.