Adobe has announced new artificial intelligence (AI) image editing capabilities built directly into Photoshop and its standalone Firefly platform. Creators can now describe complex edits in natural language, draw on an image to target a specific area, and receive step-by-step guidance, which shifts the interaction from panel-and-slider adjustments to a more conversational experience. The move reinforces Adobe’s commitment to using generative AI to improve efficiency and to make sophisticated image manipulation accessible to both seasoned professionals and newer enthusiasts.

A New Era of Conversational Editing in Photoshop

At the forefront is the AI Assistant, now available in public beta for Photoshop on web and mobile. It adds a chat panel where users type or speak the edits they want, and Photoshop then carries them out automatically. A voice waveform UI at the bottom of the frame signals the shift: Photoshop is moving beyond its traditional interface toward a more interactive, responsive creative partner. The conversational approach aims to reduce the learning curve of complex tools and make advanced editing techniques more accessible.

The precision of this approach comes from AI Markup. The feature lets creators draw directly on their images, using arrows, circles, or rough outlines, and pair these visual cues with a text prompt. In one example, a fenced urban playground is transformed into a field of vibrant orange poppies, with the background replacement confined to the annotated areas. The drawn annotation gives the AI a concrete spatial referent, which is more specific than a vague phrase like "area behind the subject" and so makes complex edits more controllable and predictable. This combination of natural language processing and visual guidance marks a significant step forward for human-computer interaction in creative applications.
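Adobe has not published how AI Markup represents annotations internally, so the following is only an illustrative sketch of the general idea: a drawn shape can be rasterized into a per-pixel mask and paired with a text prompt. The `MarkupRequest` class, the `circle_mask` helper, and the tiny 8x8 canvas are all hypothetical names and values chosen for the example, not part of any Adobe API.

```python
from dataclasses import dataclass


@dataclass
class MarkupRequest:
    """Hypothetical pairing of a text prompt with a region of interest."""
    prompt: str
    mask: list[list[bool]]  # True where the edit is allowed to change pixels


def circle_mask(width: int, height: int, cx: int, cy: int, radius: int) -> list[list[bool]]:
    """Rasterize a drawn circle into a boolean mask, one entry per pixel."""
    r2 = radius * radius
    return [
        [(x - cx) ** 2 + (y - cy) ** 2 <= r2 for x in range(width)]
        for y in range(height)
    ]


def masked_fraction(mask: list[list[bool]]) -> float:
    """Share of the canvas that the annotation targets."""
    total = sum(len(row) for row in mask)
    selected = sum(cell for row in mask for cell in row)
    return selected / total


# Assumed example: a small circle drawn near the middle of an 8x8 canvas.
request = MarkupRequest(
    prompt="replace the background with a field of orange poppies",
    mask=circle_mask(width=8, height=8, cx=4, cy=4, radius=2),
)
print(f"{masked_fraction(request.mask):.2%} of pixels are targeted")
```

The point of the sketch is that the mask carries explicit coordinates, while the prompt carries intent; a phrase like "area behind the subject" would leave the first part to be guessed.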

Adobe Firefly Image Editor: Web-Based Generative Power

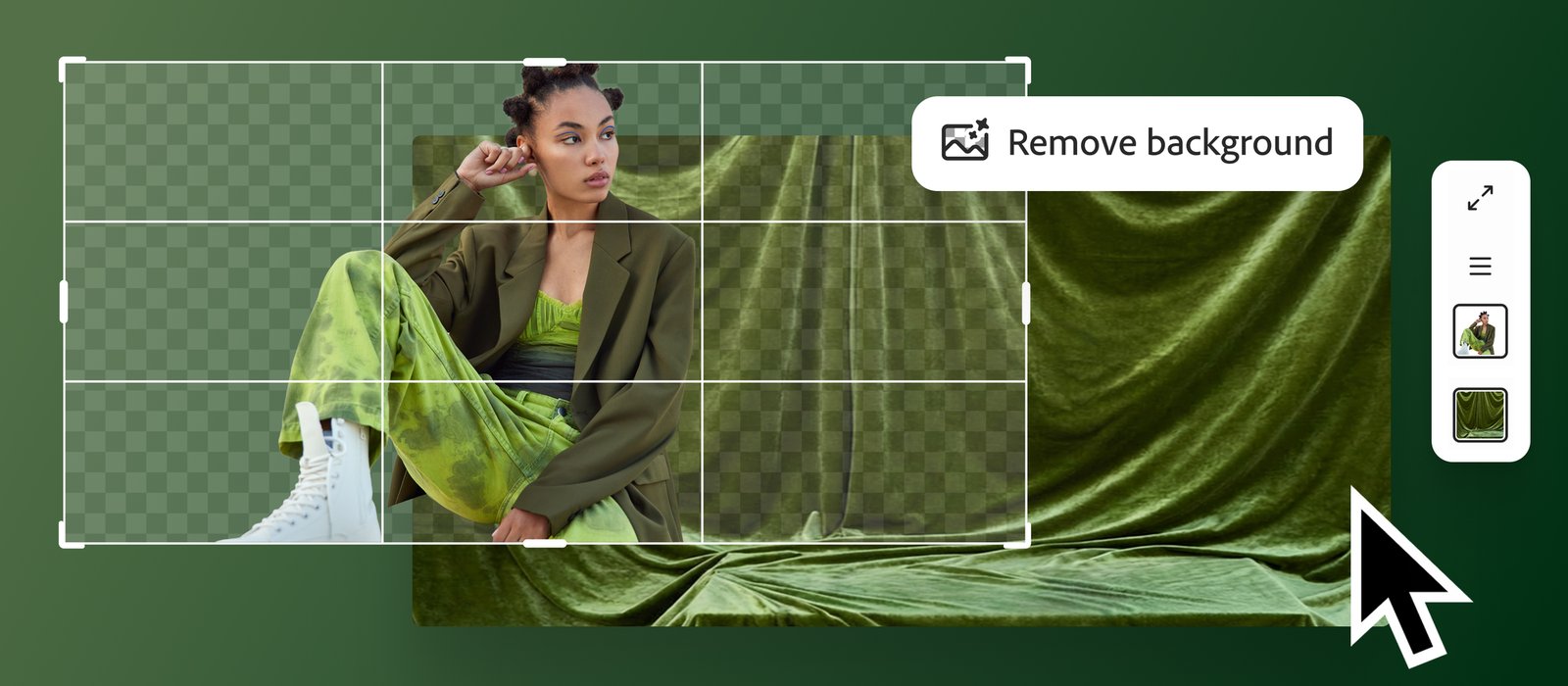

Complementing the Photoshop integration, the Adobe Firefly Image Editor expands the reach of generative AI with a suite of dedicated tools accessible directly in a web browser, requiring no Photoshop installation. This suite includes Generative Fill, Generative Remove, Generative Expand, Generative Upscale, and Remove Background. These tools are designed to tackle distinct editing tasks, offering focused functionality that minimizes ambiguity and streamlines the editing process.

For example, in press assets, a fashion shoot shows the Remove Background tool mid-process, with a checkerboard transparency grid expanding outward from the subject to indicate a precise selection. Similarly, a product still life featuring an orange coffee bag, a pink kettle, and a teal tile floor shows Generative Remove isolating an object within a circular selection, ready for erasure. These examples show the tools operating on individual elements rather than the entire frame, which provides the granular control that professional-grade output requires. Because Firefly runs in the browser, these generative capabilities are available to a broader audience, including those who prefer lightweight or cloud-centric workflows. Firefly’s generative tools are live globally, while the Photoshop AI features are currently in public beta, indicating a phased rollout of Adobe’s AI plans.

Adobe’s Strategic Vision: Empowering Creators and Democratizing Creativity

Adobe’s strategy behind these AI advancements is to offload the execution of complex tasks to AI so that creators can concentrate on creative decisions. The AI Assistant reflects this by offering step-by-step guidance in addition to automated changes. This "teach while it works" mode doubles as an educational tool, helping users understand the underlying techniques while they achieve the result they want. The approach aligns with Adobe’s long-standing mission to empower creators, from novices to seasoned professionals, by making sophisticated tools more intuitive and accessible.

The Firefly Image Editor’s browser-based accessibility is particularly significant. By removing the barrier of software installation, Adobe reaches the growing segment of creators who work primarily in lightweight or web-based environments. Each tool in the Firefly suite is purpose-built for a specific editing task, such as background removal, object erasure, or content expansion, rather than folded into a single, all-purpose prompt. This separation keeps the interface focused and reduces ambiguity when describing an edit, which makes results more predictable. It also allows each AI model to be improved and specialized over time.

Background and Evolution of AI in Adobe’s Ecosystem

Adobe has been a pioneer in digital imaging for decades, and Photoshop has more than 30 years of development behind it. While the current generative AI wave feels revolutionary, Adobe’s integration of AI has been incremental and strategic. Content-Aware Fill, introduced in Photoshop CS5 in 2010, was an early precursor to today’s generative capabilities, automatically filling selections with content synthesized from the surrounding image. More recently, Neural Filters in Photoshop showed how deep learning could apply complex stylistic and corrective edits with little effort. These earlier features laid the groundwork for the more sophisticated, conversational AI now being deployed.

The broader generative AI landscape has expanded rapidly, with models like DALL-E, Midjourney, and Stable Diffusion capturing public attention. Adobe’s entry into this space with Firefly in March 2023 was a deliberate and differentiated move. A key distinction for Firefly is Adobe’s stated commitment to ethical AI development, particularly concerning training data. Firefly was trained on a proprietary dataset of licensed content and Adobe Stock images, with the aim of mitigating copyright concerns and compensating artists whose work contributes to the models. This approach positions Adobe as a responsible participant in the AI creative domain and addresses industry concerns about data provenance and creator rights.

Market Impact and Industry Implications

These AI capabilities are likely to affect many sectors of the creative industry. For professional photographers, designers, and marketers, the AI Assistant and Firefly tools promise significant gains in efficiency. Tasks that previously required meticulous manual selection, masking, or intricate layer work can now be accomplished in seconds with a text prompt or a few drawn lines. This frees professionals to focus on conceptualization, client communication, and overall artistic direction rather than repetitive technical execution. Early estimates suggest that such AI integrations could reduce routine editing times by 30-50%, depending on the complexity of the task, which would translate into cost savings and increased output capacity for creative agencies and individual freelancers alike.
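To make the 30-50% range concrete, here is a small worked example. The 10-hour weekly baseline is a purely hypothetical figure chosen for illustration, not a number from Adobe or from any study, and `remaining_hours` is a helper defined only for this sketch.

```python
def remaining_hours(baseline_hours: float, reduction: float) -> float:
    """Hours left after cutting a baseline by a fractional reduction (0.30 means 30%)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be between 0 and 1")
    return baseline_hours * (1.0 - reduction)


# Hypothetical workload: 10 hours of routine editing per week.
baseline = 10.0
after_low_estimate = remaining_hours(baseline, 0.30)   # 30% reduction
after_high_estimate = remaining_hours(baseline, 0.50)  # 50% reduction

print(
    f"Remaining weekly editing time: "
    f"{after_high_estimate:.1f} to {after_low_estimate:.1f} hours"
)
```

Under that assumed baseline, the estimate corresponds to roughly three to five hours saved per week; the actual figure would depend on how much of a given workload is routine in the first place.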

The democratization of advanced editing techniques is another important implication. Complex image manipulations, once the domain of highly skilled Photoshop experts, are now within reach of casual users, small business owners, and content creators without extensive technical training. A student removing a background for a presentation, a small e-commerce seller cleaning up product photos, or a social media influencer adapting images for different platforms can now use AI to achieve professional-looking results with far less effort. This lowers the barrier to entry for high-quality visual content creation, potentially fostering a new wave of digital creativity.

From a competitive standpoint, Adobe’s aggressive push into generative AI reinforces its dominant position in the creative software market. While numerous startups and open-source projects offer compelling AI imaging tools, Adobe’s strength lies in integrating these capabilities seamlessly into an established, industry-standard ecosystem. Photoshop’s user base, estimated to be in the tens of millions globally, provides a massive platform for immediate adoption. Furthermore, the ethical framework around Firefly’s training data provides a distinct advantage, offering users greater confidence in the commercial viability and legal safety of their AI-generated content compared to models trained on less transparent datasets.

However, the rapid advancement of AI in creative tools also raises important considerations. The debate around authenticity and the potential for "deepfakes" continues to evolve. Adobe emphasizes responsible use, but the increasing ease of manipulating images calls for ongoing discussion of digital ethics and content provenance, and for robust detection mechanisms. The role of human creativity is also being redefined. AI can automate tasks, but the discerning eye, artistic vision, and conceptual skill of human creators remain indispensable. The future likely involves a complementary relationship in which AI acts as a co-pilot, augmenting human ingenuity rather than replacing it.

The Technical Underpinnings and Future Outlook

These features rest on large language models (LLMs) and diffusion models trained on large datasets to understand natural language instructions and generate realistic imagery. The conversational interface in Photoshop’s AI Assistant relies on natural language processing (NLP) to interpret user requests, while the generative capabilities in both Photoshop and Firefly use image generation models for tasks like content creation, removal, and expansion. AI Markup, in particular, is an example of multimodal AI: it combines visual input (drawings) with textual input (prompts) to achieve greater control and accuracy.
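One common way that mask-guided generative editing keeps changes local is to blend the model's output back into the original image using the mask as a per-pixel weight. The sketch below shows that generic compositing step on tiny grayscale arrays. It is an assumption about the general technique, not a description of Adobe's pipeline, and the `composite` function and sample values are invented for illustration.

```python
Image = list[list[float]]  # grayscale pixel values in the range 0.0 to 1.0


def composite(original: Image, generated: Image, mask: Image) -> Image:
    """Blend per pixel: mask 1.0 takes the generated value, mask 0.0 keeps the original."""
    return [
        [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


# Assumed 2x3 example: only the middle column is marked for editing,
# and the lower middle pixel has a soft (feathered) mask edge.
original = [[0.2, 0.2, 0.2], [0.2, 0.2, 0.2]]
generated = [[0.9, 0.9, 0.9], [0.9, 0.9, 0.9]]
mask = [[0.0, 1.0, 0.0], [0.0, 0.5, 0.0]]

result = composite(original, generated, mask)
for row in result:
    print([round(value, 2) for value in row])
```

Pixels outside the mask are returned unchanged, which is why a drawing plus a prompt can alter one element without touching the rest of the frame.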

Looking ahead, Adobe is expected to continue integrating AI across its entire Creative Cloud suite. Conversational interfaces are likely to reach other applications such as Illustrator, Premiere Pro, and After Effects, alongside further refinement of the generative capabilities. The focus will likely remain on enhancing user control, improving the fidelity and artistic quality of AI-generated content, and ensuring the ethical deployment of these technologies. The goal is an era in which creative tools are not just instruments but intelligent collaborators that understand intent, anticipate needs, and help creators work with greater speed and ease. The ongoing public beta for Photoshop’s AI Assistant reflects Adobe’s commitment to iterative development, using real-world feedback to refine these features before their full global release.