In an era characterized by an unprecedented volume of digital information, the ability to conduct rigorous, accurate research has shifted from a specialized academic skill to a fundamental requirement for professional content creators. A reader's first encounter with a piece of content is a critical moment for brand authority; a single factual error or a reliance on outdated data can quickly erode audience trust. As digital platforms fill with AI-generated text and unverified claims, a structured research methodology has become a key differentiator for creators who want to build long-term credibility and solve complex problems for their audiences.

The Evolution of Information Retrieval and the Credibility Crisis

The landscape of digital research has changed significantly over the last two decades. In the early years of the commercial internet, scarcity of information was the main obstacle. Today, the challenge is information overload and the resulting difficulty of verifying whether a source can be trusted. Industry surveys point to a widening "trust deficit" in digital media, with a growing share of consumers reporting skepticism toward unverified online sources. This shift has pushed professional creators to adopt more sophisticated investigative techniques, moving beyond surface-level keyword searches toward a multi-layered verification process.

The chronology of this shift can be traced back to the 2000s, when academic institutions began formalizing "information literacy" frameworks. One of the most enduring models is the CRAAP test, developed in 2004 by Sarah Blakeslee and her team of librarians at California State University, Chico. It has recently seen a resurgence in the professional content sector: what was once a tool for university students is now a vital rubric for digital publishers navigating a landscape rife with misinformation and "hallucinated" data points from generative AI.

Strategic Frameworks for Modern Content Research

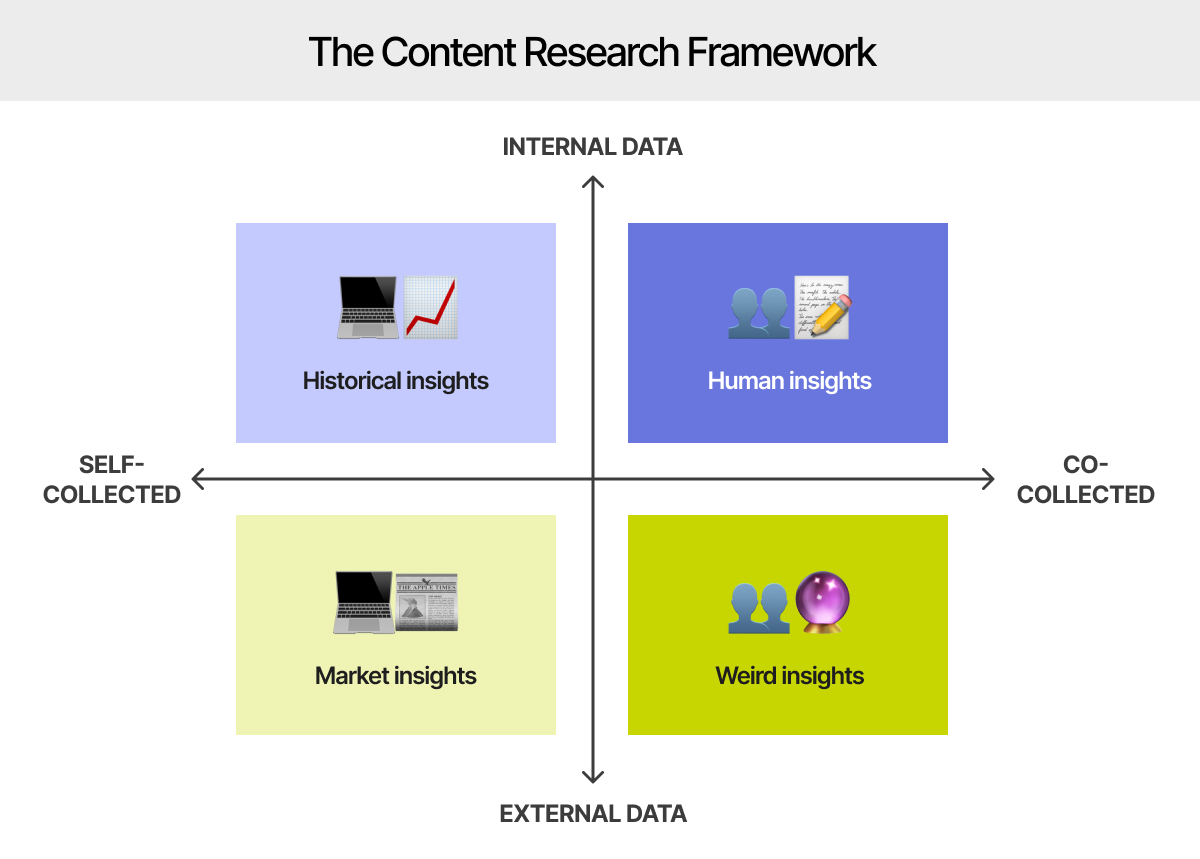

Professional research is not a linear task but a cyclical process that requires defined goals and organizational rigor. To build brand credibility, creators are increasingly adopting a "Data-Driven Content Framework," which prioritizes empirical evidence over anecdotal speculation. This process typically begins with the definition of specific informational goals: identifying the exact problem the content intends to solve and the specific questions the audience needs answered.

Once goals are established, the gathering phase utilizes a mix of primary and secondary sources. Primary research—such as interviews with subject matter experts or original data analysis—provides the highest level of authority. Secondary research involves the synthesis of existing reports, white papers, and peer-reviewed studies. The modern researcher must then "connect the dots," organizing disparate pieces of information into a cohesive narrative that offers unique insights rather than merely repeating existing tropes. This stage also involves a thorough "competitive scoping," where the researcher analyzes how other authorities in the niche have approached the topic, identifying gaps in the current discourse that their own content can fill.
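Competitive scoping lends itself to a simple set comparison. The sketch below is a minimal illustration, assuming the researcher has already tagged the audience's questions, and each competitor's coverage, with short topic labels by hand; the function names, domains, and topics are hypothetical.

```python
from __future__ import annotations


def find_gaps(audience_questions: set[str], competitor_coverage: dict[str, set[str]]) -> set[str]:
    """Return audience questions that no competitor currently addresses."""
    # Union of every topic any competitor covers (empty set if there are none).
    covered: set[str] = set().union(*competitor_coverage.values())
    return audience_questions - covered


def coverage_counts(audience_questions: set[str], competitor_coverage: dict[str, set[str]]) -> dict[str, int]:
    """Count how many competitors cover each question; low counts flag thin coverage."""
    return {
        q: sum(q in topics for topics in competitor_coverage.values())
        for q in audience_questions
    }


if __name__ == "__main__":
    # Hypothetical inputs, labeled manually during research.
    questions = {"pricing", "setup time", "data privacy", "integrations"}
    competitors = {
        "competitor-a.example": {"pricing", "integrations"},
        "competitor-b.example": {"pricing"},
    }
    print(sorted(find_gaps(questions, competitors)))  # ['data privacy', 'setup time']
    print(coverage_counts(questions, competitors)["integrations"])  # 1
```

Questions with a count of zero are open gaps; a count of one suggests a topic that is covered, but thinly.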

Technical Precision: Leveraging Advanced Google Search Operators

Despite the rise of specialized databases, Google remains the starting point for most digital research. However, its standard results are often shaped by search engine optimization (SEO) rather than by objective relevance alone. To work around these limitations, professional researchers use "search operators": special commands that filter results with surgical precision. Joshua Hardwick, Head of Content at Ahrefs, has highlighted several operators that streamline the investigative process:

- The "site:" Operator for Targeted Audits: This command limits results to a specific domain or top-level domain. For example, "site:gov" or "site:edu" restricts results to .gov or .edu domains, which are reserved largely for U.S. government bodies and accredited U.S. post-secondary institutions. Content managers also use it to spot indexing issues on their own domains or to locate pages on a competitor’s site that are hard to reach through standard navigation.

- The "related:" Operator for Market Analysis: This tool identifies websites that Google’s algorithms categorize as similar to a specific URL. This is essential for understanding a brand’s digital neighborhood and identifying peers or competitors within a specific niche.

- The "filetype:" Operator for Document Retrieval: Much of the world’s most valuable data is stored in PDF, PPTX, or XLSX formats rather than standard HTML pages. By using this operator, researchers can bypass blog posts and go directly to white papers, census reports, or technical manuals.

- Temporal Filters ("before:" and "after:"): In fast-moving industries like technology or finance, a source from 2022 may already be obsolete. Using date-specific operators allows researchers to see how a topic has evolved over a specific period or to find the most current data available.

- Combined Boolean Logic: By combining operators such as "inurl:" with parentheses and the "OR" operator, researchers can search several related topics at once on specific platforms like Quora or Reddit to identify the "pain points" and frequently asked questions of a particular community.
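Because these operators are plain text, combining them is mostly string assembly. The following Python sketch builds query strings from the operators listed above and URL-encodes them for Google's ordinary results page. The function names are hypothetical, the code does not call any Google API, and Google may change how it interprets individual operators or parenthesized groups over time.

```python
from __future__ import annotations

from datetime import date
from urllib.parse import quote_plus


def _phrase(term: str) -> str:
    # Quote multi-word terms so they are matched as exact phrases.
    return f'"{term}"' if " " in term else term


def _any_of(items: list[str]) -> str:
    # One item stands alone; several become a parenthesized OR group.
    return items[0] if len(items) == 1 else "(" + " OR ".join(items) + ")"


def build_query(
    terms: list[str],
    sites: list[str] | None = None,
    inurl: str | None = None,
    filetype: str | None = None,
    after: date | None = None,
    before: date | None = None,
) -> str:
    """Combine keywords with optional site:, inurl:, filetype:, after:, before:."""
    if not terms:
        raise ValueError("at least one search term is required")
    parts = [_any_of([_phrase(t) for t in terms])]
    if sites:
        parts.append(_any_of([f"site:{s}" for s in sites]))
    if inurl:
        parts.append(f"inurl:{inurl}")
    if filetype:
        parts.append(f"filetype:{filetype}")
    if after:
        parts.append(f"after:{after.isoformat()}")
    if before:
        parts.append(f"before:{before.isoformat()}")
    return " ".join(parts)


def search_url(query: str) -> str:
    # URL-encode the query for Google's standard /search?q= page.
    return "https://www.google.com/search?q=" + quote_plus(query)


if __name__ == "__main__":
    # Government PDFs published after a given date.
    print(build_query(["labor force participation"], sites=["gov"],
                      filetype="pdf", after=date(2023, 1, 1)))
    # -> "labor force participation" site:gov filetype:pdf after:2023-01-01

    # Community questions on two forums at once.
    print(build_query(["standing desk", "ergonomic chair"],
                      sites=["reddit.com", "quora.com"]))
    # -> ("standing desk" OR "ergonomic chair") (site:reddit.com OR site:quora.com)
```

Keeping each operator as a separate parameter makes it easy to rerun the same search with a different date window when checking whether a source is still current.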

The CRAAP Test: A Rubric for Source Evaluation

Identifying a source is only the first step; evaluating its legitimacy is the more rigorous requirement. Academic institutions, including Purdue Global, emphasize that a source’s credibility is inextricably linked to the reputation of its creator and the transparency of its methodology. The CRAAP framework provides a comprehensive checklist for this evaluation:

- Currency: The timeliness of the information. Researchers must determine if the topic requires the most recent data (such as in medical or tech fields) or if older, foundational sources are acceptable.

- Relevance: The importance of the information for the specific needs of the audience. This involves checking if the source is at an appropriate level (not too elementary or too advanced) for the intended reader.

- Authority: The source of the information. This requires an investigation into the author’s credentials, organizational affiliations, and history of peer-reviewed contributions.

- Accuracy: The reliability, truthfulness, and correctness of the content. This is verified by cross-referencing the information with other independent sources and checking for a professional tone free of grammatical errors or emotional sensationalism.

- Purpose: The reason the information exists. Researchers must discern if the source’s intent is to inform, teach, sell, entertain, or persuade. Understanding the underlying bias—whether political, ideological, or commercial—is crucial for maintaining an objective stance in one’s own work.
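Teams that apply the checklist to many sources sometimes turn it into a score sheet. The Python sketch below is one hypothetical way to do that: the 1-to-5 scale, the default `threshold` of 18, and the rule that any criterion scored 2 or lower rejects the source are illustrative assumptions, not part of the CRAAP test itself.

```python
from __future__ import annotations

from dataclasses import dataclass, fields


@dataclass
class CraapScore:
    """One source's scores on the five CRAAP criteria.

    Assumption: each criterion is rated 1 (poor) to 5 (strong); the CRAAP
    test itself is a checklist and does not prescribe this scale.
    """

    currency: int
    relevance: int
    authority: int
    accuracy: int
    purpose: int

    def _values(self) -> dict[str, int]:
        return {f.name: getattr(self, f.name) for f in fields(self)}

    def __post_init__(self) -> None:
        for name, value in self._values().items():
            if not 1 <= value <= 5:
                raise ValueError(f"{name} must be between 1 and 5, got {value}")

    def total(self) -> int:
        return sum(self._values().values())

    def weakest(self) -> list[str]:
        """Names of the lowest-scoring criteria, in CRAAP order."""
        values = self._values()
        lowest = min(values.values())
        return [name for name, value in values.items() if value == lowest]

    def verdict(self, threshold: int = 18, floor: int = 2) -> str:
        """Hypothetical decision rule for whether to cite the source."""
        # Any single criterion at or below `floor` disqualifies the source.
        if min(self._values().values()) <= floor:
            return "reject"
        # Otherwise the total decides; borderline sources need corroboration.
        return "use" if self.total() >= threshold else "corroborate first"


if __name__ == "__main__":
    source = CraapScore(currency=4, relevance=5, authority=4, accuracy=3, purpose=4)
    print(source.total(), source.weakest(), source.verdict())  # 20 ['accuracy'] use
```

The per-criterion floor keeps a strong total from masking a single serious weakness, such as an anonymous author with no verifiable credentials.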

Industry Implications and the Rise of Information Accountability

The shift toward higher research standards has significant implications for the "creator economy." As Google's ranking systems increasingly aim to reward content that demonstrates E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness), a concept drawn from its Search Quality Rater Guidelines, creators who neglect rigorous research risk declining visibility and engagement. Conversely, those who invest in deep research may see higher conversion rates, as audiences are more likely to subscribe to and support creators who consistently provide accurate, high-value information.

Experts in SEO and digital marketing suggest that we are entering an era of "Information Accountability." In this environment, the "Smooth Operator" (the researcher who can navigate complex data sets and verify sources quickly and accurately) becomes a highly valued asset. This trend is also influencing how brands interact with their audiences: there is a move away from "clickbait" and toward "thought leadership," which is built on a foundation of superior research.

Future Outlook: Research in the Age of Artificial Intelligence

As generative AI becomes more integrated into the research process, the role of the human researcher is evolving from a "finder" of information to a "verifier" of information. While AI can summarize large documents or suggest search queries, it is currently prone to "hallucinations"—the generation of false information presented as fact. This technological limitation makes the human application of the CRAAP test and the use of advanced search operators more critical than ever.
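Part of the verifier's job can be made systematic: before publishing a claim suggested by an AI tool, check how many independent sites actually support it. The Python sketch below treats each distinct host name as one independent source and flags claims backed by fewer than two. That proxy is an assumption, and a rough one, since separate domains can share an owner or republish the same wire story; the function names and example URLs are hypothetical, and the check supplements the CRAAP evaluation rather than replacing it.

```python
from __future__ import annotations

from urllib.parse import urlparse


def independent_domains(urls: list[str]) -> set[str]:
    """Collapse supporting URLs to distinct host names."""
    hosts: set[str] = set()
    for url in urls:
        host = urlparse(url).netloc.lower()
        # Treat "www.example.gov" and "example.gov" as the same source.
        if host.startswith("www."):
            host = host[len("www."):]
        hosts.add(host)
    return hosts


def unverified_claims(claims: dict[str, list[str]], min_sources: int = 2) -> list[str]:
    """Return claims supported by fewer than `min_sources` distinct hosts.

    Subdomains are not merged (blog.example.com and example.com count
    separately), so this is only a rough proxy for independence.
    """
    return [
        claim
        for claim, urls in claims.items()
        if len(independent_domains(urls)) < min_sources
    ]


if __name__ == "__main__":
    # Hypothetical claims and URLs gathered while checking an AI-drafted outline.
    claims = {
        "claim-a": ["https://www.example.gov/report", "https://example.edu/study"],
        "claim-b": ["https://blog.example.com/post", "https://blog.example.com/follow-up"],
        "claim-c": [],
    }
    print(unverified_claims(claims))  # ['claim-b', 'claim-c']
```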

The future of digital content will likely be dominated by those who can synthesize the efficiency of AI with the skepticism and ethical standards of traditional journalism. By mastering the art of the research process, creators do more than just produce content; they contribute to a healthier digital ecosystem where facts are prioritized over noise and trust is the primary currency. The commitment to being an "upright researcher" is no longer just a professional preference; it is the cornerstone of sustainable digital growth and the primary safeguard against the rising tide of global misinformation.