The landscape of information retrieval is undergoing a significant transformation, driven by the rapid advancements in artificial intelligence. In February 2024, Fast Company [1] highlighted the burgeoning presence of conversational AI search engines, tools powered by large language models (LLMs) that are fundamentally reshaping how users interact with the vast expanse of the internet. These innovative systems aim to provide direct, summarized answers to user queries, moving beyond the traditional model of presenting a list of links. This shift, while promising enhanced usability, also introduces complex considerations regarding trust, explainability, and the subtle yet profound impact on user behavior and critical thinking.

The Genesis of a New Search Paradigm

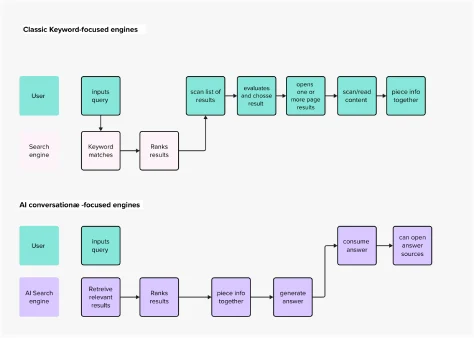

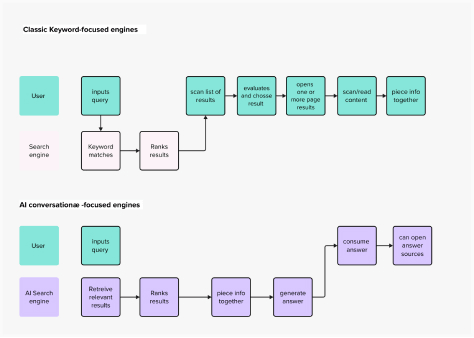

The current surge in conversational AI search engines is a direct consequence of the generative AI revolution, which burst into public consciousness with the widespread adoption of tools like ChatGPT in late 2022. This period marked a critical inflection point, demonstrating the power of LLMs to understand and generate human-like text and opening doors for applications far beyond simple chatbots. Prior to this, search engines predominantly operated on a keyword-matching paradigm, epitomized by Google’s long-standing dominance. Users would input keywords, and the engine would return a ranked list of web pages deemed most relevant, leaving users to sift through results, evaluate sources, and synthesize information themselves.

Academic and scientific research has been at the forefront of this wave of experimentation. In July 2023, Nature reported [2] a "frenzied enthusiasm" for what many perceive as a paradigm shift in information access. This excitement stems from the potential to dramatically streamline the search process, offering a more intuitive and conversational interface. Companies like Perplexity AI and Andi have emerged as early pioneers in this space, alongside established tech giants such as Microsoft, with its Copilot (formerly Bing Chat), and Google, with its Search Generative Experience (SGE), all vying to integrate generative AI capabilities into their search offerings. This movement is a clear example of a "technology push" [3], where the sheer power of a new technological capability, in this case generative AI, drives market innovation and product development, often before its long-term implications for users and society are fully understood.

Redefining the User Experience: A New Mental Model for Search

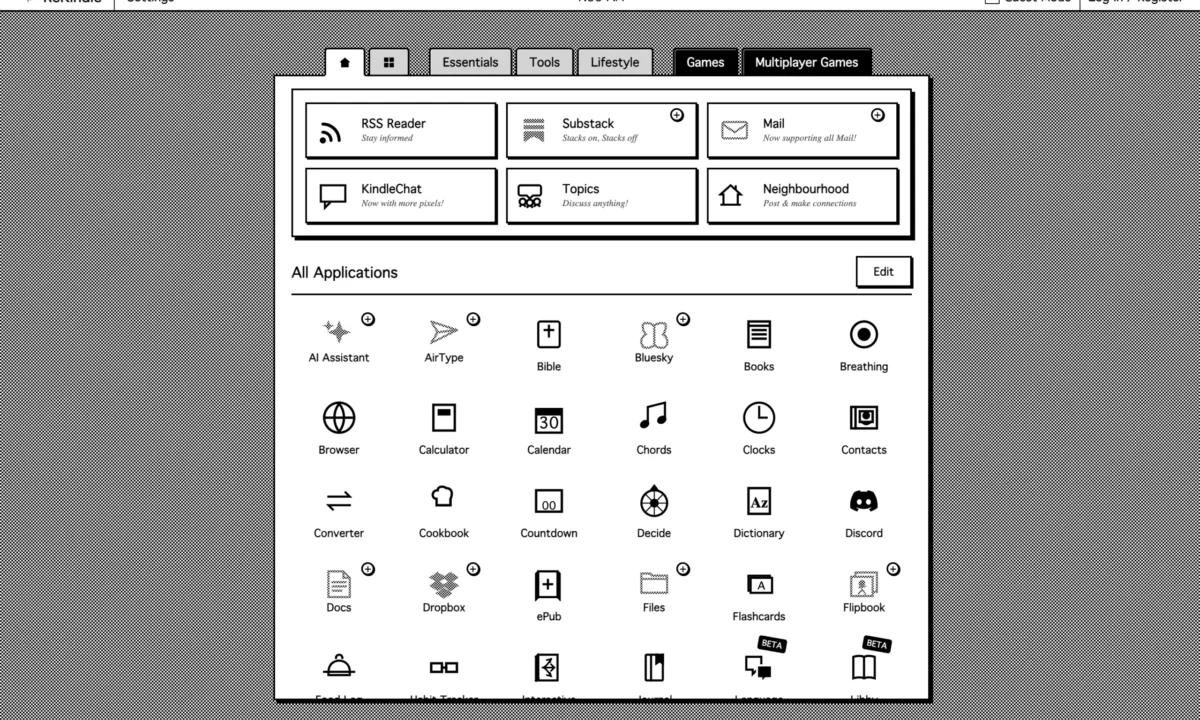

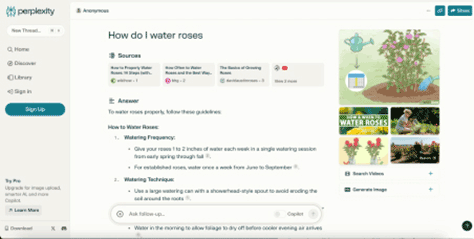

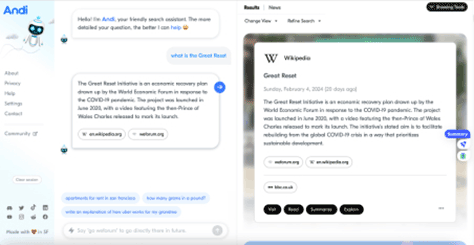

Conversational AI search engines are not merely incremental improvements; they propose a fundamentally different mental model for how users conceive of and interact with search. Platforms like Perplexity AI, as seen on its homepage, still feature familiar components such as an input field for queries and a central display for results. However, the underlying interaction pattern is less about navigating a directory and more about engaging in a dialogue, akin to conversing with a knowledgeable assistant. This conversational chatbot interface, popularized by ChatGPT, has become "front-of-mind" for many users, influencing expectations for how AI should function.

The radical departure from classic search lies in the output. Instead of a list of links, conversational AI search engines retrieve and summarize information from multiple sources, presenting a direct answer. Perplexity AI, for instance, collates snippets of information, directly addressing the user’s question. This approach significantly simplifies the user’s journey. In the traditional model, users would need to evaluate each individual search result, make educated guesses about content, open new pages, and then scan through dense text to find the answer. With AI search, the cognitive load is dramatically reduced. The system performs the synthesis, delivering what appears to be a definitive answer.
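The contrast between the two output models can be sketched in a few lines of Python. This is a toy illustration, not how Perplexity AI or any production engine works: the corpus, the URLs, and the function names `classic_search` and `answer_search` are hypothetical, and simple word overlap stands in for real ranking and LLM summarization.

```python
import re

# Hypothetical mini-corpus standing in for indexed web pages.
CORPUS = {
    "https://example.com/stains": "Blot a coffee stain with cold water. Then apply a little dish soap.",
    "https://example.com/recipes": "A basic pancake recipe uses flour, eggs, and milk.",
    "https://example.com/laundry": "Cold water keeps a coffee stain from setting in cotton fabric.",
}

STOPWORDS = {"a", "the", "to", "how", "with", "and", "in", "of", "then"}


def tokenize(text):
    """Lowercase the text and return its set of non-stopword words."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def score(query, text):
    """Relevance as the number of shared words (a stand-in for real ranking)."""
    return len(tokenize(query) & tokenize(text))


def classic_search(query, corpus):
    """Classic model: return a ranked list of URLs; the user reads the pages."""
    ranked = sorted(corpus, key=lambda url: score(query, corpus[url]), reverse=True)
    return [url for url in ranked if score(query, corpus[url]) > 0]


def answer_search(query, corpus, top_k=2):
    """Answer model: collate the best-matching sentences with numbered citations."""
    candidates = []
    for url, text in corpus.items():
        for sentence in re.split(r"(?<=[.!?])\s+", text):
            relevance = score(query, sentence)
            if relevance > 0:
                candidates.append((relevance, sentence, url))
    candidates.sort(key=lambda c: c[0], reverse=True)

    sources, parts = [], []
    for _, sentence, url in candidates[:top_k]:
        if url not in sources:
            sources.append(url)
        parts.append(f"{sentence} [{sources.index(url) + 1}]")
    return " ".join(parts), sources


query = "how to remove a coffee stain"
print(classic_search(query, CORPUS))   # a list of links to evaluate
answer, sources = answer_search(query, CORPUS)
print(answer)                          # one collated answer with citations
print(sources)
```

In the first function the user still does the reading and the synthesis. In the second, the system decides which sentences matter, which is exactly the step that reduces cognitive load and also hides the selection from the user.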

This direct Q&A pattern aligns closely with natural human conversation, fulfilling a crucial usability heuristic: "Match between system and the real world" [4]. By presenting information in a format that mirrors how humans naturally seek and receive answers, these AI-powered engines make the search experience more usable. Other usability heuristics are also addressed:

- Visibility of system status: While not always fully transparent in how it arrives at an answer, the presentation of a clear, concise response provides a sense of completion.

- Flexibility and efficiency of use: Experienced users can obtain answers much faster, bypassing the need to click through multiple links.

- Recognition rather than recall: Users don’t need to remember which search terms yielded the best results or specific URLs; the system delivers the synthesized information directly.

- Error prevention (to some extent): By pre-processing and summarizing, the system aims to prevent users from needing to interpret potentially conflicting information across various sources.

Andi, another conversational AI search engine, pushes this conversational mental model further in its layout and interactions, often prioritizing a chatbot-like display. Yet, as the original article points out, its information architecture—which displays a brief answer alongside a list of sources, encouraging users to click through—remains conceptually closer to the traditional SERP. This highlights a spectrum of design choices within the conversational AI search space, with varying degrees of deviation from established mental models.

Broader Implications: Trust, Explainability, and Human Agency

Despite the undeniable improvements in usability, the rise of conversational AI search engines brings forth a host of significant implications, particularly concerning trust, explainability, and the potential erosion of human critical thinking. When using tools like Perplexity AI, users are effectively delegating a substantial amount of decision-making to the AI. The system selects, extracts, and summarizes content, implicitly determining which sources are most relevant and how information should be presented. This process often operates as a "black box," neglecting a crucial aspect of AI explainability [5]. Users are not typically informed why certain sources were chosen over others, or how the synthesis was performed, which directly impacts the trustworthiness of the system.

Francesca Rossi, IBM’s AI Ethics Global Leader, underscores this point, stating that AI raises concerns about "its ability to make important decisions in a way that humans would perceive as fair… and the capability to explain its reasoning and decision-making" [6]. The absence of clear explainability fosters an environment where users might uncritically accept AI-generated answers, regardless of their accuracy or underlying biases. This risk is increasingly recognized in the enterprise world, where impending regulations like the EU AI Act and the prospect of substantial fines or reputational damage are driving a focus on responsible AI development. However, for individual "Internauts" (habitual and often highly skilled users of the internet), the implications for critical discernment are less immediately apparent but potentially more insidious.

As Gen Z expert Roberta Katz eloquently puts it, "first you make the building and then the building makes you" [7]. This analogy extends powerfully to digital architectures; the design of our apps and IT systems profoundly shapes user behavior. The danger with tools that offer ready-made, authoritative-sounding answers is that users may become accustomed to convenience, forgoing the crucial step of questioning accuracy or veracity. While these tools often provide links to source documents, it remains difficult for users to ascertain:

- Which sources are genuinely the most authoritative or reliable for a given query.

- The rationale behind the AI’s selection of particular sources and its subsequent summarization process.

This deeper delegation of decision-making inherent in generative AI could, in the long run, impact users’ critical thinking skills. The analogy of text messaging contributing to "sloppy writing" [7] serves as a cautionary tale. While the process of sifting through Google’s SERP, comparing findings, and synthesizing information manually might be more tedious, it constitutes a "healthy exercise for our analytical and creative skills," as the original article notes. This active engagement discourages uncritical over-reliance on AI models.

If the prevailing mental model of a search engine evolves into a tool that "always knows the right answer," the societal implications could be enormous. For trivial searches, like finding a recipe or removing a stain, this might be inconsequential. However, for critical knowledge in fields such as policymaking, scientific research, or civil discourse, the ability to discern truth from falsehood is paramount. The potential for the uncritical acceptance of AI-generated content, especially given the phenomenon of "AI hallucinations" [11] (where LLMs generate factually incorrect but plausible-sounding information), poses a significant threat to information integrity and public understanding.

Furthermore, the rise of conversational AI search engines carries profound economic and ecosystemic implications, particularly for content creators and publishers. Kevin Roose of The New York Times succinctly articulated this concern: "If AI search engines can reliably summarize what’s happening in Gaza or tell users which toaster to buy, why would anyone visit a publisher’s Web site ever again?" [8] This question strikes at the heart of the internet’s content economy, which largely relies on traffic to websites for advertising revenue and subscription models. If AI search engines become the primary interface for information consumption, bypassing direct visits to source sites, it could destabilize the very ecosystem that feeds these AI models with data.

Andi’s design, which prominently displays source links alongside a brief answer, offers a potential mitigation strategy. By encouraging users to click through to original sources, it demonstrates how UX and UI design can influence user behavior towards more desirable outcomes, supporting the content ecosystem while still offering AI-powered convenience.

Navigating the Future: Towards Trustworthy AI Search

The trajectory suggests that AI will indeed be the future of search. Generative capabilities offer numerous ways to enhance the user experience and expedite knowledge acquisition. The more pertinent questions, therefore, are:

- How can we develop AI search engines that are inherently trustworthy and transparent?

- How can we design these systems to foster, rather than diminish, human critical thinking and agency?

A study published in Nature [9] highlighted the conflicting views on AI science search engines among researchers, with some praising their utility and accuracy, while others expressed profound distrust due to inconsistent retrieval performance. This underscores that a lack of trust is the central impediment to widespread, confident AI adoption.

To build trustworthy AI search engines, two key areas of focus are essential:

- Technological Advancement and Ethical Design:

  - Accuracy and Reliability: This involves rigorous data curation, advanced retrieval-augmented generation (RAG) techniques [10] to ground LLM outputs in verifiable sources, and continuous evaluation of factual consistency. Addressing AI hallucinations is paramount.

  - Explainability and Transparency: Implementing mechanisms that articulate how the AI arrived at its answer, why certain sources were prioritized, and the confidence level of the generated information. This could involve highlighting key sentences from sources, providing a confidence score, or allowing users to delve deeper into the AI’s reasoning process (e.g., GraphRAG [12]).

  - Bias Mitigation: Actively identifying and reducing biases in training data and algorithmic decision-making to ensure fair and equitable information retrieval.

- Regulatory Frameworks and User Education:

  - Policy and Regulation: As new regulations, such as the EU AI Act, emerge to ensure safer and ethical uses of AI, these frameworks will increasingly dictate design and deployment standards for AI search engines. These regulations will likely mandate transparency, accountability, and robust risk assessments.

  - User Empowerment: Designing interfaces that explicitly encourage critical engagement. This might include clear disclaimers about AI-generated content, prominent links to original sources, and features that allow users to easily verify information or explore alternative perspectives. The goal is to cultivate a user base that understands the capabilities and limitations of AI.

  - Education and Media Literacy: Complementing technological and regulatory efforts with broader educational initiatives to enhance digital literacy and critical thinking skills in an AI-driven information environment.
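The explainability mechanisms mentioned above (highlighting key source sentences and attaching a confidence score) can be made concrete with a minimal sketch. Everything here is an assumption made for illustration: the names `explain` and `confidence`, the sample texts, and above all the scoring, which uses plain word overlap rather than an actual RAG pipeline or a calibrated model. It is meant only to show what such a report could contain.

```python
import re

STOPWORDS = {"a", "an", "the", "is", "are", "of", "to", "in", "and", "by", "it"}


def content_words(text):
    """Lowercased words of the text, minus a small stopword list."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def split_sentences(text):
    """Naive sentence splitter on terminal punctuation."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def explain(answer, sources):
    """For each sentence of the answer, find the source sentence that shares the
    largest fraction of its content words, and record that fraction as support."""
    report = []
    for claim in split_sentences(answer):
        claim_words = content_words(claim)
        best = {"claim": claim, "source": None, "evidence": None, "support": 0.0}
        for name, text in sources.items():
            for sentence in split_sentences(text):
                overlap = len(claim_words & content_words(sentence))
                support = overlap / len(claim_words) if claim_words else 0.0
                if support > best["support"]:
                    best.update(source=name, evidence=sentence, support=support)
        report.append(best)
    return report


def confidence(report):
    """Mean support across claims: a crude heuristic, not a calibrated probability."""
    return sum(r["support"] for r in report) / len(report) if report else 0.0


# Hypothetical retrieved source and generated answer.
sources = {
    "laundry-guide": "Cold water keeps coffee stains from setting. Dish soap lifts oily residue.",
}
answer = "Cold water keeps coffee stains from setting. Bleach is always safe on wool."

report = explain(answer, sources)
for row in report:
    print(f"{row['support']:.2f}  {row['claim']}  <-  {row['evidence']} ({row['source']})")
print(f"overall confidence: {confidence(report):.2f}")
```

In this toy run the first claim is fully backed by a highlighted source sentence, while the second has no supporting evidence at all, so an interface could flag it as unverified. A real system would need semantic matching rather than word overlap, since paraphrased but correct claims would otherwise score poorly.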

Ultimately, the question of whether a good search experience—defined purely by convenience and speed—is also the right experience becomes increasingly relevant. Within a context where users are delegating significant cognitive tasks to LLMs, design efforts must prioritize accuracy, trustworthiness, and the comprehensiveness of search outputs, as emphasized by Microsoft Research [12]. This demands a holistic approach that transcends merely building a "super user-friendly conversational user interface," integrating ethical considerations and robust technical safeguards from the outset. The future of AI search engines hinges not just on their innovative capabilities, but on their ability to foster an informed, discerning, and critically engaged user base.