Conversational Artificial Intelligence (AI) search engines are transforming the digital landscape, a development highlighted by Fast Company in February 2024. Powered by large language models (LLMs), these tools depart significantly from traditional keyword-based search: they answer user queries directly by retrieving, synthesizing, and summarizing information from across the internet, reshaping how people access and interact with digital information. This is a paradigm shift rather than an incremental update, and it calls for a critical examination of its usability implications, its effect on the user experience, and its broader societal ramifications.

The Evolution of Search: A New Paradigm Emerges

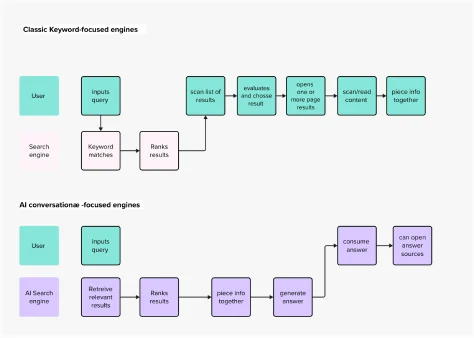

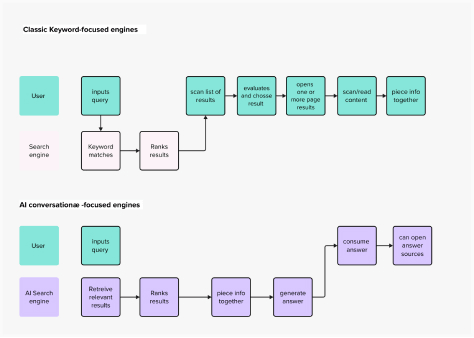

For decades, internet search has been largely defined by the "Google model": a list of hyperlinks that users navigate to find answers. This model, while immensely successful, requires users to actively evaluate sources, sift through content, and piece together information. The advent of generative AI, particularly the public emergence of powerful LLMs like ChatGPT in late 2022, ignited a wave of innovation and ushered in a new era of conversational interfaces. Innovation theorists call this a "technology push": a powerful new capability, rather than existing user demand, drives market evolution. Here it has rapidly produced a burgeoning ecosystem of AI-powered search applications. Academic and scientific communities are at the forefront of this experimentation, exploring the potential of AI to streamline research and information retrieval.

Before the current AI surge, search engines primarily functioned as directories, indexing web pages and ranking them based on relevance algorithms. Early search engines like AltaVista and Lycos gave way to the dominance of Google, which refined the art of delivering relevant links through its PageRank algorithm. Users developed a mental model of search as a process of query formulation, link selection, and subsequent content consumption. However, this established paradigm is now being challenged by AI-driven systems that aspire to deliver direct answers, mirroring human conversation. The frenzied enthusiasm around these tools stems from their promise to bypass the laborious sifting process, offering an instant gratification model that aligns with contemporary demands for efficiency.

Deconstructing the New Mental Model for Search

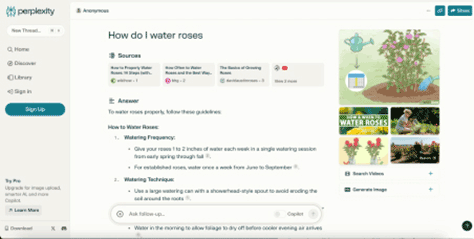

Conversational AI search engines introduce a radically different mental model for information retrieval. Platforms such as Perplexity AI exemplify this shift. Upon visiting Perplexity’s homepage, users encounter familiar elements: an input field for queries, a central display area for results, and supplementary links. Yet, the underlying interaction fundamentally diverges from its predecessors. Instead of a list of blue links, Perplexity presents a synthesized, coherent answer, drawing from multiple sources and often citing them within the generated text. This mirrors the conversational chatbot interface popularized by ChatGPT, where users engage in a dialogue to refine their information needs.

Perplexity’s home page (Figure 1 in the original article) showcases this blend of familiar and novel elements. The input field is central, but the output is an integrated response, not a fragmented list. This approach simplifies the user journey, moving from "find information about X" to "tell me about X." The user’s goal remains constant, namely to find an answer, but the path to that answer is drastically shortened. This directness serves a core usability principle: minimizing user effort and cognitive load.

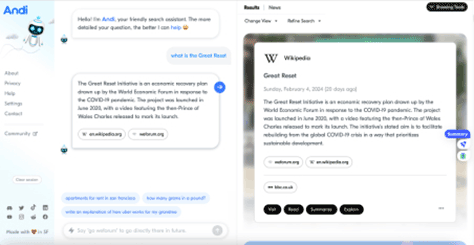

Another notable example is Andi, which takes the conversational mental model to an even greater extreme in its visual layout and interactive elements (Figure 3 in the original). While its interface strongly resembles a chatbot, Andi’s information architecture often maintains closer ties to classic search, presenting a brief, direct answer alongside a prominent list of source links. This hybrid approach suggests an ongoing experimentation within the industry to balance the immediacy of AI-generated answers with the transparency and control offered by traditional link-based results.

The development of these AI search engines represents a classic "technology push" scenario. The capabilities of generative AI, particularly LLMs, are so potent that developers are exploring novel applications, often before fully understanding the long-term user implications or societal impacts. While the innovation is undeniable, the extent to which this evolution truly benefits users, rather than simply leveraging a new technology, remains a key question for designers and ethicists alike.

Enhancing the Search Experience: Usability and Efficiency

The immediate benefits of conversational AI search engines to usability and interaction design are clear. By directly answering questions, these tools dramatically reduce the cognitive load on users. Instead of evaluating a dozen links, clicking through multiple pages, and extracting snippets, users receive a collated, summarized response. This aligns perfectly with the "Q&A pattern" inherent in human conversation, making the interaction feel more natural and intuitive.

Drawing on Jakob Nielsen’s usability heuristics for user-interface design, AI-powered search engines appear to tick several boxes for enhancing the user experience:

- Match between system and the real world: The conversational interface mimics human dialogue, making it highly intuitive for users accustomed to asking questions and receiving direct answers.

- Flexibility and efficiency of use: Expert users can quickly get concise answers, while novices benefit from the system’s ability to interpret complex queries and provide comprehensive summaries.

- Recognition rather than recall: Users don’t need to remember precise keywords; the AI can understand natural language queries and often infer intent.

- Error prevention: By synthesizing information, the system reduces the chance of users misinterpreting individual sources or missing critical details, though it introduces new types of "errors" related to AI accuracy.

- Aesthetic and minimalist design: Many AI search interfaces prioritize clean layouts focused on the conversational exchange, reducing visual clutter.

For example, when a user asks Perplexity a complex question, the response format often matches that of a well-structured article, complete with headings, bullet points, and citations. This contrasts sharply with the fragmented experience of scanning multiple traditional search results. Market research consistently shows a user preference for immediate, relevant information, and AI search delivers this with unprecedented efficiency. A 2023 study by Statista indicated that convenience and speed are paramount for digital users, traits that conversational AI search inherently prioritizes.

However, the question arises: does this superior convenience necessarily equate to the "right" experience for the future of information retrieval? The efficiency gains are clear, but the broader implications warrant deeper scrutiny.

Broader Implications: Trust, Explainability, and Human Agency

The unparalleled convenience offered by conversational AI search engines comes with significant trade-offs, particularly concerning trust, explainability, and the subtle erosion of human agency. When using a tool like Perplexity, users are effectively delegating a substantial portion of their decision-making and critical evaluation to an AI. The generative technology selects, extracts, and summarizes content, presenting it as an authoritative answer. The challenge lies in the system’s current inability to transparently communicate why specific sources were chosen over others or how the summary was constructed. This lack of AI explainability, a critical aspect of trustworthy AI systems, directly impacts user confidence.

As Francesca Rossi, IBM’s AI Ethics Global Leader, emphasizes, building trust in AI hinges on its ability to make fair decisions, align with human values, and explain its reasoning. Without this transparency, AI systems can become "black boxes," making it difficult for users to assess the veracity or bias of the information provided. The enterprise world is grappling with these concerns because of impending regulations and the specter of fines or reputational damage. Individual users, often described as "Internauts" because of their habitual internet usage, may be less aware of the inherent risks.

The design of digital tools, much like the architecture of physical buildings, shapes user behavior and perception. As anthropologist Roberta Katz notes, "first you make the building and then the building makes you." In the same way, the design of AI search engines influences how users interact with information and, potentially, their cognitive habits. There is a tangible risk that users could become accustomed to readily accepting convenient, authoritative-sounding AI-generated answers without critically questioning their accuracy or underlying methodology.

While most AI search engines provide links to source documents, several critical questions remain unanswered for the average user:

- Selection Bias: How were the sources chosen? Were all relevant perspectives considered?

- Synthesis Accuracy: How accurately does the AI interpret and synthesize complex information from diverse sources? Is the summary balanced and unbiased?

- "Hallucinations": Are there instances where the AI fabricates information or presents plausible but incorrect facts, a known challenge with LLMs?

The first issue, selection bias, is also present in traditional search, where algorithms determine link ranking. However, the second and third issues become far more pronounced with generative AI, which actively constructs narratives. Even with perfect explainability, the sheer ease of obtaining a summarized answer could, over time, diminish users’ critical thinking skills. Just as some argue that text messaging has impacted formal writing abilities, an over-reliance on AI for immediate answers might reduce the inclination or capacity for analytical information processing.

The act of sifting through Google’s search results, comparing different articles, and synthesizing information independently, while more tedious, serves as a valuable exercise for analytical and creative thought. It fosters information literacy and encourages a healthy skepticism necessary for navigating a complex information environment. If the prevailing mental model of a search engine transforms into a tool that "always knows the right answer," the societal implications for critical discernment, particularly in sensitive fields like policymaking, science, or civil discourse, could be profound.

Beyond critical thinking, there’s a significant economic implication for content creators and publishers. Kevin Roose of The New York Times succinctly articulated the concern: if AI search engines reliably summarize information, why would users visit original publisher websites? This poses an existential threat to the ad-revenue models that sustain much of the internet’s free content. Some AI search engines, like Andi, attempt to mitigate this by prominently displaying source links, encouraging users to click through and engage with the original content. This highlights the power of UX and UI design in guiding user behavior towards more desirable outcomes for the broader information ecosystem.

Industry Responses and the Regulatory Landscape

Major technology players are not ignoring the shift toward conversational AI search. Google has introduced its Search Generative Experience (SGE), integrating generative AI directly into its search results to offer summarized answers alongside traditional links. Microsoft has integrated OpenAI’s technology into Bing Chat, aiming to capture market share by offering a more interactive and conversational search experience. These moves indicate a clear industry consensus that AI will be integral to the future of search.

However, the rapid deployment of these technologies has outpaced the development of robust regulatory frameworks. Governments worldwide are beginning to address the ethical and safety concerns surrounding AI. The European Union’s AI Act, for instance, aims to establish a comprehensive legal framework for AI, categorizing systems by risk level and imposing stricter requirements on high-risk applications, which could include powerful search engines. These regulations will likely mandate greater transparency, explainability, and accountability from AI developers, pushing for design efforts focused on accuracy, trustworthiness, and the comprehensiveness of search outputs.

A study published in Nature revealed conflicting views among scientific researchers regarding AI science search engines; some found them incredibly useful and accurate, while others expressed deep distrust due to inconsistent retrieval performance. This underscores the current variability in AI performance and the paramount importance of trust for widespread adoption.

To foster trust and ensure responsible innovation, two key areas of focus are emerging:

- Technological Advancements:

  - Retrieval-Augmented Generation (RAG): This technique combines the generative power of LLMs with robust information retrieval systems, allowing AI to ground its answers in specific, verifiable sources, thus reducing "hallucinations" and improving accuracy.

  - Enhanced Source Attribution: Clearly linking generated content to its original sources, potentially with confidence scores or indicators of source reliability.

  - Bias Detection and Mitigation: Developing sophisticated algorithms to identify and neutralize inherent biases in training data or the LLM’s interpretation.

- Design and Policy Frameworks:

  - User Education: Empowering users with the knowledge to critically evaluate AI-generated content and understand its limitations.

  - Transparency by Design: Integrating explainability features directly into the user interface, showing how answers were derived and why certain sources were prioritized.

  - Ethical AI Guidelines: Establishing industry-wide standards and best practices for developing and deploying AI search engines that prioritize fairness, accountability, and user well-being.

  - Auditing and Verification Mechanisms: Independent third-party audits to assess the accuracy, fairness, and safety of AI search systems.
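
To make the retrieval-augmented generation and source-attribution items above more concrete, here is a minimal Python sketch of the general pattern: retrieve the passages most similar to the query, build a prompt that restricts the model to those passages, and return the answer together with its citations and retrieval scores. It is an illustration under stated assumptions, not a description of how Perplexity, Andi, or any other product works. The `Source` type, the `CORPUS` contents, the example URLs, and `stub_generate` (a placeholder for a real LLM call) are all hypothetical, and bag-of-words cosine similarity stands in for whatever retrieval method a production system would use.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    """A hypothetical indexed document (illustrative fields only)."""
    url: str
    text: str


def tokenize(text: str) -> Counter:
    """Lowercased bag-of-words term counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, corpus: list, k: int = 2) -> list:
    """Return up to k (source, score) pairs with nonzero similarity, best first."""
    q = tokenize(query)
    scored = [(src, cosine(q, tokenize(src.text))) for src in corpus]
    scored = [pair for pair in scored if pair[1] > 0.0]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


def build_prompt(query: str, hits: list) -> str:
    """Number the retrieved passages and ask the model to cite them."""
    passages = "\n".join(
        f"[{i}] {src.text}" for i, (src, _) in enumerate(hits, start=1)
    )
    return (
        "Answer using only the numbered passages and cite them like [1].\n"
        f"{passages}\nQuestion: {query}"
    )


def answer(query: str, corpus: list, generate) -> dict:
    """Retrieve, generate, and attach citations with retrieval scores."""
    hits = retrieve(query, corpus)
    if not hits:
        # Declining to answer is one way to avoid ungrounded output.
        return {"answer": "No supporting source found.", "citations": []}
    citations = [
        {"id": i, "url": src.url, "score": round(score, 3)}
        for i, (src, score) in enumerate(hits, start=1)
    ]
    return {"answer": generate(build_prompt(query, hits)), "citations": citations}


def stub_generate(prompt: str) -> str:
    """Placeholder for an LLM call (assumption: a real system calls a model here)."""
    return "Stub answer grounded in passage [1]."


# Hypothetical corpus; the URLs are placeholders, not real sources.
CORPUS = [
    Source("https://example.com/pagerank",
           "PageRank ranks web pages by analysing the links between them."),
    Source("https://example.com/rag",
           "Retrieval-augmented generation grounds a language model answer in retrieved sources."),
    Source("https://example.com/cooking",
           "Simmer the tomato sauce for twenty minutes."),
]

if __name__ == "__main__":
    print(answer("How does retrieval-augmented generation ground answers?",
                 CORPUS, stub_generate))
```

The retrieval score here measures only word overlap between query and passage. It could serve as a rough input to the confidence indicators mentioned above, but it says nothing about whether a source is itself reliable, which is a separate design problem.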

The Future of Search: Navigating Innovation and Responsibility

The question is no longer if AI will be the future of search, but how it will be shaped. Generative capabilities offer undeniable enhancements to the user experience, facilitating faster access to knowledge. However, the critical inquiries now revolve around ensuring this powerful technology is deployed responsibly:

- How can AI search engines provide the convenience of direct answers without compromising the user’s ability to critically evaluate information?

- What design principles and technical safeguards are necessary to build AI search systems that are demonstrably trustworthy, transparent, and accountable?

The emerging regulatory environment, coupled with ongoing research into AI explainability and bias mitigation, suggests a future where a "good" search experience must also be the "right" experience. As users increasingly delegate decision-making to LLMs, the focus of design and development efforts must transcend mere user-friendliness. It must encompass accuracy, trustworthiness, and the comprehensiveness of information, ensuring that while technology evolves, human intellectual agency and critical discernment remain paramount. This requires a collaborative effort from technologists, ethicists, policymakers, and users themselves to define a future of search that is both innovative and profoundly responsible.