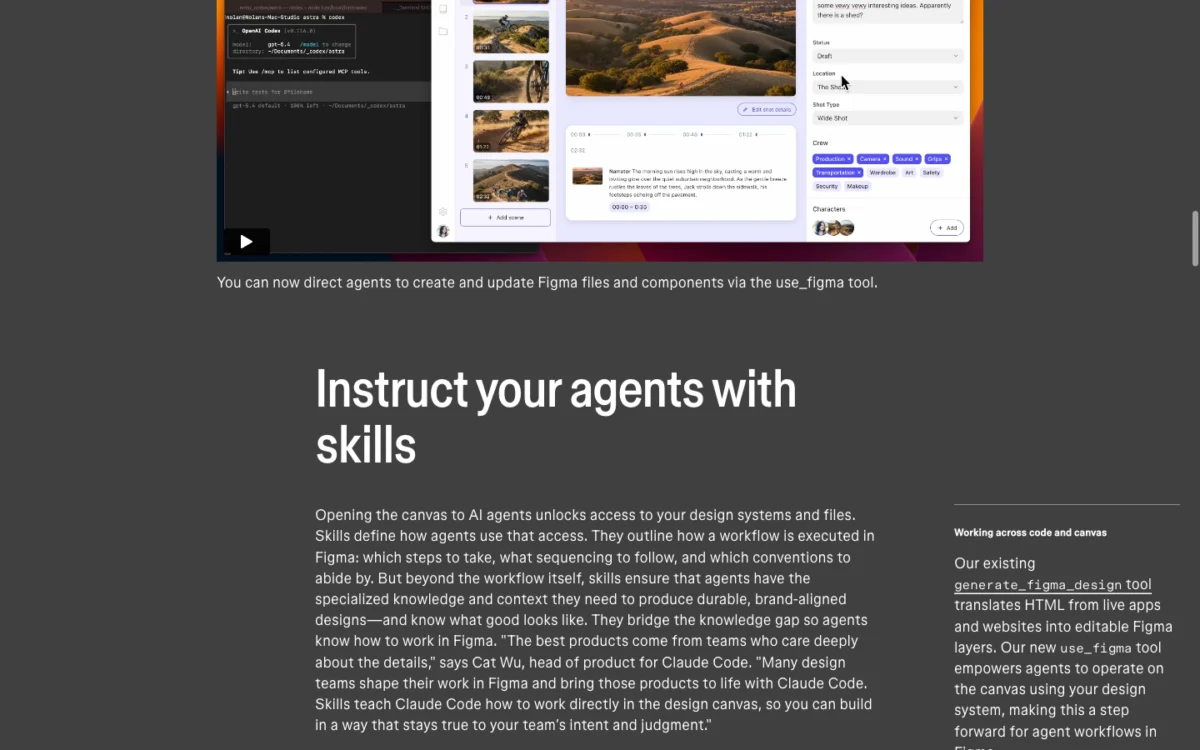

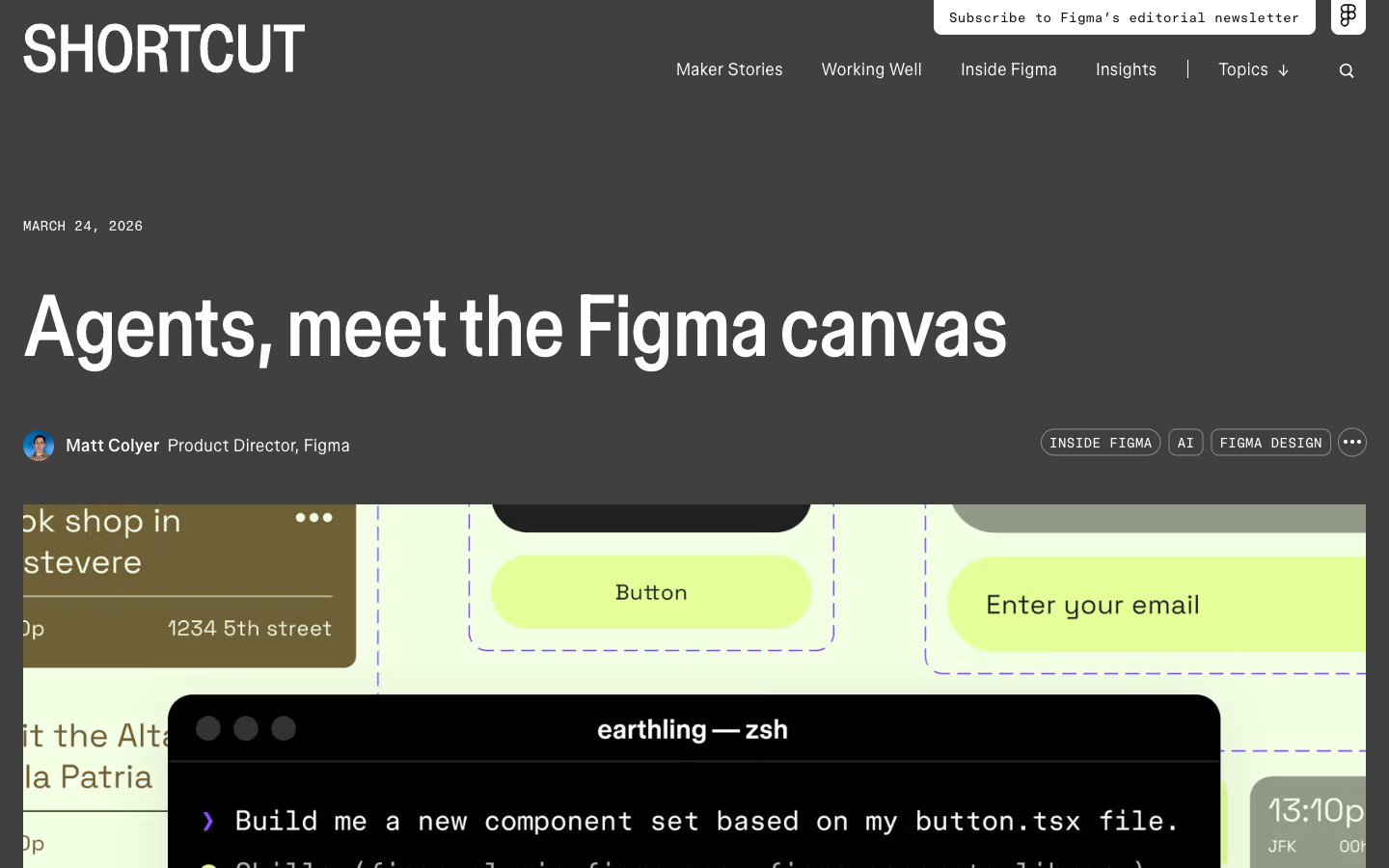

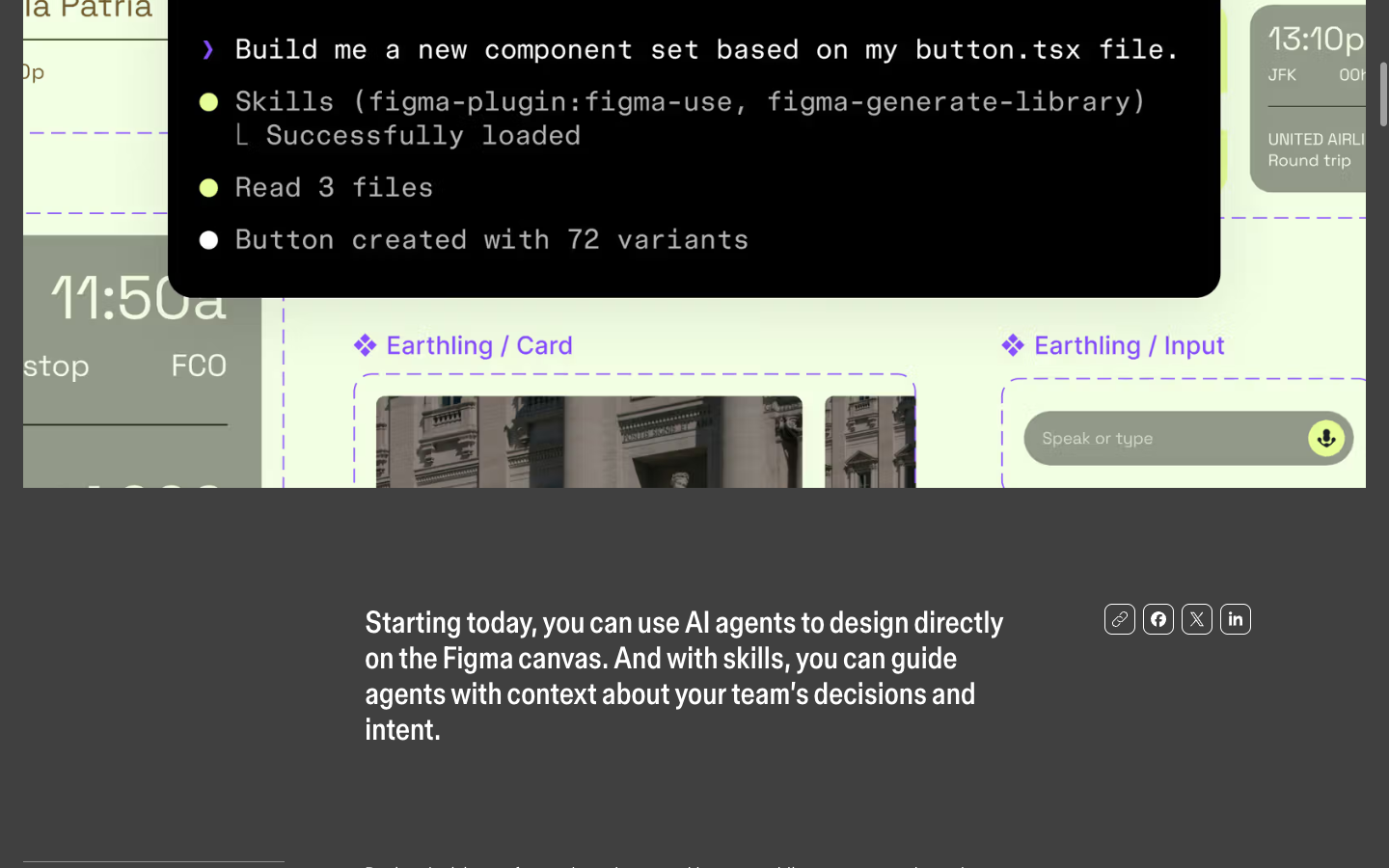

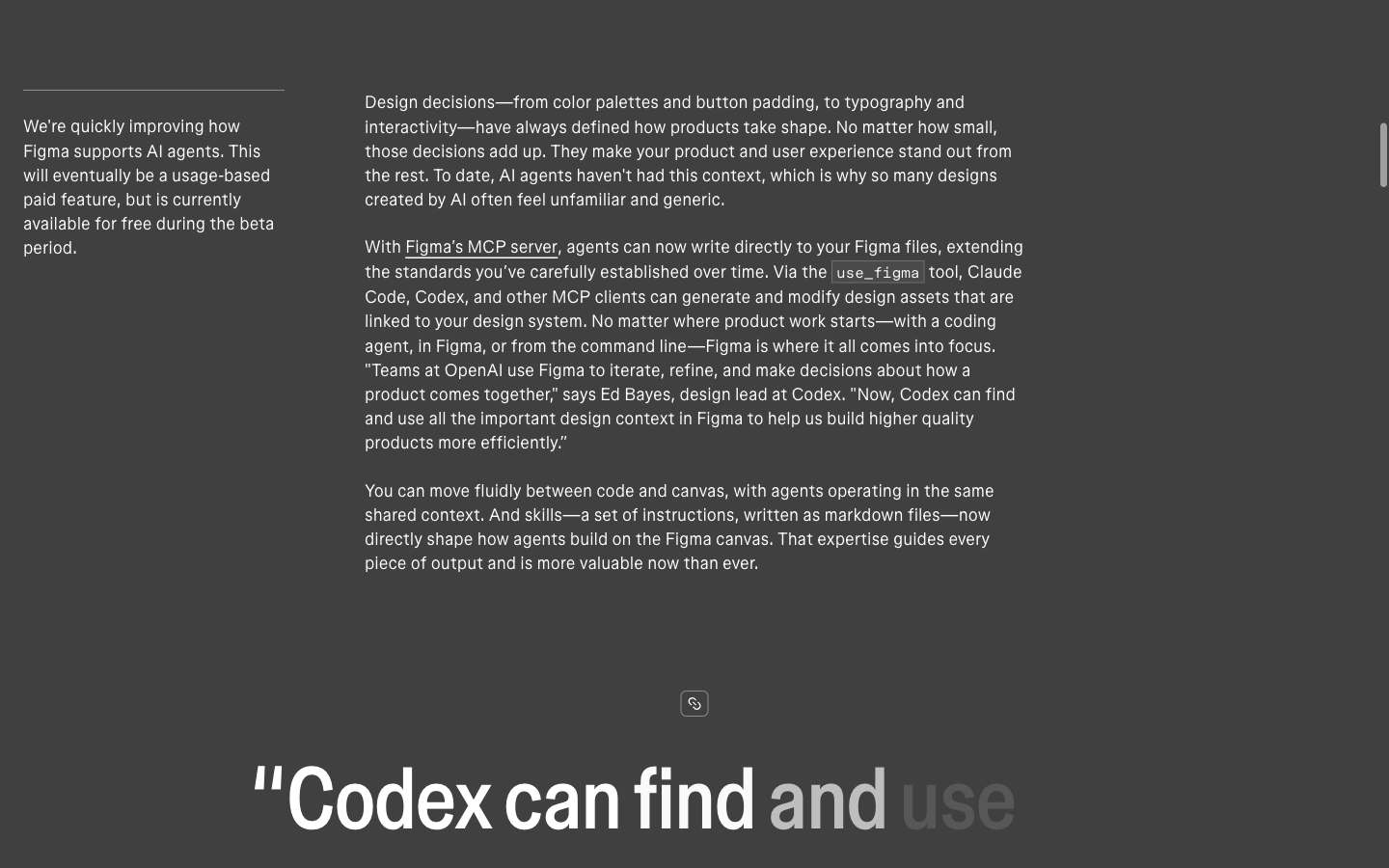

On March 24, 2026, Figma announced on its official blog that AI agents can now work directly on its collaborative design canvas. The change gives coding agents such as Claude Code, Codex, Cursor, Copilot CLI, Augment, Factory, Firebender, and Warp write access to Figma design files. The conduit is the use_figma MCP (Model Context Protocol) tool, which lets AI agents directly manipulate core design elements including frames, components, variables, auto layout configurations, and design tokens, all wired to an organization’s existing design system. This removes much of the tedious, error-prone work of manually transcribing design specifications between separate tools, and it brings design and development workflows closer together.

The significance of this announcement extends beyond mere automation, introducing a paradigm shift through the innovative "Skills" framework. Skills are essentially markdown files that function as instruction manuals for Figma AI agents, imbuing them with an understanding of a team’s specific operational methodologies. These files delineate which components an agent should prioritize, the optimal sequence of actions to undertake for a given task, and the adherence to established design conventions. Nine community-built skills were launched concurrently with the feature, providing immediate utility and examples, including /figma-generate-design, /apply-design-system, and /sync-figma-token. Crucially, teams retain the ability to author their own custom skills, enabling tailored automation that mirrors their unique workflows and brand guidelines. This robust framework means that an AI agent, when equipped with a meticulously crafted skill file, can operate with the discernment and adherence to standards akin to a seasoned designer who has absorbed every nuance of a team’s comprehensive component documentation.

The Evolution of AI in Design: A Chronological Context

Figma’s latest announcement is not an isolated event but rather a significant progression in the broader trajectory of AI integration within the creative and development industries. The journey towards intelligent design automation has been a gradual yet accelerating process, with roots tracing back to early attempts at algorithmic design and generative art.

- Early 2010s: Foundations of Design Systems: The concept of design systems gained widespread adoption, emphasizing reusable components, consistent styling, and standardized documentation. This period laid the groundwork for structured design assets that would later become machine-readable.

- Mid-2010s: Rise of Collaborative Design Tools: Figma itself emerged as a disruptor, centralizing design workflows and fostering real-time collaboration, thereby creating a unified environment ripe for future automation.

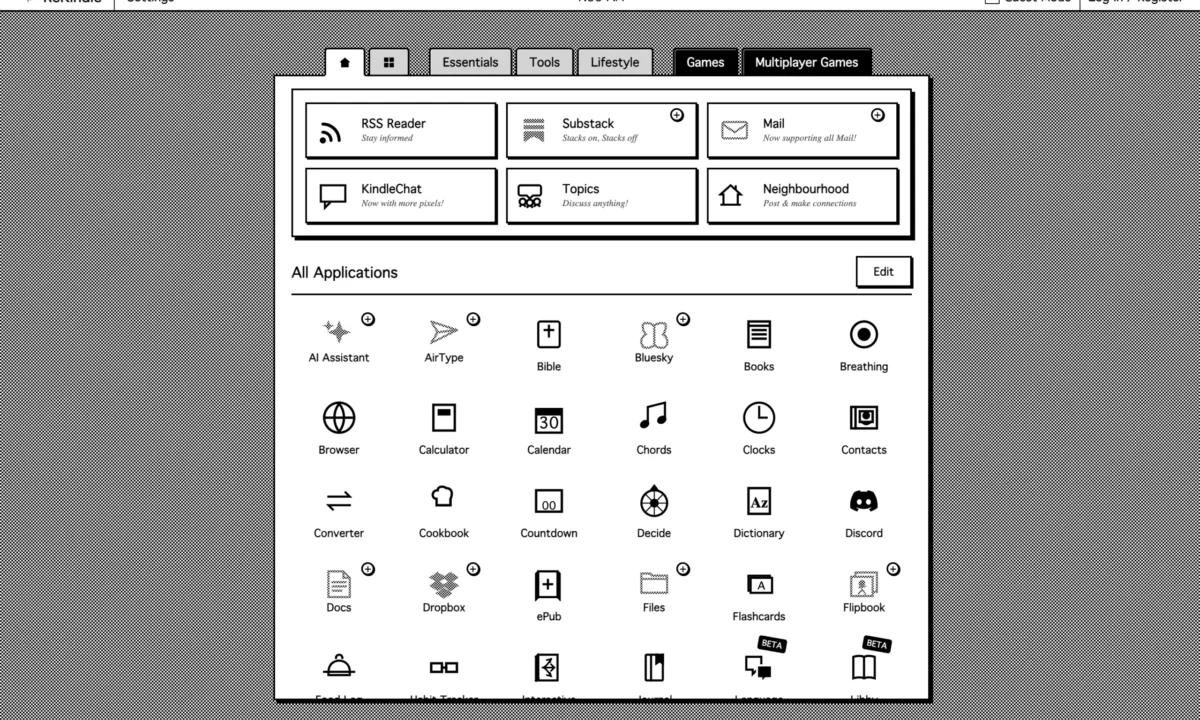

- Late 2010s – Early 2020s: Introduction of AI in Design Assistance: Initial AI applications focused on assistive tasks, such as intelligent image recognition, automated background removal, and smart content suggestions within design tools. Figma’s own generate_figma_design tool, which converts live HTML into editable Figma layers, represents an earlier foray into bridging the code-design gap through AI-powered conversion rather than direct generation or manipulation. This tool demonstrated the potential for AI to streamline the intake of external content into the Figma ecosystem, setting the stage for more active, generative capabilities.

- 2023-2025: Proliferation of Large Language Models (LLMs) and Coding Agents: The rapid advancement of LLMs like Claude, GPT, and specialized coding agents significantly expanded the capabilities of AI to understand natural language prompts, generate code, and interact with complex systems. This period saw a surge in tools like GitHub Copilot and Cursor, which began to augment developer workflows directly within integrated development environments (IDEs).

- March 24, 2026: Figma’s Canvas Opens to Agents: This marks a critical inflection point. Instead of merely assisting or converting, AI agents are now granted the agency to directly write to the design canvas. This leap from passive assistance to active creation and modification fundamentally redefines the interaction model between AI and design. The integration of coding agents like Claude Code and Cursor highlights Figma’s strategic move to connect the design canvas not just with design-centric AI, but with AI tools already proficient in understanding and generating code, thereby directly addressing the code-design synchronization challenge.

This chronological progression illustrates Figma’s commitment to leveraging cutting-edge AI to solve long-standing industry challenges, particularly the friction between design and development. By opening its canvas, Figma is not just adding a new feature; it’s ushering in a new era of intelligent design systems.

Deep Dive into the use_figma MCP Tool and Supported Agents

The use_figma MCP tool is the interface between AI agents and Figma: it translates an agent’s instructions into operations on a design file. It is designed for interoperability, so a diverse range of coding agents can work with Figma through the same tool.

The list of initially supported agents underscores the breadth of this integration:

- Claude Code (Anthropic): Known for its advanced reasoning and code generation capabilities, enabling complex design logic to be translated into Figma elements.

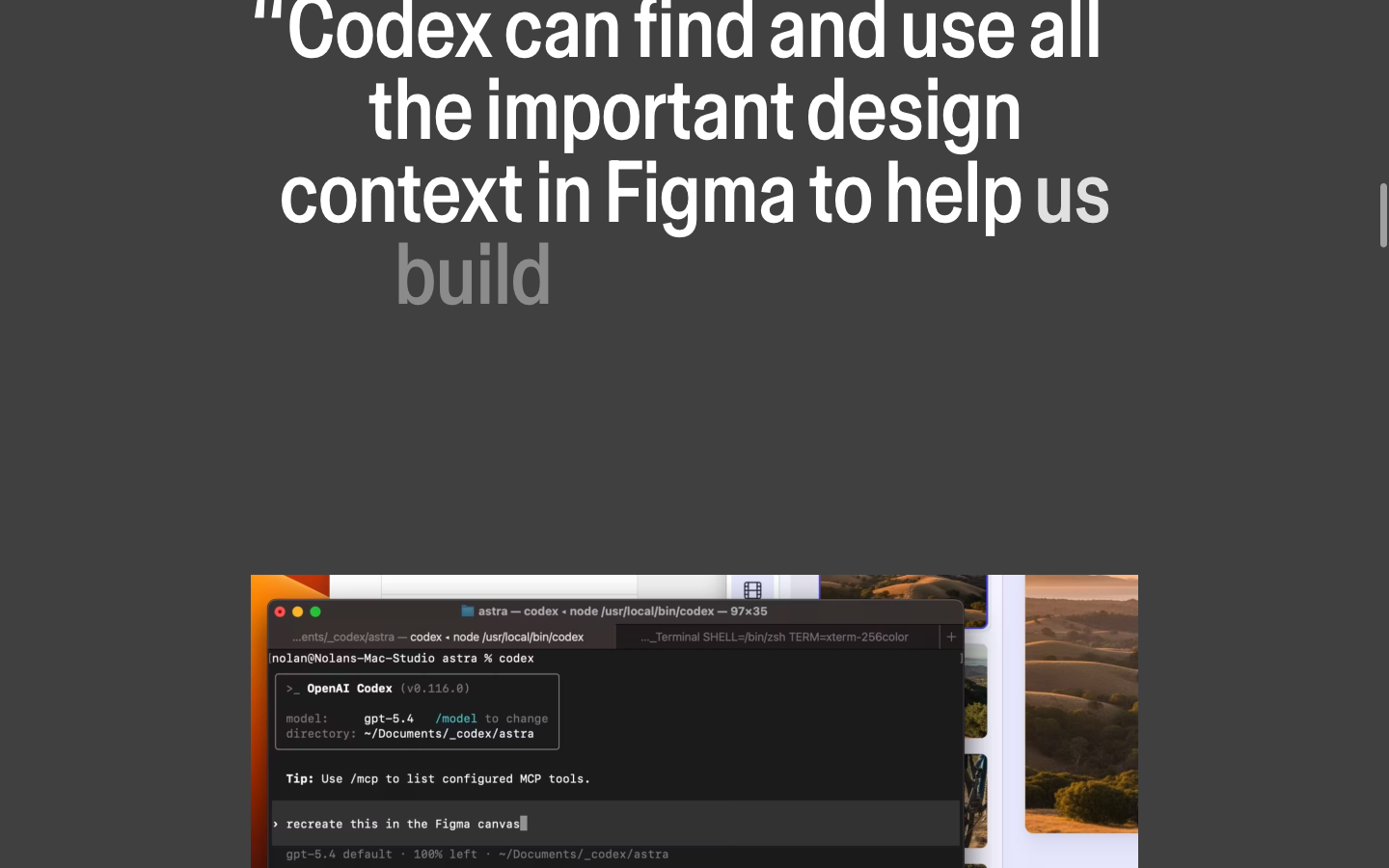

- Codex (OpenAI): A powerful code-generation model that can interpret natural language and produce various programming languages, now extended to Figma’s proprietary structure.

- Cursor: An AI-powered code editor that enhances developer productivity, now capable of directly manifesting design elements based on coded instructions.

- Copilot CLI (GitHub): Extending GitHub’s popular AI pair programmer to command-line interfaces, allowing developers to generate design assets via terminal commands.

- Augment: An AI coding assistant (Augment Code) aimed at large codebases, now connected to the design canvas.

- Factory: A platform for autonomous software-development agents, now able to write to Figma files.

- Firebender: An AI coding agent focused on Android development, now able to write to Figma files.

- Warp: A modern, AI-enhanced terminal that can now integrate design generation into its workflow.

Because these agents can manipulate Figma assets directly, the core building blocks of a design can all be programmatically created, modified, and synchronized: frames, which define canvases or sections; components, the reusable building blocks of a UI; variables, which store design properties like colors or fonts; auto layout, Figma’s responsive design feature; and design tokens, the atomic units of a design system (e.g., color.primary.500). This granular control positions AI agents not just as assistants, but as active participants in the design system lifecycle, able to apply established design principles consistently with far less manual intervention.
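To make the idea of a design token path concrete, here is a minimal Python sketch of how a dotted name such as color.primary.500 could map onto a nested token tree. The tree, the hex values, and the `resolve_token` helper are hypothetical illustrations, not Figma’s data model or API.

```python
from typing import Any

# Hypothetical token tree; names and values are illustrative only.
TOKENS: dict[str, Any] = {
    "color": {
        "primary": {"500": "#3366FF", "700": "#1F3FB3"},
        "neutral": {"100": "#F5F5F5"},
    },
    "spacing": {"md": 16},
}


def resolve_token(path: str, tokens: dict[str, Any] = TOKENS) -> Any:
    """Walk a dotted token path such as 'color.primary.500' through the tree."""
    node: Any = tokens
    for key in path.split("."):
        if not isinstance(node, dict) or key not in node:
            raise KeyError(f"unknown design token: {path}")
        node = node[key]
    return node


print(resolve_token("color.primary.500"))  # prints #3366FF
```

A strict hierarchy like this is what lets an agent fail loudly on an unknown token instead of guessing a value.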

The Skills Framework: Bridging Human Intent and AI Execution

The "Skills" framework is the layer that tailors AI agent behavior to a team’s specific needs. These markdown files are more than configuration settings; they are written instructions that give agents the context they need to work the way the team does.

Each skill file can define:

- Component Selection Logic: Guiding agents on which specific components from a library to use under various conditions (e.g., "for a primary call-to-action, always use Button/Primary with state: default").

- Action Sequencing: Prescribing the order of operations for complex tasks (e.g., "first create a frame, then add a header component, followed by a body text block, and finally a footer component").

- Convention Adherence: Ensuring consistency in naming layers, grouping elements, applying styling, and maintaining accessibility standards (e.g., "all input fields must have a corresponding label and follow WCAG guidelines for contrast").
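The three kinds of guidance above can be pictured as sections of a single markdown file. The Python sketch below assembles such a file from plain lists; the section headings, the skill name, and the `render_skill` helper are assumptions made for illustration, since the announcement does not specify a required skill file layout.

```python
def render_skill(
    name: str,
    components: list[str],
    steps: list[str],
    conventions: list[str],
) -> str:
    """Assemble a markdown skill file from three lists of plain-language rules."""
    lines = [f"# {name}", "", "## Component selection"]
    lines += [f"- {rule}" for rule in components]
    lines += ["", "## Action sequence"]
    # Numbered list, because the order of operations matters here.
    lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
    lines += ["", "## Conventions"]
    lines += [f"- {rule}" for rule in conventions]
    return "\n".join(lines) + "\n"


# Hypothetical skill built from the examples in the list above.
skill = render_skill(
    "build-landing-section",
    components=["For a primary call-to-action, always use Button/Primary with state: default."],
    steps=["Create a frame.", "Add a header component.", "Add a body text block.", "Add a footer component."],
    conventions=["Every input field has a label and meets WCAG contrast guidelines."],
)
print(skill)
```

Keeping the rules as structured data and rendering the markdown from it is one way to keep several skills consistent; writing the markdown by hand works just as well.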

The nine community-built skills available at launch provide a starting point. Three examples:

- /figma-generate-design: Likely a broad skill for generating entire design layouts or sections based on high-level prompts.

- /apply-design-system: A critical skill for ensuring all generated or modified designs strictly adhere to the established design system’s rules and tokens.

- /sync-figma-token: Potentially for synchronizing design tokens between Figma and development environments, maintaining a single source of truth.

The ability for teams to write their own skills is perhaps the most impactful aspect, enabling unparalleled customization. This democratizes AI agent training, allowing design operations teams, senior designers, or even developers to codify their specific expertise into reusable agent instructions. This approach effectively scales expert knowledge, ensuring that even junior designers or new team members, through the agents they command, can produce work consistent with the highest team standards. The analogy of an agent reading "every page of your component documentation" aptly captures the profound impact on design system governance and output quality.

Why Figma AI Agents Change Design System Thinking

For decades, design systems have been meticulously crafted with human consumption in mind. Every naming convention, usage guideline, and piece of documentation has been carefully written for designers and developers to read, comprehend, and apply. The arrival of Figma AI agents fundamentally alters this paradigm, introducing a new primary consumer for design system documentation: the machine.

This shift means that the quality and structure of a design system now directly correlate with the quality of AI agent output. A messy component library with inconsistent naming conventions, ambiguous usage notes, or a chaotic token structure will inevitably lead to poor, unreliable, and potentially unusable results from AI agents. Conversely, a clean, well-annotated, and logically structured design system will enable agents to produce work that is remarkably closer to production-ready on the first pass, significantly reducing the need for human oversight and correction. This pushes design system governance from a "nice-to-have" for human efficiency to an absolute imperative for effective AI integration.
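One way a team might audit a library for machine-readiness is a simple naming check. The sketch below assumes a hypothetical "Category/Variant" PascalCase convention (as in Button/Primary) and flags names that break it; the pattern and the sample library are illustrative, not a Figma rule.

```python
import re

# Assumed convention: slash-separated PascalCase segments, e.g. "Button/Primary".
NAME_PATTERN = re.compile(r"[A-Z][A-Za-z0-9]*(/[A-Z][A-Za-z0-9]*)+")


def find_naming_issues(names: list[str]) -> list[str]:
    """Return the component names that do not follow the assumed convention."""
    return [name for name in names if not NAME_PATTERN.fullmatch(name)]


# Hypothetical component library with two inconsistent names.
library = ["Button/Primary", "button-secondary", "Input/Text/Default", "Card 2 final"]
print(find_naming_issues(library))  # prints ['button-secondary', 'Card 2 final']
```

Running a check like this before pointing an agent at a library gives a concrete list of names to clean up first.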

Inferred Statement – Design System Lead: "For years, we’ve focused on making our design system intuitive for human designers. With Figma AI agents, the imperative has shifted. The question is no longer just ‘Is our system easy for humans to understand?’ but ‘Is our system structured and documented rigorously enough for an AI to act upon it with precision?’ This means a renewed focus on semantic naming, strict token hierarchies, and machine-readable documentation. It’s an exciting challenge that promises unprecedented consistency and efficiency."

The feature works in tandem with existing capabilities, such as the generate_figma_design tool, which converts live HTML into editable Figma layers. This combination creates a powerful feedback loop: AI agents can not only generate new designs but also maintain code-design synchronization by adapting existing designs based on code changes, generate accessible annotations automatically, and create screen variants at an unprecedented scale. For instance, an agent could be tasked with generating 50 different screen variants for various device sizes and user states, all while ensuring adherence to accessibility guidelines and the brand’s design system, a task that would be prohibitively time-consuming for human designers alone.
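The scale of variant generation comes from simple combinatorics: a few device sizes, user states, and themes multiply quickly. The Python sketch below enumerates such a matrix as plain specifications an agent could be asked to build; the device dimensions, state names, and the "Checkout" screen name are hypothetical.

```python
from itertools import product
from typing import Iterator

# Hypothetical variant axes; values are illustrative only.
DEVICES = {"mobile": (390, 844), "tablet": (820, 1180), "desktop": (1440, 900)}
STATES = ["empty", "loading", "populated", "error"]
THEMES = ["light", "dark"]


def variant_specs(screen: str) -> Iterator[dict]:
    """Yield one spec per combination of device, user state, and theme."""
    for (device, (width, height)), state, theme in product(DEVICES.items(), STATES, THEMES):
        yield {
            "name": f"{screen}/{device}/{state}/{theme}",
            "width": width,
            "height": height,
        }


specs = list(variant_specs("Checkout"))
print(len(specs))        # prints 24 (3 devices * 4 states * 2 themes)
print(specs[0]["name"])  # prints Checkout/mobile/empty/light
```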

Broader Impact and Implications for the Design and Development Landscape

The introduction of direct write access for AI agents into Figma carries profound implications across the entire product development lifecycle.

The Evolving Role of Designers

While some might fear automation, a widely held view is that AI agents will augment, not replace, human designers. The role of the designer is poised to evolve towards higher-level strategic thinking, creative problem-solving, and the "orchestration" of AI tools. Designers will increasingly become "AI whisperers," tasked with crafting precise prompts and, crucially, developing and refining the "Skills" markdown files that guide agent behavior. This requires a new skillset, often dubbed "prompt engineering for design," where understanding how AI interprets instructions becomes as important as understanding design principles. Designers will spend less time on repetitive, pixel-pushing tasks and more time on innovation, user research, and ensuring the overall coherence and impact of the user experience.

Enhanced Developer-Designer Collaboration

The traditional handoff process between design and development, often a source of friction and miscommunication, stands to be significantly streamlined. With AI agents capable of writing directly to the design canvas, and potentially generating corresponding code snippets or updating design tokens across systems, the gap between design intent and coded reality narrows considerably. Developers can trust that design assets generated by agents are intrinsically linked to the design system, reducing the need for manual translation or correction. This fosters a more collaborative environment where both disciplines can focus on their respective strengths, with AI acting as a sophisticated bridge.

Inferred Statement – Software Engineer: "The manual translation of design specs into code has always been a bottleneck. With Figma AI agents writing directly to the canvas and adhering to a shared design system, we anticipate a drastic reduction in iteration cycles and design-to-development friction. It frees us to focus on complex logic and performance, knowing the UI foundation is already robust and consistent."

Scalability and Consistency at Unprecedented Levels

One of the most immediate benefits is the ability to achieve scalability and consistency that was previously unattainable.

- Mass Customization: AI agents can generate countless design variants for A/B testing, personalization, or different locales with minimal human input.

- Automated Accessibility: By embedding accessibility guidelines into "Skills," agents can automatically generate designs that comply with standards like WCAG, producing accessible annotations and ensuring proper contrast, semantic structure, and keyboard navigation.

- Global Design Systems: For large enterprises, maintaining consistency across multiple products, brands, and international teams becomes vastly more manageable when AI agents are enforcing the rules.
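The contrast requirement mentioned under Automated Accessibility is one of the few rules that can be checked mechanically. The Python sketch below implements the WCAG 2.x contrast ratio formula and the AA thresholds (4.5:1 for normal text, 3:1 for large text); the function names are illustrative, and how a skill or agent would actually invoke such a check is not specified in the announcement.

```python
def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical colors) up to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check the WCAG AA minimum: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


print(round(contrast_ratio("#000000", "#FFFFFF"), 2))  # prints 21.0
print(passes_aa("#767676", "#FFFFFF"))                  # prints True
print(passes_aa("#777777", "#FFFFFF"))                  # prints False
```

Semantic structure and keyboard navigation are harder to verify this way, which is one reason human review remains part of the loop.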

The Business Model and Future Adoption

Figma is making these powerful AI agent capabilities available free during its beta phase. This strategy encourages widespread adoption, allowing teams to experiment, develop custom skills, and clean up their design systems without immediate financial commitment. Following the beta, a usage-based pricing model will be implemented. This approach is common for advanced AI services, reflecting the computational resources required. The success of this model will depend on the perceived value and the efficiency gains experienced by early adopters. Organizations that see significant reductions in design time, development costs, and improvements in product quality will likely embrace the pricing model.

Challenges and Future Considerations

While the benefits are substantial, challenges remain:

- Design System Overhaul: Many organizations will need to invest significant time and resources into cleaning, structuring, and thoroughly documenting their existing design systems to make them truly "machine-actionable." Poorly structured systems will lead to "garbage in, garbage out" scenarios.

- Governance and Oversight: Establishing clear governance models for AI-generated designs will be crucial. Human oversight remains essential to ensure creative intent is met, ethical considerations are addressed, and unexpected AI behaviors are mitigated.

- Security and Data Privacy: As AI agents gain direct write access, questions around data security, intellectual property, and privacy will become even more pertinent, requiring robust safeguards and clear policies.

- The "Black Box" Problem: Understanding why an AI agent made a particular design choice can sometimes be challenging. Future developments will likely focus on interpretability and explainability of AI actions.

Ultimately, the real question for every design team is no longer solely how to document their system effectively for humans, but whether their design system is structured and refined well enough for intelligent agents to leverage it autonomously and efficiently. Figma’s latest innovation is not just a feature; it is a catalyst for a fundamental re-evaluation of design processes, design system architecture, and the very nature of human-computer collaboration in creative fields. The era of the intelligent design system has officially begun, promising to unlock new levels of creativity, efficiency, and scale for product development worldwide.