The burgeoning field of Generative User Interface (GenUI) Design is poised to revolutionize web design, shifting paradigms from static, pre-defined layouts to dynamically generated, hyper-personalized digital experiences. This innovative approach leverages advanced Artificial Intelligence (AI) models to create interfaces tailored in real time to individual user needs, context, and preferences. GenUI promises unprecedented levels of customization and efficiency, but its rapid ascent also raises critical concerns, particularly about whether it can consistently and reliably provide accessible experiences for all users.

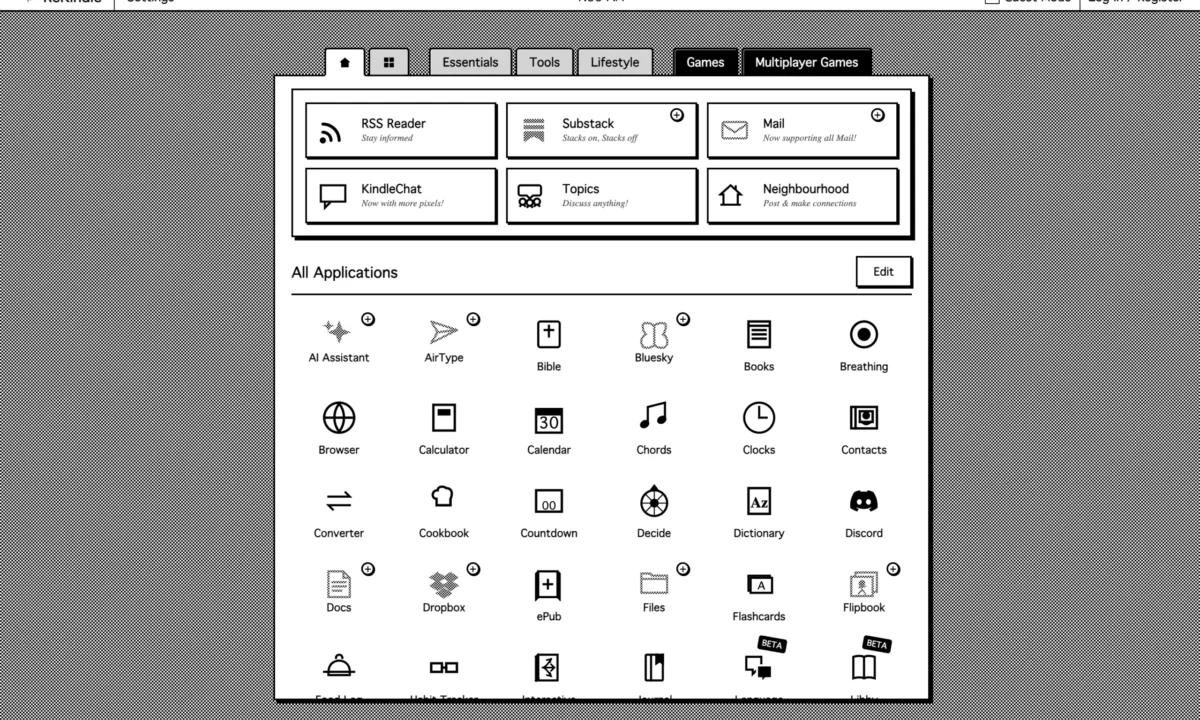

The concept of Generative UI is gaining significant traction within the tech and design communities. Early commercial applications, such as Figma Sites, have emerged, touting the ability to rapidly construct websites from simple prompts. These early forays, however, have also highlighted the inherent complexities of deploying nascent AI technologies as production-ready tools, particularly concerning foundational principles like accessibility. The core innovation lies in the AI’s capacity to analyze user needs and context—including inputs, instructions, behaviors, and preferences—and subsequently produce an interface custom-tailored to that specific individual. This represents a fundamental departure from traditional UI design, where designers anticipate a broad range of user needs and build a singular, albeit adaptable, interface. With GenUI, each interaction can theoretically result in a unique "snowflake" experience, where no two users encounter precisely the same interface.

Defining the Generative UI Paradigm

To fully grasp the transformative potential and challenges of GenUI, it is essential to understand its definitions as articulated by leading research and industry groups:

- Google Research defines Generative UI as a new modality where "the AI model generates not only content, but the entire user experience. This results in custom interactive experiences, including rich formatting, images, maps, audio and even simulations and games, in response to any prompt (instead of the widely adopted ‘walls-of-text’)." This definition emphasizes the comprehensive nature of AI’s output, extending beyond mere text generation to encompass rich, interactive multimedia experiences.

- The Nielsen Norman Group (NN/g), a renowned authority in user experience, offers a concise definition: "A generative UI (genUI) is a user interface that is dynamically generated in real time by artificial intelligence to provide an experience customized to fit the user’s needs and context." This highlights the dynamic, real-time, and context-aware nature of GenUI, central to its personalization capabilities.

- UX Collective further elaborates, stating that "A Generative User Interface (GenUI) is an interface that adapts to, or processes, context such as inputs, instructions, behaviors, and preferences through the use of generative AI models (e.g., LLMs) in order to enhance the user experience. Put simply, a GenUI interface displays different components, information, layouts, or styles, based on who’s using it and what they need at that moment." This definition underscores the AI’s role in interpreting diverse contextual cues to render highly adaptable and individualized interfaces.

These definitions collectively paint a picture of GenUI as a technology that moves beyond static design templates or simple content recommendation. Instead, it envisions an AI actively constructing the entire user journey, from layout and component selection to content delivery and interactive elements, all optimized for the immediate user and their specific requirements.
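The definitions above leave open how a model actually "generates" an interface. One possible pattern is for the model to return a structured description of which components to show, rather than raw markup, and for the application to render that description from a fixed catalog of vetted components. The sketch below assumes a hypothetical JSON format and hypothetical component names (`heading`, `image`, `button`); it is not the format of any product named in this article.

```python
import html
import json

# Hypothetical component catalog: the only building blocks the model may request.
# Each renderer emits semantic markup, so basic accessibility is a property of the
# catalog rather than something the model has to get right on every request.

def render_heading(props: dict) -> str:
    level = int(props.get("level", 2))
    if not 1 <= level <= 6:
        raise ValueError(f"invalid heading level: {level}")
    return f"<h{level}>{html.escape(props['text'])}</h{level}>"

def render_image(props: dict) -> str:
    # alt must be present; an empty string marks a purely decorative image
    if "alt" not in props:
        raise ValueError("image is missing alt text")
    return f'<img src="{html.escape(props["src"])}" alt="{html.escape(props["alt"])}">'

def render_button(props: dict) -> str:
    # A native <button> is focusable and keyboard-operable without extra work
    return f'<button type="button">{html.escape(props["label"])}</button>'

CATALOG = {
    "heading": render_heading,
    "image": render_image,
    "button": render_button,
}

def render(spec_json: str) -> str:
    """Render a model-produced JSON spec, rejecting components outside the catalog."""
    spec = json.loads(spec_json)
    parts = []
    for node in spec["components"]:
        renderer = CATALOG.get(node["type"])
        if renderer is None:
            raise ValueError(f"unknown component type: {node['type']}")
        parts.append(renderer(node.get("props", {})))
    return "\n".join(parts)

# Example: a spec the model might return for a travel query (illustrative only)
spec = '{"components": [{"type": "heading", "props": {"level": 1, "text": "Your trip"}}, {"type": "button", "props": {"label": "Book"}}]}'
print(render(spec))
# <h1>Your trip</h1>
# <button type="button">Book</button>
```

Constraining the model to a catalog trades some generative freedom for predictability: an unknown component or an image without alt text fails loudly instead of reaching users.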

Generative AI vs. Predictive AI: A Critical Distinction

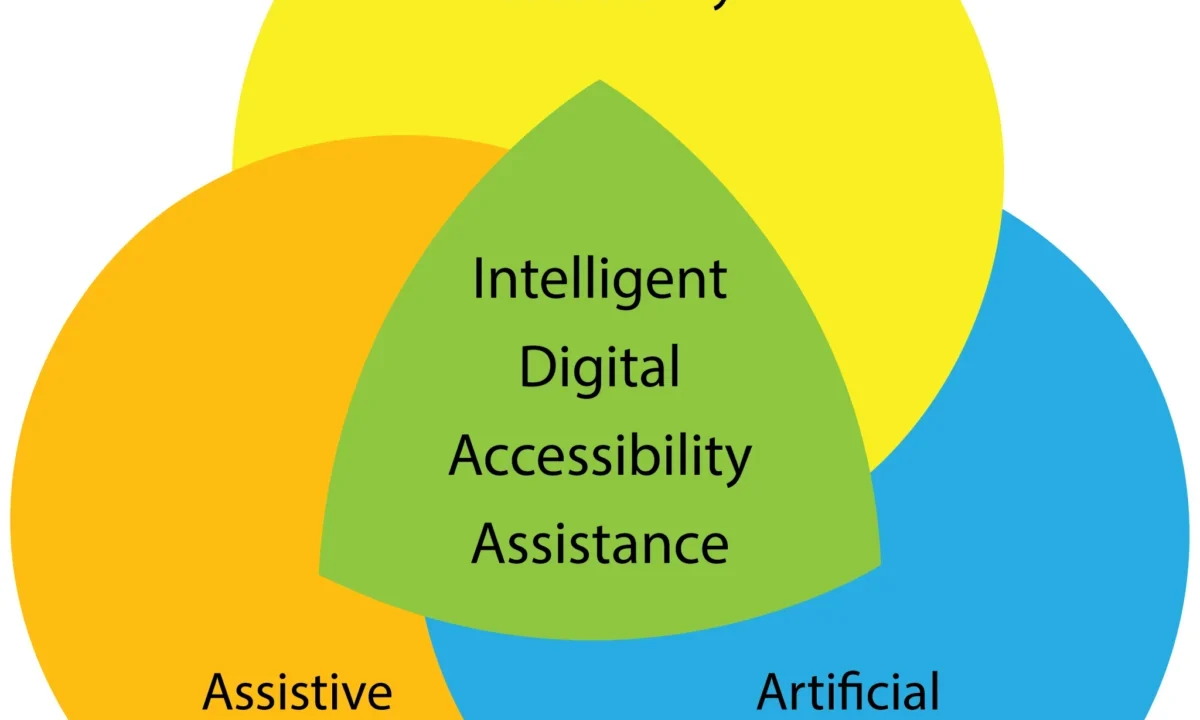

Artificial Intelligence is often discussed as a single category, but for understanding GenUI, distinguishing between predictive AI and generative AI is crucial. Both leverage machine learning, yet their primary functions and outputs differ significantly:

| Feature | Predictive AI | Generative AI |
|---|---|---|
| Inputs | Typically uses smaller, more targeted datasets as input data, focusing on patterns for specific outcomes. (Source: Smashing Magazine) | Trained on vast, diverse datasets containing millions of content samples, learning complex patterns and structures. (Source: U.S. Congress) |
| Outputs | Forecasts future events, identifies trends, or predicts outcomes based on historical data. (Source: IBM) | Creates novel content, including audio, code, images, text, simulations, and videos, that did not previously exist. (Source: McKinsey) |
| Examples | Fraud detection systems, credit scoring, personalized recommendations (e.g., Netflix, Amazon), medical diagnosis tools. | Large Language Models (LLMs) like ChatGPT and Claude, image generators like DALL-E and Midjourney, video generators like Sora, music composers like Suno, and code assistants like Cursor. |

Predictive AI excels at identifying correlations and making informed guesses about future states or classifications. It answers "what is likely to happen?" or "what is this?" In contrast, generative AI’s strength lies in its ability to understand the underlying structure of data and create entirely new, coherent, and often highly creative outputs that adhere to learned patterns. When discussing GenUI, we are specifically referring to the generative capacity of AI – its ability to create a user interface from scratch, rather than merely predicting the most effective existing interface for a user. This distinction is paramount because it implies a level of autonomous creation that traditional UI/UX design has never before encountered.

The Rise of AI in Web Development and Early Adopters

The conceptualization of GenUI has rapidly moved from theoretical discussions to practical implementation. The burgeoning market for AI-powered website builders reflects a strong industry belief in the technology’s potential to streamline development and enhance personalization. Companies like Figma, with its "Figma Sites," were early and prominent entrants, aiming to enable users to create functional websites "on the fly with prompts." This commercial push, while demonstrating the viability of prompt-based design, also became an early battleground for critical evaluation.

Beyond Figma, numerous other platforms have integrated or are actively developing GenUI capabilities. WordPress.com has introduced an AI website builder, leveraging its extensive ecosystem to offer AI-assisted site creation. Other major players in the website building and hosting space have followed suit, including Squarespace, Wix, and GoDaddy, all incorporating AI tools to simplify and accelerate the design process for their users. Emerging platforms like Vercel (known for its frontend development tools), Lovable, and Reeady are also contributing to this competitive landscape, each aiming to carve out a niche in the generative web design market. These companies are driven by the promise of increased efficiency, reduced development costs, and the potential to deliver highly customized user experiences at scale. The global generative AI market, valued at approximately $11.3 billion in 2023, is projected to grow substantially, indicating robust investment and development in this sector.

The Accessibility Imperative: A Looming Challenge for GenUI

While the potential for hyper-personalized interfaces is appealing, the rapid advancement of GenUI has brought a critical issue to the forefront: accessibility. The fundamental concern is whether GenUI can reliably output experiences that cater to all users, irrespective of their impairments—be they visual, auditory, cognitive, motor, or other specific needs. The current consensus among accessibility experts suggests that early results have been far from satisfactory.

One of the most visible examples of this struggle came with the launch of Figma Sites. Despite its innovative premise, initial feedback from the accessibility community was overwhelmingly negative. Critiques highlighted numerous shortcomings, including poor semantic structure, inadequate contrast ratios, missing alternative text for images, and keyboard navigation issues—all critical components of an accessible web experience. These flaws underscored the challenge of AI autonomously generating complex interfaces that adhere to established Web Content Accessibility Guidelines (WCAG).
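Of the failures listed above, contrast is the most mechanical to verify. WCAG 2.x defines the contrast ratio as (L1 + 0.05) / (L2 + 0.05), where L1 and L2 are the relative luminances of the lighter and darker colors. The following is a minimal Python sketch of that formula, of the kind a generator could run on every text/background pair it emits; the function names are our own, not part of any tool mentioned here.

```python
from typing import Tuple

RGB = Tuple[int, int, int]  # 8-bit sRGB channels, 0-255

def _linearize(value: int) -> float:
    """Convert one sRGB channel to linear light, per the WCAG 2.x definition."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb: RGB) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg: RGB, bg: RGB) -> float:
    # (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter of the two colors
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa(fg: RGB, bg: RGB, large_text: bool = False) -> bool:
    # Success Criterion 1.4.3 (AA): 4.5:1 for normal text, 3:1 for large text
    return contrast_ratio(fg, bg) >= (3.0 if large_text else 4.5)

white, gray_77, gray_76 = (255, 255, 255), (0x77, 0x77, 0x77), (0x76, 0x76, 0x76)
print(round(contrast_ratio(gray_77, white), 2))  # 4.48
print(passes_aa(gray_77, white), passes_aa(gray_76, white))  # False True
```

The example shows how narrow the margin can be: #777777 on white looks readable to many sighted users yet falls just short of the AA threshold, while the one-step-darker #767676 passes.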

In response to this severe pushback, Figma did announce updates and publish a guide for improving accessibility on Figma-generated sites. However, many experts found these efforts to be limited, often placing the burden of ensuring accessibility back on the user rather than intrinsically building it into the AI’s output. Adrian Roselli, a prominent accessibility consultant, extensively documented these limitations, arguing that the advice offered was often superficial and did little to address the systemic issues of AI-generated inaccessible code. This reaction highlights a persistent problem in tech innovation: accessibility often remains a lagging consideration, an afterthought rather than a foundational principle.

The debate around GenUI and accessibility intensified with a controversial claim made by Jakob Nielsen, a foundational figure in usability. In 2024, Nielsen famously posited that Generative UI would not only be capable of producing accessible experiences but would eventually replace accessibility practitioners altogether as the technology evolved. This assertion drew fierce criticism from the accessibility community, including experts like Adrian Roselli, Léonie Watson, Sarah Horton, and Sheri Byrne-Haber. Critics argued that Nielsen’s view demonstrated a fundamental misunderstanding of accessibility, which is not merely a technical checklist but a complex discipline involving human empathy, contextual understanding, and a deep knowledge of diverse user needs. Many pointed out that AI, by its very nature, learns from existing data, and if that data is replete with inaccessible patterns (as much of the web currently is), the AI is likely to perpetuate, rather than solve, accessibility issues. A year later, Nielsen partially walked back his claims, acknowledging some limitations but largely maintaining his optimistic outlook on AI’s long-term potential in this area.

The lack of emphasis on accessibility in foundational AI design resources further compounds these concerns. For instance, Google’s "People + AI Guidebook," while championing "human-centered" design principles, reportedly makes no explicit mention of accessibility. This oversight is particularly troubling given Google’s extensive research into GenUI and its position as a leader in AI development. For GenUI to truly represent the "future" of web design and development, accessibility cannot remain a secondary consideration; it must be ingrained in the very fabric of its design and algorithmic training. This requires a proactive, ethical approach to AI development, ensuring that models are trained on diverse, accessible datasets and that output generation is rigorously evaluated against universal design principles and WCAG standards.
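At the level of generated code, rigorous evaluation could start with an automated gate that rejects markup containing detectable WCAG failures before it reaches anyone. The following is a minimal sketch using only Python's standard-library `html.parser`; the `A11yAudit` class and its four checks (page language, image alt attributes, skipped heading levels, and clickable `div`/`span` elements without `tabindex`, a rough proxy for keyboard reachability) are illustrative choices that cover a small fraction of WCAG.

```python
from html.parser import HTMLParser
from typing import List

class A11yAudit(HTMLParser):
    """Collects a handful of machine-detectable accessibility problems."""

    def __init__(self) -> None:
        super().__init__()
        self.issues: List[str] = []
        self.last_heading = 0  # level of the most recent h1-h6 seen

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)  # tag and attribute names arrive lowercased
        if tag == "html" and not a.get("lang"):
            self.issues.append("<html> has no lang attribute")
        elif tag == "img" and "alt" not in a:
            self.issues.append(f"<img src={a.get('src')!r}> has no alt attribute")
        elif len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            level = int(tag[1])
            if self.last_heading and level > self.last_heading + 1:
                self.issues.append(f"heading jumps from h{self.last_heading} to h{level}")
            self.last_heading = level
        elif tag in ("div", "span") and "onclick" in a and "tabindex" not in a:
            self.issues.append(f"clickable <{tag}> cannot receive keyboard focus")

def audit(markup: str) -> List[str]:
    parser = A11yAudit()
    parser.feed(markup)
    parser.close()
    return parser.issues

generated = '<html><h1>Plans</h1><h3>Details</h3><img src="map.png"><div onclick="go()">Go</div></html>'
for issue in audit(generated):
    print(issue)
```

Checks like these catch only what is visible in the markup. Whether alt text is accurate, or whether a layout makes sense to a screen reader user, still requires human judgment.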

Technological Foundations and Emerging Tools

Despite the challenges, the technological underpinnings of GenUI are rapidly advancing, offering glimpses into its future capabilities. Google, a major proponent of the technology, maintains a public repository of examples demonstrating how various user inputs can be translated into diverse interactive interfaces. This research highlights the versatility of AI in rendering different layouts, components, and functionalities based on specific user prompts.

Taking this a step further, Google’s Project Genie bills itself as a platform capable of creating "interactive worlds" that are "generated in real-time." While access is currently invite-only, the ambition behind Project Genie underscores the long-term vision for GenUI: to create not just static pages, but entire dynamic, immersive digital environments that respond fluidly to user interaction and context.

For developers looking to integrate GenUI into their applications, Google also offers a GenUI SDK (Software Development Kit) designed for Flutter apps. This SDK allows developers to connect to various Large Language Model (LLM) providers and leverage their generative capabilities to create adaptive interfaces directly within their applications. Such tools empower developers to experiment with and implement personalized UI experiences, pushing the boundaries of what is possible in application design.

Beyond Google, other innovators are contributing to the adaptive GenUI space. Thesys, for example, is exploring how AI can facilitate intelligent, adaptive system design. Similarly, CopilotKit offers tools and playgrounds for developing generative UI, emphasizing the integration of AI assistance into the development workflow to build more dynamic and responsive interfaces. These initiatives demonstrate a growing ecosystem around GenUI, with tools and frameworks emerging to support its widespread adoption and integration into various digital products.

Broader Implications and the Future Landscape

The advent of Generative UI Design carries profound implications for the entire digital ecosystem, from individual users to professional designers and developers, and even the ethical considerations of AI.

- For Users: The promise is a highly personalized and efficient online experience. Imagine a website that instantly reconfigures itself to present information in a format best suited to your cognitive load, language preference, device, and even current emotional state. This could significantly reduce friction and enhance user satisfaction. However, it also raises questions about user control, transparency, and the potential for "filter bubbles" or manipulation if personalization becomes too opaque or aggressive.

- For Designers and Developers: GenUI is not necessarily an existential threat but a transformative tool. Designers may shift from pixel-perfect execution to higher-level strategic thinking, prompt engineering, and critical evaluation of AI-generated outputs. Their role could evolve into "AI orchestrators," guiding the generative process and refining the AI’s understanding of design principles and brand identity. Developers might spend less time on repetitive coding tasks and more on building the robust AI infrastructure, integrating generative models, and ensuring the technical performance and security of dynamic interfaces. Demand for skilled prompt engineers and AI ethicists is likely to grow.

- Ethical Considerations: The ethical implications are vast. Bias in AI models, derived from biased training data, could lead to discriminatory or exclusionary interfaces. Data privacy becomes even more critical as AI collects and interprets intimate user contexts to generate personalized UIs. The question of creative ownership—who owns the design generated by an AI?—also emerges. Furthermore, the "black box" nature of some generative models poses challenges for accountability and debugging, especially when accessibility failures or security vulnerabilities arise.

- The Future of Accessibility: The central challenge remains whether GenUI can truly deliver on its promise of inclusivity. The future likely involves a hybrid approach: AI assisting in the generation of accessible components and layouts, but with robust human oversight and specialized accessibility audits. Integrating accessibility standards (like WCAG) directly into the AI’s training data and evaluation metrics will be paramount. This could involve developing specialized AI models specifically trained on accessible design patterns and user interaction data from individuals with disabilities. The goal should not be to replace accessibility practitioners but to augment their capabilities, empowering them to create more inclusive digital experiences at scale.
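The hybrid approach described in the last point can be sketched as a control loop: generate, run automated checks, feed any failures into the next attempt, and route the output to a human reviewer when the model cannot produce a passing result. In this sketch `generate` and `check` are placeholders (here a stub "model" and a one-rule checker, both hypothetical); a real system would call an LLM and a much fuller audit, and even output that passes would still warrant the specialized human audits described above.

```python
from typing import Callable, List, Optional, Tuple

Generator = Callable[[str, List[str]], str]  # (prompt, feedback) -> markup
Checker = Callable[[str], List[str]]         # markup -> list of issues

def generate_with_gate(generate: Generator, check: Checker, prompt: str,
                       max_attempts: int = 3) -> Tuple[Optional[str], List[str]]:
    """Regenerate until automated checks pass, otherwise escalate to a person.

    Returns (markup, []) when a passing result was produced, or
    (None, issues) when the output should go to an accessibility reviewer.
    """
    feedback: List[str] = []
    for _ in range(max_attempts):
        markup = generate(prompt, feedback)
        issues = check(markup)
        if not issues:
            return markup, []
        feedback = issues  # tell the next attempt what failed
    return None, feedback

# Stand-ins for illustration: a "model" that fixes alt text once told about it,
# and a checker that only looks for an alt attribute.
def fake_model(prompt: str, feedback: List[str]) -> str:
    return '<img src="route.png" alt="Route map">' if feedback else '<img src="route.png">'

def alt_check(markup: str) -> List[str]:
    return [] if "alt=" in markup else ["image is missing alt text"]

print(generate_with_gate(fake_model, alt_check, "show my route"))
```

Passing the automated checks is treated as a minimum rather than a sign-off; the `None` branch is where accessibility practitioners stay in the loop, consistent with the goal of augmenting rather than replacing them.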

In conclusion, Generative UI Design represents a monumental leap forward in web development, offering an exciting vision of hyper-personalized and dynamically responsive digital experiences. Its potential to streamline workflows and tailor interfaces to individual users is undeniable. However, the journey towards widespread adoption must be navigated with caution, particularly concerning the critical imperative of accessibility. The early missteps with commercial GenUI tools serve as a vital reminder that innovation must be coupled with ethical responsibility and a steadfast commitment to universal design principles. For GenUI to truly fulfill its promise as the future of web design, it must evolve into a technology that not only creates but also includes, ensuring that the digital world becomes more accessible and equitable for everyone.