The digital landscape of 2024 and 2025 has undergone a fundamental shift, moving from a period of information scarcity to an era of overwhelming data saturation. For content creators, journalists, and brand strategists, the ability to navigate this environment is no longer a secondary skill but a primary requirement for professional survival. Establishing a positive first impression through well-researched content has become the cornerstone of audience retention and brand authority. As the barrier to entry for publishing remains low, the "trust deficit" among global readers has increased, making the rigorous verification of facts, the utilization of advanced search techniques, and the application of academic evaluation frameworks essential components of the content lifecycle.

The Evolution of Search and Information Retrieval

Digital research has passed through several distinct phases. In the early 1990s, information retrieval was limited to basic directories and rudimentary keyword matching. With the advent of Google in 1998 and its subsequent dominance of the search market (it currently holds roughly 90% of the global search engine market), the methodology for finding information became more sophisticated. However, the rise of Search Engine Optimization (SEO) "spam" and the proliferation of AI-generated content have increasingly cluttered search engine results pages (SERPs), often burying high-quality primary sources under layers of derivative content.

In response to this complexity, professional researchers have returned to "Boolean-style" search operators to bypass algorithmic biases. These commands, often referred to as Google Search Operators, allow users to filter the vast index of the web with surgical precision. According to data from search analytics firms, the use of advanced operators can reduce research time by up to 50% by eliminating irrelevant domains and file types from the results.

Technical Methodologies for Advanced Digital Research

The application of search operators represents a technical approach to information gathering that separates professional researchers from casual users. Joshua Hardwick, Head of Content at Ahrefs, emphasizes that these tools are vital for both content discovery and technical audits.

One of the most powerful tools in the researcher’s arsenal is the "site:" operator. This command restricts results to a specific domain, allowing a creator to audit a competitor’s output or find specific mentions of a topic within a trusted institution’s archives. When combined with "inurl:" or another "in"-prefixed operator such as "intitle:", it enables the discovery of specific sub-topics that may not be surfaced by general keyword searches.
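
As a minimal sketch of how such a query might be assembled programmatically, the Python snippet below joins keywords with optional "site:" and "inurl:" operators and URL-encodes the result. The helper names `build_query` and `search_url`, the domain `example.edu`, and the keywords are all illustrative assumptions, not part of any official API.

```python
from urllib.parse import quote_plus


def build_query(keywords, site=None, inurl=None):
    """Compose a search string from keywords plus optional operators."""
    parts = [keywords]
    if site:
        parts.append(f"site:{site}")    # restrict results to one domain
    if inurl:
        parts.append(f"inurl:{inurl}")  # require this text in the URL
    return " ".join(parts)


def search_url(query):
    """Turn the query into a URL that can be pasted into a browser."""
    return "https://www.google.com/search?q=" + quote_plus(query)


# Hypothetical example: research pages on a placeholder university domain.
query = build_query("remote work statistics", site="example.edu", inurl="research")
print(query)
print(search_url(query))
```

Keeping the operators as separate arguments makes it easy to reuse the same keywords across several trusted domains.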

Furthermore, the "filetype:" operator serves a critical function in identifying primary data sources. While blog posts often summarize findings, professional research frequently requires access to original PDF reports, Excel datasets, or PowerPoint presentations. By targeting specific extensions like .pdf or .xlsx, researchers can access white papers and census data that provide the statistical backbone for authoritative content.
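
One way to apply this systematically is to generate one query per file type of interest. The sketch below assumes a hypothetical helper, `filetype_queries`, and an illustrative topic string; it only builds the query text and does not contact any search engine.

```python
def filetype_queries(topic, extensions):
    """Return one query per extension, e.g. 'topic filetype:pdf'."""
    queries = []
    for ext in extensions:
        clean = ext.lstrip(".").lower()  # accept '.pdf', 'pdf', or 'PDF'
        queries.append(f"{topic} filetype:{clean}")
    return queries


# Hypothetical topic; the quotes ask the search engine for an exact phrase.
for q in filetype_queries('"household income" census', [".pdf", ".xlsx", "PPTX"]):
    print(q)
```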

The strategic use of the "related:" search operator also facilitates competitive analysis. This command allows creators to identify websites that Google’s algorithm perceives as being in the same niche. Understanding these clusters is essential when scoping out the competition, the phase in which creators must identify gaps in existing discourse to provide unique value to their audience.

The CRAAP Test: A Framework for Source Reliability

Once information is gathered, the next challenge is verification. The "CRAAP" test, originally developed by Sarah Blakeslee and her team of librarians at California State University, Chico, has become a widely used standard for evaluating the integrity of digital sources. This framework is increasingly taught in journalism schools and corporate communications departments to mitigate the risk of spreading misinformation.

Currency: The Timeline of Relevance

In fast-moving sectors such as technology, finance, and medicine, the "Currency" of information is paramount. Data from 2022 regarding artificial intelligence, for instance, may be fundamentally obsolete by 2025. Professional standards suggest that researchers must prioritize the most recent publications while checking for "evergreen" facts that remain stable over decades.
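
A currency check of this kind can be sketched as a simple age threshold per sector. The thresholds in `MAX_AGE_YEARS` below are illustrative assumptions rather than published standards, and `is_current` is a hypothetical helper name.

```python
from datetime import date

# Assumed shelf lives in years; tune these for the topic at hand.
MAX_AGE_YEARS = {"technology": 2, "finance": 3, "medicine": 5, "history": 50}


def is_current(published, sector, today=None):
    """Return True if the source is younger than the sector's threshold."""
    today = today or date.today()
    age_years = (today - published).days / 365.25
    return age_years <= MAX_AGE_YEARS[sector]


# A mid-2022 AI report evaluated in early 2025 fails the assumed 2-year limit.
print(is_current(date(2022, 6, 1), "technology", today=date(2025, 1, 15)))  # False
print(is_current(date(2024, 3, 1), "technology", today=date(2025, 1, 15)))  # True
```

A failed check is a prompt to look for a newer publication, not proof that the older fact is wrong; "evergreen" facts can safely exceed any threshold.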

Relevance and Authority

The "Relevance" of a source determines its fit for the intended audience, while "Authority" examines the credentials of the author. Purdue Global’s research experts note that a source is only as credible as the reputation of its creator. This involves verifying professional backgrounds, checking for peer-reviewed citations, and ensuring that the author has a demonstrated history of expertise in the specific subject matter.

Accuracy and Purpose

"Accuracy" involves cross-referencing claims against multiple independent sources. A single outlier report, even from a reputable domain, may not be sufficient to support a major claim. Finally, "Purpose" examines the intent behind the information. Researchers must distinguish between objective reporting and persuasive content. While bias does not inherently disqualify a source, failing to identify that bias can lead to a loss of audience trust.

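The cross-referencing step described under "Accuracy" can be approximated by counting how many distinct domains support a claim. The following is a rough sketch under stated assumptions: the function names, the minimum of two sources, and the example URLs are hypothetical, and distinct hostnames do not guarantee editorial independence (syndicated copies of one report would still need manual review).

```python
from urllib.parse import urlparse


def independent_domains(urls):
    """Collect the distinct hostnames behind a list of source URLs."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[4:]  # treat www.example.org and example.org as one
        if host:
            domains.add(host)
    return domains


def is_corroborated(urls, minimum=2):
    """True if the claim is backed by at least `minimum` distinct domains."""
    return len(independent_domains(urls)) >= minimum


sources = [
    "https://www.example.org/report",
    "https://example.org/summary",       # same publisher as the first
    "https://data.example.com/table",
]
print(sorted(independent_domains(sources)))
print(is_corroborated(sources))
```
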
The Economic Impact of Research Credibility

The "Creator Economy" is currently valued at approximately $250 billion and is projected to reach nearly $480 billion by 2027. In this high-stakes environment, the financial cost of poor research is significant. Brand credibility acts as a form of "social capital" that directly correlates with conversion rates and subscriber loyalty.

A 2023 study on consumer trust indicated that 81% of consumers need to trust a brand before buying from it. For content-led businesses, that trust is built through the accuracy of the information provided. One high-profile error or the citation of a debunked source can lead to a permanent loss of "Domain Authority" and a decline in organic search rankings, as search engines like Google continue to refine their "E-E-A-T" (Experience, Expertise, Authoritativeness, and Trustworthiness) guidelines.

Data-Driven Content Organization

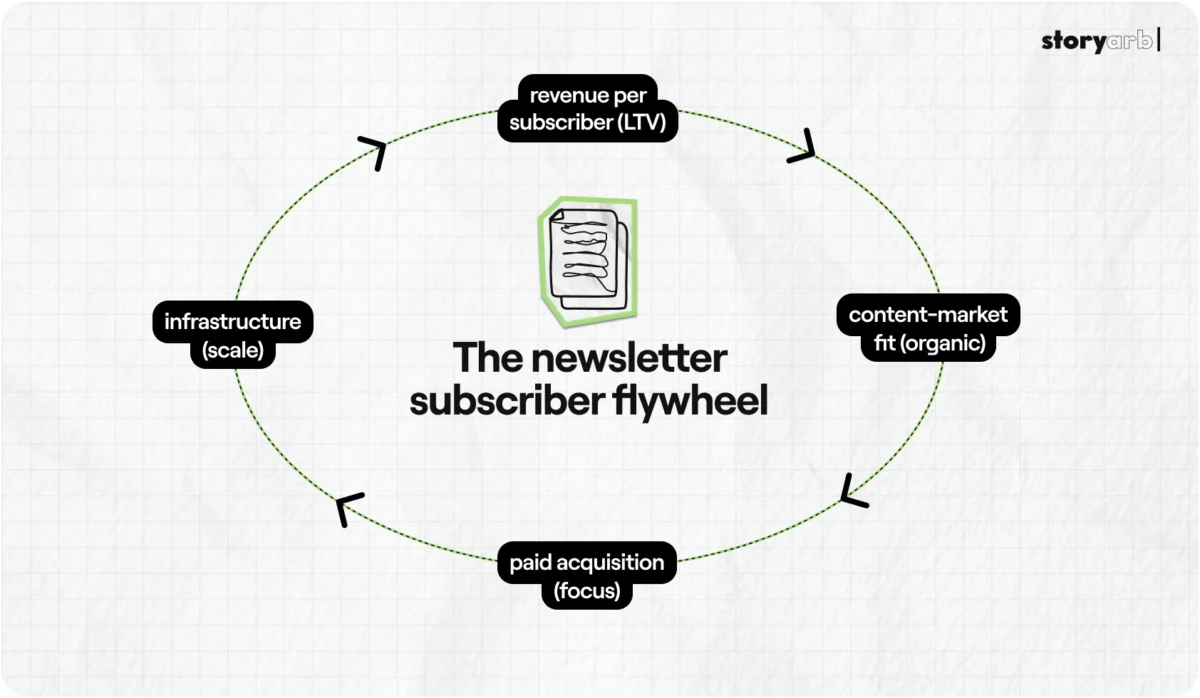

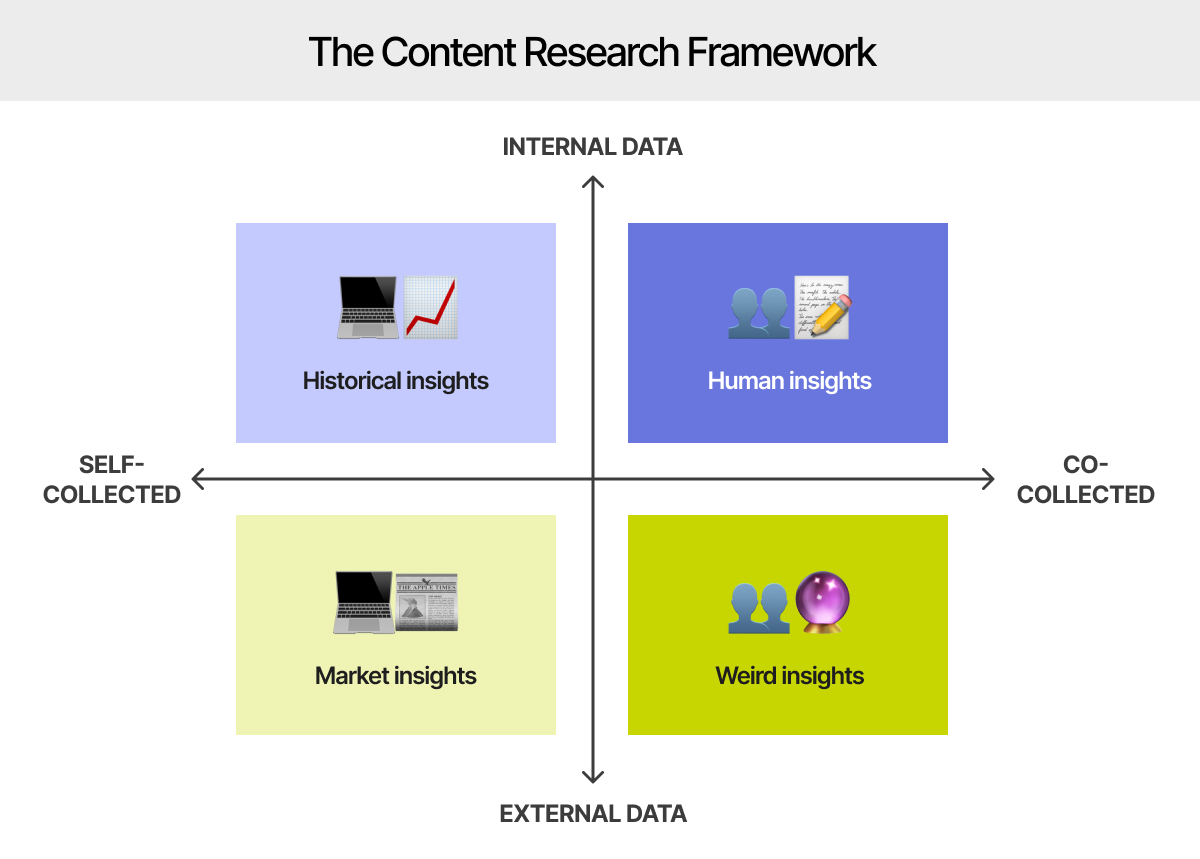

The transition from raw research to a finished article requires a structured framework. The "Content Research Framework" often utilized by data-driven journalists involves four key stages:

- Goal Definition: Establishing the specific problem the content aims to solve.

- Resource Gathering: Utilizing the aforementioned search operators to collect a diverse range of primary and secondary sources.

- Connection and Organization: Identifying patterns within the data and organizing them into a logical narrative.

- Competitive Auditing: Ensuring the content offers a perspective or depth of data not found in existing high-ranking articles.

This systematic approach prevents "information overload" and ensures that the final product is not just a collection of facts, but a cohesive solution to a reader’s query.
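
For teams that track drafts in a script or spreadsheet export, the four stages above could be modeled as an ordered checklist. This is only an illustrative sketch; the `Stage` enum and `next_stage` helper are hypothetical names introduced here.

```python
from enum import Enum


class Stage(Enum):
    GOAL_DEFINITION = 1
    RESOURCE_GATHERING = 2
    CONNECTION_AND_ORGANIZATION = 3
    COMPETITIVE_AUDITING = 4


def next_stage(completed):
    """Return the first stage not yet completed, or None if all are done."""
    for stage in Stage:  # Enum members iterate in definition order
        if stage not in completed:
            return stage
    return None


done = {Stage.GOAL_DEFINITION, Stage.RESOURCE_GATHERING}
print(next_stage(done).name)  # CONNECTION_AND_ORGANIZATION
```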

Official Responses and Industry Standards

Major platforms and educational institutions have begun taking proactive steps to standardize digital research. Google’s "Search Essentials" (formerly Webmaster Guidelines) provides clear instructions on how to ensure content is discoverable and credible. Simultaneously, tools like Ahrefs and Semrush have evolved to provide "Site Explorers" and "Traffic Checkers" that allow creators to verify the legitimacy of their peers’ data.

Academic institutions, such as the University of Toronto and Purdue Global, have published extensive guides on identifying "fake news" and "sensationalism." These guides emphasize that professional tone, the absence of spelling errors, and the transparency of research methods are the hallmarks of a dependable source.

Broader Implications and Future Outlook

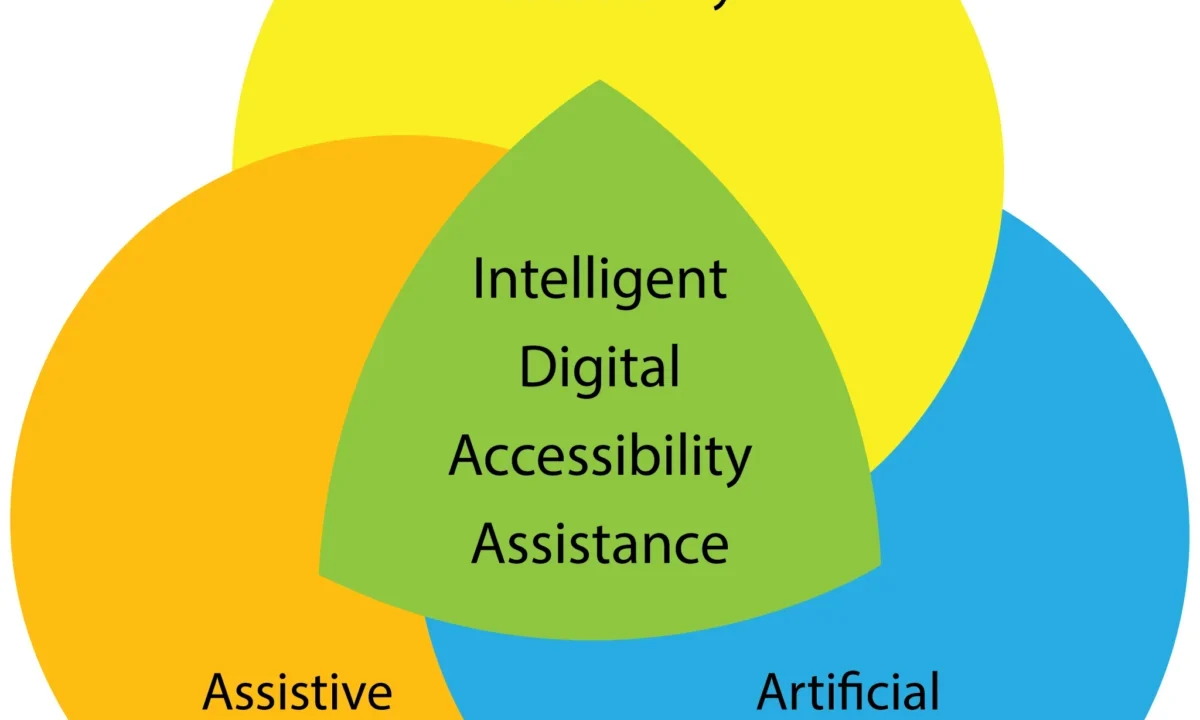

As generative AI becomes more integrated into search engines through features like Google’s "Search Generative Experience" (SGE), the role of the human researcher is shifting from "finder" to "verifier." While AI can summarize existing information, it is prone to "hallucinations": the generation of false or misleading data presented as fact.

This technological shift increases the value of the diligent human researcher. In the future, the most successful content creators will be those who can blend the efficiency of AI-assisted search with the rigorous, manual verification processes of traditional journalism. The ability to diagnose "indexing issues" and to apply "noindex rules" that keep proprietary content out of search results will also become a vital skill for creators looking to monetize their research through paywalls or specialized newsletters.
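
To illustrate what a "noindex" rule looks like in practice, the sketch below scans a page's HTML for a robots meta tag using Python's standard `html.parser` module. The class and function names are hypothetical, the sample page is invented, and the check is deliberately partial: it ignores the equivalent `X-Robots-Tag` HTTP header and crawler-specific tags such as `googlebot`. Note also that noindex only asks search engines not to list a page; it is not access control, which is what a paywall provides.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() == "robots":
            content = attr.get("content") or ""
            for directive in content.split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def has_noindex(html):
    """Return True if the page asks search engines not to index it."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Invented sample page for a subscriber-only report.
page = '<html><head><meta name="robots" content="noindex, nofollow"></head><body>Members only</body></html>'
print(has_noindex(page))  # True
```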

In conclusion, the mastery of research is not merely an academic exercise but a strategic business necessity. By utilizing advanced search operators, adhering to the CRAAP framework, and maintaining a commitment to transparency, creators can build a foundation of trust that is resilient to the volatility of the digital attention economy. The "embarrassing first impression" of inaccurate content is a risk that modern professionals cannot afford to take in a world where data is the most valuable currency.