The Enduring Challenge of Design Handoff: A Historical Perspective

For decades, the journey from design concept to live product has been fraught with challenges, largely centered around the "handoff" phase. Historically, designers would craft visually compelling mockups using tools like Adobe Photoshop or Illustrator. These static images would then be handed over to developers, who were tasked with translating pixels into functional code. This process was inherently prone to discrepancies, requiring extensive communication, numerous iterations, and often leading to a final product that diverged from the initial design vision.

The evolution of design tools saw the rise of specialized applications like Sketch, Adobe XD, and ultimately, Figma. These platforms introduced vector-based canvases, component libraries, and collaborative features, significantly streamlining the design process and improving consistency. Figma, in particular, revolutionized the industry with its browser-based collaborative environment, allowing multiple designers to work simultaneously on a single file and providing inspectable code snippets for developers. While these advancements considerably reduced the friction of the handoff, they did not eliminate the fundamental disconnect: the design remained a representation of the code, not the code itself. Developers still had to manually interpret and translate design specifications (spacing, typography, interactive states) into functional HTML, CSS, and JavaScript. This translation layer often introduced errors, slowed development cycles, and required constant back-and-forth, costing time and resources. Design-to-development misalignment remains a commonly cited frustration for both creative and engineering teams, affecting project timelines and budgets.

Paper.design’s Foundational Premise: Design as Native Code

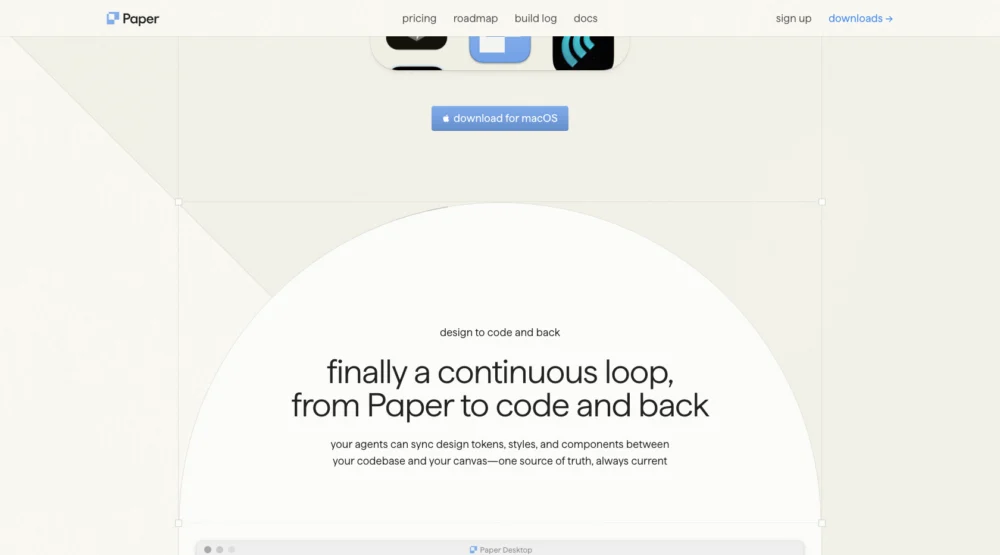

Paper.design challenges this traditional paradigm by positing a radical alternative: what if the design was the code from its inception? This core philosophy underpins its entire architecture, aiming to dissolve the handoff problem rather than merely optimize it. The platform is built on the premise that a design tool should inherently understand the language of the web, thereby creating a continuous, rather than fractured, loop between creative intent and technical execution.

Embracing Web Standards for Seamless Integration

At the heart of Paper.design is its HTML/CSS native canvas. Unlike traditional design tools that often rely on SVG (Scalable Vector Graphics) for their canvases—which, while excellent for resolution independence and graphical precision, still require interpretation for web layout—Paper’s canvas directly speaks HTML and CSS. Every artboard created within Paper.design is fundamentally structured using web standards. This means that properties critical for web development, such as padding, gap, flex direction, and other intricate CSS layout attributes, are natively understood and manipulated within the design environment. There is no translation layer, no complex export step that might inadvertently break responsive spacing or visual fidelity. This direct correspondence ensures that what a designer sees in Paper is a remarkably accurate representation of what a developer will implement, accelerating the development process and drastically reducing post-design adjustments. This approach aligns with the growing demand for design systems that are inherently code-aware, fostering greater consistency and efficiency across large-scale projects.
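To make the idea of a design node that is already expressed in CSS terms more concrete, here is a minimal sketch. The node structure and field names are assumptions made for illustration, not Paper.design's actual document format; the point is only that properties such as padding, gap, and flex direction can be emitted as CSS without any conversion step.

```python
from html import escape

# Hypothetical layout description; the field names are illustrative,
# not Paper.design's actual document format.
card = {
    "tag": "div",
    "style": {
        "display": "flex",
        "flex-direction": "column",
        "gap": "12px",
        "padding": "24px",
    },
    "children": [
        {"tag": "h2", "text": "Pricing"},
        {"tag": "p", "text": "Free tier & Pro tier"},
    ],
}


def render(node: dict) -> str:
    """Render a layout node (and its children) to HTML with inline CSS."""
    style = "; ".join(f"{prop}: {value}" for prop, value in node.get("style", {}).items())
    attrs = f' style="{escape(style)}"' if style else ""
    inner = escape(node.get("text", ""))
    inner += "".join(render(child) for child in node.get("children", []))
    return f"<{node['tag']}{attrs}>{inner}</{node['tag']}>"


html_output = render(card)
print(html_output)
```

Because the style keys are already valid CSS property names, the renderer only has to join them; nothing is reinterpreted along the way.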

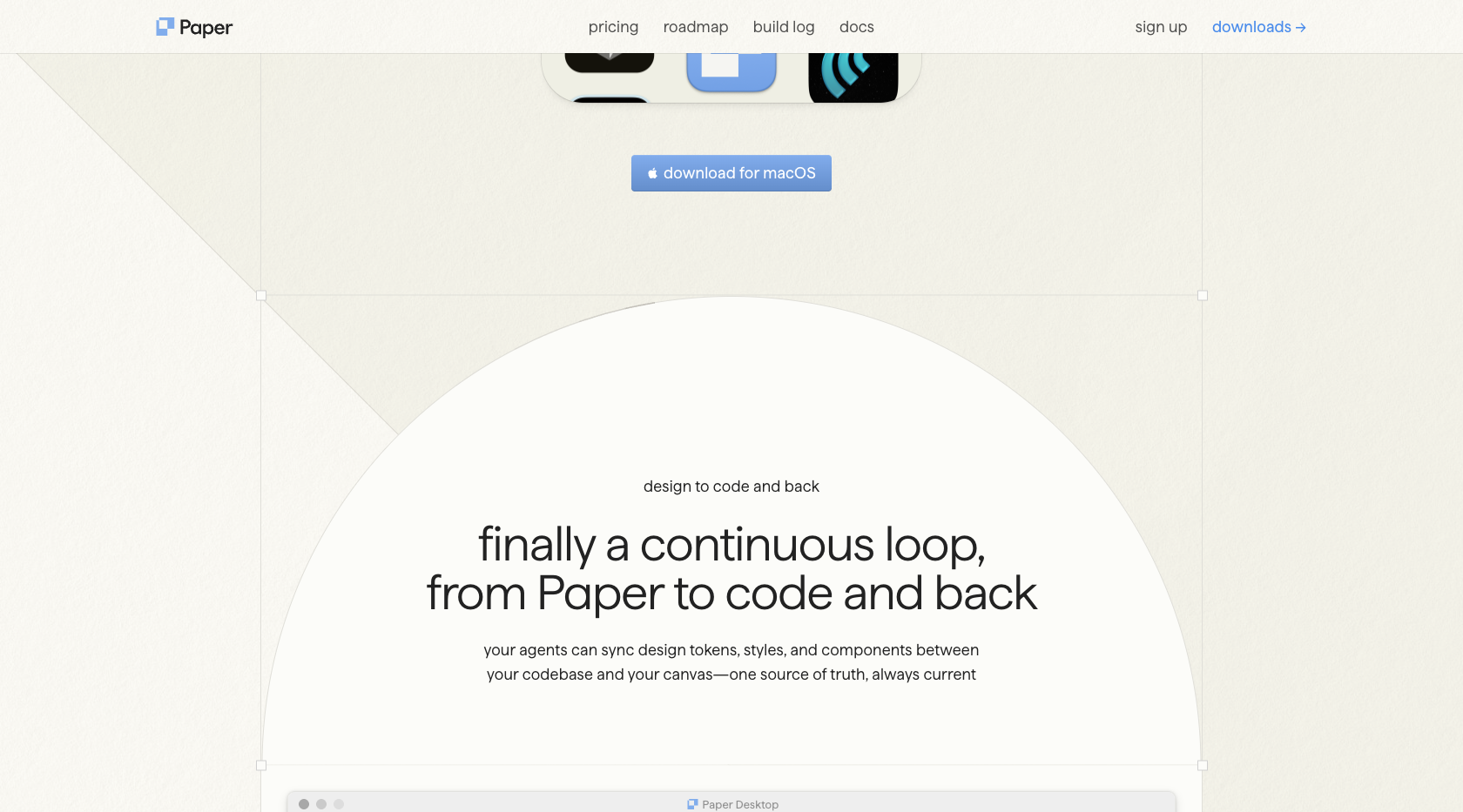

The Continuous Design-to-Code Loop

Paper.design’s innovation extends beyond web-standards compliance: it establishes a genuinely bidirectional relationship between design and code, something many tools have aspired to but few have fully realized. In this continuous loop, designers create within Paper, and integrated AI agents automatically sync design tokens and components to the project’s codebase. Crucially, the flow also runs the other way. Changes made directly in the code can flow back into the Paper.design canvas, instantly updating the visual representation. This live synchronization eliminates the typical version control headaches associated with keeping design files and codebases aligned, so that both creative and engineering teams are always working from the most current state.
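A round trip of this kind can be sketched with design tokens stored as CSS custom properties. The following is only an illustration under assumptions: the token names and the helper functions `tokens_to_css` and `css_to_tokens` are hypothetical, and Paper.design's real sync mechanism is not documented here.

```python
import re


def tokens_to_css(tokens: dict[str, str]) -> str:
    """Design side -> code side: emit tokens as CSS custom properties."""
    lines = [f"  --{name}: {value};" for name, value in sorted(tokens.items())]
    return ":root {\n" + "\n".join(lines) + "\n}"


# Matches declarations such as "--space-md: 16px;"
_DECLARATION = re.compile(r"--([\w-]+)\s*:\s*([^;]+);")


def css_to_tokens(css: str) -> dict[str, str]:
    """Code side -> design side: read custom properties back into tokens."""
    return {name: value.strip() for name, value in _DECLARATION.findall(css)}


# Hypothetical token set, for illustration only.
design_tokens = {"color-primary": "#3b82f6", "space-md": "16px"}

css = tokens_to_css(design_tokens)          # designer's tokens written to code
edited_css = css.replace("16px", "20px")    # developer edits the stylesheet
synced_tokens = css_to_tokens(edited_css)   # the edit flows back to the design

print(synced_tokens)
```

The useful property is that both directions share one representation, so an edit on either side can be read back by the other without loss.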

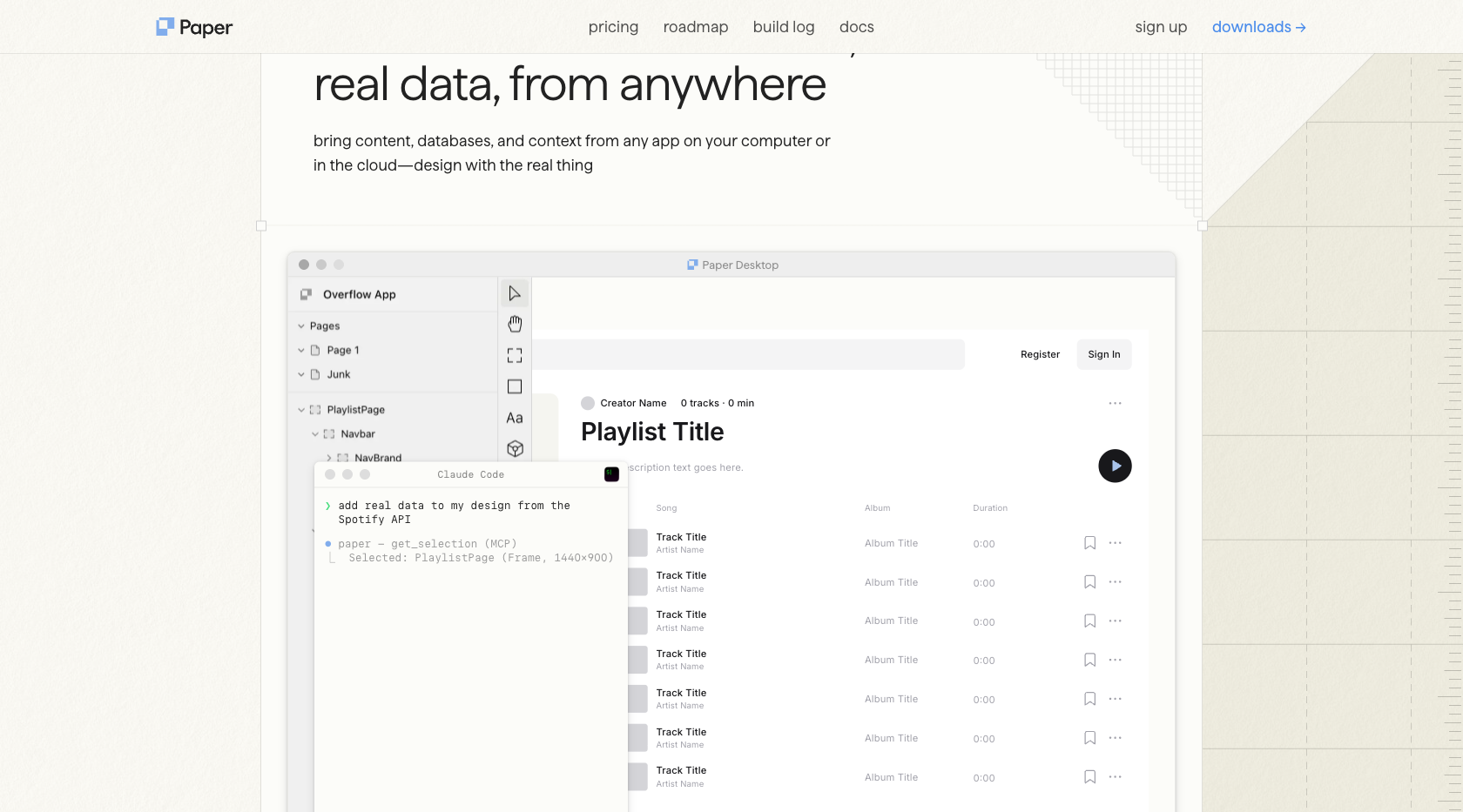

Furthermore, Paper.design leverages its MCP integration to connect with real-world data sources. An AI agent can dynamically pull content from popular platforms like Notion, Figma, or any custom API directly into the design. This capability is transformative for data-driven designs, allowing designers to visualize layouts with actual content from databases, content management systems, or external services, rather than relying on placeholder text or static mock data. This real-time data integration is invaluable for applications like e-commerce sites displaying product catalogs, news portals showing live articles, or dashboards visualizing dynamic metrics, ensuring that designs are robust and adaptable to changing content.
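As a rough sketch of what populating a layout with real records involves, the example below fills an HTML fragment from a JSON payload. The payload, field names, and `populate` helper are assumptions made for illustration; in practice an agent would fetch the data through MCP or an API rather than from a hard-coded string.

```python
import json
from html import escape
from string import Template

# Stand-in for a JSON payload an agent might fetch from an API.
# The field names are hypothetical.
api_payload = '[{"name": "Desk Lamp", "price": 39.0}, {"name": "Notebook", "price": 4.5}]'

CARD = Template('<li class="product"><span>$name</span><span>$price</span></li>')


def populate(records: list[dict]) -> str:
    """Fill one product card per record and wrap the cards in a list."""
    items = [
        CARD.substitute(name=escape(r["name"]), price=f"${r['price']:.2f}")
        for r in records
    ]
    return "<ul>\n" + "\n".join(items) + "\n</ul>"


markup = populate(json.loads(api_payload))
print(markup)
```

Designing against real records like these surfaces long names, missing fields, and odd price formats that placeholder text would hide.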

Unleashing Visual Creativity: The Power of GPU Shaders

While its code-centric approach forms the brain of Paper.design, its GPU-accelerated shaders represent its artistic heart, providing an unparalleled visual superpower. The platform boasts one of the most comprehensive and impressive collections of native, real-time visual effects available within a design tool. These are not merely static Photoshop filters or cumbersome third-party plugins; they are sophisticated shaders that harness the raw processing power of the Graphics Processing Unit (GPU), offering deep parameter control and instant live previews.

Beyond Filters: Real-time, Controllable Effects

The lineup of shaders is designed to empower designers with cutting-edge visual aesthetics that are both performant and highly customizable. Examples include:

- Halftone CMYK: Recreates classic print-style halftone patterns with granular control over CMYK color channels, ideal for retro or stylized graphics.

- Grain Gradient: Introduces organic noise and texture to gradient fills, adding depth and a tactile quality often sought in modern digital aesthetics.

- Fluted Glass: Generates convincing reeded-glass surfaces, complete with realistic highlights, shadows, and distortion, perfect for creating sophisticated UI elements or background textures.

- Liquid Metal: Produces dynamic, chrome-like surfaces with extensive controls over shape, angle, and reflectivity, enabling futuristic or high-sheen visual effects.

- Halftone Dots: Offers vintage pop-art dot patterns, providing a distinct stylistic choice for illustrations and graphical elements.

- Mesh Gradient, Swirl, Water, Image Dithering, Paper Texture, and many more, each providing unique visual capabilities.

The critical distinction is that every one of these effects is GPU-rendered, ensuring smooth performance even with complex manipulations. Designers can tweak parameters in real-time, observing immediate changes on the canvas, fostering a more fluid and experimental creative workflow. This level of native integration and performance stands in stark contrast to traditional tools where such effects often require external plugins, rendering, or complex workarounds.
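To give a sense of the per-pixel work such an effect performs, here is a CPU reference sketch of ordered (Bayer) dithering, one common dithering technique. It is an assumption that Paper's Image Dithering effect resembles this; the source does not say which algorithm it uses. A GPU shader would evaluate the same comparison for every pixel in parallel, which is what makes live parameter changes feasible.

```python
# Standard 4x4 Bayer threshold matrix.
BAYER_4X4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]


def ordered_dither(gray: list[list[float]]) -> list[list[int]]:
    """Convert grayscale values in [0, 1] to 0/1 pixels using a tiled Bayer threshold."""
    result = []
    for y, row in enumerate(gray):
        out_row = []
        for x, value in enumerate(row):
            threshold = (BAYER_4X4[y % 4][x % 4] + 0.5) / 16
            out_row.append(1 if value > threshold else 0)
        result.append(out_row)
    return result


# A flat 50% gray tile: half of the pixels should switch on.
mid_gray = [[0.5] * 4 for _ in range(4)]
dithered = ordered_dither(mid_gray)
for row in dithered:
    print("".join("#" if pixel else "." for pixel in row))
```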

AI-Driven Aesthetic Manipulation

A truly groundbreaking aspect of Paper.design’s shaders is their deep integration with the MCP layer, allowing AI agents to read, understand, and manipulate these visual effects. This means a designer could, for example, prompt an AI agent like Claude to "adjust the intensity of the grain gradient on the hero image" or "swap the halftone pattern to a CMYK style with increased cyan density." This capability ushers in a new dimension of "vibe coding," where high-level artistic directives can be interpreted and executed by AI, dramatically accelerating the iterative process of visual refinement and exploration. It moves beyond mere automation of mundane tasks, enabling AI to participate in the subjective and nuanced aspects of aesthetic design, allowing designers to articulate creative intent through natural language.

The Agentic Canvas: Bidirectional AI via Model Context Protocol (MCP)

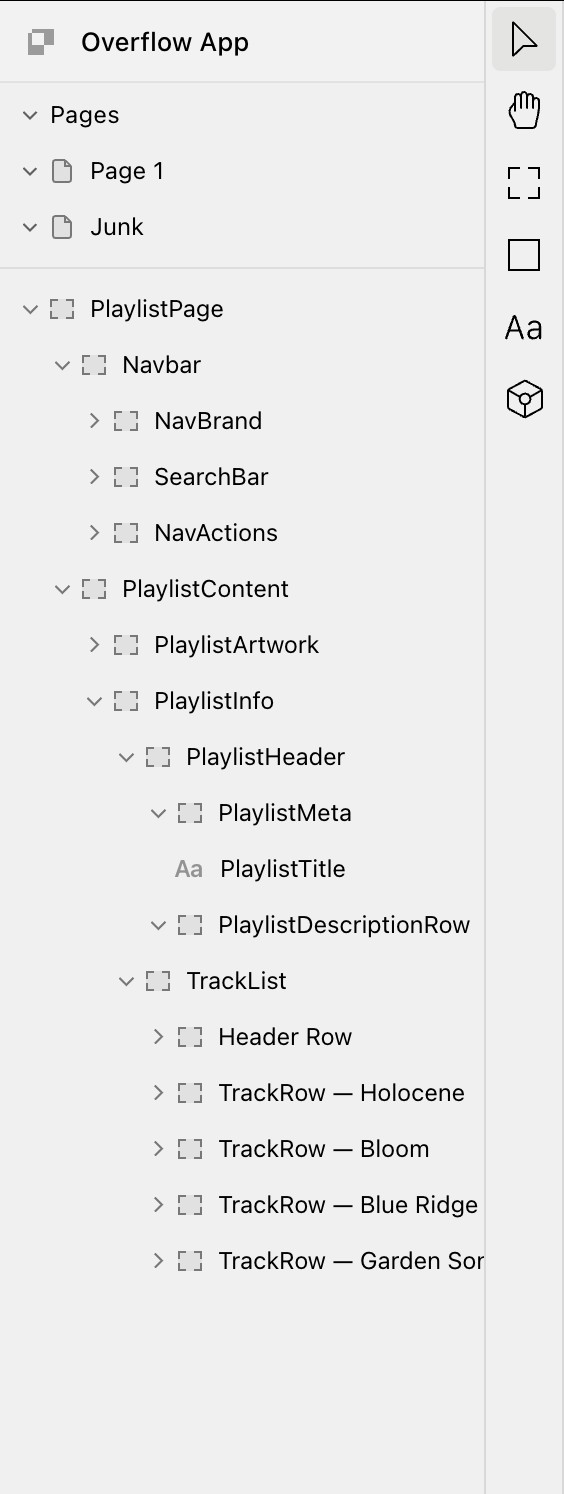

Paper.design’s embrace of AI is epitomized by its comprehensive Model Context Protocol (MCP) integration. The platform exposes 24 distinct tools through an authenticated MCP server, establishing it as a truly "agent-first" design environment. Crucially, unlike many existing tool integrations that permit AI agents only to read and analyze work, Paper.design supports full bidirectional access. This means an AI agent operating within environments like Claude Code or Cursor can not only inspect a design but also actively modify it.

A New Era of AI-Powered Workflows

The suite of tools available to AI agents through MCP is extensive and covers a wide range of design and development tasks.

- Read tools include `get_selection` (to understand what element is currently targeted), `get_jsx` (to retrieve the underlying code structure), `get_screenshot` (for visual context), and `get_computed_styles` (to analyze applied CSS properties). These enable AI to gain a thorough understanding of the current design state.

- Write tools such as `create_artboard`, `write_html`, `set_text_content`, and `update_styles` empower AI agents to directly manipulate the canvas. This bidirectional capability is a significant differentiator, moving beyond mere analysis to active creation and modification.

Setup is designed to be simple. For users of Claude Code, a single terminal command establishes the connection: `claude mcp add paper --transport http http://127.0.0.1:29979/mcp --scope user`. Cursor users get a one-click deeplink, and the integration is also compatible with VS Code Copilot, Codex, and OpenCode out of the box. This keeps the barrier to entry low for developers and designers keen on exploring AI-driven workflows.

Practical Applications Across the Development Lifecycle

The practical workflows unlocked by Paper.design’s MCP integration represent genuinely new territory in digital product development:

- Automated Design System Management: An AI agent can monitor changes in a central design system (e.g., in Figma) and automatically sync design tokens, components, and style updates directly into the Paper.design canvas, ensuring consistency across all projects.

- Dynamic Content Population: Agents can pull real-time data from any API—be it Spotify for music content, an e-commerce backend for product listings, or a news API for article feeds—and dynamically populate UI elements within the design, making prototypes far more realistic and functional.

- Responsive Design Generation: An AI can analyze a desktop design and, using predefined rules or learned patterns, generate responsive layouts for tablet and mobile viewports, significantly accelerating the adaptation process.

- Code Generation and Version Control: Perhaps one of the most impactful applications, an agent can convert a Paper.design layout into production-ready code (e.g., React/Tailwind CSS) and even commit it directly to a GitHub repository, integrating design seamlessly into the continuous integration/continuous deployment (CI/CD) pipeline.

- Accessibility and Optimization: AI agents can analyze designs for accessibility compliance (e.g., contrast ratios, semantic HTML structure) and suggest or even implement improvements directly within the canvas. They can also optimize image assets or suggest performance enhancements.

These capabilities suggest a future where the line between designer and developer blurs further, with AI serving as a powerful intermediary, handling the repetitive and rule-based tasks, freeing human creativity for more complex problem-solving and innovative ideation.
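Of the workflows above, the accessibility check is the easiest to pin down, because contrast ratio has a published definition in WCAG 2.x. The sketch below implements that formula; how an agent would obtain the colors from the canvas (for example via `get_computed_styles`) is left out, and the helper names are illustrative.

```python
def _channel(value: int) -> float:
    """Linearize one 8-bit sRGB channel as defined by WCAG 2.x."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a '#rrggbb' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _channel(r) + 0.7152 * _channel(g) + 0.0722 * _channel(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str) -> bool:
    """WCAG AA requires at least 4.5:1 for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


print(round(contrast_ratio("#000000", "#ffffff"), 2))
print(passes_aa("#767676", "#ffffff"), passes_aa("#777777", "#ffffff"))
```

A rule-based check like this is a good fit for an agent because the pass or fail threshold is unambiguous, unlike most aesthetic judgments.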

Navigating the Competitive Landscape: Paper.design’s Unique Position Against Industry Giants

It is impossible to discuss Paper.design without acknowledging Figma, the de facto industry standard for digital product design. Figma’s vector-based canvas, robust collaboration features, and mature ecosystem have made it a fixture for professional design teams worldwide. However, Paper.design is not positioned as a direct competitor seeking to replace Figma across all use cases; rather, it aims to redefine what a design canvas can achieve in an increasingly agent-first, code-centric world.

Figma’s Dominance and Paper’s Distinctive Niche

Figma excels at precision, complex component management, and large-scale design system implementation, and it offers collaboration tools that large production teams depend on. Its maturity, extensive plugin ecosystem, and user base provide significant advantages. Figma is likely to remain the go-to for established design workflows and collaborative ideation for the foreseeable future.

Paper.design, however, carves out a distinct niche by fundamentally altering the underlying technical substrate and interaction model. Its HTML/CSS canvas is inherently closer to the web’s native rendering mechanisms, making it optimized for direct manipulation by code-aware AI agents. While Figma has introduced its own MCP support, Paper.design’s implementation is designed from the ground up for bidirectional communication, allowing agents not just to read and analyze but also to write back and modify the canvas directly. This is a crucial distinction for enabling truly automated design workflows.

For visual effects, Figma relies heavily on a vast array of community-developed plugins, which, while powerful, can sometimes introduce performance overhead or inconsistencies. Paper.design, conversely, integrates GPU-accelerated shaders natively, offering highly optimized, real-time visual effects with deep parameter control directly within the canvas environment. This native integration ensures superior performance and a more cohesive user experience for applying complex visual styles.

Targeting the Designer-Developer Hybrid and AI-First Teams

Given its unique feature set, Paper.design’s ideal user profile currently leans towards the designer-developer hybrid, solo founders, or small teams that are actively building AI-first workflows using tools like Claude Code or Cursor. These users often possess a strong understanding of both design aesthetics and coding principles, making them uniquely positioned to leverage Paper.design’s code-native and agent-driven capabilities. For these innovators, the ability to design directly in web standards, integrate real data seamlessly, and automate repetitive tasks through AI agents offers a significant productivity boost and a more agile development process. It caters to those who prioritize a seamless transition from design to production-ready code over the extensive collaborative features required by massive enterprise teams. This strategic positioning allows Paper.design to address an emerging market segment that is increasingly seeking tools that bridge the gap between creative expression and technical implementation through intelligent automation.

Broader Implications for Design and Development Workflow

The advent of tools like Paper.design carries profound implications for the future of digital product creation. It signifies a maturation of the relationship between design and technology, moving beyond mere aesthetic considerations to a deeply integrated, code-aware process. For designers, it implies an increasing need for a foundational understanding of web technologies, pushing the boundaries of traditional visual design into the realm of system design and code architecture. The role of the designer may evolve to become more akin to a "design engineer" or "creative developer," capable of orchestrating AI agents and understanding the underlying technical constraints and possibilities.

For developers, it promises a significant reduction in grunt work associated with translating design specifications. By receiving design outputs that are already code-native and potentially even integrated into their version control systems, developers can focus more on complex logic, performance optimization, and innovative feature development. This shift could lead to more efficient teams, faster product cycles, and ultimately, higher quality digital experiences.

Moreover, the "agentic canvas" concept, where AI actively participates in the design process, opens doors for highly personalized and adaptive user interfaces. Imagine designs that can dynamically adjust based on user data, context, or even real-time environmental factors, all orchestrated by intelligent agents. While these advancements promise immense productivity gains, they also raise important considerations regarding the future of creative control, the ethical implications of AI-generated content, and the potential impact on job roles within the creative industries. However, if managed thoughtfully, tools like Paper.design have the potential to augment human creativity, allowing designers to focus on higher-level strategic thinking and conceptualization.

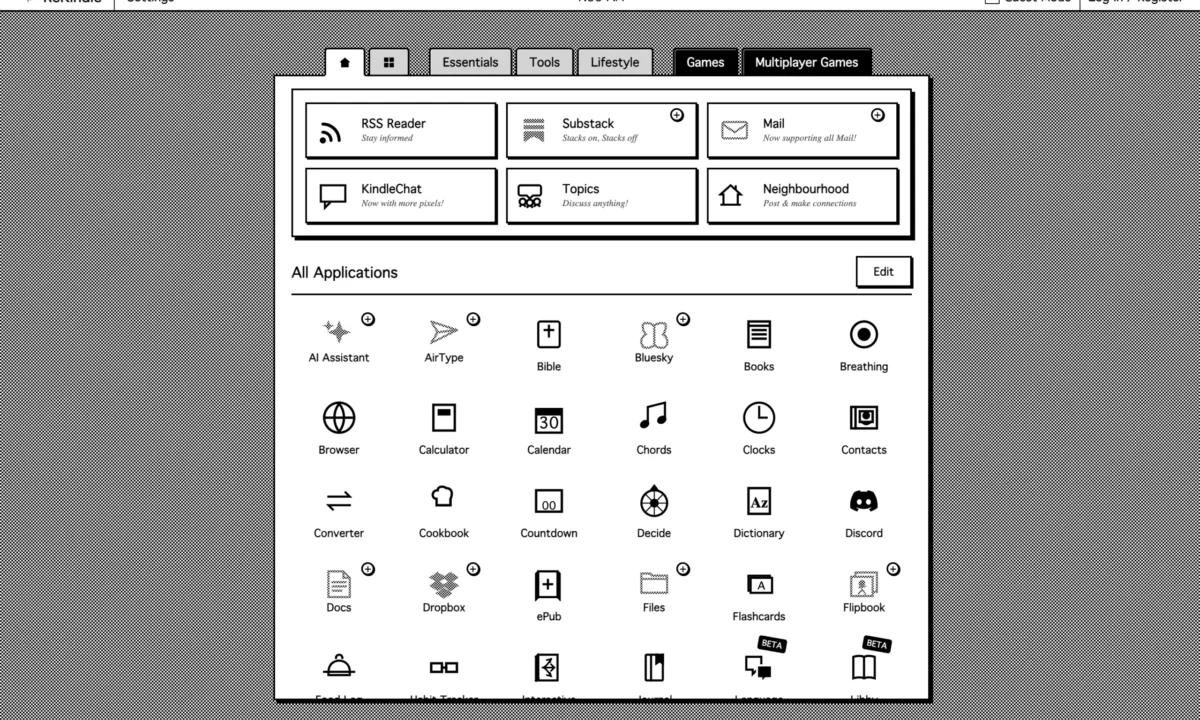

Accessibility and Adoption: Getting Started with Paper.design

Paper.design is readily accessible, offered as a macOS desktop application (Paper Desktop) for optimal performance and as a web application for broader compatibility. This dual availability ensures that users can choose the environment that best suits their workflow.

The platform employs a tiered pricing model designed to encourage exploration and scale with professional needs. A free tier is available, which includes 100 MCP calls per week. This allowance is sufficient for users to thoroughly explore the agent integration capabilities, experiment with AI-driven workflows, and understand the core premise of the tool without financial commitment. For professionals requiring more extensive usage, the Pro tier is priced at $20 per month, unlocking a generous 1 million MCP calls per week. This effectively provides unlimited access for most demanding workflows, catering to active designer-developers and small teams.

By making the tool accessible and offering a robust free tier, Paper.design invites a new generation of creators to experience a fundamentally different approach to digital design. It represents a bold step toward bringing design tools up to speed with the rapid advancements seen in development methodologies over the past two years, signaling a future where design truly understands and is understood by code.